I have some data with the relationship

$$Y = \text{commonFactor} + \text{error}_1, \qquad X = \alpha + \beta\,\text{commonFactor} + \text{error}_2$$
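A short derivation that may help frame the test. It assumes (this is not stated above) that commonFactor, error1 and error2 are mutually independent, and writes $F$ for commonFactor and $\varepsilon_1, \varepsilon_2$ for the two errors:

$$
\operatorname{Cov}(X, Y) = \operatorname{Cov}(\alpha + \beta F + \varepsilon_2,\; F + \varepsilon_1) = \beta\,\operatorname{Var}(F)
$$

So, provided $\operatorname{Var}(F) > 0$, $\beta = 0$ exactly when $\operatorname{Cov}(X, Y) = 0$, i.e. when the correlation between X and Y is zero.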

I want to test the hypothesis that Beta is non-zero, i.e. that there is a significant relationship between my measured X and Y, or that they share a common factor. I've read that null hypothesis testing isn't possible for major axis (MA), standardized major axis (SMA) or reduced major axis (RMA) regression, but I think that shouldn't apply to Deming/orthogonal regression, right? However, I've looked through every R package on Deming/orthogonal/total least squares regression that I can find, and none of them offers a test of the non-zero hypothesis. So I think I have to implement it manually, but I don't understand statistics well enough to come up with the formula for the test. Any help would be appreciated.
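A minimal R sketch of two ways one might test Beta = 0, on simulated data (the sample size, Alpha, Beta and unit error variances are all assumed values). The first uses the fact that, if the errors and commonFactor are independent, Cov(X, Y) = Beta * Var(commonFactor), so an ordinary Pearson correlation test (`cor.test`) tests the same null. The second computes the orthogonal-regression slope of X on Y in closed form (orthogonal regression gives the same line as the major axis) and puts a percentile bootstrap interval around it; the bootstrap is a swapped-in generic technique, not something taken from any Deming/TLS package.

```r
# Sketch: testing H0: Beta = 0 in
#   Y = commonFactor + error1,  X = Alpha + Beta * commonFactor + error2
# All parameter values below are assumptions for the simulation.
set.seed(123)
n <- 200
Alpha <- 0.5
Beta <- 1
commonFactor <- rnorm(n)
Y <- commonFactor + rnorm(n)                  # error1, variance 1
X <- Alpha + Beta * commonFactor + rnorm(n)   # error2, variance 1

# 1) Beta = 0 iff Cov(X, Y) = 0 under independent errors,
#    so a Pearson correlation test addresses the same null.
ct <- cor.test(X, Y)

# 2) Orthogonal (major-axis) slope of `resp` on `pred`, closed form.
#    Consistent for Beta when the two error variances are equal (as here).
ma_slope <- function(pred, resp) {
  sxx <- var(pred)
  syy <- var(resp)
  sxy <- cov(pred, resp)
  (syy - sxx + sqrt((syy - sxx)^2 + 4 * sxy^2)) / (2 * sxy)
}
beta_hat <- ma_slope(Y, X)   # X regressed on Y, so the slope estimates Beta

# Percentile bootstrap CI, resampling (X, Y) pairs.
# Caveat: this slope is scale-dependent and unstable when Cov(X, Y) is near 0,
# so near the null the correlation test is the better-behaved option.
B <- 2000
boot_slopes <- replicate(B, {
  i <- sample.int(n, n, replace = TRUE)
  ma_slope(Y[i], X[i])
})
ci <- quantile(boot_slopes, c(0.025, 0.975))

print(c(p_value = ct$p.value, beta_hat = beta_hat))
print(ci)
```

If the interval excludes 0 (or the correlation test rejects), that is evidence that Beta is non-zero under these model assumptions.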

EDIT: It appears that a reasonable way to compare the fit of a total least squares (TLS) model and an OLS model is to compare their likelihoods (perhaps after converting to AIC). But if they can be compared this way, why? It seems like total least squares is modelling something different, perhaps the joint distribution of X and Y, whereas OLS only gives the likelihood of one of the variables (conditional on the other).
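A minimal R sketch of the asymmetry this edit asks about, on simulated data (parameter values assumed). `logLik()` on an `lm` fit is the maximized Gaussian log-likelihood of the response conditional on the predictor, so `lm(Y ~ X)` and `lm(X ~ Y)` give different values on the same data. Adding the maximized normal log-likelihood of the predictor's own distribution turns either one into the joint bivariate-normal log-likelihood of (X, Y), and then both directions agree. The helper `marginal_loglik` is a hypothetical name introduced for this sketch.

```r
# Sketch: conditional (lm) vs joint log-likelihoods on the same data.
set.seed(42)
n <- 300
commonFactor <- rnorm(n)
Y <- commonFactor + rnorm(n)
X <- 0.5 + 1 * commonFactor + rnorm(n)   # assumed Alpha = 0.5, Beta = 1

# Hypothetical helper: maximized Gaussian log-likelihood of one sample
# (MLE mean and MLE variance with divisor n)
marginal_loglik <- function(v) {
  sigma_mle <- sqrt(mean((v - mean(v))^2))
  sum(dnorm(v, mean = mean(v), sd = sigma_mle, log = TRUE))
}

# logLik() of an lm fit: log p(response | predictor) at the MLE
ll_Y_given_X <- as.numeric(logLik(lm(Y ~ X)))
ll_X_given_Y <- as.numeric(logLik(lm(X ~ Y)))

# Conditional + marginal = joint, whichever variable is the response
joint_via_YX <- ll_Y_given_X + marginal_loglik(X)
joint_via_XY <- ll_X_given_Y + marginal_loglik(Y)

# Direct bivariate-normal log-likelihood at the MLE (covariance divisor n)
S <- cov(cbind(X, Y)) * (n - 1) / n
joint_direct <- -n * (log(2 * pi) + 0.5 * log(det(S)) + 1)

print(c(Y_given_X = ll_Y_given_X, X_given_Y = ll_X_given_Y,
        joint_YX = joint_via_YX, joint_XY = joint_via_XY, joint = joint_direct))
```

In general, likelihoods (and hence AIC values) are only directly comparable when they are densities of the same observations, so a conditional OLS likelihood would need a marginal term like this before being set against a model of the joint distribution.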

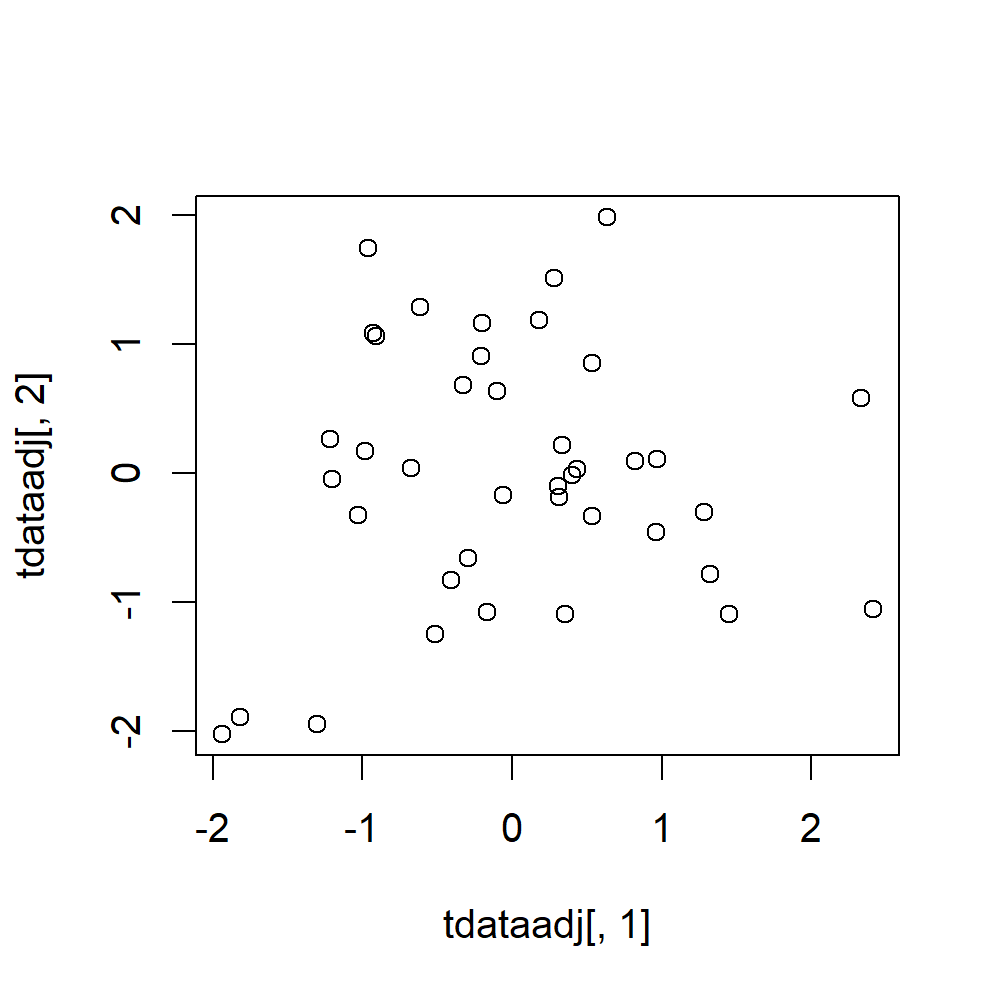

EDIT 2: In trying to compare OLS and TLS (total least squares) it is useful to have some code to create and analyze data, hence:

```r
# R code to create data and compare OLS to TLS properties/performance.
set.seed(1)          # for reproducibility
numPoints <- 1000    # number of simulated points (value assumed)

tcommon <- rnorm(numPoints)  # common value
te1 <- rnorm(numPoints)      # error
te2 <- rnorm(numPoints)      # error
te3 <- rnorm(numPoints)      # error
te4 <- rnorm(numPoints)      # error

# create first dataset "tdata" where X and Y have error
tx <- tcommon + te1 + te2
ty <- tcommon + te3 + te4
tdata <- data.frame(X = tx, Y = ty)

# create second dataset "tdata2" where X has no error and Y has the X error
# subtracted from it
tdata2 <- data.frame(X = tcommon, Y = tcommon + te3 + te4 - te1 - te2)

# convert dataframes to vectors for methods which need that format of data
dtaX1 <- tdata$X; dtaX2 <- tdata2$X
dtaY1 <- tdata$Y; dtaY2 <- tdata2$Y

# Analyze data set one with OLS, in both directions
tlm1XY <- lm(Y ~ X, data = tdata)
tlm1YX <- lm(X ~ Y, data = tdata)

# Analyze data sets one and two with TLS (through the origin)
# the "tls" function is copy and pasted from https://rdrr.io/bioc/DTA/src/R/wtls.R
# and must be defined before these lines are run
dta1 <- tls(dtaY1 ~ dtaX1 + 0)
dta2 <- tls(dtaY2 ~ dtaX2 + 0)
```
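A base-R sketch for the OLS vs TLS comparison in EDIT 2 that does not depend on the copied `tls` function: the orthogonal (TLS) line through the centred data is the first principal axis, which `prcomp()` returns. Data are simulated the same way as in EDIT 2, with the seed and `numPoints = 1000` assumed. Unlike `tls(dtaY1 ~ dtaX1 + 0)`, this line is not forced through the origin; it passes through the means.

```r
# Sketch: TLS slope from the first principal axis vs the two OLS slopes.
set.seed(1)
numPoints <- 1000                  # assumed sample size
tcommon <- rnorm(numPoints)        # common value
tx <- tcommon + rnorm(numPoints) + rnorm(numPoints)   # error variance 2
ty <- tcommon + rnorm(numPoints) + rnorm(numPoints)   # error variance 2

# First principal axis of the centred, unscaled data = TLS line direction
pc <- prcomp(cbind(tx, ty), center = TRUE, scale. = FALSE)
tls_slope <- pc$rotation[2, 1] / pc$rotation[1, 1]    # slope of ty on tx

# OLS slope of ty on tx, and OLS of tx on ty re-expressed as a ty-on-tx slope
ols_y_on_x <- unname(coef(lm(ty ~ tx))[2])
ols_x_on_y_inverted <- 1 / unname(coef(lm(tx ~ ty))[2])

print(c(ols_y_on_x = ols_y_on_x, tls = tls_slope,
        ols_x_on_y_inverted = ols_x_on_y_inverted))
```

For positively correlated data the TLS slope always lies between the OLS slope of ty on tx and the inverted OLS slope of tx on ty. Because both variables carry the same error variance in this simulation, the TLS slope should land near the simulated value of 1, while the two OLS slopes are pulled towards roughly 1/3 and 3.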