Elastic Cloud Managed OTLP Endpoint (mOTLP)

Serverless Observability Serverless Security ECH Self-Managed

The Elastic Cloud Managed OTLP Endpoint allows you to send OpenTelemetry data directly to Elastic Cloud using the OTLP protocol, with Elastic handling scaling, data processing, and storage. The Managed OTLP endpoint can act like a Gateway Collector, so that you can point your OpenTelemetry SDKs or Collectors to it.

The Elastic Cloud Managed OTLP Endpoint is not available for Elastic self-managed, ECE, or ECK clusters. To send OTLP data to any of these cluster types, deploy and expose an OTLP-compatible endpoint using the EDOT Collector as a gateway. Refer to the EDOT deployment documentation for more information.

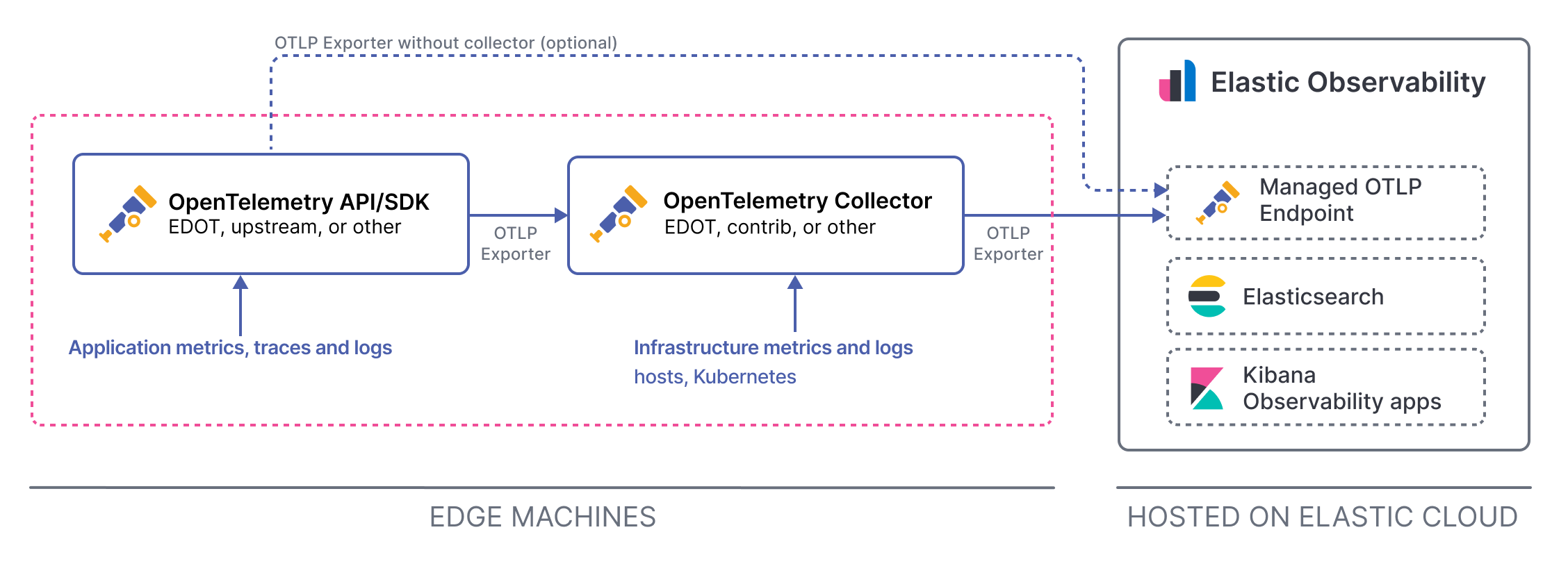

This diagram shows data ingest using Elastic Distribution of OpenTelemetry and the Elastic Cloud Managed OTLP Endpoint:

Telemetry is stored in Elastic in OTLP format, preserving resource attributes and original semantic conventions. If no specific dataset or namespace is provided, the data streams are: traces-generic.otel-default, metrics-generic.otel-default, and logs-generic.otel-default.
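All three default names follow the pattern <type>-<dataset>.otel-<namespace>. The following minimal Python sketch reproduces that naming. It assumes the same .otel suffix is applied when you set a custom dataset or namespace, and the helper otel_data_stream is hypothetical, not part of any Elastic API.

```python
"""Sketch: derive the data stream name for OTLP data, assuming the
<type>-<dataset>.otel-<namespace> pattern shown by the default streams."""

VALID_TYPES = {"traces", "metrics", "logs"}


def otel_data_stream(signal_type: str, dataset: str = "generic",
                     namespace: str = "default") -> str:
    """Return the assumed data stream name for a signal.

    The ".otel" suffix mirrors the documented defaults such as
    logs-generic.otel-default; for custom datasets it is an assumption.
    """
    if signal_type not in VALID_TYPES:
        raise ValueError(f"unknown signal type: {signal_type!r}")
    return f"{signal_type}-{dataset}.otel-{namespace}"


if __name__ == "__main__":
    # Defaults when no dataset or namespace is provided.
    for signal in ("traces", "metrics", "logs"):
        print(otel_data_stream(signal))
    # Assumed name when data_stream.dataset=app.orders is set on logs.
    print(otel_data_stream("logs", dataset="app.orders"))
```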

For a detailed comparison of how OTel data streams differ from classic Elastic APM data streams, refer to OTel data streams compared to classic APM.

To use the Elastic Cloud Managed OTLP Endpoint, you need the following:

- An Elastic Cloud Serverless project or an Elastic Cloud Hosted (ECH) deployment.

- An OTLP-compliant shipper capable of forwarding logs, metrics, or traces in OTLP format. This can include:

  - OpenTelemetry Collector (EDOT, Contrib, or other distributions)

  - OpenTelemetry SDKs (EDOT, upstream, or other distributions)

  - EDOT Cloud Forwarder

  - Any other forwarder that supports the OTLP protocol

You don't need APM Server when ingesting data through the Managed OTLP Endpoint. The APM integration (.apm endpoint) is a legacy ingest path that only supports traces and translates OTLP telemetry to ECS, whereas Elastic Cloud Managed OTLP Endpoint natively ingests OTLP data.

For Elastic Cloud Hosted deployments, Elastic Cloud Managed OTLP Endpoint is currently supported in the following AWS regions: ap-southeast-1, ap-northeast-1, ap-south-1, eu-west-1, eu-west-2, us-east-1, us-west-2, us-east-2. Support for additional regions and cloud providers is in progress and will be expanded over time.

To send data to Elastic through the Elastic Cloud Managed OTLP Endpoint, follow the Send data to the Elastic Cloud Managed OTLP Endpoint quickstart.

To retrieve your Elastic Cloud Managed OTLP Endpoint address and API key, follow the steps for your deployment type.

For Elastic Cloud Serverless projects:

- In Elastic Cloud, create an Observability project or open an existing one.

- Go to Add data, select Applications and then select OpenTelemetry.

- Copy the endpoint and authentication header values.

Alternatively, you can retrieve the endpoint from the Manage project page and create an API key manually from the API keys page.

For Elastic Cloud Hosted deployments:

- In Elastic Cloud, create an Elastic Cloud Hosted deployment or open an existing one.

- Go to Add data, select Applications and then select OpenTelemetry.

- Copy the endpoint and authentication header values.
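Whichever deployment type you use, the endpoint and header you copied are usually handed to exporters through OTEL_EXPORTER_OTLP_ENDPOINT and OTEL_EXPORTER_OTLP_HEADERS. As a rough illustration of how an OTLP/HTTP exporter interprets those two values under the OpenTelemetry exporter specification, the sketch below appends the per-signal paths (v1/traces, v1/metrics, v1/logs) to the base endpoint and parses the comma-separated, percent-encoded header list. The function names and the example endpoint are hypothetical; real SDKs do this internally.

```python
"""Sketch: how an OTLP/HTTP exporter interprets the base endpoint and
header variables, following the OpenTelemetry exporter specification."""
from urllib.parse import unquote

SIGNAL_PATHS = {"traces": "v1/traces", "metrics": "v1/metrics", "logs": "v1/logs"}


def signal_url(base_endpoint: str, signal: str) -> str:
    """Append the per-signal path to the base OTEL_EXPORTER_OTLP_ENDPOINT."""
    return base_endpoint.rstrip("/") + "/" + SIGNAL_PATHS[signal]


def parse_headers(raw: str) -> dict[str, str]:
    """Parse 'key1=value1,key2=value2' where values may be percent-encoded."""
    headers = {}
    for item in raw.split(","):
        if not item.strip():
            continue  # tolerate empty entries such as a trailing comma
        # Split on the FIRST '=' only, so values that contain '=' survive.
        key, sep, value = item.partition("=")
        if not sep:
            raise ValueError(f"header entry without '=': {item!r}")
        headers[unquote(key.strip())] = unquote(value.strip())
    return headers


if __name__ == "__main__":
    base = "https://motlp.example.invalid"  # hypothetical endpoint
    for signal in SIGNAL_PATHS:
        print(signal_url(base, signal))
    print(parse_headers("Authorization=ApiKey%20abc123"))
```

Splitting on the first = matters because base64-encoded API keys can end in =. Under the specification's percent-encoding, ApiKey%20<key> decodes to the same value as ApiKey <key>.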

To configure OpenTelemetry SDKs to send data directly to the Elastic Cloud Managed OTLP Endpoint, set the OTEL_EXPORTER_OTLP_ENDPOINT and OTEL_EXPORTER_OTLP_HEADERS environment variables.

For example:

```bash
export OTEL_EXPORTER_OTLP_ENDPOINT="https://<motlp-endpoint>"
export OTEL_EXPORTER_OTLP_HEADERS="Authorization=ApiKey <key>"
```

You can route logs to dedicated datasets by setting the data_stream.dataset attribute on the log record. Elastic uses this attribute to route each log record to the corresponding dataset.

For example, to route EDOT Cloud Forwarder logs to custom datasets, you can add a transform processor that sets the attribute on matching log records:

```yaml
processors:
  transform:
    log_statements:
      - set(log.attributes["data_stream.dataset"], "aws.cloudtrail") where log.attributes["aws.cloudtrail.event_id"] != nil
```

You can also set the OTEL_RESOURCE_ATTRIBUTES environment variable to set the data_stream.dataset attribute for all logs. For example:

```bash
export OTEL_RESOURCE_ATTRIBUTES="data_stream.dataset=app.orders"
```

The Elastic Cloud Managed OTLP Endpoint is designed to be highly available and resilient. However, in some scenarios data might be lost or not sent completely. The Failure store is a mechanism that allows you to recover from these scenarios.

The Failure store is always enabled for Elastic Cloud Managed OTLP Endpoint data streams. This prevents ingest pipeline exceptions and conflicts with data stream mappings from causing data loss: failed documents are stored in a separate index. You can view the failed documents from the Data Set Quality page. Refer to Data set quality.

The following limitations apply when using the Elastic Cloud Managed OTLP Endpoint:

- Tail-based sampling (TBS) is not available.

- Universal Profiling is not available.

- Only histograms with delta temporality are supported. Cumulative histograms are dropped.

- Latency distributions based on histogram values have limited precision due to the fixed boundaries of explicit bucket histograms.
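Because cumulative histograms are dropped, metrics producers need to export histograms with delta temporality. Where supported, SDKs can be asked for delta output with OTEL_EXPORTER_OTLP_METRICS_TEMPORALITY_PREFERENCE=delta, and Collectors can convert data with the contrib cumulativetodelta processor. To illustrate what that conversion means for explicit-bucket histograms, the sketch below subtracts consecutive cumulative bucket counts and treats any decreasing bucket as a counter reset. The helper cumulative_to_delta is hypothetical and simplified (it ignores timestamps, sum, min, and max); it is not how the processor is implemented.

```python
"""Sketch: convert consecutive cumulative explicit-bucket histogram points
into delta points. Simplified: bucket counts only, no timestamps or sums."""


def cumulative_to_delta(points):
    """Each point is a list of per-bucket counts with identical bucket bounds.

    Returns one delta point per input point. The first point, and any point
    where a bucket count decreased (a reset), is assumed to count from zero
    and is returned unchanged.
    """
    deltas = []
    previous = None
    for current in points:
        if previous is not None and len(current) != len(previous):
            raise ValueError("bucket layout changed between points")
        if previous is None or any(c < p for c, p in zip(current, previous)):
            # First point or counter reset: no valid baseline to subtract.
            deltas.append(list(current))
        else:
            # Normal case: per-bucket difference since the previous export.
            deltas.append([c - p for c, p in zip(current, previous)])
        previous = current
    return deltas


if __name__ == "__main__":
    cumulative = [[1, 2, 0], [3, 5, 1], [0, 1, 0]]  # third point is a reset
    print(cumulative_to_delta(cumulative))
```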

For more information on billing, refer to Elastic Cloud pricing.

Requests to the Elastic Cloud Managed OTLP Endpoint are subject to rate limiting and throttling. If you send data at a rate that exceeds the limits, your requests might be rejected.

The following rate limits and burst limits apply:

| Deployment type | Rate limit | Burst limit | Dynamic scaling |
|---|---|---|---|
| Serverless | 30 MB/s | 60 MB/s | Not available |
| ECH | 1 MB/s (initial) | 2 MB/s (initial) | Yes |

As long as your data ingestion rate stays at or below the rate limit and burst limit, your requests are accepted.

For the Elastic Cloud Serverless trial, the rate limit is reduced to 15 MB/s and the burst limit is 30 MB/s.
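The exact rate-limiting algorithm is not documented here. A common way to reason about a sustained rate limit combined with a burst limit is a token bucket: tokens (bytes) refill at the rate limit, and the bucket holds at most the burst allowance. The sketch below assumes that model, uses the Serverless figures from the table, treats the burst limit as one second's worth of bytes, and treats 1 MB as 10^6 bytes; all of these are assumptions, and the TokenBucket class is hypothetical.

```python
"""Sketch: a token-bucket model of a rate limit with a burst allowance.
Assumed model for reasoning about limits, not Elastic's implementation."""

MB = 1_000_000  # assumption: decimal megabytes


class TokenBucket:
    def __init__(self, rate_bytes_per_s: float, burst_bytes: float):
        self.rate = rate_bytes_per_s  # sustained refill rate
        self.capacity = burst_bytes   # maximum tokens held at once
        self.tokens = burst_bytes     # start with a full bucket
        self.last = 0.0               # time of the previous call, in seconds

    def allow(self, size_bytes: int, now: float) -> bool:
        """Return True if a request of size_bytes at time `now` is accepted."""
        elapsed = max(0.0, now - self.last)
        # Refill for the elapsed time, never beyond the burst capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if size_bytes <= self.tokens:
            self.tokens -= size_bytes
            return True
        return False  # in this model, surfaces as HTTP 429


if __name__ == "__main__":
    bucket = TokenBucket(rate_bytes_per_s=30 * MB, burst_bytes=60 * MB)
    print(bucket.allow(50 * MB, now=0.0))  # within the burst allowance
    print(bucket.allow(20 * MB, now=0.1))  # only about 13 MB of tokens left
    print(bucket.allow(20 * MB, now=1.0))  # refilled at 30 MB/s in the meantime
```

In this model, short spikes above the rate limit are absorbed by the burst allowance, while sustained traffic above the rate limit eventually drains the bucket and gets rejected.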


For Elastic Cloud Hosted deployments, rate limits can scale up or down dynamically based on backpressure from Elasticsearch. Every deployment starts with a 1 MB/s rate limit and 2 MB/s burst limit. The system automatically adjusts these limits based on your Elasticsearch capacity and load patterns. Scaling requires time, so sudden load spikes might still result in temporary rate limiting.

If you send data that exceeds the available limits, the Elastic Cloud Managed OTLP Endpoint responds with an HTTP 429 Too Many Requests status code. A log message similar to this appears in the OpenTelemetry Collector's output:

```json
{
  "code": 8,
  "message": "error exporting items, request to <ingest endpoint> responded with HTTP Status Code 429"
}
```

The causes of rate limiting differ by deployment type:

- Elastic Cloud Serverless: You exceed the 30 MB/s rate limit or the 60 MB/s burst limit (15 MB/s and 30 MB/s for the Serverless trial).

- Elastic Cloud Hosted: You send load spikes that exceed current limits (temporary 429s), or your Elasticsearch cluster can't keep up with the load (consistent 429s).

After your sending rate goes back to the allowed limit, or after the system scales up the rate limit for Elastic Cloud Hosted, requests are automatically accepted again.
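Because throttled requests are accepted again once the rate drops, senders should retry 429 responses with backoff rather than fail immediately. The OTLP specification lists 429, 502, 503, and 504 as retryable status codes, and OpenTelemetry Collector exporters expose retrying through their retry_on_failure settings. The sketch below illustrates the idea with a capped exponential backoff schedule with jitter; the function names and parameter values are illustrative assumptions, not the Collector's documented defaults.

```python
"""Sketch: capped exponential backoff with jitter for retrying throttled
OTLP/HTTP requests. Parameter values are illustrative assumptions."""
import random
import time

RETRYABLE = {429, 502, 503, 504}  # retryable codes per the OTLP/HTTP spec


def should_retry(status: int) -> bool:
    return status in RETRYABLE


def backoff_schedule(initial_s=5.0, multiplier=1.5, max_interval_s=30.0,
                     max_elapsed_s=300.0, jitter=0.5, rng=None):
    """Return the waits (seconds) before each retry attempt.

    The base interval grows by `multiplier` up to `max_interval_s`; each wait
    is randomized within +/- `jitter` of the base interval. Retries stop once
    the total waiting time would exceed `max_elapsed_s`.
    """
    rng = rng or random.Random()
    waits, elapsed, interval = [], 0.0, initial_s
    while True:
        wait = interval * (1 + rng.uniform(-jitter, jitter))
        if elapsed + wait > max_elapsed_s:
            break
        waits.append(wait)
        elapsed += wait
        interval = min(interval * multiplier, max_interval_s)
    return waits


def send_with_retries(send, schedule, sleep=time.sleep):
    """Call send() until it returns a non-retryable status or waits run out."""
    status = send()
    for wait in schedule:
        if not should_retry(status):
            break
        sleep(wait)
        status = send()
    return status
```

Jitter spreads retries from many senders over time, so they don't all hit the endpoint at the same moment after a throttling episode.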

The solutions to rate limiting depend on your deployment type:

For Elastic Cloud Hosted deployments, if you're experiencing consistent 429 errors, the primary solution is to increase your Elasticsearch capacity. Because rate limits are affected by Elasticsearch backpressure, scaling up your Elasticsearch cluster reduces backpressure and, over time, increases the ingestion rate for your deployment.

To scale your deployment:

Temporary 429s from load spikes typically resolve on their own as the system scales up, as long as your Elasticsearch cluster has sufficient capacity.

For Elastic Cloud Serverless projects, you can either decrease data volume or request higher limits.
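To estimate how much to reduce volume, compare your measured throughput with the limit. For traces, one way to cut volume is head sampling through the standard OTEL_TRACES_SAMPLER (for example, parentbased_traceidratio) and OTEL_TRACES_SAMPLER_ARG environment variables. The sketch below computes a sampling ratio that targets a fraction of the limit. The helper name, the 0.8 headroom factor, and the assumption that trace bytes scale linearly with the fraction of traces kept are all illustrative simplifications.

```python
"""Sketch: estimate a head-sampling ratio that keeps trace volume under a
target rate. Assumes bytes scale linearly with the fraction of traces kept."""

MB = 1_000_000  # assumption: decimal megabytes


def sampling_ratio(observed_bytes_per_s: float, limit_bytes_per_s: float,
                   headroom: float = 0.8) -> float:
    """Return a ratio in (0, 1] that targets headroom * limit."""
    if observed_bytes_per_s <= 0:
        return 1.0  # nothing to reduce
    target = limit_bytes_per_s * headroom
    return min(1.0, target / observed_bytes_per_s)


if __name__ == "__main__":
    # Example: 45 MB/s of traces against the 30 MB/s Serverless rate limit.
    ratio = sampling_ratio(45 * MB, 30 * MB)
    print(f"OTEL_TRACES_SAMPLER_ARG={ratio:.2f}")
```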

To increase the rate limit, contact Elastic Support.