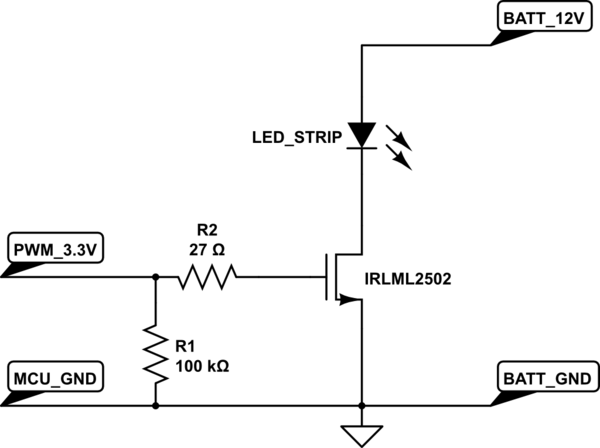

I want to drive LEDs with an Arduino Due and a MOSFET. The circuit I am using, shown above, is taken from another post: Driving LED strip from microcontroller

I replaced the transistor in the picture with an IRLB8721PbF. Datasheet: https://cdn-shop.adafruit.com/datasheets/irlb8721pbf.pdf

The LED_STRIP is replaced with two LH351B white LEDs connected in series. Datasheet: http://www.samsung.com/global/business/business-images/led/file/product/lighting/201504/Data_Sheet_LH351B_Rev.4.3d.pdf

The forward voltage of the LH351B is approximately 3 V, so I am using a 6 V power supply. Basically, I want to keep switching the LEDs on and off. I noticed that when I increased the switching frequency, for example to 100 kHz, the LEDs were dimmer than when switching at a lower frequency such as 1 kHz. What could be causing the decrease in brightness?
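
As a rough check on the nanosecond-switching assumption: datasheet rise and fall times are measured with a specific gate-drive setup, while here the gate is driven from the Due's 3.3 V output through whatever resistance sits between the pin and the gate. The sketch below treats the gate as a simple RC circuit with a hypothetical resistance `r`, capacitance `c`, and a single switching voltage `vSwitch`. None of these values come from the post or the datasheets, and a real MOSFET gate has a Miller plateau and turns on gradually, so this is only a first-order illustration. It shows that when the rising edge takes longer than the falling edge, a fixed amount of on-time is lost every cycle, so the lost fraction grows in proportion to frequency.

```cpp
#include <cassert>
#include <cmath>

// First-order model (an assumption, not the real MOSFET behaviour): the gate
// is an RC circuit driven by a square wave of amplitude vDrive, and the MOSFET
// is treated as switched whenever the gate voltage is above vSwitch.
// Assumes each half-period is long enough for the gate to settle (>> r * c).
double effectiveOnFraction(double vDrive, double vSwitch, double r, double c,
                           double freqHz) {
  assert(vDrive > vSwitch && vSwitch > 0.0 && freqHz > 0.0);
  double tau = r * c;
  // Rising edge: time to charge from 0 V up to vSwitch.
  double tOn = tau * std::log(vDrive / (vDrive - vSwitch));
  // Falling edge: time to discharge from vDrive down to vSwitch.
  double tOff = tau * std::log(vDrive / vSwitch);
  double period = 1.0 / freqHz;
  // Conduction starts tOn after the pin goes HIGH and ends tOff after it
  // goes LOW, so the on-time changes by (tOff - tOn) every cycle.
  double onTime = period / 2.0 - tOn + tOff;
  return onTime / period;
}
```

With the hypothetical values 3.3 V drive, 2.0 V switching point, 1 kΩ and 1 nF, this gives an on-fraction of about 0.4996 at 1 kHz and about 0.457 at 100 kHz for a nominal 50 % square wave.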

I believe an LED should be able to switch in the nanosecond range, and the MOSFET I am using has rise and fall times in the nanosecond range as well. Could the microcontroller be the cause? Below is the code I am using, a simple replacement for digitalWrite using direct port manipulation:

```cpp
void setup() {
  pinMode(12, OUTPUT);
}

void loop() {
  PIOD->PIO_SODR = 1 << 8;  // HIGH on pin 12 (PD8), same as digitalWrite(12, HIGH)
  delayMicroseconds(10);
  PIOD->PIO_CODR = 1 << 8;  // LOW on pin 12 (PD8), same as digitalWrite(12, LOW)
  delayMicroseconds(10);
}
```
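
The nominal toggle rate follows from the delays: 10 µs HIGH plus 10 µs LOW is a 20 µs period, or 50 kHz, before any overhead; the 5 µs per-bit delay in the full code gives 100 kHz for alternating bits. The helper below is a hypothetical host-side calculation (plain C++, not Arduino code) that also adds a fixed per-cycle overhead, assumed to be spent with the pin LOW, as happens between the end of `loop()` and the next HIGH write.

```cpp
#include <cassert>

// Hypothetical host-side helper (not Arduino code): frequency in Hz of a
// software square wave held HIGH for highUs and LOW for lowUs microseconds,
// plus overheadUs of per-cycle overhead (loop call, register writes).
double toggleFrequencyHz(double highUs, double lowUs, double overheadUs) {
  double periodUs = highUs + lowUs + overheadUs;
  assert(periodUs > 0.0);
  return 1e6 / periodUs;
}

// Duty cycle (fraction of the period spent HIGH), assuming all of the
// overhead is spent with the pin LOW.
double dutyCycle(double highUs, double lowUs, double overheadUs) {
  double periodUs = highUs + lowUs + overheadUs;
  assert(periodUs > 0.0);
  return highUs / periodUs;
}
```

Any overhead both lowers the frequency below the nominal value and pulls the duty cycle below 50 %.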

EDIT: full code:

```cpp
#include <SPI.h>
#include <SD.h>

File dataFile;

void setup() {
  pinMode(12, OUTPUT);
  Serial.begin(210000);
  if (!SD.begin(10)) {
    Serial.println("Card failed, or not present");
    return;
  }
  Serial.println("card initialized.");
}

void loop() {
  int myData_read;
  dataFile = SD.open("DATALOG.dat");
  myData_read = dataFile.read();
  //Serial.println(myData_read);
  for (int i = 0; i < 8; i++) {
    if ((myData_read & (1 << i)) >> i) {
      PIOD->PIO_SODR = 1 << 8;  // HIGH on pin 12, same as digitalWrite(12, HIGH)
      delayMicroseconds(5);
    } else {
      PIOD->PIO_CODR = 1 << 8;  // LOW on pin 12, same as digitalWrite(12, LOW)
      delayMicroseconds(5);
    }
  }
  dataFile.close();
}
```
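
In this version, every pass of `loop()` also closes, reopens, and reads the SD file, and during that time pin 12 keeps whatever level bit 7 left it at. The host-side sketch below estimates the fraction of time the pin is HIGH for one byte, given a per-bit delay and a fixed SD overhead. The overhead is a hypothetical parameter, not a measurement, and instruction time outside the delays is ignored. Because the overhead does not shrink with the bit time, shortening the bit time changes the average level, which could show up as a change in brightness.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical host-side model of one pass of loop() in the full sketch:
// bits 0..7 are each held for bitUs, then the SD close/open/read work
// (overheadUs, an assumed figure) runs while the pin holds bit 7's level.
double highFraction(std::uint8_t data, double bitUs, double overheadUs) {
  assert(bitUs > 0.0 && overheadUs >= 0.0);
  int ones = 0;
  for (int i = 0; i < 8; i++) {
    if (data & (1u << i)) {
      ones++;  // this bit drives the pin HIGH for bitUs
    }
  }
  bool lastBitHigh = (data & 0x80u) != 0;  // level left on the pin during SD access
  double highTime = ones * bitUs + (lastBitHigh ? overheadUs : 0.0);
  return highTime / (8.0 * bitUs + overheadUs);
}
```

For example, with data `0x55` and a hypothetical 1000 µs overhead, the HIGH fraction is 0.4 at a 500 µs bit time but about 0.019 at 5 µs.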