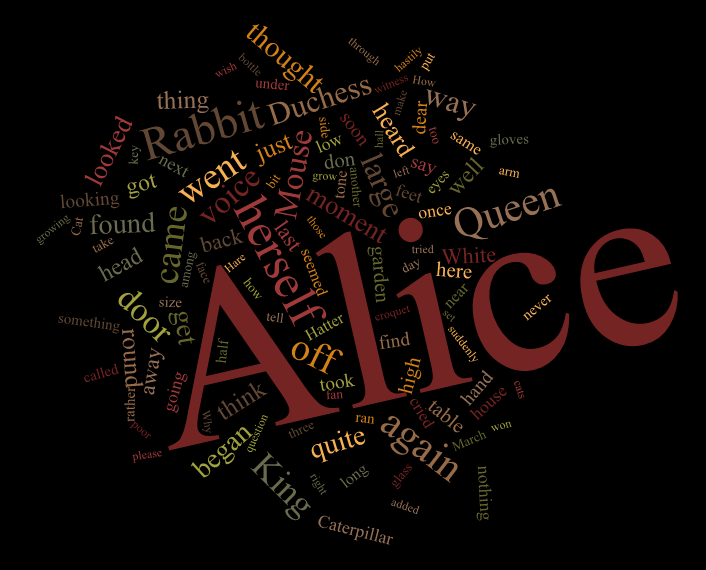

A preview

Before I show any code, here's a preview of what is possible with some tweaking:

First try

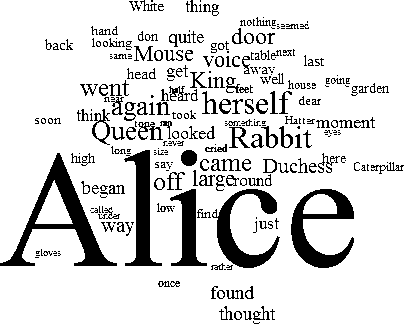

Here's a go at implementing Wordle's layout algorithm, described at cormullion's link.

First, let's generate the word data (this is pretty arbitrary):

punctuation = ",/.<>?;':\"()-_!&" (* boring words: *) common = {"the", "of", "and", "to", "in", "I", "that", "was", "his", "he", "it", "with", "is", "for", "as", "had", "you", "not", "be", "her", "on", "at", "by", "which", "have", "or", "from", "this", "him", "but", "all", "she", "they", "were", "my", "are", "me", "one", "their", "so", "an", "said", "them", "we", "who", "would", "been", "will", "no", "when", "there", "if", "more", "out", "up", "into", "do", "any", "your", "what", "has", "man", "could", "other", "than", "our", "some", "very", "time", "upon", "about", "may", "its", "only", "now", "like", "little", "then", "can", "should", "made", "did", "us", "such", "a", "great", "before", "must", "two", "these", "see", "know", "over", "much", "down", "after", "first", "mr", "good", "men"}; text = Select[ StringSplit@ StringReplace[ExampleData[{"Text", "AliceInWonderland"}], Alternatives @@ Characters[punctuation] -> " "], StringLength[#] > 2 & ]; text = DeleteCases[text, w_ /; MemberQ[common, ToLowerCase[w]]]; Now that we have the data, let's take a word tally and generate words at sized proportional to their frequency:

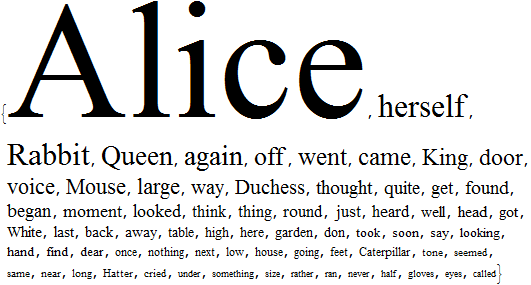

words = TakeWhile[Reverse@SortBy[Tally[text], Last], #[[2]] >= 10 &]; styledwords = Style[#1, FontSize -> #2, FontFamily -> "Times"] & @@@ words

Let's rasterize and binarize these (the binarization is to ease overlap detection):

images = Binarize@Rasterize[#, "Image"] & /@ styledwords; This counts black pixels in an image:

count[img_] := ImageLevels[Binarize[img]][[1, 2]] Now run this:

canvas = Image[Graphics[], ImageSize -> {1000, 1000}]; Monitor[ Do[ x = 0; w = images[[i]]; cc = count[canvas]; centre = RandomReal[0.1 {-1, 1}, {2}] + {0.5, 0.5}; compose := res = ImageCompose[canvas, SetAlphaChannel[w, ColorNegate[w]], Scaled[centre + x/100 {Cos[x], Sin[x]}]]; compose; While[count[res] - cc - count[w] < 0 && x < 80, x += 1; compose; ]; canvas = res, {i, 1, Length[images]} ], canvas ] canvas // ImageCrop

Some explanations:

This code takes the word images one by one and tries to place them at a random position. If it does not fit at that position, it tries other positions, moving on an outward winding Archimedean spiral. The step size of moving on this spiral should be chosen so that the points are distributed with an approximately constant density in the plane, and are not on gathered on a few thin lines. I used this code to verify the point distribution for a given step size (1 was good enough for a first try):

Manipulate[Graphics[Point@Table[x/100 {Cos[x], Sin[x]}, {x, 0, 100, s}]], {s, 0.1, 1.5}] Testing for image overlap (i.e. whether the word fits) is done by composing the image onto a canvas which has all the previously placed words, and verifying that no black pixels will collide (i.e. the total pixel count will not change after placing the image). There are probably faster ways to do this.

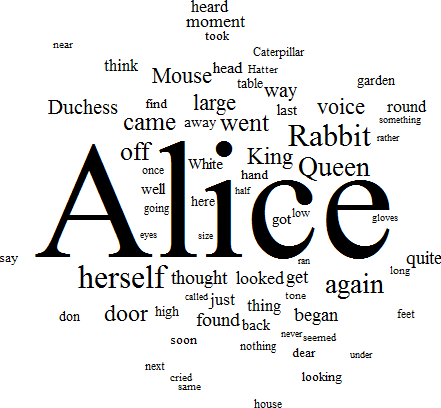

Second try: converting all this to vector graphics

I used images but his should only be an aid for calculating positions. The images should be dilated prior to arranging them (to have more "air" between them), their positions should be recorded, and the recorded positions should be used to arrange the vector versions of words.

Here's a basic implementation:

images = ColorNegate@Dilation[ColorNegate[#], 2] & /@ images canvas = Image[Graphics[], ImageSize -> {1000, 1000}]; positions = {}; Monitor[ Do[ x = 0; w = images[[i]]; cc = count[canvas]; centre = RandomReal[0.1 {-1, 1}, {2}] + {0.5, 0.5}; compose := res = ImageCompose[canvas, SetAlphaChannel[w, ColorNegate[w]], pos = Scaled[centre + x/100 {Cos[x], Sin[x]}]]; compose; While[count[res] - cc - count[w] < 0 && x < 80, x += 1; compose; ]; canvas = res; AppendTo[positions, pos], {i, 1, Length[images]} ], canvas ] Rasterize[ Graphics[MapThread[Text, {styledwords, positions}], ImageSize -> 1000], "Image"] // ImageCrop

There's a lot of refinement needed though (I didn't have time to tune the parameters).