Preferably free tools if possible.

Also, the ability to search for multiple regular expressions, replacing each with a different string, would be a bonus.

Perl.

Seriously, it makes sysadmin stuff so much easier. Here's an example:

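perl -pi -e 's/something/somethingelse/g' *.log

Comment: to do this recursively for every file that contains the pattern:

perl -pi -e 's/something/somethingelse/g' `egrep -ril "something" ./`

Comment: -p runs the code on each line and then prints it, -i edits the file in place, and -e executes the code given on the command line.

Comment: what about rg and xargs?

Comment: add -0777 to read each file as a single string, so a pattern can span lines. It must come before -e, because -e takes the next argument as the code:

perl -0777 -pi -e 's/something/somethingelse/g' *.log

sed is quick and easy: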

sed -e "s/pattern/result/" <file list>

You can also join it with find:

find <other find args> -exec sed -e "s/pattern/result/" "{}" ";"

Comment: as written, sed only prints the result to standard output; the -i argument appears to fix that. See also stackoverflow.com/questions/10445934/change-multiple-files

I've written a free command line tool for Windows to do this. It's called rxrepl; it supports Unicode and file search. Some may find it useful.

TextPad does a good job of this on Windows, and it's a very good editor as well.

Unsurprisingly, Perl does a fine job of handling this, in conjunction with a decent shell:

for file in @filelist ; do
    perl -p -i -e "s/pattern/result/g" "$file"
done

This has the same effect (but is more efficient, and without the race condition) as:

for file in @filelist ; do
    cat "$file" | sed "s/pattern/result/g" > /tmp/newfile
    mv /tmp/newfile "$file"
done

Under Windows, I used to like WinGrep. It no longer appears to exist, but a new project called grepWin does. I have no experience with it, so I cannot recommend it.
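The /tmp/newfile loop in the Perl answer above reuses one fixed temporary path for every file, which is one plausible source of the race condition it mentions. Below is a minimal Python sketch of a safer in-place edit, assuming UTF-8 text and line-by-line substitution like perl -p with /g (so, unlike -0777, a match cannot span lines). Each call gets its own uniquely named temp file in the same directory, and os.replace swaps it over the original. sub_in_place is a hypothetical helper name, not part of any tool mentioned here.

```python
import os
import re
import shutil
import tempfile


def sub_in_place(path, pattern, replacement, encoding="utf-8"):
    """Apply re.sub to every line of `path`, then swap the result in atomically."""
    regex = re.compile(pattern)
    directory = os.path.dirname(os.path.abspath(path))
    # mkstemp creates a uniquely named file per call, unlike a shared
    # /tmp/newfile; the same directory keeps os.replace a simple rename.
    fd, tmp_path = tempfile.mkstemp(dir=directory, prefix=".sub-")
    try:
        # fdopen comes first so it owns (and closes) fd even if open() fails.
        with os.fdopen(fd, "w", encoding=encoding, newline="") as dst, \
                open(path, encoding=encoding, newline="") as src:
            for line in src:
                # Like perl -p with /g: every match on each line is replaced.
                dst.write(regex.sub(replacement, line))
        shutil.copymode(path, tmp_path)  # mkstemp creates the file as 0600
        os.replace(tmp_path, path)
    except BaseException:
        # Remove the temp file if anything failed before the rename.
        if os.path.exists(tmp_path):
            os.unlink(tmp_path)
        raise
```

For example, sub_in_place("app.log", r"something", "somethingelse") mirrors the perl -pi one-liner for a single file; loop over a file list to cover more.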

Under Ubuntu, I used to use Regexxer but found that GUIs have to work too hard to work around the realities of regular expressions.

These days I almost always use ack.

For Mac OS X, TextWrangler does the job.

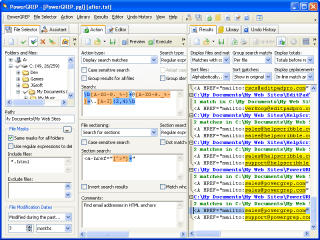

My personal favorite is PowerGREP by JGsoft. It interfaces with RegexBuddy, which can help you create and test the regular expression; it automatically backs up all changes (and provides undo), can search multiple directories (with filename patterns), and even supports file formats such as Microsoft Word, Excel, and PDF.

I love this tool:

It gives you an "as you type" preview of your regular expression, which is FANTASTIC for those not well versed in REs, and it is super fast at changing hundreds or thousands of files at a time.

And then it lets you UNDO your changes as well.

Very nice!

Patrick Steil - http://www.podiotools.com

jEdit's regex search-and-replace in files is pretty decent, though slightly overkill if you only use it for that. It also doesn't support the multi-expression replace you asked for.
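For the multi-expression replace the question asks for, here is a minimal Python sketch (not a feature of any tool in this thread). It assumes UTF-8 files and literal replacement strings, and the RULES patterns, build_replacer and replace_in_files are hypothetical names for illustration. All patterns are combined into one alternation, so each piece of text is matched at most once: a later rule never rewrites an earlier rule's output, and if two rules could match at the same position, the one listed first wins.

```python
import re

# Hypothetical example rules: each regex maps to its own literal replacement.
RULES = {
    r"\bcolour\b": "color",
    r"\bfoo\d+\b": "bar",
}


def build_replacer(rules):
    """Return a function that applies every rule in a single left-to-right pass."""
    names = [f"r{i}" for i in range(len(rules))]
    # Wrap each pattern in a named group so we can tell which rule matched.
    # Assumes the rule patterns define no named groups of their own.
    combined = re.compile(
        "|".join(f"(?P<{name}>{pattern})" for name, pattern in zip(names, rules))
    )
    replacement_for = dict(zip(names, rules.values()))

    def replace(text):
        # lastgroup is the wrapper group of the rule that matched,
        # because the outermost group closes last.
        return combined.sub(lambda m: replacement_for[m.lastgroup], text)

    return replace


def replace_in_files(paths, rules, encoding="utf-8"):
    """Rewrite each file in place; return the paths that actually changed."""
    replace = build_replacer(rules)
    changed = []
    for path in paths:
        # newline="" leaves the file's original line endings untouched.
        with open(path, encoding=encoding, newline="") as f:
            text = f.read()
        new_text = replace(text)
        if new_text != text:
            with open(path, "w", encoding=encoding, newline="") as f:
                f.write(new_text)
            changed.append(path)
    return changed
```

Because of the single pass, build_replacer({"cat": "dog", "dog": "cat"}) swaps the two words instead of turning everything into "cat".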

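Vim to the rescue (and for president ;-) ). Try:

vim -c "argdo! %s:foo:bar:gcie | update" <list_of_files>

(I do love Vim's -c switch; it's magic.) Or, if you are already in Vim and have opened the files, e.g. with:

vim <list_of_files>

just issue:

:bufdo! %s:foo:bar:gcie | update

The % makes :s act on every line rather than only the current one, the e flag keeps a file without matches from aborting the loop, and update saves each changed file.

Of course, sed and Perl are capable as well. HTH.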

I have the luxury of Unix and Ubuntu; on both, I use gawk for anything that requires line-by-line search and replace, especially replacing substrings within lines. Recently, this was the fastest option for processing 1,100 changes against millions of lines in hundreds of files (in one directory).

On Ubuntu I am a fan of regexxer:

sudo apt-get install regexxer

If TextPad is a valid answer, I would suggest Sublime Text, hands down.

I find multi-cursor edits an even more efficient way to make replacements in general, but its "Find in Files" is top tier for bulk regex or plain find-and-replace.

If you are a programmer, many IDEs should do a good job as well.

For me, PyCharm worked quite nicely:

It has a live preview.

For at least 25 years, I've been using Emacs for large-scale replacements across large numbers of files. Run etags to specify any set of files to search through:

$ etags file1.txt file2.md dir1/*.yml dir2/*.json dir3/*.md

Then open Emacs and run M-x tags-query-replace, which prompts for a regexp and a replacement, for example:

Regexp: \b\(foo\)\b

Replacement: \1bar
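If you want the same substitution in a script outside Emacs, here is a rough Python equivalent of that regexp and replacement (an illustration, not part of the Emacs workflow). Emacs writes capture groups as \( \) while Python uses bare parentheses, and \g<1> is Python's unambiguous spelling of the \1 backreference. FOO_WORD and foo_to_foobar are hypothetical names.

```python
import re

# Emacs regexp \b\(foo\)\b  ->  Python r"\b(foo)\b"
FOO_WORD = re.compile(r"\b(foo)\b")


def foo_to_foobar(text):
    # Emacs replacement \1bar  ->  Python r"\g<1>bar"
    return FOO_WORD.sub(r"\g<1>bar", text)


print(foo_to_foobar("foo food foo.bar"))  # foobar food foobar.bar
```

Note that "food" is left alone because \b requires a word boundary right after "foo".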