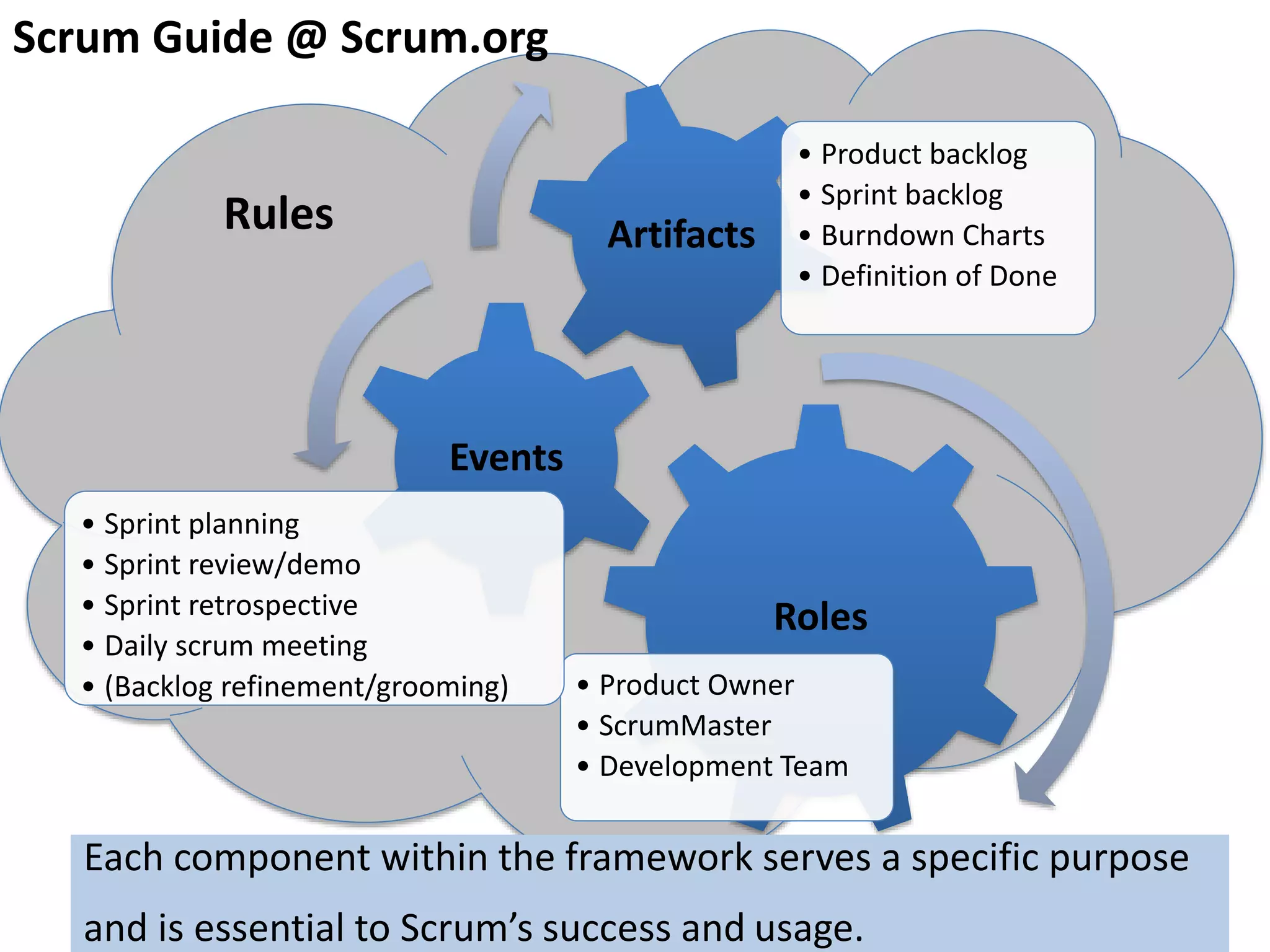

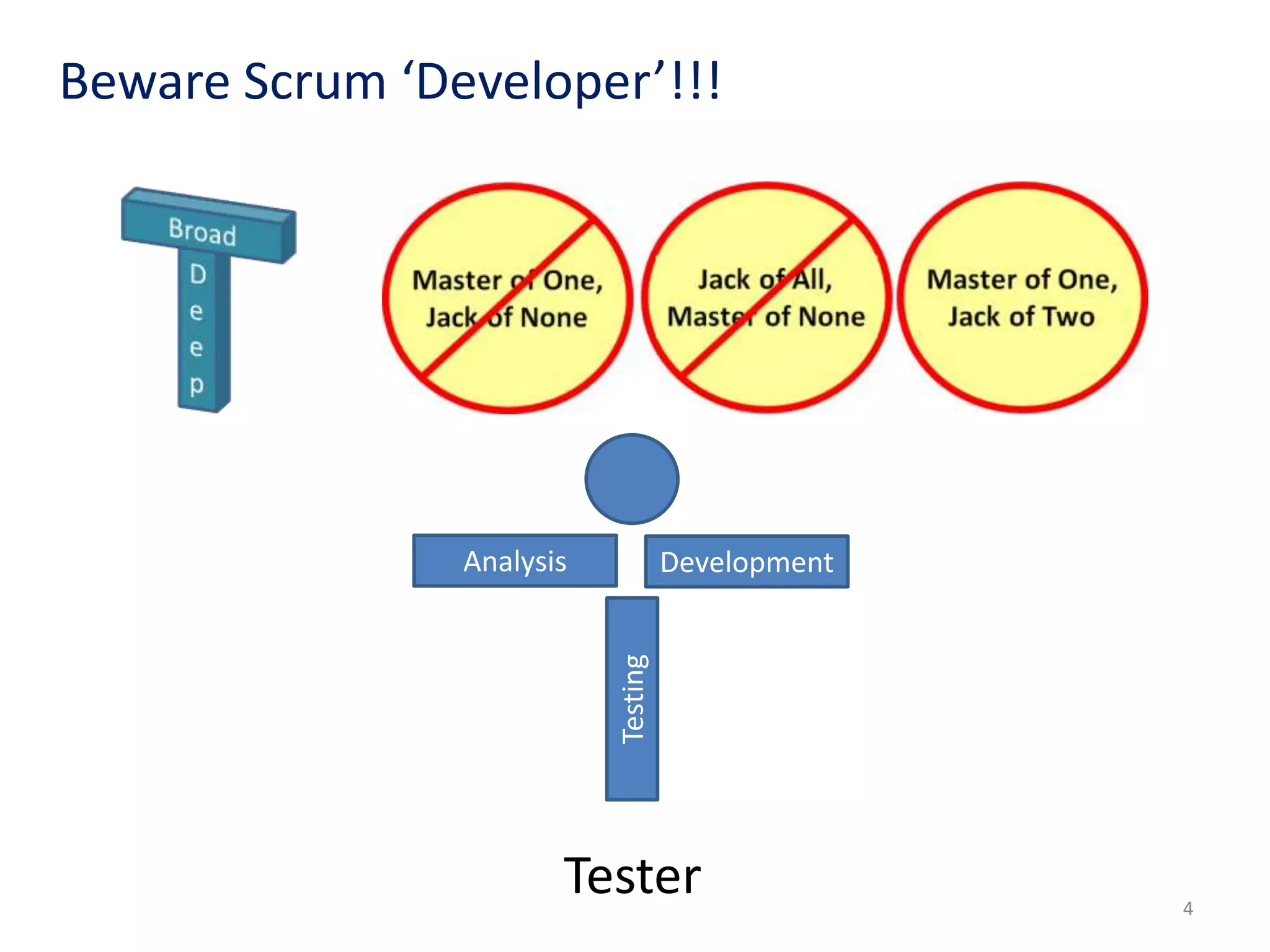

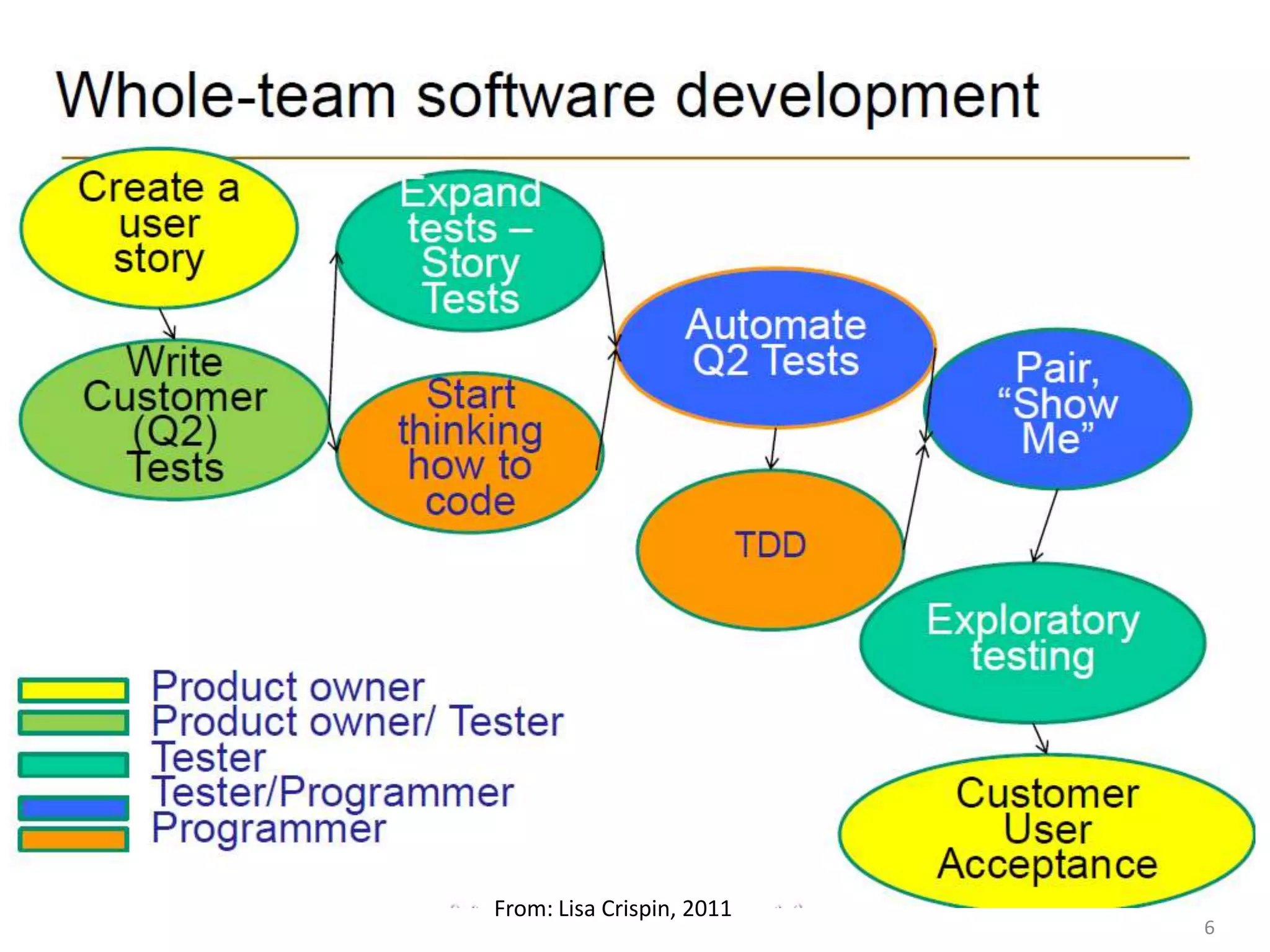

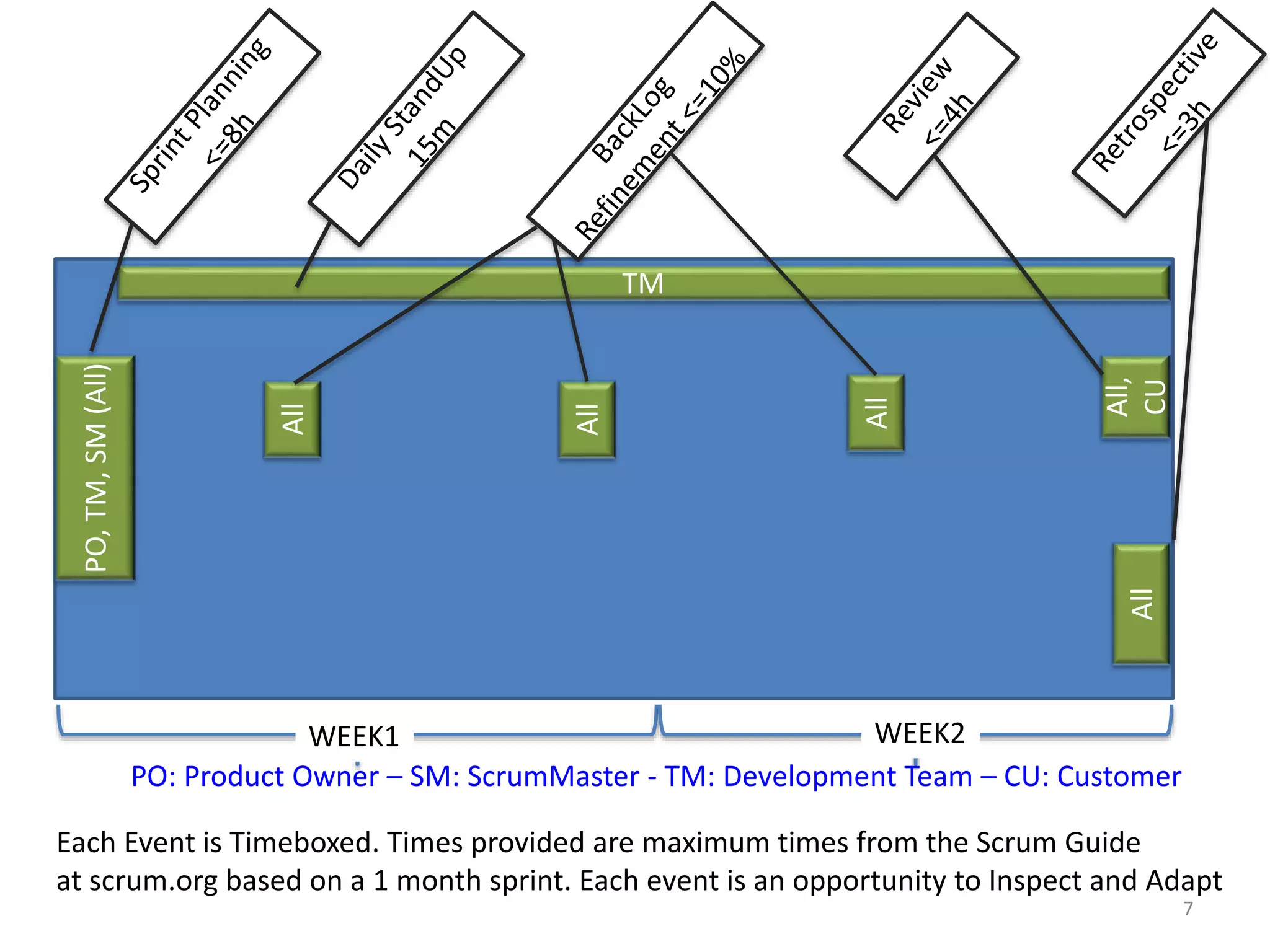

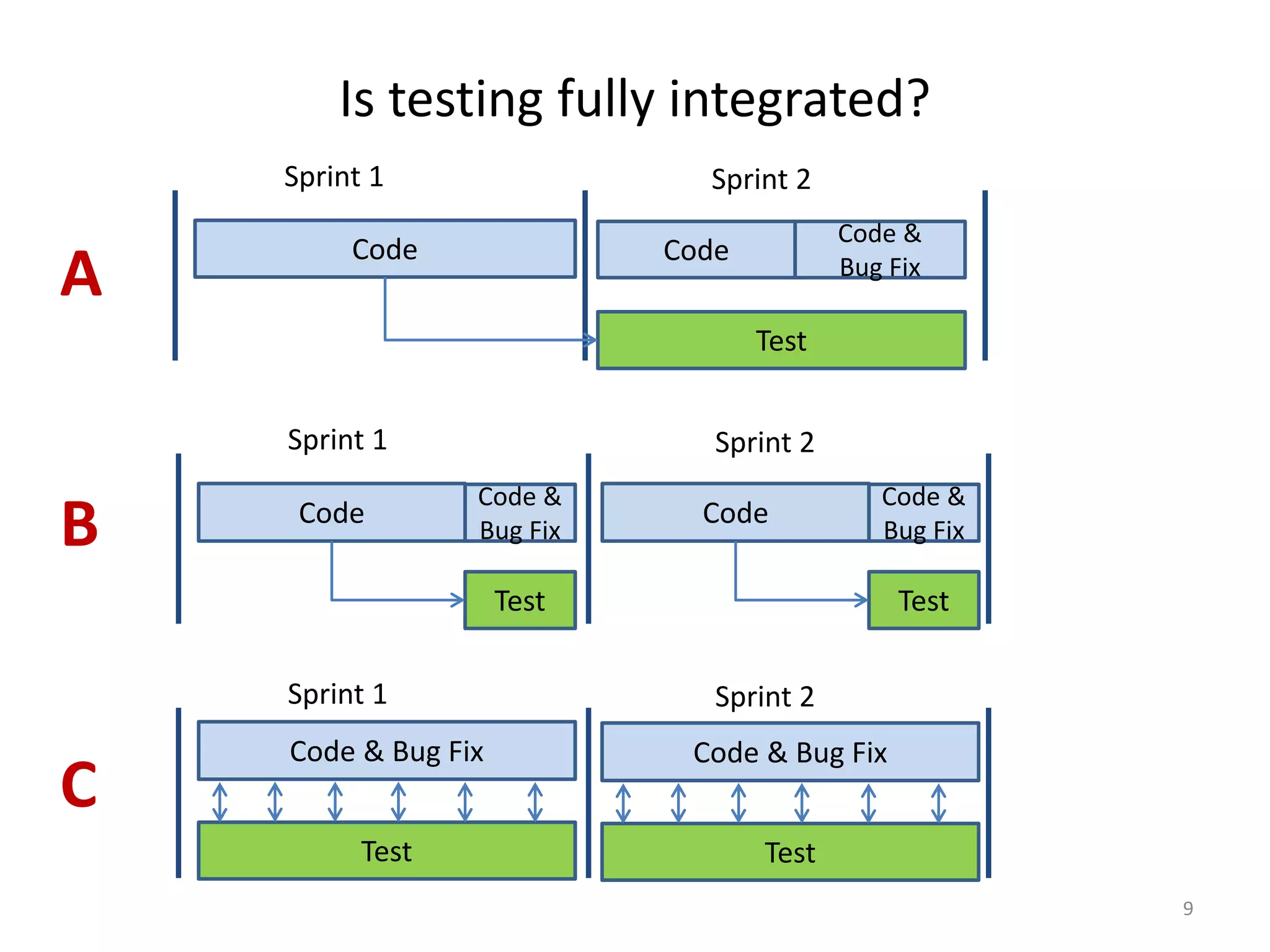

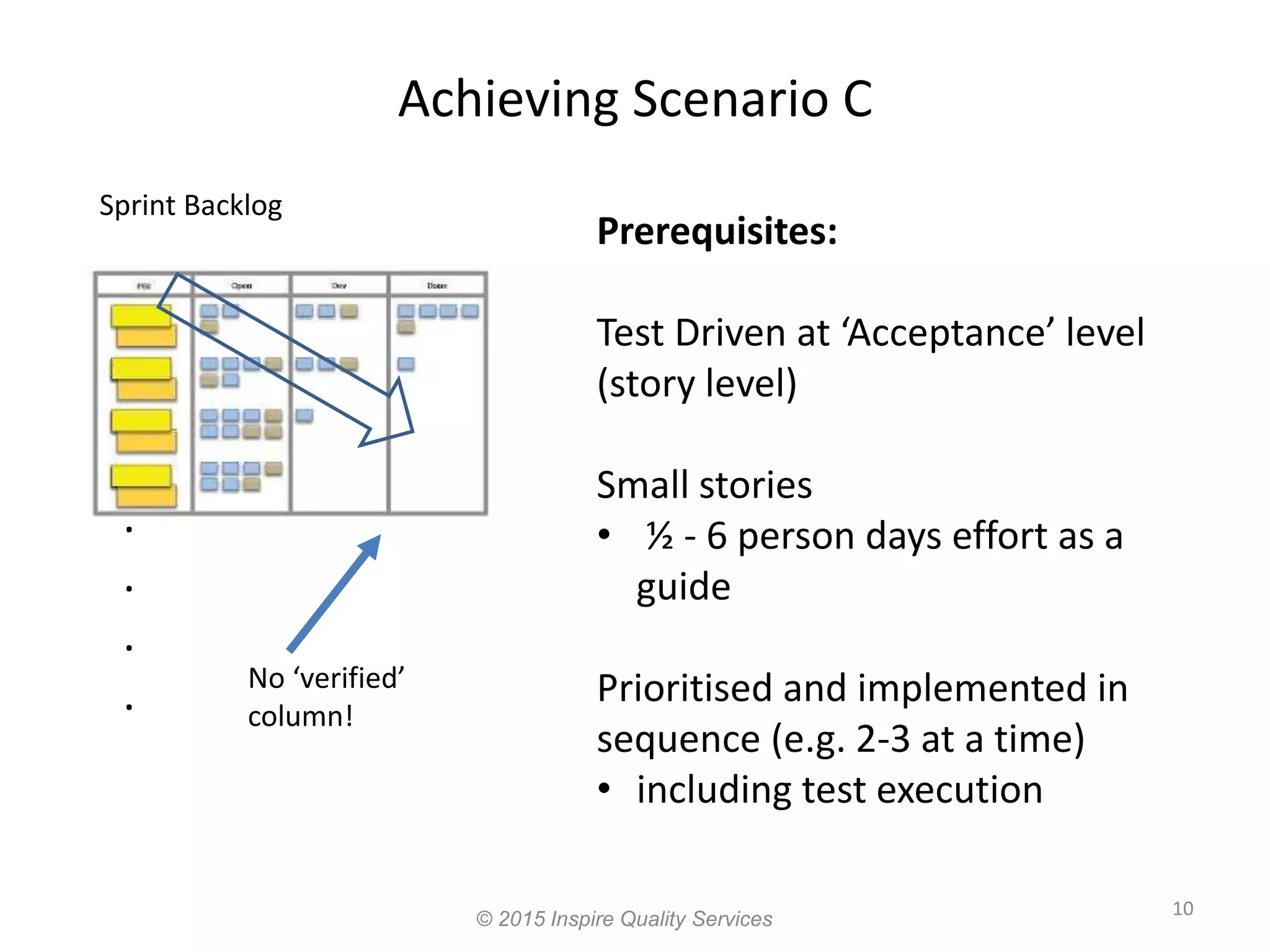

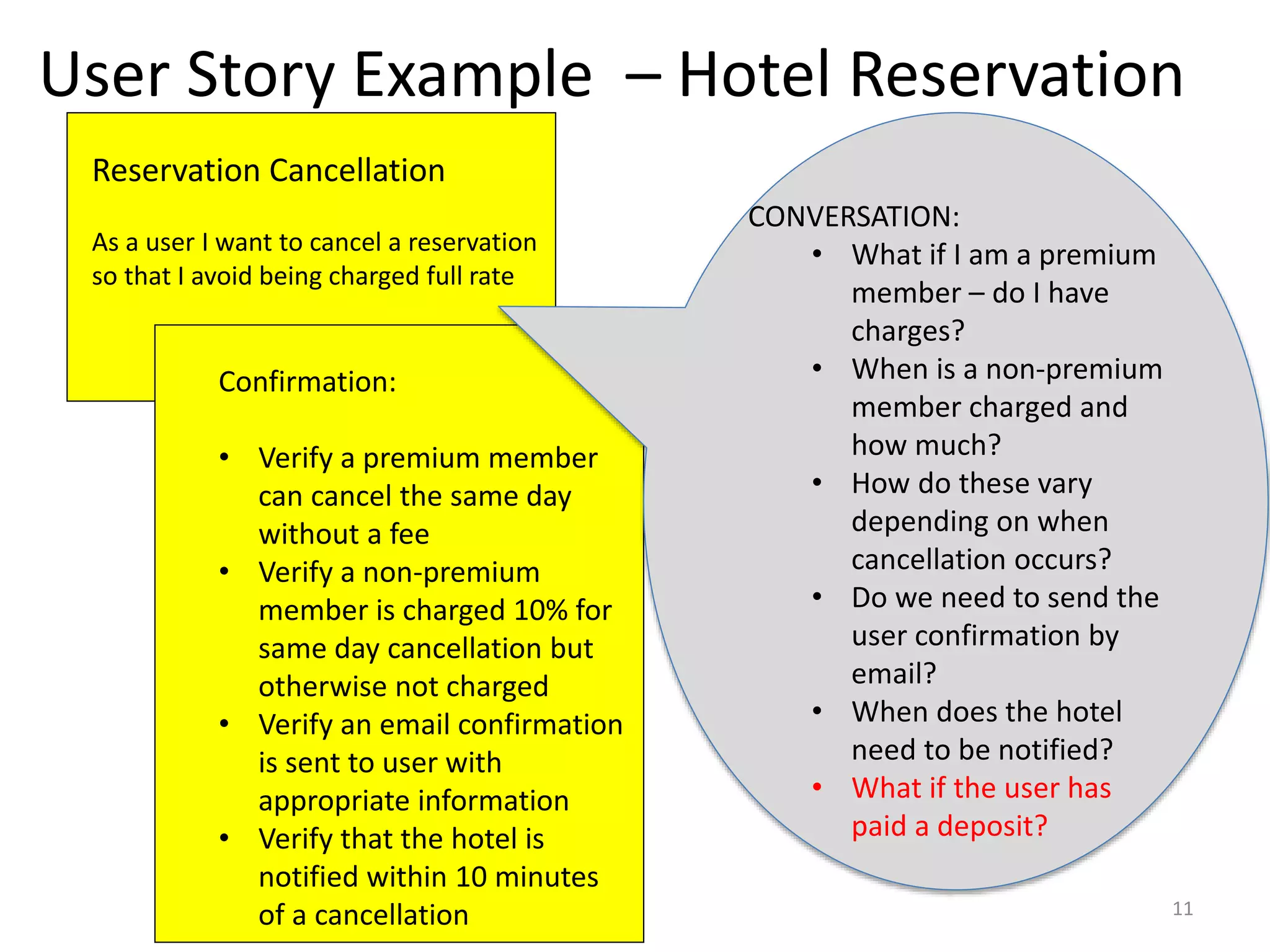

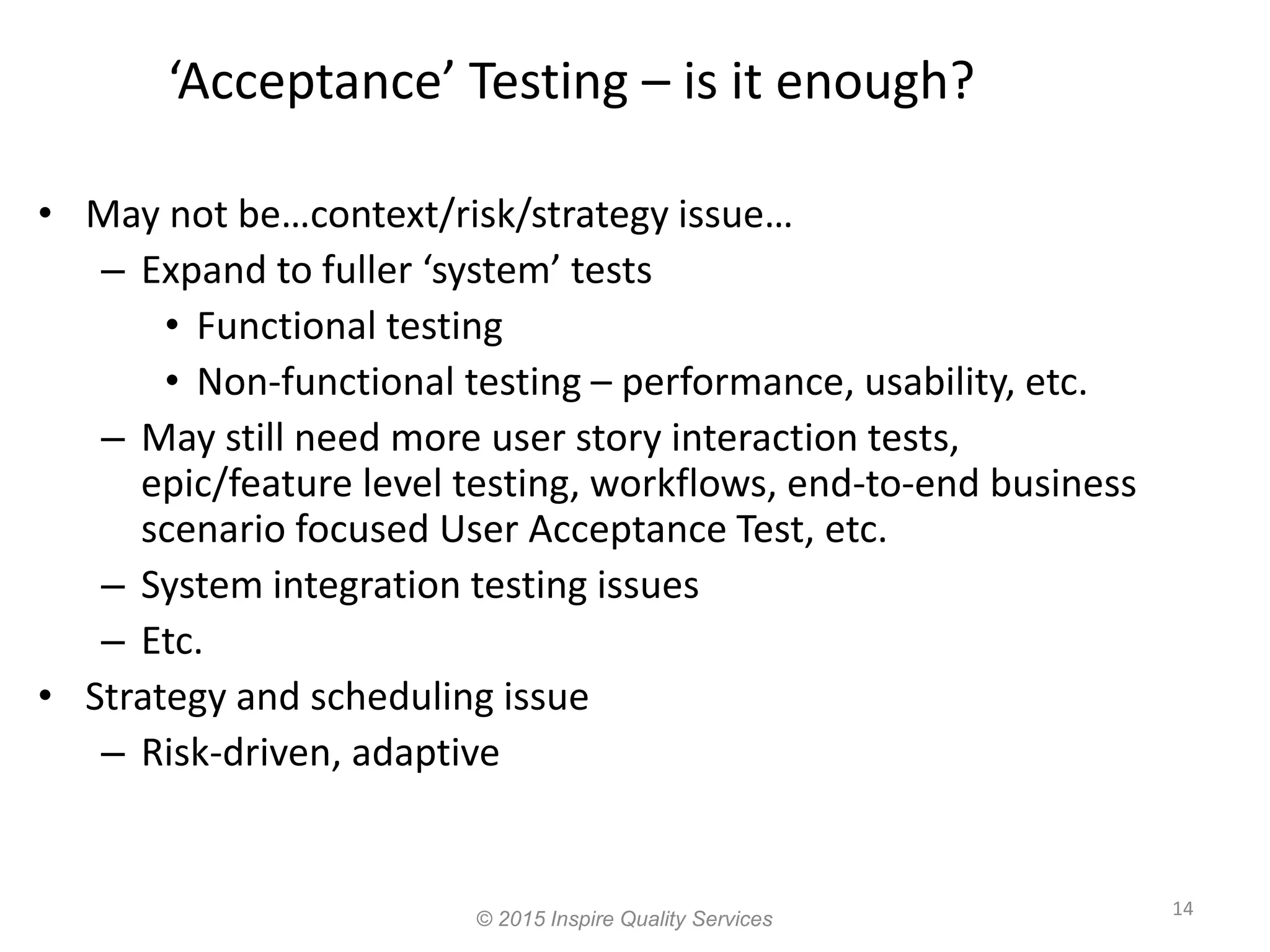

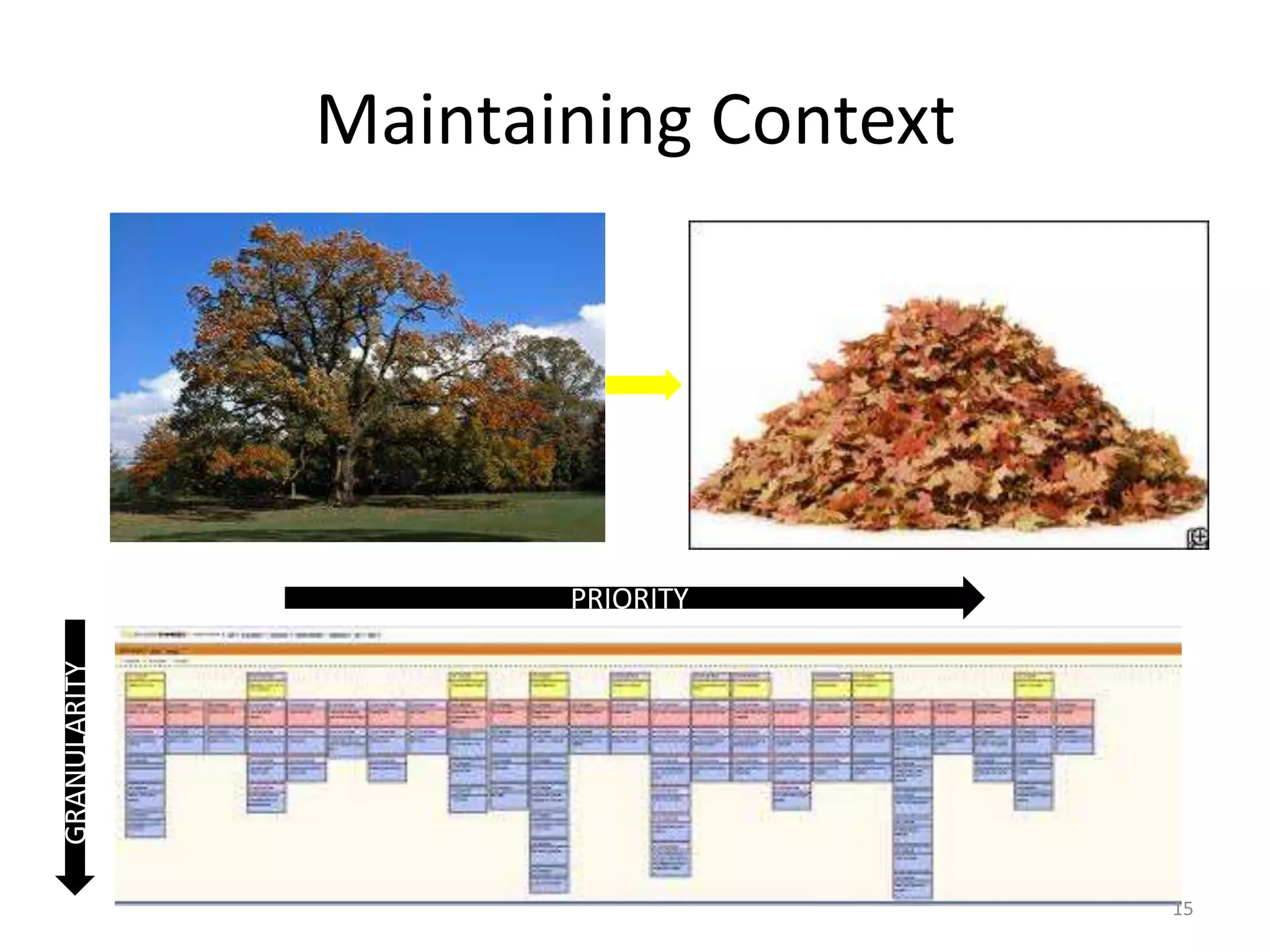

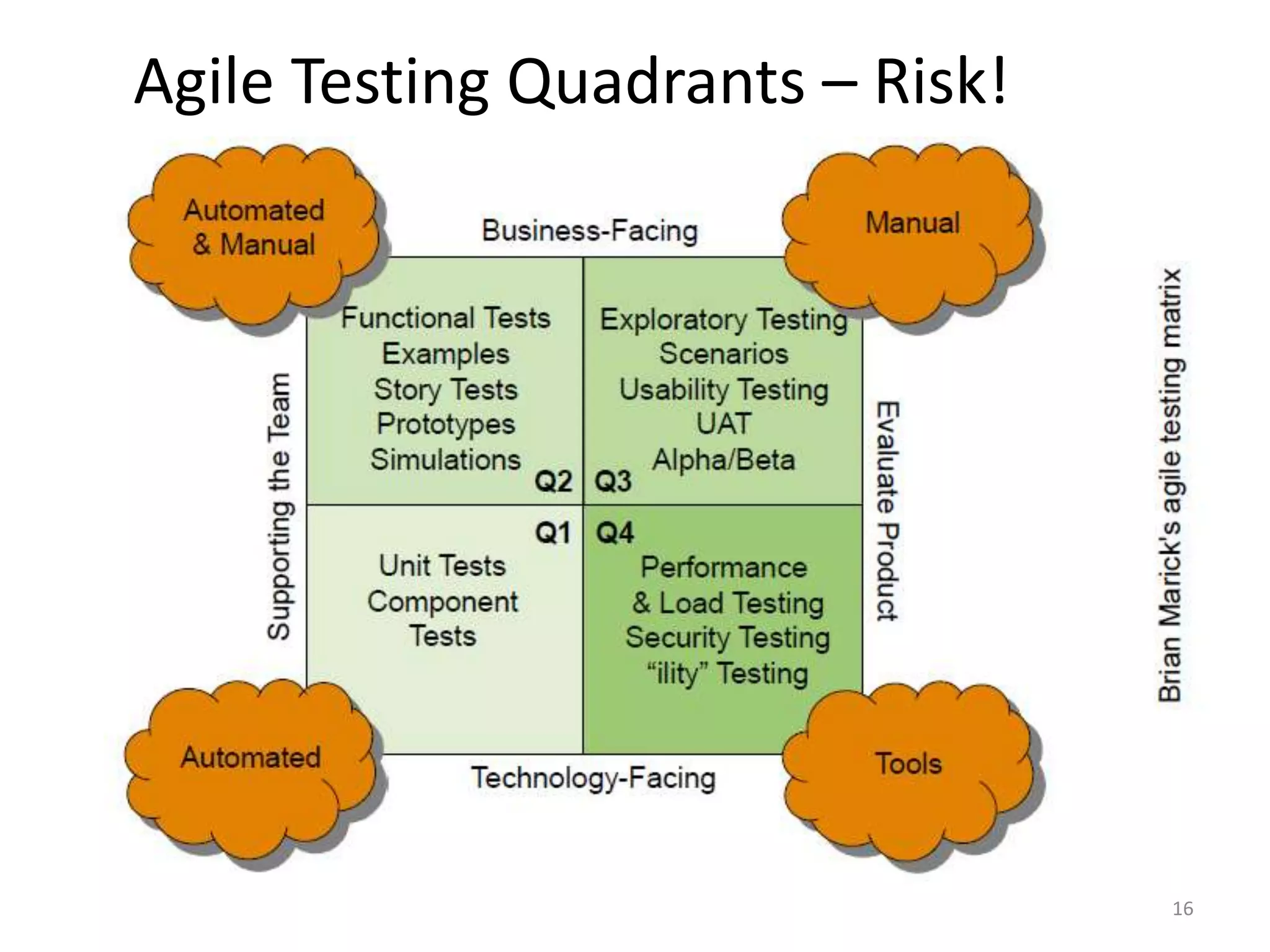

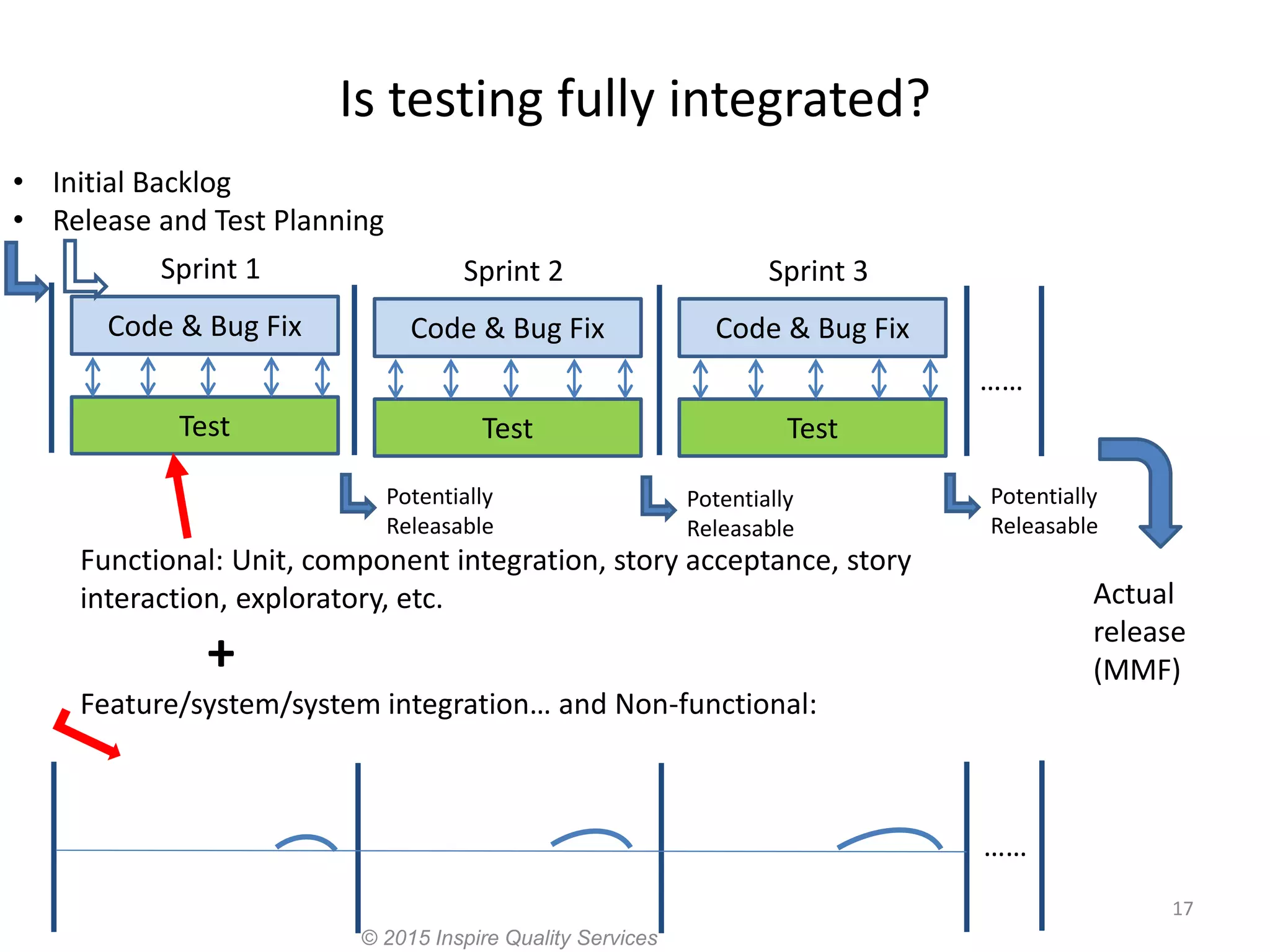

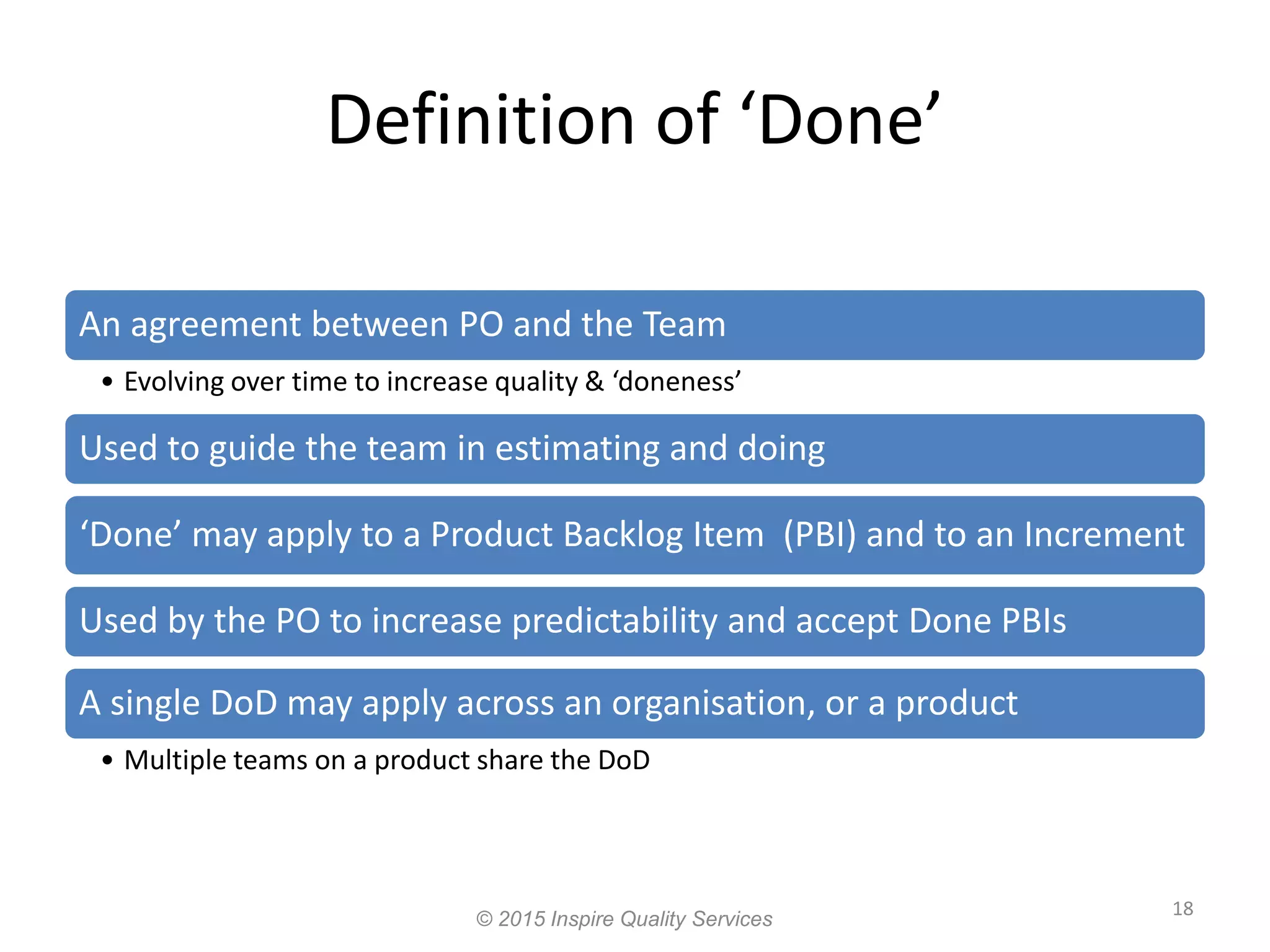

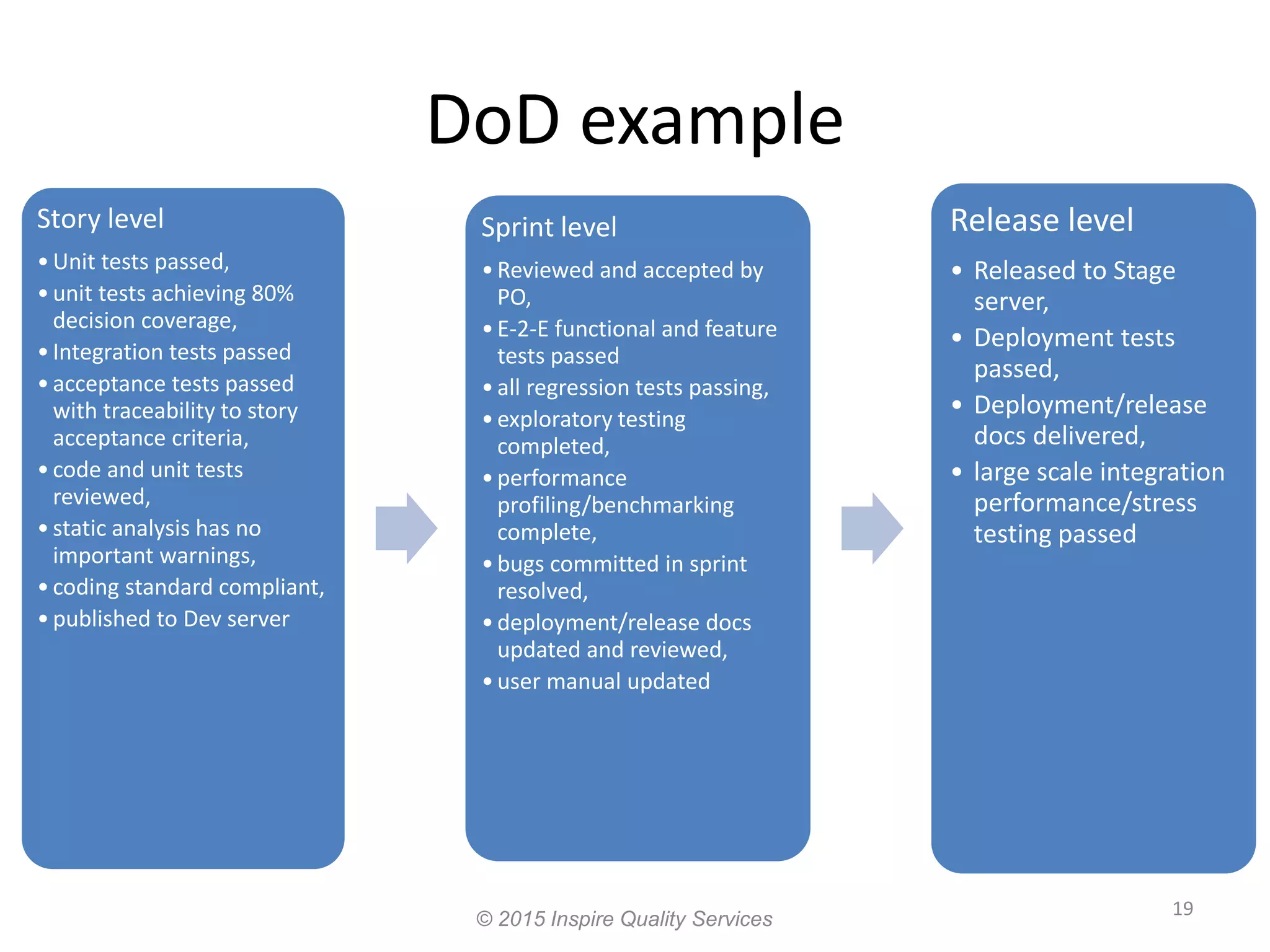

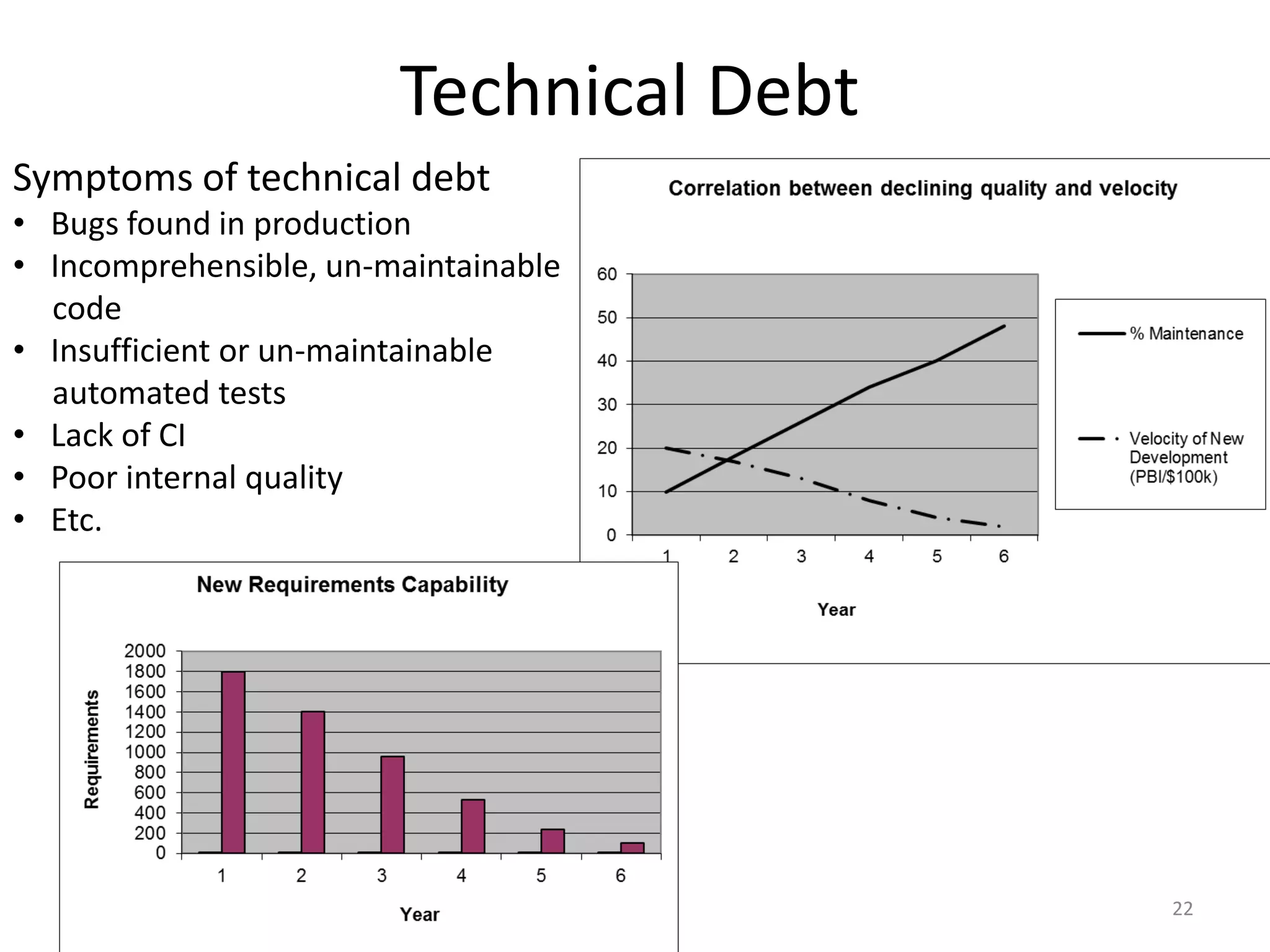

The document discusses integrating testing into the Agile lifecycle, emphasizing close collaboration between development and testing to achieve quality. It outlines the roles, events, and artifacts in Scrum, along with strategies for defining acceptance tests and ensuring that they are executed. Key insights include the importance of continuous integration and of evolving the definition of done to improve both testing and development processes.