The same structural patterns govern neural networks, code evolution, hardware performance, and perception itself. Domain changes. Architecture doesn't.

Systems Architect. AI/ML Engineer. Data Scientist. I design neural network architectures, train and deploy models, build production ML pipelines, and engineer the diagnostic instruments that reveal what other systems conceal. From statistical modeling and data-driven decision systems to real-time infrastructure and edge computing, I work the full stack, starting from the mathematical foundations.

10+ years across fintech, ad-tech, and enterprise SaaS. MS in AI/ML. MS in Computer Science (in progress, CU Boulder). Founder & Chief Architect @ ByteStack Labs.

Architectural Diagnosis — Where systems fail and why, from the math up.

Temporal Intelligence — Pattern analysis across time: code evolution, hardware performance, signal processing, music.

Mathematical Rigor — Statistical validation, reproducible methodology, every claim traceable to its mathematical basis.

Cross-Domain Engineering — Python, C++/CUDA, Go, Rust, TypeScript. From edge hardware to cloud infrastructure.

I build across domains because the structural patterns are domain-invariant. Temporal decay in a codebase follows the same mathematics as convergence failure in a neural network. The physics doesn't change. The substrate does.

Temporal Code Intelligence platform. Time-series analysis on Git commit history.

Linear regression trend analysis, cyclomatic complexity tracking, confidence scoring, and predictive decay forecasting. Domain-Driven Design with Clean Architecture. Static analysis tells you a file is complex. GitVoyant tells you whether that complexity is growing, shrinking, or stable, and at what rate.
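A minimal sketch of the trend idea, not GitVoyant's actual code: fit a least-squares line to one file's cyclomatic complexity across commits and read the slope as the rate of change. The function name `complexity_trend`, the `stable_band` threshold, and the use of R² as a rough confidence score are all assumptions made for illustration.

```python
import numpy as np

def complexity_trend(days, complexity, stable_band=0.01):
    """Classify a file's complexity trajectory from its commit history.

    days:        commit times in days since the first commit (hypothetical input)
    complexity:  cyclomatic complexity of the file at each commit
    stable_band: slope magnitude (complexity units per day) treated as
                 "stable"; an assumed threshold, tune per repository
    """
    x = np.asarray(days, dtype=float)
    y = np.asarray(complexity, dtype=float)
    if x.size < 3 or np.ptp(x) == 0:
        raise ValueError("need at least 3 commits spanning more than one timestamp")

    # Ordinary least-squares line: y ~ slope * x + intercept
    slope, intercept = np.polyfit(x, y, 1)

    # R^2 as a crude confidence score (assumption: the real scoring may differ).
    # A perfectly flat series has no variance to explain, so report 0.0.
    fitted = slope * x + intercept
    ss_res = float(np.sum((y - fitted) ** 2))
    ss_tot = float(np.sum((y - y.mean()) ** 2))
    r_squared = 1.0 - ss_res / ss_tot if ss_tot > 0 else 0.0

    if abs(slope) <= stable_band:
        trend = "stable"
    elif slope > 0:
        trend = "growing"
    else:
        trend = "shrinking"
    return {"slope_per_day": float(slope), "r_squared": r_squared, "trend": trend}
```

Calling `complexity_trend([0, 10, 20, 30], [5, 7, 9, 11])` reports a growing trend at 0.2 complexity units per day. Extrapolating the fitted line forward is one simple way to forecast decay, under the strong assumption that the trend stays linear.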

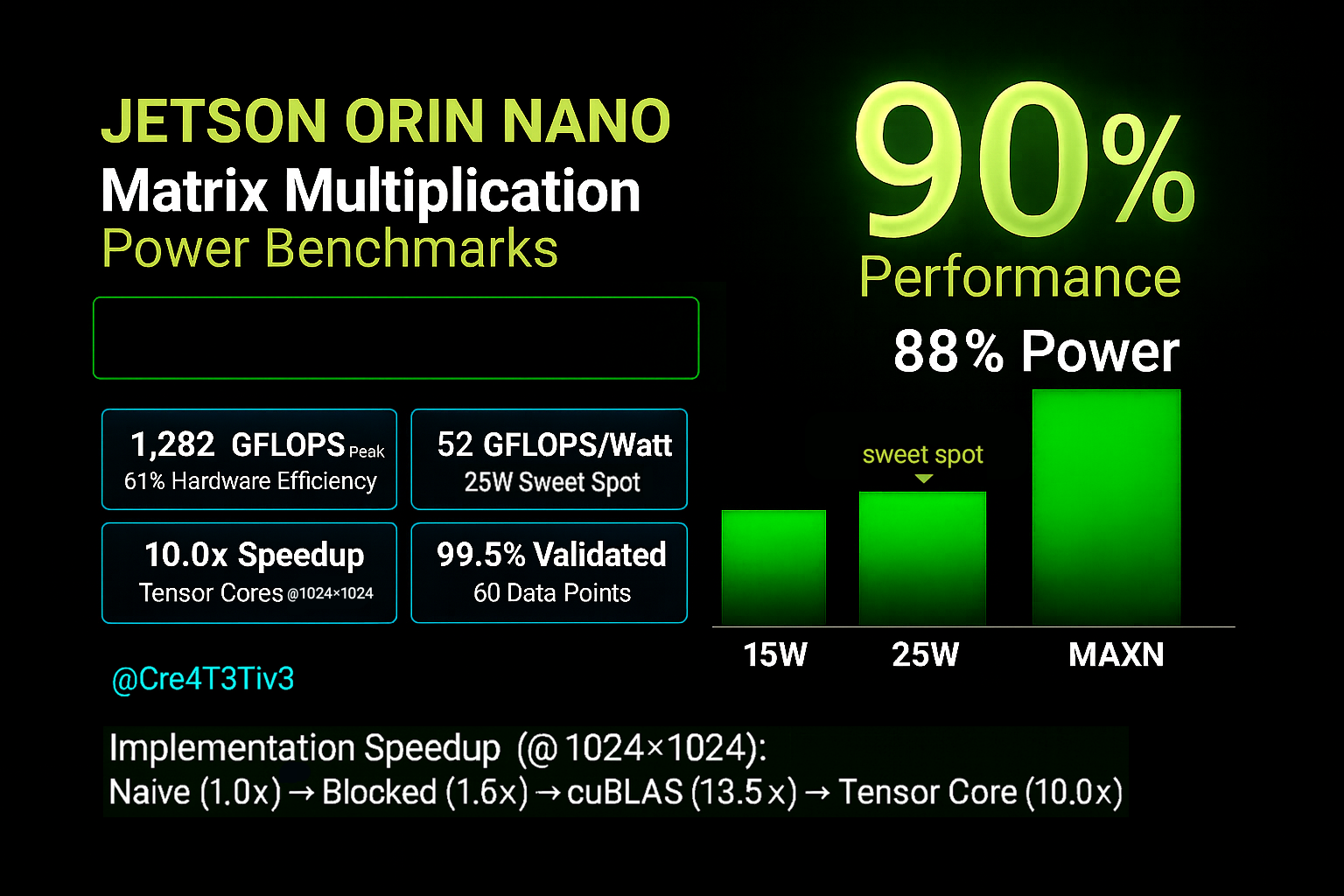

Scientific CUDA benchmarking framework. Four implementations, three power modes, five matrix sizes.

Naive, cache-blocked, cuBLAS, and Tensor Core WMMA across 15W, 25W, and MAXN on Jetson Orin Nano. C++/CUDA and Python. 1,282 GFLOPS peak, 99.5% mathematical validation, 60 data points. Every result validated against NumPy reference computation.
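The 60 data points follow from the grid: 4 implementations × 3 power modes × 5 matrix sizes. The sketch below shows how one cell of that grid could be scored and checked, assuming square matrices, the standard 2·n³ operation count for matrix multiplication, and tolerance values chosen for illustration. The helper names are hypothetical, not the framework's API.

```python
import numpy as np

def gflops(n, seconds):
    """Throughput of an n x n by n x n matmul.

    Counts 2 * n^3 floating-point operations (one multiply and one add
    per inner-product term), divided by elapsed time, in GFLOPS.
    """
    return 2.0 * n**3 / seconds / 1e9

def matches_reference(a, b, result, rtol=1e-3, atol=1e-3):
    """Check a kernel's output against a NumPy float64 reference.

    rtol and atol are assumed tolerances; reduced-precision paths such as
    Tensor Cores generally need looser bounds than full-precision ones.
    """
    reference = a.astype(np.float64) @ b.astype(np.float64)
    return bool(np.allclose(result, reference, rtol=rtol, atol=atol))
```

In use, `result` would be the matrix copied back from the GPU after a timed kernel run, and `seconds` the measured kernel time for that run.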

Benchmarking the gap between AI agent hype and architecture.

Three agent archetypes, 73-point performance spread, stress testing, network resilience, and ensemble coordination analysis with statistical validation (95% CI, Cohen's h). 0% positive synergy across all multi-agent ensemble patterns tested.
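For reference, a sketch of the two statistics named above when applied to success rates. Cohen's h is the standard effect size for a difference between two proportions. The interval method is not stated here, so the Wilson score interval is an assumption, and both function names are illustrative.

```python
import math

def cohens_h(p1, p2):
    """Effect size between two proportions via the arcsine transform.

    Common rules of thumb: |h| near 0.2 is small, 0.5 medium, 0.8 large.
    """
    return 2.0 * math.asin(math.sqrt(p1)) - 2.0 * math.asin(math.sqrt(p2))

def wilson_interval(successes, trials, z=1.96):
    """Wilson score interval for a success rate (z = 1.96 gives ~95%).

    Assumed here because it behaves better than the normal approximation
    for small samples and for rates near 0 or 1.
    """
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    denom = 1.0 + z * z / trials
    center = (p + z * z / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return center - half, center + half
```

Comparing an ensemble's success rate against its best single agent with `cohens_h`, and checking whether the two `wilson_interval` ranges overlap, is one way to test a claim of positive synergy.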

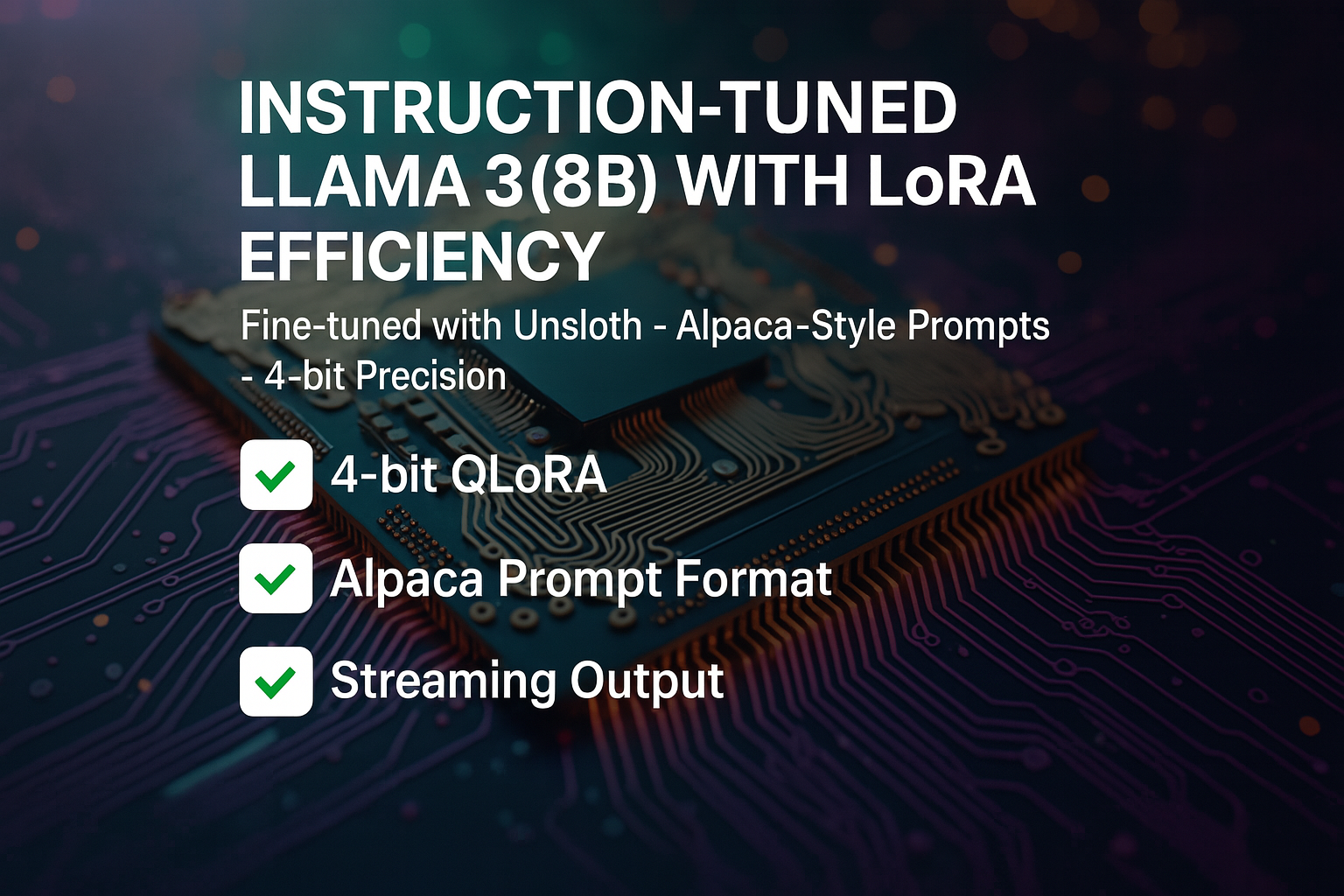

Custom model training. 4-bit QLoRA fine-tuning pipeline for LLaMA 3 8B.

End-to-end from dataset preparation through training, evaluation, and deployment. Memory-efficient instruction tuning on consumer GPUs using Unsloth. Published adapter on HuggingFace.
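A back-of-the-envelope sketch of why 4-bit quantization matters on consumer GPUs. It counts base model weights only, assuming a nominal 8 billion parameters. Quantization constants, LoRA adapter weights, optimizer state, and activations add to the total and are deliberately left out.

```python
def weight_memory_gib(num_params, bits_per_weight):
    """Memory needed for the base model weights alone, in GiB."""
    return num_params * bits_per_weight / 8 / 2**30

# Nominal "8B" parameter count (assumption: the exact figure is slightly higher).
PARAMS_8B = 8e9

print(f"16-bit weights: {weight_memory_gib(PARAMS_8B, 16):.1f} GiB")
print(f" 4-bit weights: {weight_memory_gib(PARAMS_8B, 4):.1f} GiB")
```

Under these assumptions the weights shrink from roughly 14.9 GiB to roughly 3.7 GiB, which leaves headroom for the adapters and training state on a single consumer card.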

In a field that rewards premature convergence, I build systems that converge last and converge right.