You can use Dataflow ML's ability to process data at scale for prediction and inference pipelines and for data preparation for training.

Requirements and limitations

- Dataflow ML supports batch and streaming pipelines.

- The RunInference API is supported in Apache Beam 2.40.0 and later versions.

- The MLTransform API is supported in Apache Beam 2.53.0 and later versions.

- Model handlers are available for PyTorch, scikit-learn, TensorFlow, ONNX, and TensorRT. For unsupported frameworks, you can use a custom model handler.

Data preparation for training

Use the

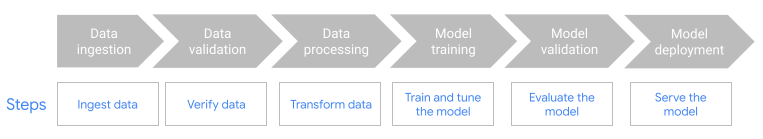

MLTransformfeature to prepare your data for training ML models. For more information, see Preprocess data withMLTransform.Use Dataflow with ML-OPS frameworks, such as Kubeflow Pipelines (KFP) or TensorFlow Extended (TFX). To learn more, see Dataflow ML in ML workflows.

Prediction and inference pipelines

Dataflow ML combines the power of Dataflow with Apache Beam's RunInference API. With the RunInference API, you define the model's characteristics and properties and pass that configuration to the RunInference transform. This feature lets you run the model within your Dataflow pipelines without needing to know the model's implementation details. You can choose the framework that best suits your data, such as TensorFlow or PyTorch.

Run multiple models in a pipeline

Use the RunInference transform to add multiple inference models to your Dataflow pipeline. For more information, including code details, see Multi-model pipelines in the Apache Beam documentation.

Build a cross-language pipeline

To use RunInference with a Java pipeline, create a cross-language Python transform. The pipeline calls the transform, which does the preprocessing, postprocessing, and inference.

For detailed instructions and a sample pipeline, see Using RunInference from the Java SDK.

Use GPUs with Dataflow

For batch or streaming pipelines that require the use of accelerators, you can run Dataflow pipelines on NVIDIA GPU devices. For more information, see Run a Dataflow pipeline with GPUs.

Troubleshoot Dataflow ML

This section provides troubleshooting strategies and links that you might find helpful when using Dataflow ML.

Stack expects each tensor to be equal size

If you provide images of different sizes or word embeddings of different lengths when using the RunInference API, the following error might occur:

File "/beam/sdks/python/apache_beam/ml/inference/pytorch_inference.py", line 232, in run_inference
    batched_tensors = torch.stack(key_to_tensor_list[key])
RuntimeError: stack expects each tensor to be equal size, but got [12] at entry 0 and [10] at entry 1 [while running 'PyTorchRunInference/ParDo(_RunInferenceDoFn)']

This error occurs because the RunInference API can't batch tensor elements of different sizes. For workarounds, see Unable to batch tensor elements in the Apache Beam documentation.
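One general way to avoid mismatched sizes is to bring every element to the same fixed length before it reaches the RunInference transform. The sketch below is an assumption-laden illustration, not the workaround from the Apache Beam documentation: `pad_or_truncate` is a hypothetical helper, and the target length and pad value depend on your model.

```python
from typing import List, Sequence


def pad_or_truncate(
    values: Sequence[float], length: int, pad_value: float = 0.0
) -> List[float]:
    """Return a copy of `values` with exactly `length` elements.

    Longer inputs are cut off at `length`; shorter inputs are padded at the
    end with `pad_value`.
    """
    trimmed = list(values[:length])
    return trimmed + [pad_value] * (length - len(trimmed))


if __name__ == "__main__":
    # Two sequences of different lengths, like the [12] and [10] in the error.
    first = [1.0] * 12
    second = [2.0] * 10
    fixed = [pad_or_truncate(seq, 12) for seq in (first, second)]
    print([len(seq) for seq in fixed])
```

In a pipeline, a helper like this could run in a `beam.Map` step before the elements are converted to tensors. Use a single fixed length for all elements, because you don't control which elements RunInference groups into a batch.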

Avoid out-of-memory errors with large models

When you load a medium or large ML model, your machine might run out of memory. Dataflow provides tools to help avoid out-of-memory (OOM) errors when loading ML models. For more information, see RunInference transform best practices.

What's next

- Explore the Dataflow ML notebooks to view specific use cases.

- Get in-depth information about using ML with Apache Beam in Apache Beam's AI/ML pipelines documentation.

- Learn more about the RunInference API.

- Learn about RunInference best practices.

- Learn about the metrics that you can use to monitor your RunInference transform.