Inspiration

Traditional language learning often suffers from a core weakness: rote memorization of vocabulary without context. Learners forget words quickly and struggle to apply them in real-life communication. Learning through movies or real-life videos is highly effective, but finding the exact video segment that contains a target word is incredibly time-consuming. Drawing on practical teaching experience, we wanted to fully automate this process with AI, turning any video into an interactive language lab where learners can immerse themselves in the language and practice accurate pronunciation.

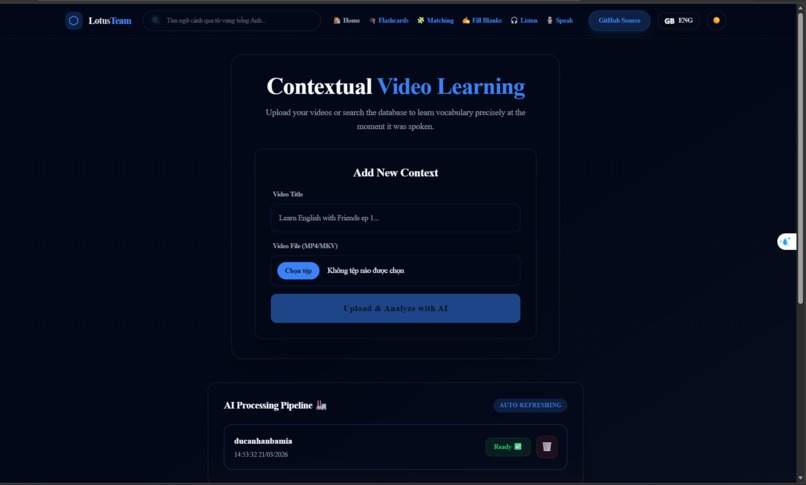

What it does

Contextual Video Learning is a comprehensive language learning web application:

Smart Video Processing: Users upload videos, and the AI automatically extracts the audio, transcribes the speech, and generates subtitles with millisecond-accurate timestamps.

Contextual Search: Search for any vocabulary word, and the system instantly retrieves and plays the exact video segment containing that word, helping learners understand how it's used in real contexts.

Interactive Player: The video player synchronizes with the subtitles, allowing users to loop short segments for dictation and shadowing practice.

AI Speaking Assessment: Integrates TOEIC-standard speaking practice. Users record themselves reading a passage or answering AI-generated questions, and the system provides detailed scoring based on Pronunciation, Fluency, Vocabulary, and Grammar.
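To illustrate the Contextual Search feature described above, here is a minimal sketch of looking up a word in timestamped transcript segments. The `Segment` class, the `find_word` function, and the sample sentences are hypothetical names and data chosen for illustration; the project's real search may instead run as a database query.

```python
import re
from dataclasses import dataclass


@dataclass
class Segment:
    start: float  # segment start time in seconds
    end: float    # segment end time in seconds
    text: str     # transcribed sentence


def find_word(segments, word):
    """Return the segments whose text contains `word` as a whole word."""
    # \b anchors keep "whisk" from matching inside "whisking".
    pattern = re.compile(rf"\b{re.escape(word)}\b", re.IGNORECASE)
    return [s for s in segments if pattern.search(s.text)]


# Hypothetical transcript data.
segments = [
    Segment(0.0, 2.4, "Welcome back to the kitchen."),
    Segment(2.4, 5.1, "Today we will whisk the eggs."),
    Segment(5.1, 8.0, "Whisking takes about a minute."),
]

hits = find_word(segments, "whisk")
for hit in hits:
    print(f"{hit.start:.1f}s to {hit.end:.1f}s: {hit.text}")
```

The player can then seek to `hit.start` and loop until `hit.end`. Whole-word matching is a design choice in this sketch; matching inflected forms such as "whisking" would need stemming or lemmatization.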

How we built it

The system is designed with a robust architecture to ensure high performance and stability:

Frontend: Built with Next.js (React), TypeScript, and Tailwind CSS for a smooth interactive interface. The AudioRecorder component utilizes the MediaRecorder API for direct browser recording.

Backend: Developed using FastAPI (Python), with a SQLite database accessed asynchronously through aiosqlite.

AI & Data Pipeline:

- Powered by OpenAI Whisper for highly accurate Speech-to-Text.

- Integrated FFmpeg to preprocess media files (extracting the audio track from the video and converting it to 16 kHz .wav) to reduce RAM/VRAM usage.

- Integrated LLMs acting as TOEIC examiners for scoring.

Asynchronous Processing: Completely decoupled the heavy AI processing pipeline from the web server using a Message Queue with Celery and Redis.
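The FFmpeg preprocessing step mentioned above could look roughly like the sketch below. The function names and file names are hypothetical, and the mono downmix (`-ac 1`) and 16-bit PCM codec are assumptions that the write-up does not state; only the 16 kHz .wav target comes from the description.

```python
import subprocess
from pathlib import Path


def build_ffmpeg_command(video_path, wav_path, sample_rate=16000):
    """Build the FFmpeg argument list that extracts audio as a WAV file."""
    return [
        "ffmpeg",
        "-y",                     # overwrite the output file if it exists
        "-i", str(video_path),    # input media file
        "-vn",                    # drop the video stream
        "-ac", "1",               # downmix to mono (assumption)
        "-ar", str(sample_rate),  # resample to 16 kHz
        "-c:a", "pcm_s16le",      # 16-bit PCM audio (assumption)
        str(wav_path),
    ]


def extract_audio(video_path, wav_path):
    """Run FFmpeg; raises CalledProcessError if the conversion fails."""
    subprocess.run(
        build_ffmpeg_command(video_path, wav_path),
        check=True,
        capture_output=True,
    )
    return Path(wav_path)


# Building the command does not require FFmpeg to be installed.
cmd = build_ffmpeg_command("lesson.mp4", "lesson.wav")
print(" ".join(cmd))
```

Keeping command construction separate from execution makes the arguments easy to inspect and test without invoking FFmpeg.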

Challenges we ran into

Event Loop Blocking: Initially, running the Whisper model directly in FastAPI's BackgroundTasks froze the entire server whenever several requests arrived at once. We restructured the system, moving the AI tasks to independent Celery workers.

Async/Sync Conflicts: Celery workers operate synchronously, while our database (aiosqlite) is asynchronous. Synchronizing state data from Celery back to the database required careful handling using asyncio.run.
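The pattern described here, calling async database code from a synchronous worker through `asyncio.run`, can be sketched with the standard library alone. In this sketch the standard `sqlite3` module run via `asyncio.to_thread` stands in for aiosqlite, and the `videos` table, the function names, and the `transcribe_task` body are all hypothetical rather than the project's actual code.

```python
import asyncio
import sqlite3
import tempfile
from pathlib import Path


def _write_status(db_path, video_id, status):
    # Blocking write; a stand-in for an aiosqlite query (assumption).
    conn = sqlite3.connect(db_path)
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(
                "CREATE TABLE IF NOT EXISTS videos "
                "(id TEXT PRIMARY KEY, status TEXT)"
            )
            conn.execute(
                "INSERT OR REPLACE INTO videos (id, status) VALUES (?, ?)",
                (video_id, status),
            )
    finally:
        conn.close()


async def update_status(db_path, video_id, status):
    """Async database helper, as the web server side would use it."""
    await asyncio.to_thread(_write_status, db_path, video_id, status)


def read_status(db_path, video_id):
    conn = sqlite3.connect(db_path)
    try:
        row = conn.execute(
            "SELECT status FROM videos WHERE id = ?", (video_id,)
        ).fetchone()
        return row[0] if row else None
    finally:
        conn.close()


def transcribe_task(db_path, video_id):
    """Body of a hypothetical synchronous worker task."""
    # Each asyncio.run call starts a fresh event loop and closes it on return.
    asyncio.run(update_status(db_path, video_id, "processing"))
    # ... the heavy transcription work would run here ...
    asyncio.run(update_status(db_path, video_id, "done"))


db_path = str(Path(tempfile.mkdtemp()) / "demo.db")
transcribe_task(db_path, "video-1")
print(read_status(db_path, "video-1"))
```

Note that `asyncio.run` raises a `RuntimeError` if it is called from a thread that already has a running event loop, so this approach only fits workers that are synchronous from end to end.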

Secure Upload Pipeline: Relying solely on file extensions for security is risky. Applying secure programming principles, we implemented a mechanism to read Magic Bytes using the filetype library and chunked the file uploads using aiofiles to prevent memory exhaustion and mitigate potential malicious payloads.
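The two ideas in this paragraph, checking the file signature instead of the extension and copying the upload in bounded chunks, can be sketched as follows. This is a standard-library stand-in, not the project's code: the hand-written signature checks replace the filetype library, plain file objects replace aiofiles, and the size limit, chunk size, and all names are hypothetical.

```python
import io

MAX_UPLOAD_BYTES = 500 * 1024 * 1024  # hypothetical size limit
CHUNK_SIZE = 1024 * 1024              # copy 1 MiB at a time


class UploadRejected(Exception):
    """Raised when an upload fails validation."""


def sniff_media_type(header):
    """Guess the container from the first 12 bytes, ignoring the file name."""
    if len(header) >= 8 and header[4:8] == b"ftyp":
        return "mp4"        # ISO base media file (MP4 family)
    if header.startswith(b"\x1a\x45\xdf\xa3"):
        return "matroska"   # EBML header shared by MKV and WebM
    if header[:4] == b"RIFF" and header[8:12] == b"WAVE":
        return "wav"
    return None


def save_upload(src, dst, max_bytes=MAX_UPLOAD_BYTES, chunk_size=CHUNK_SIZE):
    """Validate the signature, then copy src to dst chunk by chunk."""
    header = src.read(12)
    kind = sniff_media_type(header)
    if kind is None:
        raise UploadRejected("unrecognized file signature")
    dst.write(header)
    written = len(header)
    while True:
        chunk = src.read(chunk_size)
        if not chunk:
            break
        written += len(chunk)
        if written > max_bytes:
            raise UploadRejected("file exceeds the size limit")
        dst.write(chunk)
    return kind, written


# A fake MP4 header followed by padding, used only for demonstration.
fake_mp4 = b"\x00\x00\x00\x18ftypmp42" + b"\x00" * 100
out = io.BytesIO()
kind, size = save_upload(io.BytesIO(fake_mp4), out)
print(kind, size)
```

Because only one chunk is held in memory at a time, peak memory use stays near `chunk_size` regardless of the upload size. A signature check narrows what the server accepts, but it does not prove the rest of the file is well formed.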

Accomplishments that we're proud of

Successfully building a fully automated, decoupled AI processing pipeline that doesn't compromise the user experience on the web interface.

Solving the synchronization challenge between the real-time Video Player and the Transcript list through smart database schema design.

Integrating a complex voice assessment workflow into a single platform, from frontend audio capture to backend AI scoring.

What we learned

We deepened our mastery of integrating a modern frontend framework (Next.js) with a heavy data-processing backend (FastAPI + AI). The biggest takeaway was learning how to manage system resources (RAM/CPU) when handling large media files and designing message queues (Celery/Redis) to ensure the application can scale effectively in a production environment.

What's next for Contextual Video Learning

YouTube Integration: Integrating yt-dlp to fetch videos directly from YouTube without manual downloads.

Auto-Translation: Adding automatic bilingual subtitles via translation APIs.

Spaced Repetition System (SRS): Allowing users to save vocabulary and export it directly to Anki flashcards.

Role-based Access: Building a classroom feature so educators can assign video-based homework to students.

Built With

- celery

- fastapi

- next.js

- openai-whisper

- python

- react

- redis

- sqlalchemy

- sqlite

- tailwind-css

- typescript
