ETEST ONE — Project Story

Inspiration

The idea started with a simple observation during a visit to ETEST Vietnam's office.

A mother called in asking one question: "Is my child doing okay?"

The counselor spent 20 minutes pulling up spreadsheets, checking Zalo messages, and trying to piece together an answer from scattered notes. The mother had been paying $20,000 for her daughter's study abroad preparation — and the best the system could offer was a phone call and a gut feeling.

That conversation stuck with us.

We also noticed something happening on the other side of the journey. University admissions officers were quietly sounding alarms about AI-generated applications. A student who spent 14 months writing, revising, attending camps, and working with mentors looked identical on paper to someone who spent 10 minutes with ChatGPT. The credential system that study abroad programs had built over decades was breaking.

Two problems. One platform. That's how ETEST ONE was born.

What We Built

ETEST ONE has two modules, each solving one side of the same broken system.

Module 1 — Smart Parenting

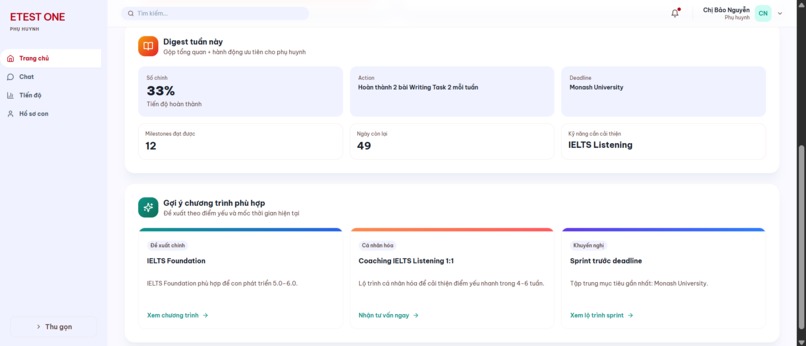

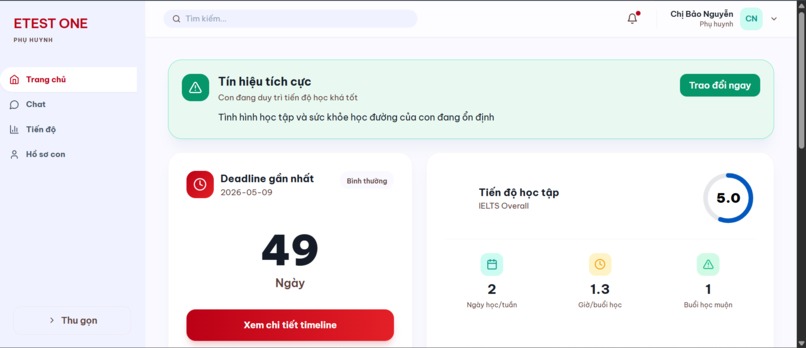

Smart Parenting is built for the most overlooked person in every study abroad journey: the parent.

The core insight is simple. ETEST already has all the data — IELTS scores, study streaks, behavioral logs, milestone progress, school deadlines. But none of it was ever surfaced to the person writing the check. Smart Parenting closes that gap.

The module runs on what we call the Insight Engine — a pipeline of four AI systems working together:

- An AI Chatbot that answers parent questions in plain language, backed by real student data. Not generic advice — specific answers grounded in their child's actual profile.

- A Wellbeing Engine that monitors behavioral patterns and fires alerts when signals cross defined thresholds. Study streak dropping more than 35%? Late-night sessions three days running? The parent knows before it becomes a crisis.

- A Digest Translator that converts raw statistics into one number, one action, and one deadline — designed for a 45-year-old parent who has never heard of an IELTS band score.

- An Upsell Engine that matches student skill gaps with relevant ETEST courses and camps at the right moment in the academic calendar.
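The Wellbeing Engine's threshold checks could be sketched roughly as follows. This is an illustration, not the production rules: only the 35% streak drop and the three-day run come from the description above. The `DailyLog` shape, the function names, the alert codes, and the 23:00 to 03:59 definition of "late night" are assumptions.

```python
from dataclasses import dataclass
from datetime import time

STREAK_DROP_THRESHOLD = 0.35  # from the text: a drop of more than 35%
LATE_NIGHT_RUN_DAYS = 3       # from the text: three days running


@dataclass(frozen=True)
class DailyLog:
    """Hypothetical per-day record: when the last study session ended."""
    last_session_end: time


def is_late_night(t: time) -> bool:
    # Assumption: "late night" means 23:00 through 03:59.
    return t >= time(23, 0) or t < time(4, 0)


def streak_dropped(previous_streak: int, current_streak: int) -> bool:
    """True when the streak fell by more than the threshold fraction."""
    if previous_streak <= 0:
        return False  # nothing to compare against
    drop = (previous_streak - current_streak) / previous_streak
    return drop > STREAK_DROP_THRESHOLD


def wellbeing_alerts(previous_streak: int, current_streak: int,
                     logs: list[DailyLog]) -> list[str]:
    """Return an alert code for every signal that crossed its threshold."""
    alerts = []
    if streak_dropped(previous_streak, current_streak):
        alerts.append("streak_drop")
    recent = logs[-LATE_NIGHT_RUN_DAYS:]
    if len(recent) == LATE_NIGHT_RUN_DAYS and all(
        is_late_night(log.last_session_end) for log in recent
    ):
        alerts.append("late_night_run")
    return alerts
```

Keeping each signal as a small pure function makes the thresholds easy to tune and to test in isolation before wiring them to parent notifications.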

The design principle throughout was radical simplicity. No dashboards. No charts. No jargon. Just the signal a parent actually needs, delivered at the moment they need it.

Module 2 — ETESTER

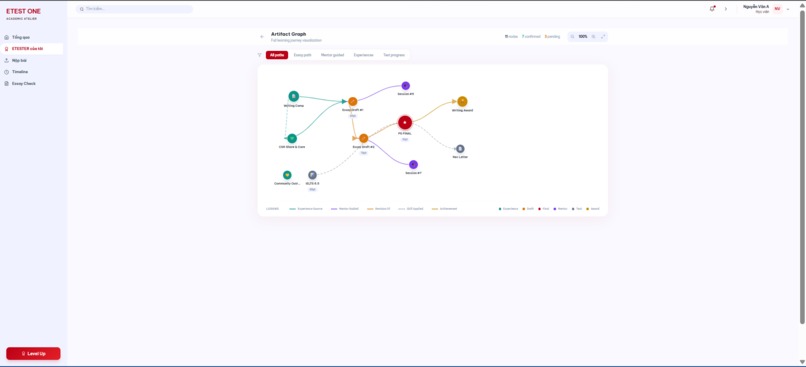

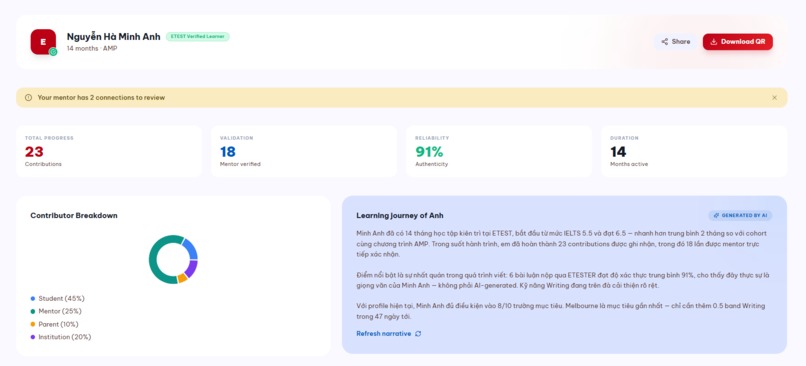

ETESTER is a living digital credential — a proof of learning that accumulates over time rather than capturing a single moment.

The core insight came from rejecting the standard approach to the AI essay problem. Most tools ask: "Did AI write this?" That's an arms race. AI gets better, detectors get better, and real students get caught in the crossfire.

ETESTER asks a different question entirely: "Does this essay fit who this student actually is — across 14 months of documented, verified learning?"

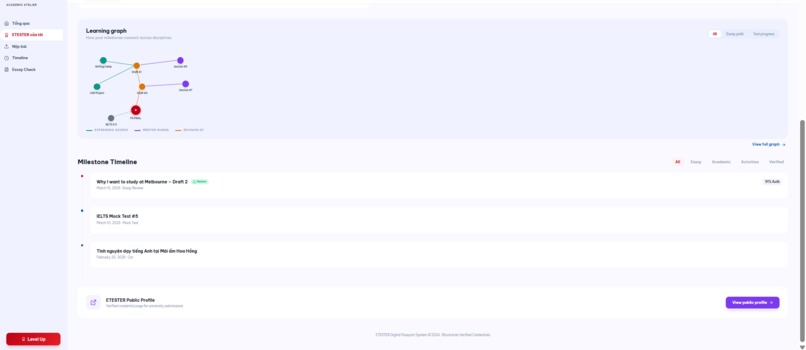

The answer to that question requires an artifact graph — a network of timestamped learning events (camps, essay drafts, mock tests, mentor sessions, CSR activities) connected by causal relationships. ETESTER builds this graph through what we call the Trust Engine, which consists of four AI systems:

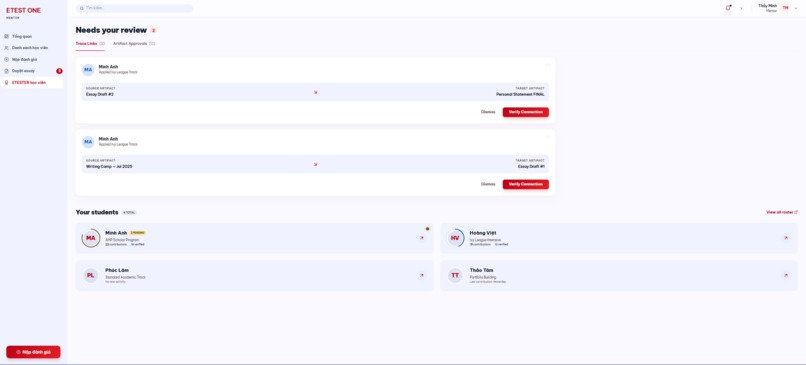

Trace Engine — When a student submits a new artifact, the Trace Engine reads its content alongside the five most recent prior artifacts and identifies causal relationships. Not just "happened before" — but "actually contributed to." A Writing Camp in July that introduced PEEL methodology, for example, gets linked to Essay Draft #1 in October with a relationship type of experience_source and a confidence score of 0.94.
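The shape of a trace link, and the "five most recent prior artifacts" window that the Trace Engine reads, might look like the sketch below. The class and field names are hypothetical and do not reflect the actual `MilestoneTraceLink` schema. The LLM call that proposes the relationship is omitted.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Artifact:
    """Hypothetical minimal view of a timestamped learning event."""
    artifact_id: str
    kind: str
    created_at: datetime


@dataclass(frozen=True)
class TraceLink:
    """Hypothetical causal edge between two artifacts."""
    source_id: str
    target_id: str
    relation_type: str        # e.g. "experience_source"
    confidence: float         # 0.0 to 1.0
    mentor_confirmed: bool = False

    def __post_init__(self):
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0 and 1")


def context_window(new: Artifact, history: list[Artifact],
                   size: int = 5) -> list[Artifact]:
    """Return up to `size` artifacts created before `new`, newest first."""
    prior = [a for a in history if a.created_at < new.created_at]
    prior.sort(key=lambda a: a.created_at, reverse=True)
    return prior[:size]


# The example from the text; the year and IDs are placeholders.
camp = Artifact("writing-camp", "camp", datetime(2024, 7, 1))
draft = Artifact("essay-draft-1", "essay_draft", datetime(2024, 10, 1))
link = TraceLink(camp.artifact_id, draft.artifact_id, "experience_source", 0.94)
```

A link starts unconfirmed (`mentor_confirmed=False`), which matches the flow in which the AI only suggests and a mentor confirms.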

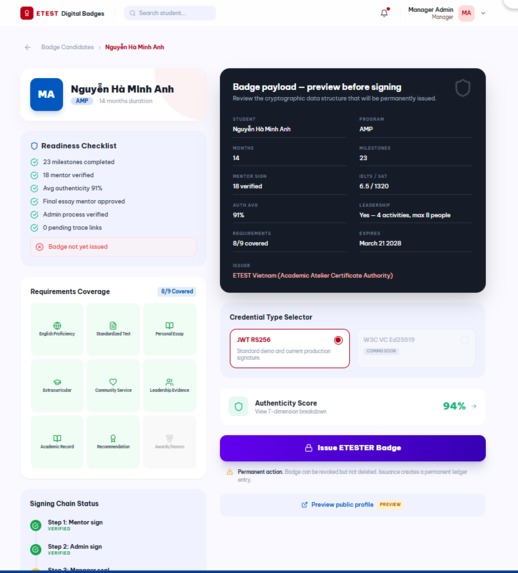

Auth Scorer — When a mentor confirms a trace link, the Auth Scorer runs a 7-dimension analysis across the full artifact graph:

$$\text{auth\_score} = \sum_{i=1}^{7} w_i \cdot d_i$$

where $w_i$ is the weight of dimension $i$ and $d_i \in [0, 100]$ is the score for that dimension. The seven dimensions are:

| Dimension | Weight | Question |
|---|---|---|
| $d_1$ Growth explained | 0.25 | Is the score increase justified by artifacts? |
| $d_2$ Topic familiarity | 0.15 | Does claimed experience match artifact history? |
| $d_3$ Timeline consistency | 0.15 | Do essay references have prior artifacts? |
| $d_4$ Error pattern | 0.15 | Are writing errors consistent with history? |
| $d_5$ Mentor corroboration | 0.20 | Do mentor notes align with essay quality? |
| $d_6$ Behavioral context | 0.05 | Does study behavior support submission quality? |
| $d_7$ Writing fingerprint | 0.05 | Is writing structure consistent across drafts? |

The critical design decision was the verdict logic for $d_1$. If a score increase is large but confirmed artifacts exist with confidence $\geq 0.7$ AND mentor corroboration $d_5 \geq 80$, the verdict is justified_growth — not suspicious_jump. Growth is expected after real learning. ETESTER proves the learning happened.
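The weighted sum and the $d_1$ verdict rule can be sketched together. The weights and the 0.7 and 80 thresholds come from the text above. The dictionary keys, the function names, the `large_jump` cutoff of 10 points, and the `normal_growth` label for small increases are assumptions made for illustration.

```python
# Weights from the seven-dimension table; they sum to 1.0.
WEIGHTS = {
    "growth_explained": 0.25,
    "topic_familiarity": 0.15,
    "timeline_consistency": 0.15,
    "error_pattern": 0.15,
    "mentor_corroboration": 0.20,
    "behavioral_context": 0.05,
    "writing_fingerprint": 0.05,
}


def auth_score(dims: dict[str, float]) -> float:
    """Weighted sum of the seven dimension scores, each in [0, 100]."""
    if set(dims) != set(WEIGHTS):
        raise ValueError("expected exactly the seven scoring dimensions")
    for name, value in dims.items():
        if not 0 <= value <= 100:
            raise ValueError(f"{name} must be in [0, 100]")
    return sum(WEIGHTS[name] * dims[name] for name in WEIGHTS)


def growth_verdict(score_increase: float,
                   confirmed_link_confidences: list[float],
                   mentor_corroboration: float,
                   large_jump: float = 10.0) -> str:
    """Classify a score increase; `large_jump` is an assumed cutoff."""
    if score_increase < large_jump:
        return "normal_growth"  # hypothetical label for small increases
    has_evidence = any(c >= 0.7 for c in confirmed_link_confidences)
    if has_evidence and mentor_corroboration >= 80:
        return "justified_growth"
    return "suspicious_jump"
```

For example, `growth_verdict(16, [0.94], 85)` returns `justified_growth`, while the same 16-point increase with no confirmed links returns `suspicious_jump`.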

Narrative Builder — After each confirmed event, the Narrative Builder reads the full artifact graph and produces a coherent learning story in both English (for universities) and Vietnamese (for parents). The narrative rebuilds itself each time new verified evidence is added — it is never static.

Leadership Aggregator — Leadership claims in college applications are typically self-reported and unverifiable. The Leadership Aggregator reads all artifacts where had_leadership_role = true, cross-references team sizes, outcomes, and mentor confirmations, and synthesizes a credibility-weighted profile. The output is evidence-based rather than claim-based.

The signing chain has three stages — Mentor (content verification), Admin (process verification), and ETEST Manager (institutional seal) — mirroring the trust model of established credential systems like IELTS. No single actor can fabricate the chain alone.
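One way to enforce the three-stage order is a small state machine, sketched below. The class name, the stage identifiers, and the rule that each stage must be signed by a different person are assumptions drawn from the "no single actor" requirement. Cryptographic signing is left out.

```python
from dataclasses import dataclass, field

# Stage order from the text: Mentor, then Admin, then ETEST Manager.
STAGES = ("mentor", "admin", "manager")


@dataclass
class SigningChain:
    """Hypothetical record of who signed each stage, in order."""
    signatures: list[tuple[str, str]] = field(default_factory=list)

    @property
    def is_complete(self) -> bool:
        return len(self.signatures) == len(STAGES)

    def sign(self, stage: str, actor_id: str) -> None:
        if self.is_complete:
            raise ValueError("chain is already complete")
        expected = STAGES[len(self.signatures)]
        if stage != expected:
            raise ValueError(f"expected stage {expected!r}, got {stage!r}")
        # Assumed rule: one person cannot sign more than one stage.
        if any(actor == actor_id for _, actor in self.signatures):
            raise ValueError("actor has already signed an earlier stage")
        self.signatures.append((stage, actor_id))
```

A badge would only be issued once `is_complete` is true, so skipping a stage or reusing a signer fails before any credential exists.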

The final output is a QR badge containing a JWT-signed payload. A university scans it and sees not just scores — but 14 months of verified, connected, mentor-confirmed learning. No account required. No login. Instant trust.

How We Built It

The backend is built on FastAPI with SQLAlchemy and PostgreSQL, inheriting the data models already established by the Smart Parenting team. The key design principle was extension over replacement — ETESTER adds new tables (MilestoneTraceLink, ArtifactForm, AuthScoringResult, ETESTERCore, ETESTERBadge) without modifying any existing Smart Parenting models.

The frontend is React with Vite and Tailwind CSS. The artifact graph visualization is built in raw SVG — we deliberately avoided heavy graph libraries to keep the bundle size small and the interaction model simple.

All four AI systems in the Trust Engine call the same LLM endpoint with carefully structured prompts. Each prompt is designed to return structured JSON, with try/catch fallback to pre-written cached responses in case of timeout — ensuring the demo never breaks during a live presentation.
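The timeout-with-fallback pattern described above might look like this minimal sketch. The `llm_call` parameter stands in for whatever client actually hits the endpoint. The cache contents, the 8-second default, and falling back on malformed JSON as well as on timeout are assumptions.

```python
import json
from concurrent.futures import ThreadPoolExecutor
from concurrent.futures import TimeoutError as FutureTimeout
from typing import Callable

# Hypothetical pre-written responses, keyed by Trust Engine system name.
CACHED_RESPONSES: dict[str, dict] = {
    "trace_engine": {"links": [], "source": "cache"},
}


def call_with_fallback(system: str, prompt: str,
                       llm_call: Callable[[str], str],
                       timeout_s: float = 8.0) -> dict:
    """Return the parsed LLM JSON, or the cached response on failure."""
    pool = ThreadPoolExecutor(max_workers=1)
    try:
        future = pool.submit(llm_call, prompt)
        raw = future.result(timeout=timeout_s)  # raises on timeout
        parsed = json.loads(raw)
        if not isinstance(parsed, dict):
            return CACHED_RESPONSES[system]
        return parsed
    except (FutureTimeout, json.JSONDecodeError):
        return CACHED_RESPONSES[system]
    finally:
        # Do not block on a slow call; its worker thread finishes on its own.
        pool.shutdown(wait=False)
```

The caller always receives a dictionary of the expected shape, which is what keeps a live demo running when the endpoint is slow.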

The QR badge uses JWT RS256 signing. The production roadmap replaces this with W3C Verifiable Credentials (Ed25519, did:web:etest.edu.vn) — the same standard used by MIT Digital Diplomas — but JWT was the right call for a 10-hour hackathon build.

Challenges

The "wrong question" problem. The hardest design challenge in ETESTER was resisting the instinct to build an AI detector. Every early prototype tried to answer "did AI write this?" — and every prototype failed, because the question is unanswerable with confidence. The breakthrough came when we reframed the problem entirely: stop asking about the essay, start asking about the student. That reframe changed everything — the data model, the prompt design, the trust architecture.

Justified growth vs suspicious jump. The Auth Scorer needed to handle a specific edge case that took significant iteration: a student who genuinely improves dramatically after a learning event. A naive scoring system would flag a 16-point authenticity jump as suspicious. The solution was making $d_1$ the highest-weight dimension and building explicit logic that overrides the suspicious verdict when confirmed artifacts with sufficient confidence exist in the graph. Real growth should be rewarded, not penalized.

The parent UX. Smart Parenting's hardest problem was not technical — it was design. Every early version had too much information. The breakthrough was a single constraint: every output must contain exactly one number, one action, and one deadline. Everything else is noise for a parent who is busy, worried, and doesn't speak the language of standardized testing.
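The "one number, one action, one deadline" constraint can be enforced by the output type itself, as in this sketch. The `ParentDigest` class, its field names, and the rendered sentence format are hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ParentDigest:
    """Hypothetical digest: exactly one number, one action, one deadline."""
    number: str     # the single headline figure, phrased for a parent
    action: str     # the single next step
    deadline: date  # the single date that matters

    def __post_init__(self):
        if not self.number.strip() or not self.action.strip():
            raise ValueError("number and action must both be non-empty")

    def render(self) -> str:
        return (f"{self.number}. Next step: {self.action} "
                f"(by {self.deadline.isoformat()}).")


# Made-up sample values, for illustration only.
digest = ParentDigest("3 of 5 essay drafts finished",
                      "book one mentor session",
                      date(2025, 3, 1))
```

Because the type has no room for a second metric or a chart, any extra detail has to be cut upstream rather than squeezed into the message.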

Linking accountability. The linking flow we settled on (internally "Approach C"), in which the AI suggests a link, the student adds context, and the mentor confirms, required careful UX thinking. The student needed enough agency to feel ownership of their profile, but not enough authority to self-certify. The mentor needed enough context to make a fast, accurate decision. Getting that balance right in the UI took more iteration than any other component.

What We Learned

The most important thing we learned is that trust is a product, not a feature.

Every technical decision in ETESTER — the three-stage signing chain, the append-only audit trail, the causal trace links, the mentor confirmation requirement — exists not because the technology requires it, but because trust requires it. Universities don't need a perfect AI. They need a system where a human being has put their professional reputation on the line for every credential issued.

ETEST has spent 20 years building that reputation. ETEST ONE is the product that makes it programmable.

Built With

- agent

- amazon-web-services

- docker

- fastapi

- langgraph

- llm

- postgresql

- react
