MediScan Live — Bringing Real-Time AI Triage to Rural India

Inspiration

India has roughly one doctor for every 1,456 people — and in rural areas, that ratio is far worse. Community health workers (ASHA workers) are often the only medical presence in villages across Tamil Nadu, Rajasthan, and Andhra Pradesh. They are trained, dedicated, and deeply trusted by their communities — but they work alone, without specialist support, making triage decisions under pressure with limited information.

We asked a simple question: what if every health worker could have a specialist AI looking over their shoulder, in real time, in their own language?

That question became MediScan Live.

The inspiration wasn't abstract. In Coimbatore, we've seen firsthand how a 45-minute drive to the nearest district hospital can be the difference between a minor wound and a life-threatening infection — simply because no one on the ground knew whether to refer the patient immediately or treat and monitor. MediScan Live is built to close that gap.

What It Does

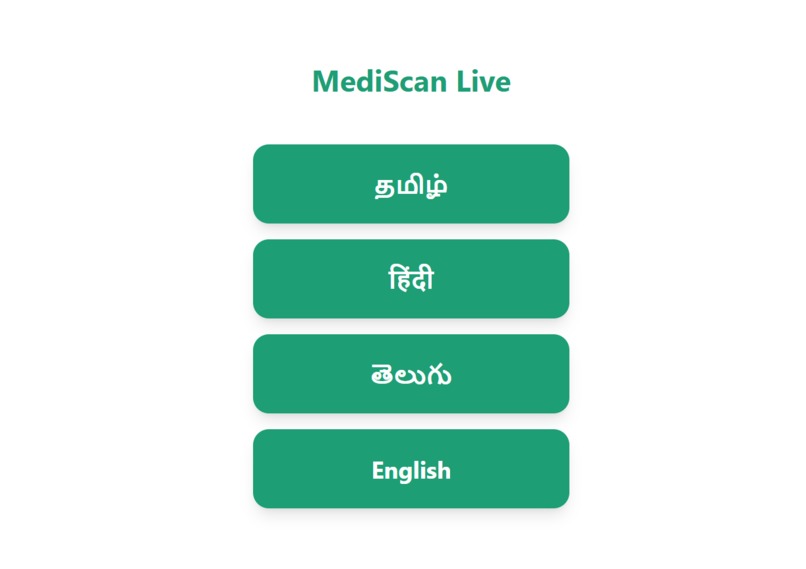

MediScan Live is a real-time multimodal AI agent that a health worker can talk to naturally while pointing their phone camera at a patient. The agent:

- Sees wounds, rashes, skin conditions, and medication labels through the live camera feed

- Hears the health worker's voice in Tamil, Hindi, Telugu, or English

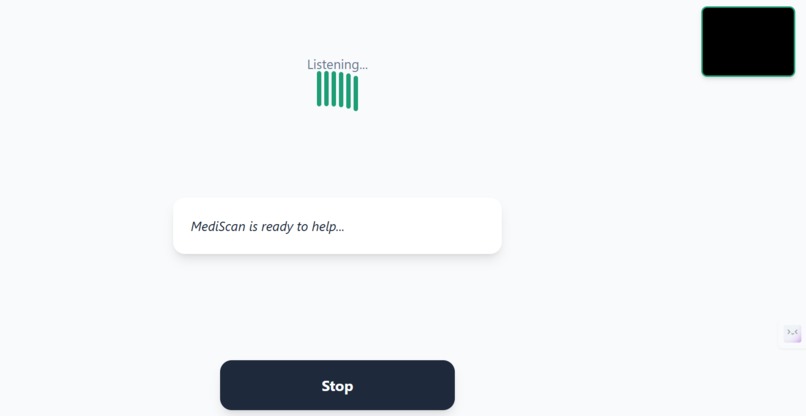

- Responds in the same language with calm, structured triage guidance — in under 2 seconds

- Handles interruptions gracefully — if the worker starts speaking mid-response, the agent immediately stops and listens

- Escalates emergencies automatically — alerting the nearest doctor via SMS when it detects signs of seizure, severe burns, or cardiac events

The system routes each query to one of four specialized sub-agents:

| Sub-Agent | Handles |
|---|---|
| Wound Triage Agent | Cuts, burns, bleeding, injuries |
| Skin Condition Agent | Rashes, swelling, discoloration |
| Medication Agent | Drug labels, dosage, contraindications |
| Emergency Agent | Seizures, cardiac signs, severe trauma |

How We Built It

Architecture

The system is built on five layers, each chosen deliberately:

User Device (React PWA)

↕ WebRTC / WebSocket

Cloud Run Gateway (Node.js)

↕ Gemini Live API streaming

Gemini 2.0 Flash Live

↕ Tool dispatch

Google ADK Multi-Agent Orchestration

↕ Backend services

Vertex AI Search · Firestore · Pub/Sub · Cloud Functions

The Real-Time Streaming Pipeline

The core technical challenge was building a truly bidirectional real-time pipeline — not the typical request-response pattern of most AI apps. We use the Gemini 2.0 Flash Live API, which maintains a persistent WebSocket connection where audio streams in and audio streams back out simultaneously, with the model processing both video frames and speech in a single unified pass.

The latency budget works out as follows. If $L_{total}$ is the total perceived latency:

$$L_{total} = L_{network} + L_{gemini} + L_{tts} + L_{rag}$$

Where:

- $L_{network} \approx 80\text{ms}$ — WebSocket round trip on 4G

- $L_{gemini} \approx 600\text{ms}$ — Gemini Live API first token latency

- $L_{tts} \approx 0\text{ms}$ — Gemini Live outputs audio natively (no separate TTS step)

- $L_{rag} \approx 200\text{ms}$ — Vertex AI Search retrieval, runs in parallel

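$$L_{total} \approx 80 + 600 + 0 + 200 = 880\text{ms} < 1\text{s}$$

Because retrieval runs in parallel with model inference, summing all four terms is a conservative upper bound; the perceived latency is lower whenever retrieval finishes first. This sub-second response time is what makes the conversation feel natural rather than robotic.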
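The arithmetic behind the budget can be checked with a few lines of Python. This is a minimal sketch: the component values come from the list above, while the function names and the "fully overlapped" model (retrieval hidden entirely behind model latency) are our own illustrative assumptions, not measurements.

```python
# Latency components in milliseconds, taken from the budget above.
BUDGET_MS = {"network": 80, "gemini": 600, "tts": 0, "rag": 200}

def sequential_total(budget):
    """Worst case: every stage waits for the previous one to finish."""
    return sum(budget.values())

def overlapped_total(budget):
    """Assumed best case: retrieval runs fully in parallel with the model,
    so only the slower of the two contributes."""
    return budget["network"] + max(budget["gemini"], budget["rag"]) + budget["tts"]

print(sequential_total(BUDGET_MS))  # 880
print(overlapped_total(BUDGET_MS))  # 680
```

Both figures stay under one second, so the budget holds whether or not retrieval overlaps with inference.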

Interruption Handling

The Live API's native interruption model works by detecting voice activity on the input stream while audio is still being output. When a new voice segment begins, the server cancels the response it is generating and tells the client that the turn was interrupted (in the Live API this arrives as an interrupted flag on the server message rather than as a normal turn_complete). The client then drops any audio it has buffered but not yet played and switches to listening mode. We surface this to the UI as an immediate visual state change: the pulsing "speaking" animation snaps back to the "listening" state within one animation frame (~16ms).
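The client-side half of interruption handling can be sketched as a tiny state machine. The class and method names below (PlaybackController, on_model_audio, on_interrupted) are hypothetical and do not come from the Live API or from our codebase. The sketch only illustrates the rule "on interruption, drop unplayed audio and return to listening".

```python
from enum import Enum

class UiState(Enum):
    LISTENING = "listening"
    SPEAKING = "speaking"

class PlaybackController:
    """Tracks UI state and queued audio for one session (hypothetical helper)."""

    def __init__(self):
        self.state = UiState.LISTENING
        self.pending_audio = []  # audio chunks received but not yet played

    def on_model_audio(self, chunk: bytes) -> None:
        # The model is talking: queue the chunk and show the speaking state.
        self.pending_audio.append(chunk)
        self.state = UiState.SPEAKING

    def on_interrupted(self) -> None:
        # Drop everything not yet played so the old answer cannot talk
        # over the health worker, then flip the UI back to listening.
        self.pending_audio.clear()
        self.state = UiState.LISTENING
```

Clearing the queue matters as much as the state change: without it, already-buffered audio would keep playing after the animation had switched.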

Multi-Agent Routing

The ADK root agent classifies incoming intent using a combination of keyword matching and semantic similarity. For a query $q$, the routing score $S$ for each sub-agent $a_i$ is:

$$S(q, a_i) = \alpha \cdot \text{keyword}(q, a_i) + (1 - \alpha) \cdot \text{semantic}(q, a_i)$$

Where $\alpha = 0.6$ (keyword matching weighted higher for speed in low-connectivity scenarios). The agent with the highest $S$ is selected. If $\max_i S(q, a_i) < \theta$ (threshold = 0.4), the router defaults to the Wound Triage Agent as the safest fallback.
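The routing rule translates almost directly into code. In this sketch the values of α and θ and the fallback agent come from the text; the agent identifiers and the assumption that both component scores are already normalized to [0, 1] are illustrative.

```python
ALPHA = 0.6                # keyword weight, from the text
THETA = 0.4                # minimum acceptable score, from the text
FALLBACK = "wound_triage"  # illustrative identifier for the Wound Triage Agent

def routing_score(keyword: float, semantic: float, alpha: float = ALPHA) -> float:
    """S(q, a_i) = alpha * keyword + (1 - alpha) * semantic."""
    return alpha * keyword + (1 - alpha) * semantic

def route(scores: dict) -> str:
    """scores maps agent name -> (keyword score, semantic score)."""
    combined = {name: routing_score(k, s) for name, (k, s) in scores.items()}
    best = max(combined, key=combined.get)
    # Below the threshold no agent is a confident match: use the fallback.
    return best if combined[best] >= THETA else FALLBACK

query_scores = {
    "wound_triage": (0.1, 0.2),
    "skin_condition": (0.8, 0.5),
    "medication": (0.0, 0.1),
    "emergency": (0.0, 0.3),
}
print(route(query_scores))  # skin_condition
```

For the skin-condition entry the score is 0.6 × 0.8 + 0.4 × 0.5 = 0.68, which clears the 0.4 threshold, so no fallback is triggered.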

RAG Pipeline

The medical knowledge base is indexed in Vertex AI Search and retrieved using dense vector search. For each query, the top $k = 3$ most relevant protocol snippets are injected into the model's context window before it generates a response. This grounds every answer in verified clinical guidelines rather than model knowledge alone — critical for a medical application where hallucination has real consequences.
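To make the retrieve-then-inject step concrete, here is a self-contained sketch of top-k dense retrieval. In the real system Vertex AI Search does the indexing and ranking; the cosine-similarity ranking, the two-dimensional toy embeddings, and the prompt layout below are stand-ins we chose for illustration and do not reflect the Vertex AI Search API.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def top_k(query_vec, corpus, k=3):
    """corpus is a list of (snippet_text, embedding) pairs."""
    ranked = sorted(corpus, key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]

def build_prompt(question, snippets):
    """Place the retrieved protocol snippets ahead of the question."""
    context = "\n".join(f"- {s}" for s in snippets)
    return f"Protocols:\n{context}\n\nQuestion: {question}"

# Toy corpus with made-up 2-D embeddings.
corpus = [
    ("burn protocol", [1.0, 0.0]),
    ("rash protocol", [0.0, 1.0]),
    ("bleeding protocol", [0.9, 0.1]),
    ("dosage protocol", [-1.0, 0.0]),
]
snippets = top_k([1.0, 0.0], corpus, k=3)
print(build_prompt("How do I treat a scald?", snippets))
```

With k = 3 the least similar snippet (the dosage protocol here) never reaches the context window, which keeps the injected context short.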

Tech Stack Summary

| Layer | Technology | Why |
|---|---|---|
| Frontend | React PWA + WebRTC | Works on low-end Android, no app install needed |
| Gateway | Node.js + Cloud Run | Non-blocking I/O, scales to zero between sessions |
| AI Core | Gemini 2.0 Flash Live | Only model with native bidirectional audio streaming |
| Orchestration | Google ADK | Multi-agent routing without custom scaffolding |
| Knowledge Base | Vertex AI Search | Managed RAG with medical protocol documents |
| Session Logging | Firestore | Real-time doctor dashboard, natural document schema |
| Emergency Alerts | Pub/Sub + Cloud Functions + Twilio | Decoupled alerting that never delays the agent |
| Translation | Cloud Translation API | Normalizes logs to English for doctor review |
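The "decoupled alerting" row is worth a short illustration. The sketch below uses an in-memory queue and a background thread as stand-ins for Pub/Sub and the Cloud Function; the function names, the message fields, and the `sent` list that replaces the SMS provider are all hypothetical. The point is only that the agent's hot path enqueues and returns, while delivery happens elsewhere.

```python
import queue
import threading

alerts = queue.Queue()  # stand-in for the Pub/Sub topic
sent = []               # stand-in for the SMS provider

def raise_alert(session_id: str, reason: str) -> None:
    """Called on the agent's hot path: enqueue and return immediately."""
    alerts.put_nowait({"session": session_id, "reason": reason})

def alert_worker() -> None:
    """Background consumer, playing the role of the Cloud Function."""
    while True:
        alert = alerts.get()
        if alert is None:  # sentinel value: stop the worker
            break
        sent.append(f"SMS: {alert['reason']} in session {alert['session']}")

worker = threading.Thread(target=alert_worker)
worker.start()
raise_alert("s1", "seizure")  # returns without waiting for delivery
alerts.put(None)
worker.join(timeout=5)
print(sent)  # ['SMS: seizure in session s1']
```

A slow or failing SMS provider then delays only the worker, never the spoken response.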

Challenges We Faced

1. The Streaming Protocol Learning Curve

The Gemini Live API is fundamentally different from a standard API call — it's a stateful, persistent connection that requires careful management of audio chunks, turn boundaries, and session lifecycle. Getting the first clean end-to-end audio-in, audio-out loop working took significant debugging. The key insight was that audio must be sent in small chunks (≤100ms) rather than large buffers, and the session must be explicitly kept alive with periodic keep-alive signals.
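The chunking rule can be sketched as a small generator. The 100ms ceiling comes from the text; the 16kHz sample rate matches the capture rate we mention under low-end device latency, and 16-bit mono PCM is an assumption made for this example.

```python
SAMPLE_RATE_HZ = 16_000  # capture rate used elsewhere in this writeup
BYTES_PER_SAMPLE = 2     # assumption: 16-bit mono PCM
MAX_CHUNK_MS = 100       # ceiling from the text

def chunk_pcm(pcm: bytes, max_ms: int = MAX_CHUNK_MS):
    """Yield consecutive slices of pcm, each at most max_ms long."""
    max_bytes = SAMPLE_RATE_HZ * max_ms // 1000 * BYTES_PER_SAMPLE
    for start in range(0, len(pcm), max_bytes):
        yield pcm[start:start + max_bytes]

# 250ms of silence is 8000 bytes at 16kHz, 16-bit mono.
sizes = [len(c) for c in chunk_pcm(bytes(8000))]
print(sizes)  # [3200, 3200, 1600]
```

Each 3200-byte chunk is exactly 100ms of audio, and the final short chunk is sent as-is rather than padded.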

2. Language Detection in Noisy Environments

Rural health workers often speak in code-mixed language — Tamil sentences with English medical terms, or Hindi with local dialect words. Pure language detection APIs struggled with this. Our solution was to let Gemini Live handle language detection natively (it's remarkably good at code-mixed Indian languages) and use the Translation API only as a normalization layer for logs, not for the live conversation.

3. Balancing Medical Safety with Helpfulness

Every response the agent gives has real stakes. Too cautious, and it becomes useless ("please see a doctor" for every query). Too confident, and it could cause harm. We spent significant time crafting the system prompts for each sub-agent to thread this needle — always providing actionable first-aid steps while consistently signposting when professional referral is needed. The emergency agent in particular required careful calibration of its trigger thresholds.

4. Offline Resilience

Network connectivity in rural India is unreliable. The PWA is built with a Workbox service worker that caches the full app shell, so the UI loads even with no connection. When the WebSocket drops, the app shows a clear "Reconnecting..." state rather than silently failing, and automatically re-establishes the session when connectivity returns.
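One common way to implement automatic re-establishment is exponential backoff. The writeup does not specify our retry schedule, so everything in this sketch (the base delay, the cap, the number of attempts, and the function names) is an assumption chosen for illustration, and it is written in Python rather than the browser JavaScript the PWA actually runs.

```python
import random

def backoff_delays(base_s=1.0, cap_s=30.0, attempts=6, jitter=0.0, rng=random.random):
    """Return the wait before each reconnect attempt, in seconds."""
    delays = []
    for attempt in range(attempts):
        delay = min(cap_s, base_s * 2 ** attempt)
        delay += delay * jitter * rng()  # optional random spread
        delays.append(delay)
    return delays

def reconnect(connect, delays, sleep):
    """Call connect() until it succeeds or the delays run out.

    connect returns True on success; sleep is injected so tests can fake it.
    """
    for delay in delays:
        if connect():
            return True
        sleep(delay)
    return connect()  # one last try after the final wait

print(backoff_delays())  # [1.0, 2.0, 4.0, 8.0, 16.0, 30.0]
```

The cap keeps a long outage from pushing the wait beyond 30 seconds, and a non-zero jitter spreads out reconnects when many devices come back online at once.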

5. Latency on Low-End Devices

The AudioWorklet-based audio capture pipeline had to be carefully tuned. On low-end Android devices, the default buffer sizes caused audio artifacts. We settled on a 128-sample buffer at 16kHz, which gives 8ms chunks — small enough for real-time feel, large enough to avoid dropouts on a ₹6,000 Android phone.
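The buffer arithmetic is easy to verify. The 128-sample buffer and 16kHz rate come from the text; the idea of batching several capture frames into one outgoing chunk of at most 100ms is an illustrative assumption, and both helper names are hypothetical.

```python
def chunk_duration_ms(samples: int, sample_rate_hz: int) -> float:
    """Duration of one capture buffer in milliseconds."""
    return samples * 1000 / sample_rate_hz

def frames_per_send(frame_ms: float, max_send_ms: float) -> int:
    """How many whole capture frames fit in one outgoing chunk."""
    return int(max_send_ms // frame_ms)

frame_ms = chunk_duration_ms(128, 16_000)
print(frame_ms)                        # 8.0
print(frames_per_send(frame_ms, 100))  # 12
```

Twelve 8ms frames make a 96ms chunk, which stays within a 100ms ceiling.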

What We Learned

Building MediScan Live taught us that the hardest part of a real-time AI agent isn't the AI — it's the plumbing. Getting audio to flow cleanly in both directions, handling dropped connections gracefully, managing session state across a distributed system — these engineering challenges consumed far more time than the model integration itself.

We also learned that language is culture. The Tamil prompts we tested with actual ASHA workers in Coimbatore revealed that formal Tamil sounds clinical and cold, while the conversational register the workers actually use feels warm and trustworthy. The agent's personality matters as much as its accuracy.

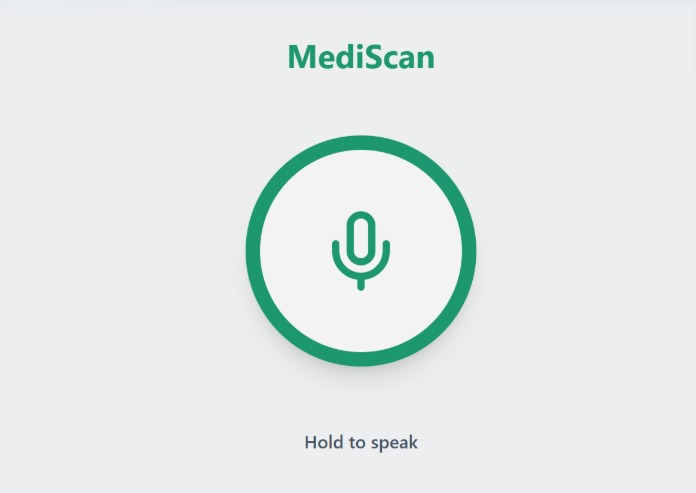

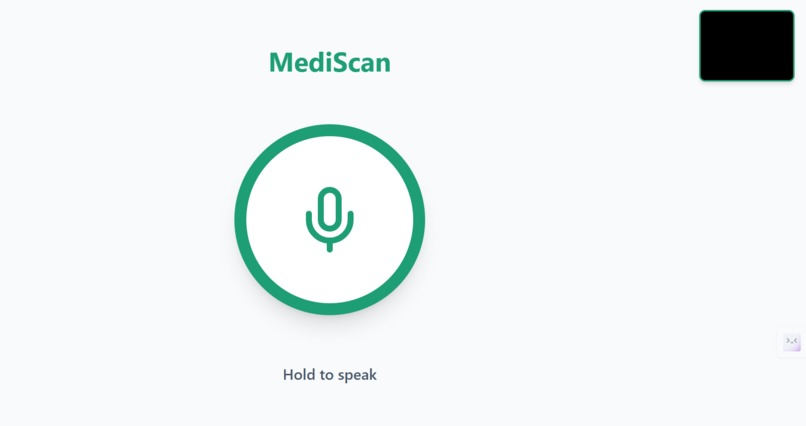

Most importantly, we learned that constraint is a feature. Designing for a health worker with a low-end phone, poor connectivity, and no time to read a manual forced us to strip everything non-essential. The result is a UI with exactly one button — and that simplicity is what makes it actually usable in the field.

What's Next

- Clinical validation with ASHA workers across three districts in Tamil Nadu

- Offline inference mode using a quantized on-device model for zero-connectivity scenarios

- Doctor dashboard — a real-time web interface where supervising physicians can monitor active sessions and intervene when flagged

- Expansion to veterinary triage — the same architecture serves livestock health workers, a significant need in rural agricultural communities

- Integration with India's Ayushman Bharat digital health records for seamless patient history context

Built With

Gemini 2.0 Flash Live API · Google ADK · Cloud Run · Vertex AI Search · Firestore · Pub/Sub · Cloud Functions · Firebase Hosting · React · Node.js · Python · WebRTC · Twilio · Workbox

MediScan Live was built for the Google Live Agent Challenge Hackathon. Every design decision was made with one person in mind — the ASHA worker standing in a village, alone, with a patient in front of her and no doctor nearby.

