Inspiration

Your AI forgets you the moment you close the tab.

That's the core problem. Every conversation starts from zero. But it gets worse — your knowledge isn't just forgotten, it's fragmented. Tasks in one app, notes in another, decisions discussed in chat and never recorded. Nothing connects.

The breaking point for me came when I realized I was making the same architectural decision for the third time — and couldn't remember why I rejected the alternative before. The decision itself was in git. But the reasoning — the trade-offs, the context, the alternatives I considered — gone.

AI assistants today store some facts about you, but they don't capture how you think. When I make a decision, I remember what I weighed, what I rejected, and why. Over time that builds into personal rules and patterns. No AI assistant I've tried does this.

I wanted one system that works quietly behind the scenes — through tools you already use — and builds a real model of your reasoning without you having to explain everything from scratch each time.

What it does

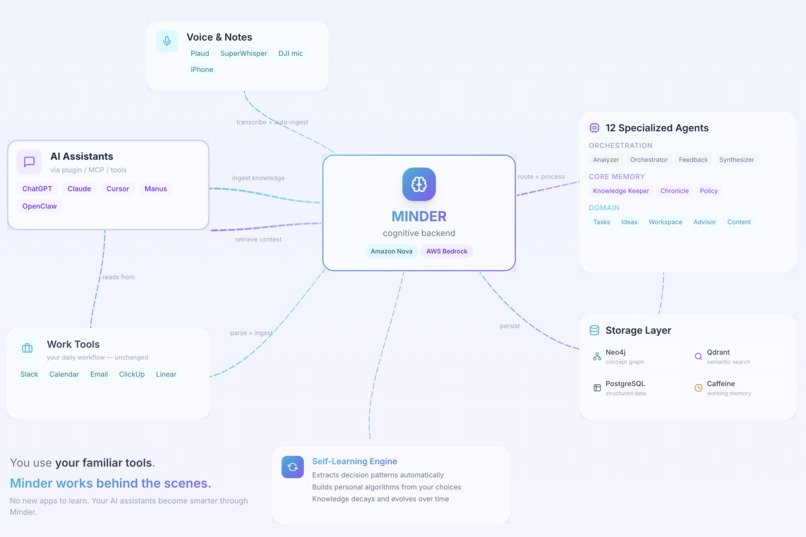

Minder is a cognitive backend — the invisible memory layer behind your existing AI tools.

You don't install a new app. You keep using ChatGPT, Claude, Cursor — whatever you prefer. Minder works behind the scenes through plugins and MCP integrations.

It doesn't just store — it understands. Every message is processed by specialized agents in parallel: extracting tasks, capturing ideas, recording decisions with full reasoning, identifying people and relationships, building a concept graph.

It self-learns. Minder tracks your decision patterns and builds personal algorithms from your choices. After enough similar decisions it notices patterns you didn't explicitly teach it. Knowledge naturally decays and evolves — a decision from 6 months ago weighs less than one from last week.
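
One way to picture the decay is an exponential half-life applied to each stored item's age. The sketch below is an illustration only: the class name `DecayWeight` and the 30-day half-life are assumptions, and the write-up does not say which decay function Minder actually uses.

```java
import java.time.Duration;

final class DecayWeight {
    // Hypothetical half-life; the actual decay rate is not stated in this write-up.
    static final double HALF_LIFE_DAYS = 30.0;

    /** Returns a weight in (0, 1]: 1.0 for a brand-new item, halving every HALF_LIFE_DAYS. */
    static double weight(Duration age) {
        // Treat negative ages (clock skew) as zero so the weight never exceeds 1.0.
        double ageDays = Math.max(0.0, age.toMillis() / 86_400_000.0);
        return Math.pow(0.5, ageDays / HALF_LIFE_DAYS);
    }
}
```

With these assumed numbers, a decision from last week keeps most of its weight while one from six months ago contributes only a small fraction. A retrieval score could then be multiplied by `weight(age)` before ranking.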

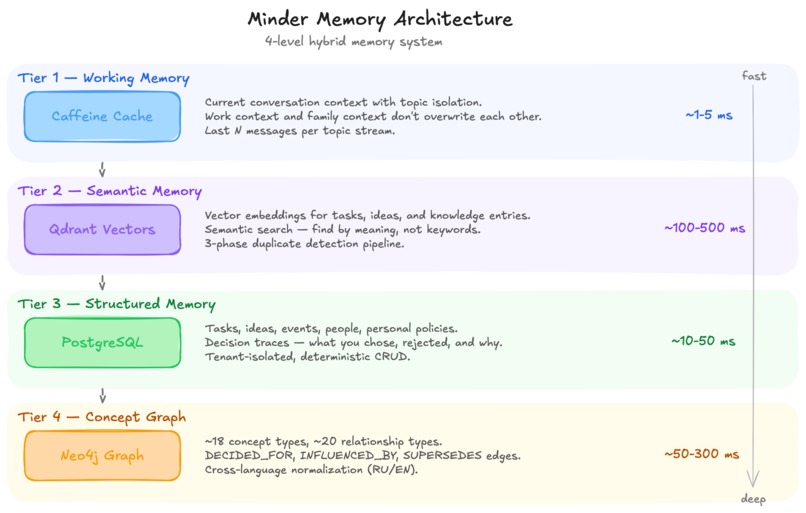

It's deterministic where it matters. Tasks live in PostgreSQL, not in an LLM's context window. Concepts live in Neo4j, not in a prompt. The LLM reasons; the database remembers.

Live today:

- Knowledge Capture — Chat with any AI assistant, Minder remembers permanently

- Voice-to-Knowledge — Voice recordings → transcription → structured knowledge

- Context-Aware AI — Your AI tools retrieve personal context before answering

- Decision Tracking — What you decided, why, what alternatives you considered

How I built it

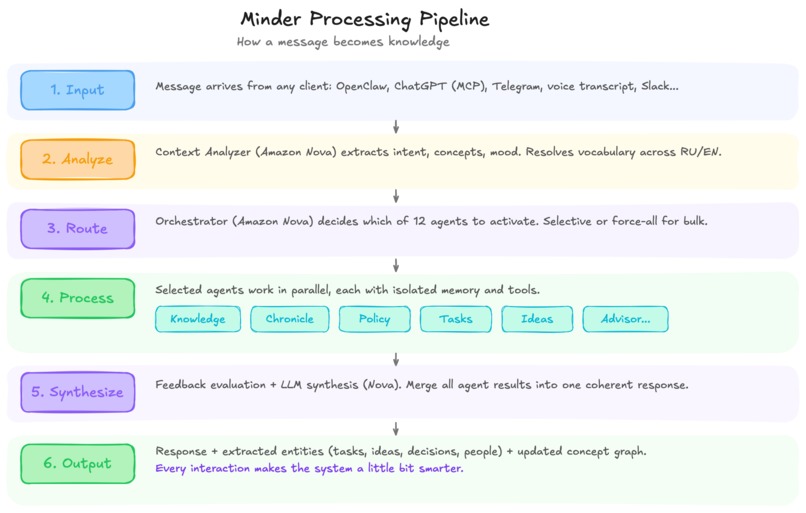

Multi-Agent Architecture

Different knowledge needs different processing. A task manager needs precision. An idea lab needs loose association. You can't optimize one prompt for both.

Minder uses a multi-layer agent architecture — orchestration agents handle routing and quality control, core memory agents manage semantic, episodic, and procedural memory, and domain agents cover specialized areas like task management, idea development, and personal advisory.

Amazon Nova Integration

Nova is the reasoning backbone:

- Nova Pro v2 — Powers the Orchestrator. Two-phase reasoning: analyze input → route to agents. Strong reasoning capabilities are essential for making correct routing decisions.

- Nova Lite v2 — Powers domain and core agents. When multiple agents run in parallel, speed matters. Handles extraction, classification, and generation efficiently.

- Titan Embed Text V2 — 1024-dim embeddings for the concept graph, knowledge storage, semantic search, and duplicate detection. Called more often than any other model in the system.
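
The two-phase flow (analyze, then route) can be sketched roughly as follows. This is not Minder's code: `TwoPhaseOrchestrator`, the `Intent` values, and `keywordAnalyzer` are hypothetical names, and the analyzer is a plain function standing in for the model-backed analysis step. No Bedrock API is called here.

```java
import java.util.ArrayList;
import java.util.EnumSet;
import java.util.List;
import java.util.Locale;
import java.util.Map;
import java.util.Set;
import java.util.function.Function;

final class TwoPhaseOrchestrator {
    // Hypothetical intent labels; the real routing targets are not listed in this write-up.
    enum Intent { TASK, IDEA, DECISION }

    private final Function<String, Set<Intent>> analyzer;        // phase 1: analyze input
    private final Map<Intent, Function<String, String>> agents;  // phase 2: routing targets

    TwoPhaseOrchestrator(Function<String, Set<Intent>> analyzer,
                         Map<Intent, Function<String, String>> agents) {
        this.analyzer = analyzer;
        this.agents = agents;
    }

    /** Runs phase 1, then sends the input to every agent registered for a detected intent. */
    List<String> handle(String input) {
        Set<Intent> intents = analyzer.apply(input);
        List<String> outputs = new ArrayList<>();
        for (Intent intent : intents) {
            Function<String, String> agent = agents.get(intent);
            if (agent != null) {
                outputs.add(agent.apply(input));
            }
        }
        return outputs;
    }

    /** Keyword stand-in for the model-backed analysis, useful only for local experiments. */
    static Set<Intent> keywordAnalyzer(String input) {
        String text = input.toLowerCase(Locale.ROOT);
        Set<Intent> found = EnumSet.noneOf(Intent.class);
        if (text.contains("need to")) found.add(Intent.TASK);
        if (text.contains("what if")) found.add(Intent.IDEA);
        if (text.contains("decided")) found.add(Intent.DECISION);
        return found;
    }
}
```

Keeping analysis and routing as separate steps means the expensive reasoning call happens once per input, and the cheaper agents only run when their intent was detected.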

Storage: Right Tool for Each Job

| Storage | Purpose |
|---|---|
| Neo4j | Concept Graph with typed relationships (DECIDED_FOR, EVOLVED_INTO, INFLUENCED_BY) |
| Qdrant | Vector embeddings: semantic search, duplicate detection, concept similarity |
| PostgreSQL | Deterministic storage: tasks, ideas, events, tenant isolation |
| Caffeine | Working memory: session context with topic isolation |

Plugin Ecosystem

- OpenClaw Plugin — Full integration with the OpenClaw AI agent platform: tools for querying memory, remembering information, managing tasks/ideas, plus passive hooks for automatic context injection

- Voice Plugin — Voice recorders → automatic transcription → structured knowledge extraction

- MCP/Tools — Any AI assistant supporting MCP or function calling can connect via REST API

Tech Stack

| Component | Choice |
|---|---|
| Runtime | Java 21, Spring Boot 3.5, Spring WebFlux (reactive) |
| AI | Amazon Nova Pro v2, Nova Lite v2, Titan Embed V2 via AWS Bedrock |
| Graph DB | Neo4j |
| Vector DB | Qdrant |
| SQL DB | PostgreSQL 15 |
| Demo Frontend | React 19, Vite, Tailwind CSS, Framer Motion |

Challenges I ran into

Cold start problem. An empty Minder knows nothing. The onboarding had to seed enough knowledge to be useful without feeling like homework. Solution: AI-to-AI onboarding — generate a profile via ChatGPT/Claude, feed it to Minder in one step.

Multi-agent coordination. Multiple agents processing the same input can produce conflicts. A dedicated feedback evaluation step reviews outputs and can reroute if quality is insufficient. Getting this stable without infinite loops required careful design.
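
A simple way to keep a reroute loop from running forever is a hard cap on attempts. The sketch below assumes a hypothetical `FeedbackLoop` helper where each candidate agent is tried in order and an evaluator accepts or rejects its output. The actual evaluation step in Minder is likely richer than a single predicate.

```java
import java.util.List;
import java.util.Optional;
import java.util.function.Function;
import java.util.function.Predicate;

final class FeedbackLoop {
    /**
     * Tries candidate agents in order until the evaluator accepts an output.
     * Stops after maxAttempts (or when candidates run out), so it always terminates.
     */
    static Optional<String> run(String input,
                                List<Function<String, String>> candidates,
                                Predicate<String> accept,
                                int maxAttempts) {
        int attempts = Math.min(maxAttempts, candidates.size());
        for (int i = 0; i < attempts; i++) {
            String output = candidates.get(i).apply(input);
            if (accept.test(output)) {
                return Optional.of(output);
            }
        }
        // Nothing passed review within the budget; the caller decides how to degrade.
        return Optional.empty();
    }
}
```

Returning an empty `Optional` instead of retrying again forces the caller to handle the "no acceptable output" case explicitly.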

Concept vocabulary normalization. Early versions created duplicates: "PostgreSQL", "postgres", "PG database" — three nodes for one thing. Multi-level vocabulary resolution with alias mapping and cross-language support (Russian/English) fixed this.
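
The alias-mapping level can be sketched as a lookup from a normalized key to one canonical name. `ConceptVocabulary` and its methods are hypothetical, and this covers only exact alias matches. The other resolution levels mentioned above are not shown.

```java
import java.util.HashMap;
import java.util.Locale;
import java.util.Map;

final class ConceptVocabulary {
    private final Map<String, String> aliasToCanonical = new HashMap<>();

    /** Registers a canonical concept name together with any known aliases. */
    void register(String canonical, String... aliases) {
        aliasToCanonical.put(key(canonical), canonical);
        for (String alias : aliases) {
            aliasToCanonical.put(key(alias), canonical);
        }
    }

    /** Returns the canonical name for a known alias, or the trimmed input if unknown. */
    String resolve(String raw) {
        return aliasToCanonical.getOrDefault(key(raw), raw.trim());
    }

    // Case-insensitive, whitespace-insensitive lookup key.
    private static String key(String s) {
        return s.trim().toLowerCase(Locale.ROOT).replaceAll("\\s+", " ");
    }
}
```

After `register("PostgreSQL", "postgres", "PG database")`, all three spellings resolve to the single node name `PostgreSQL`. Aliases in another language can be registered in the same map, since the key only lowercases and collapses whitespace.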

Latency budget. Multiple LLM calls per interaction through Bedrock add up. Async processing with real-time status polling was essential. Nova Lite's speed made parallel agent execution feasible within reasonable latency.
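
The submit-then-poll pattern can be sketched with plain `java.util.concurrent`. This is an assumption-laden illustration: `IngestJobs`, its `Status` values, and the agent map are hypothetical, and the real system is built on Spring WebFlux rather than raw `CompletableFuture`.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.UUID;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ExecutorService;
import java.util.function.Function;

final class IngestJobs {
    enum Status { RUNNING, DONE, FAILED, UNKNOWN }

    private final Map<String, CompletableFuture<Map<String, String>>> jobs = new ConcurrentHashMap<>();
    private final ExecutorService pool;

    IngestJobs(ExecutorService pool) {
        this.pool = pool;
    }

    /** Starts every agent in parallel and returns a job id immediately. */
    String submit(String input, Map<String, Function<String, String>> agents) {
        Map<String, CompletableFuture<String>> perAgent = new LinkedHashMap<>();
        agents.forEach((name, agent) ->
                perAgent.put(name, CompletableFuture.supplyAsync(() -> agent.apply(input), pool)));

        // Completes once all agents finish; join() below cannot block at that point.
        CompletableFuture<Map<String, String>> all = CompletableFuture
                .allOf(perAgent.values().toArray(new CompletableFuture<?>[0]))
                .thenApply(ignored -> {
                    Map<String, String> results = new LinkedHashMap<>();
                    perAgent.forEach((name, future) -> results.put(name, future.join()));
                    return results;
                });

        String id = UUID.randomUUID().toString();
        jobs.put(id, all);
        return id;
    }

    /** Non-blocking status check, suitable for a polling endpoint. */
    Status status(String id) {
        CompletableFuture<?> job = jobs.get(id);
        if (job == null) return Status.UNKNOWN;
        if (!job.isDone()) return Status.RUNNING;
        return job.isCompletedExceptionally() ? Status.FAILED : Status.DONE;
    }

    /** Blocks until the job finishes and returns each agent's output by name. */
    Map<String, String> result(String id) {
        CompletableFuture<Map<String, String>> job = jobs.get(id);
        if (job == null) throw new IllegalArgumentException("unknown job id: " + id);
        return job.join();
    }
}
```

Because the agents run concurrently, total latency is closer to the slowest agent than to the sum of all calls, and the client only ever waits on cheap `status` checks.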

Accomplishments that I'm proud of

- It actually works end-to-end. Not a prototype or a slide deck — a running system with real tenant isolation, real persistence, real multi-agent coordination. You can ingest a meeting transcript and query it minutes later with full concept graph enrichment.

- AI-to-AI onboarding. Using one AI to generate a structured profile that another AI ingests turned out to be the smoothest onboarding UX I've ever built. Zero forms, zero manual data entry.

- Invisible integration. Users don't interact with Minder directly. Their existing AI assistants just... get smarter. The OpenClaw plugin injects personal context before every response and auto-ingests every conversation. The user never notices.

- Decision traces. This is the feature I built Minder for in the first place. Not just "user chose X" but the full chain — what was considered, what was rejected, why. And it actually captures this from natural conversation without explicit prompting.

What I learned

- Multi-agent systems need explicit contracts. Agent autonomy is great until two agents update the same entity differently. Typed results with explicit verdicts (UNIQUE, ENRICH, DUPLICATE) made it predictable.

- Nova Pro's reasoning shines in orchestration. The two-phase orchestrator (analyze → route) works remarkably well — consistent, correct routing decisions.

- Embeddings are the unsung hero. Titan Embed V2 powers duplicate detection, semantic search, concept similarity, and retrieval. It's the most-called model in the system.

- The best UX for AI tools is invisible UX. Users shouldn't have to learn a new app. A plugin architecture that integrates with existing tools gets far more engagement than a standalone UI.
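
As an illustration of the typed verdicts mentioned in the first point, the sketch below maps embedding similarity to UNIQUE, ENRICH, or DUPLICATE. The verdict names come from the text above. Deriving them from cosine similarity, the class name `DedupVerdict`, and both thresholds are assumptions made for this example.

```java
final class DedupVerdict {
    enum Verdict { UNIQUE, ENRICH, DUPLICATE }

    // Hypothetical thresholds; real values would need tuning against actual data.
    static final double DUPLICATE_THRESHOLD = 0.95;
    static final double ENRICH_THRESHOLD = 0.80;

    /** Cosine similarity of two equal-length vectors; 0.0 if either has zero length. */
    static double cosine(float[] a, float[] b) {
        if (a.length != b.length) {
            throw new IllegalArgumentException("vectors must have the same dimension");
        }
        double dot = 0.0, normA = 0.0, normB = 0.0;
        for (int i = 0; i < a.length; i++) {
            dot += (double) a[i] * b[i];
            normA += (double) a[i] * a[i];
            normB += (double) b[i] * b[i];
        }
        if (normA == 0.0 || normB == 0.0) return 0.0;
        return dot / (Math.sqrt(normA) * Math.sqrt(normB));
    }

    /** Classifies a candidate against its nearest existing neighbour. */
    static Verdict classify(float[] candidate, float[] nearestExisting) {
        double similarity = cosine(candidate, nearestExisting);
        if (similarity >= DUPLICATE_THRESHOLD) return Verdict.DUPLICATE;
        if (similarity >= ENRICH_THRESHOLD) return Verdict.ENRICH;
        return Verdict.UNIQUE;
    }
}
```

Returning an enum rather than a free-form string gives downstream agents a closed set of outcomes to handle, which is the kind of explicit contract the first lesson describes.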

What's next for Minder

- Decision pattern mining — automatic discovery of personal decision patterns across domains

- Cognitive Replay — retrospective decision analysis with new data

- Team Knowledge Sync — personal + corporate instances sharing across boundaries

- Work tool integration — automatic ingestion from Slack, Calendar, Email

- Mobile voice companion — always-on capture with real-time ingestion

Try the live demo: platform.minder.host

Built With

- amazon-bedrock

- amazon-nova

- caffeine

- docker

- java

- neo4j

- postgresql

- qdrant

- spring-ai

- spring-webflux

- whisper
