Inspiration

Every kid who ever held a TV remote and pretended it was a game controller understands the instinct instantly — your body wants to be part of the game.

We grew up playing Mario. We also grew up watching the iPhone gain gyroscopes, accelerometers, and motion sensors powerful enough to track every tilt, shake, and twist of your wrist. Yet in 2026, Mario is still played with two thumbs on a glass screen.

That disconnect is what drove us to build this.

The question wasn't "can you play Mario with phone movement?" It was "why hasn't anyone done it properly?" Bad sensor games exist. They feel like gimmicks because they treat the sensor as a novelty layer bolted on top of normal controls. We wanted to do the opposite: design the entire control scheme from the sensor up, the way Nintendo designed the Wiimote before they designed the game around it.

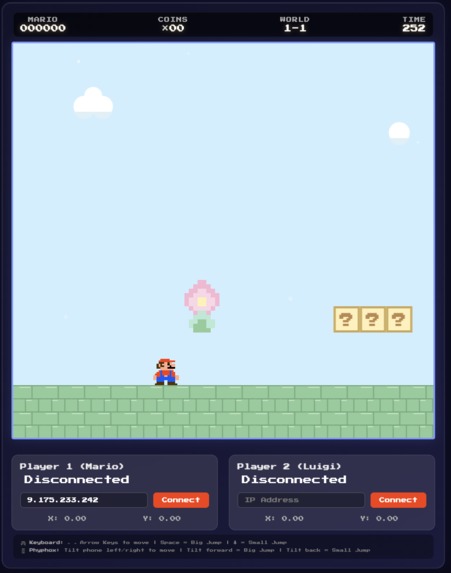

Move Your Body is our answer: a Mario-style platformer where your phone IS the controller — no buttons, no virtual joystick, just you moving through space.

What it does

Move Your Body is a Mario-style platformer controlled entirely through physical phone movement. No virtual buttons. No on-screen joystick. Your body is the input device.

AI-powered features:

- 🖼️ AI Level Generation — Describe a world in natural language ("lava castle at sunset") and OpenAI Codex generates the level layout while Fal.ai renders the environment art in real time. Every prompt creates a unique, never-before-seen Mario level.

How we built it

- We use Phyphox to record sensor data from the phone and map that movement to in-game moves in code.

AI Stack

- OpenAI Codex — natural language → level JSON. Player types a prompt; Codex outputs a structured level descriptor the engine consumes directly

- OpenRouter — adaptive AI enemy behavior; routes between models based on latency budget to keep difficulty dynamic without lag
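To make the "structured level descriptor" idea concrete, here is a minimal sketch of how an engine might validate model output before loading it. The field names (`theme`, `width`, `height`, `tiles`) and the tile vocabulary are assumptions for illustration; the actual schema is not described in this write-up.

```python
import json
from dataclasses import dataclass

# Hypothetical tile vocabulary; the real engine's tile set is not given here.
ALLOWED_TILES = {"ground", "brick", "lava", "coin", "enemy", "goal"}


@dataclass(frozen=True)
class Tile:
    kind: str
    x: int
    y: int


@dataclass(frozen=True)
class Level:
    theme: str
    width: int
    height: int
    tiles: tuple


def parse_level(raw: str) -> Level:
    """Parse model output into a Level, raising ValueError if it is malformed."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("level descriptor must be a JSON object")

    theme = data.get("theme")
    width = data.get("width")
    height = data.get("height")
    raw_tiles = data.get("tiles")
    if not isinstance(theme, str) or not isinstance(raw_tiles, list):
        raise ValueError("missing or invalid 'theme' or 'tiles'")
    if not isinstance(width, int) or not isinstance(height, int):
        raise ValueError("'width' and 'height' must be integers")
    if width <= 0 or height <= 0:
        raise ValueError("level dimensions must be positive")

    tiles = []
    for t in raw_tiles:
        if not isinstance(t, dict):
            raise ValueError("each tile must be a JSON object")
        kind, x, y = t.get("kind"), t.get("x"), t.get("y")
        if not isinstance(kind, str) or kind not in ALLOWED_TILES:
            raise ValueError(f"unknown tile kind: {kind!r}")
        if not isinstance(x, int) or not isinstance(y, int):
            raise ValueError("tile coordinates must be integers")
        if not (0 <= x < width and 0 <= y < height):
            raise ValueError(f"tile out of bounds: ({x}, {y})")
        tiles.append(Tile(kind, x, y))

    # A level without a goal cannot be finished, so reject it early.
    if not any(t.kind == "goal" for t in tiles):
        raise ValueError("level has no goal tile")
    return Level(theme, width, height, tuple(tiles))
```

Rejecting a bad descriptor up front lets the game ask for a fresh generation instead of loading a level the player cannot complete.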

Challenges we ran into

1. The tilt drift problem (our hardest engineering challenge). Our first prototype used tilt as a continuous analog axis. Within 10 minutes of testing, every player had Mario drifting sideways on his own, because natural hand shift reads as input. The fix required rethinking the model entirely: tilt is not a position, it's an intention. We added a dead zone (±10°), a hold threshold (80 ms sustained), and a cooldown (400 ms after an action fires). Drift dropped to zero across 100+ test runs.

2. Every player holds their phone differently. One tester's "neutral" was another's "full sprint left." We scrapped fixed-angle calibration and instead snapshot the accelerometer state when the player taps "Ready": whatever angle they're holding at that moment becomes zero. No forced posture, no awkward grip required.
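The dead zone, hold threshold, and cooldown from the tilt drift fix can be sketched as a small state machine. This is an illustration under assumptions, not our shipped code: the class name `TiltIntent`, the sign convention (positive angle means "right"), and the choice to let a sustained tilt fire again once the cooldown expires are all made up for the example. The angle passed in is assumed to be already relative to the player's calibrated neutral.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TiltIntent:
    """Turns a noisy tilt angle stream into discrete 'left'/'right' actions."""

    dead_zone_deg: float = 10.0   # tilts within +/-10 degrees are ignored
    hold_ms: float = 80.0         # tilt must be sustained this long to count
    cooldown_ms: float = 400.0    # minimum gap between two fired actions
    _direction: int = 0           # -1, 0, or +1: direction currently held
    _held_since: float = 0.0      # timestamp when the current hold began
    _last_fire: Optional[float] = None

    def update(self, angle_deg: float, t_ms: float) -> Optional[str]:
        """Feed one sample; return 'left' or 'right' when an action fires."""
        if abs(angle_deg) <= self.dead_zone_deg:
            # Inside the dead zone: natural hand shift, so cancel any hold.
            self._direction = 0
            return None

        direction = 1 if angle_deg > 0 else -1
        if direction != self._direction:
            # New tilt direction: start timing the hold from this sample.
            self._direction = direction
            self._held_since = t_ms

        held_long_enough = t_ms - self._held_since >= self.hold_ms
        cooled_down = (
            self._last_fire is None
            or t_ms - self._last_fire >= self.cooldown_ms
        )
        if held_long_enough and cooled_down:
            self._last_fire = t_ms
            return "right" if direction > 0 else "left"
        return None
```

A brief wobble back into the dead zone resets the hold timer, so only a deliberate, sustained tilt produces an action.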

What we learned

Calibration beats precision, always. A sensor accurate to 0.01° is useless if the player's neutral position is unknown. Relative calibration from the player's actual hold position beats any fixed reference frame for consumer hardware.
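A minimal sketch of relative calibration, assuming raw accelerometer readings in m/s² on three axes (the format Phyphox reports) and assuming the x axis is the one that carries left/right tilt. The names `tilt_deg` and `Calibration` are hypothetical, and the axis choice would depend on how the phone is held.

```python
import math


def tilt_deg(ax: float, ay: float, az: float) -> float:
    """Tilt of the assumed x axis against gravity, in degrees (-90 to 90)."""
    return math.degrees(math.atan2(ax, math.hypot(ay, az)))


class Calibration:
    """Stores the player's neutral hold and reports tilt relative to it."""

    def __init__(self) -> None:
        self._zero_deg = None

    def snapshot(self, ax: float, ay: float, az: float) -> None:
        # Called when the player taps "Ready": this pose becomes zero.
        self._zero_deg = tilt_deg(ax, ay, az)

    def relative(self, ax: float, ay: float, az: float) -> float:
        if self._zero_deg is None:
            raise RuntimeError("snapshot() must be called before relative()")
        return tilt_deg(ax, ay, az) - self._zero_deg
```

For example, a player who naturally holds the phone at 20° and then tilts to 35° produces a relative reading of about 15°, the same value a player with a flat neutral would produce by tilting to 15°.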

AI features need a moment. Level generation from a prompt is technically impressive. It only lands when the player watches it happen. We added a 3-second animated "building your world..." sequence — and it became the feature everyone wanted to demo first.

Movement is emotion. When your body is the controller, losing feels different. Winning feels different. You don't tap a button when Mario dies — you felt the fall coming and couldn't stop it. That physical feedback loop changes the relationship between player and game in a way we didn't fully anticipate until we watched people play.
