Inspiration

Technical hiring is fundamentally constrained by scale.

Organizations often receive hundreds of applications for a single role, which makes meaningful live interviews with every candidate impractical. As a result, many companies fall back on static coding tests, asynchronous recordings, or shallow AI interview tools.

These approaches remove the most important element of technical evaluation: context.

They cannot see a candidate’s workspace, follow their reasoning in real time, or react to how a solution evolves while it is being written.

We built Owlyn to solve this problem.

Owlyn is an autonomous multimodal agent ecosystem that conducts live interviews, provides real-time assistance, and generates structured technical evaluation reports.

Instead of static prompts or delayed AI responses, Owlyn can see, hear, and reason about a candidate’s workspace in real time. Built on Gemini Live and multimodal AI agents, it holds natural voice interviews while observing how the candidate codes.

The same platform covers three use cases: live technical interviews, interview preparation, and real-time assistance.

What it does

Owlyn enables organizations and candidates to interact with AI in a fully multimodal technical environment.

The system analyzes:

- voice conversations

- screen activity

- coding behavior

- whiteboard interactions

- webcam signals

From these inputs, Owlyn can conduct interviews, provide assistance, monitor sessions, and generate evaluation reports.

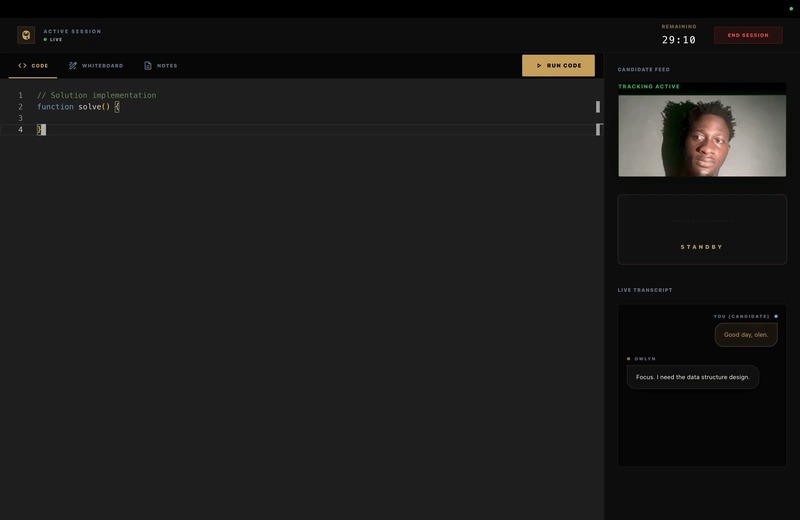

Live Technical Interviews

Owlyn conducts real-time technical interviews using Gemini Live with sub-second conversational latency.

Candidates interact with the AI interviewer in a professional workspace that includes:

- Monaco code editor

- whiteboard canvas

- notes panel

As the candidate writes code or explains their reasoning, the system observes their implementation and asks relevant follow-up questions.

The interviewer reacts dynamically to the candidate’s logic and reasoning process rather than relying on static questions.

Practice Mode

Owlyn includes a Practice Mode designed for candidates preparing for real interviews.

Users can configure their own session by selecting:

- topic or skill area

- difficulty level

- session duration

- preferred spoken language

The system then generates a technical session that mirrors the real interview environment.
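The configuration step above could be modelled as a small typed object with a validation pass. This is only a sketch, not Owlyn's actual schema: the field names, the difficulty labels, and the 10 to 120 minute range are all assumptions made for illustration.

```typescript
// Hypothetical shape of a Practice Mode configuration (all names are assumptions).
type Difficulty = "easy" | "medium" | "hard";

interface PracticeConfig {
  topic: string;           // topic or skill area, e.g. "graphs"
  difficulty: Difficulty;  // difficulty level
  durationMinutes: number; // session duration
  spokenLanguage: string;  // preferred spoken language, e.g. "en"
}

// Returns a list of human-readable problems; an empty list means the config is usable.
function validatePracticeConfig(config: PracticeConfig): string[] {
  const problems: string[] = [];
  if (config.topic.trim().length === 0) {
    problems.push("topic must not be empty");
  }
  // Assumed bounds; the real limits are not documented.
  const d = config.durationMinutes;
  if (!Number.isInteger(d) || d < 10 || d > 120) {
    problems.push("durationMinutes must be a whole number between 10 and 120");
  }
  if (config.spokenLanguage.trim().length === 0) {
    problems.push("spokenLanguage must not be empty");
  }
  return problems;
}
```

Returning a list of problems rather than throwing on the first one lets a settings form show every issue at once.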

Practice Mode uses the same multimodal infrastructure as enterprise interviews, allowing candidates to interact with the AI interviewer while coding, drawing diagrams, or explaining solutions.

This gives users the opportunity to practice in a realistic interview environment before entering a real session.

Assistant Mode

Owlyn also functions as a persistent multimodal assistant.

In Assistant Mode, the AI runs as a floating widget that stays alongside the user’s IDE and terminal.

The assistant can:

- observe the screen workspace

- listen to voice prompts

- analyze code context

- provide debugging insights

- explain architecture or implementation decisions

This creates an experience similar to pair programming with an AI collaborator that understands your working environment.

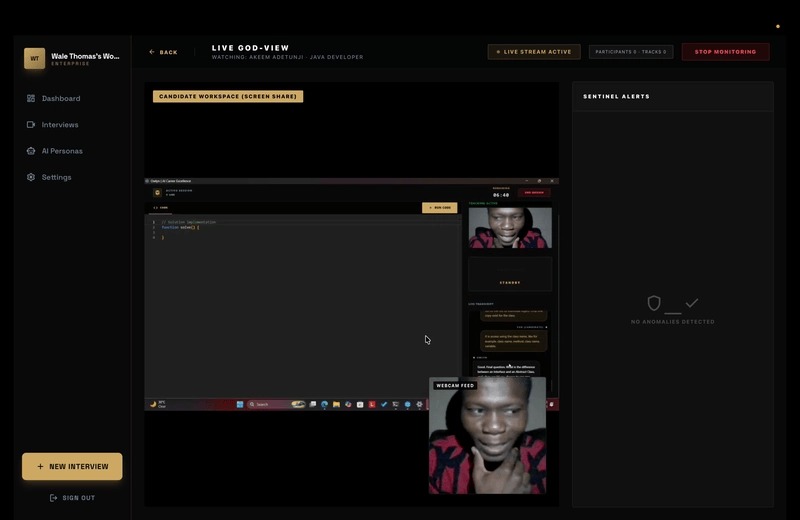

Monitoring Dashboard

Organizations can observe active interviews through a Monitoring Dashboard.

Recruiters or hiring managers can join a session as silent observers, gaining visibility into:

- the candidate’s coding workspace

- live transcripts

- conversation flow

- security alerts

When the Sentinel agents detect suspicious activity, the monitoring interface surfaces an alert immediately so the team can intervene.

This gives hiring teams real-time visibility without disrupting the interview process.

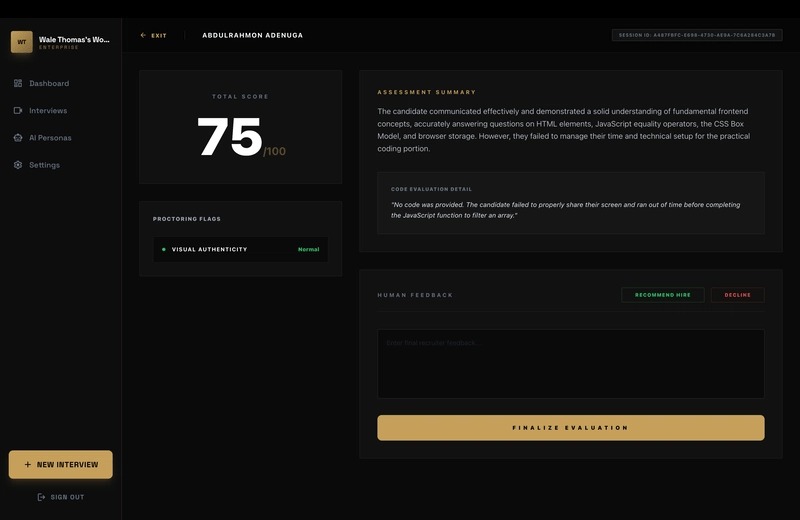

Recruitment Dashboard

Owlyn also includes a Recruitment Management Dashboard for managing the hiring pipeline.

Teams can:

- track candidates across interview stages

- configure interview parameters

- review AI-generated technical reports

- collaborate internally on candidate evaluations

While Owlyn performs automated evaluation, human recruiters remain responsible for the final hiring decision.

Talent Pool

All completed interviews are stored in a centralized Talent Pool.

This system allows organizations to:

- compare candidates side by side

- filter candidates by skill score or role

- review transcripts and code evolution

- analyze hiring pipeline performance

Because every candidate is evaluated using the same criteria, the Talent Pool creates a more objective way to identify strong technical talent across large candidate pools.
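Filtering by skill score or role could look roughly like the sketch below. The record fields and the 0 to 100 score scale are assumptions; the writeup does not describe the actual Talent Pool schema or query layer.

```typescript
// Hypothetical Talent Pool record; field names and the score scale are assumptions.
interface CandidateRecord {
  name: string;
  role: string;
  skillScore: number; // assumed to be on a 0-100 scale
}

interface PoolFilter {
  role?: string;
  minSkillScore?: number;
}

// Keeps records that match every provided criterion, then sorts best-first.
// The input array is left untouched because filter() returns a new array.
function filterPool(pool: CandidateRecord[], filter: PoolFilter): CandidateRecord[] {
  return pool
    .filter((c) => filter.role === undefined || c.role === filter.role)
    .filter((c) => filter.minSkillScore === undefined || c.skillScore >= filter.minSkillScore)
    .sort((a, b) => b.skillScore - a.skillScore);
}
```

Calling `filterPool(pool, { role: "backend", minSkillScore: 70 })` would return only backend candidates scoring at least 70, highest score first.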

Automated Technical Evaluation

After each session, Owlyn generates a structured technical report based on:

- transcripts

- coding behavior

- reasoning patterns

- interaction signals

The report includes structured insights into:

- problem-solving ability

- code quality

- communication clarity

- algorithmic reasoning

These reports help recruiters quickly evaluate technical ability at scale.
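One way to picture such a report is as a typed record with one score per dimension. The sketch below is an assumption throughout: the 1 to 5 scale, the field names, and the unweighted mean used for an overall figure are illustrative, and the writeup does not say how Owlyn combines the dimensions.

```typescript
// Hypothetical report shape; the 1-5 scale and field names are assumptions.
interface TechnicalReport {
  candidateId: string;
  scores: {
    problemSolving: number;
    codeQuality: number;
    communicationClarity: number;
    algorithmicReasoning: number;
  };
  summary: string;
}

// Unweighted mean of the four dimensions, rounded to one decimal place.
// Equal weighting is an illustrative choice, not Owlyn's documented method.
function overallScore(report: TechnicalReport): number {
  const s = report.scores;
  const mean =
    (s.problemSolving + s.codeQuality + s.communicationClarity + s.algorithmicReasoning) / 4;
  return Math.round(mean * 10) / 10;
}
```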

How we built it

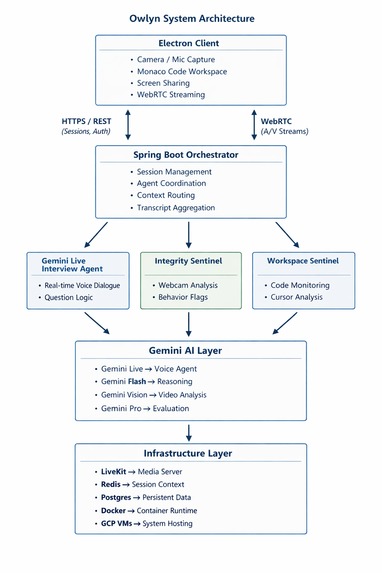

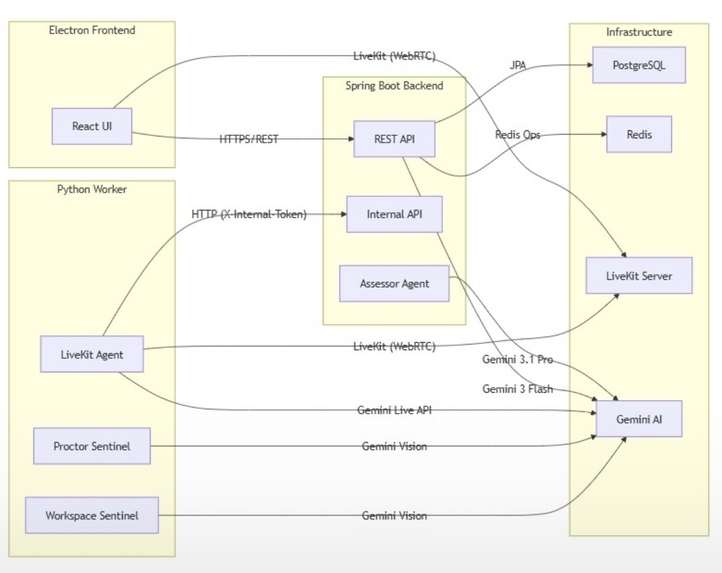

Owlyn is built as a distributed multimodal AI system powered by specialized agents.

Client Layer

The desktop application is built using:

- Electron

- React

- Monaco Editor

The client captures:

- microphone audio

- webcam video

- screen workspace

- coding activity

These signals are sent to the backend over WebRTC and REST APIs.

Real-Time Media Infrastructure

We use LiveKit to handle real-time audio and video streaming between the client and AI agents.

This allows Owlyn to maintain natural voice conversations with Gemini Live while keeping response latency below one second.

Backend Orchestration Layer

The backend is built using Spring Boot and acts as the central orchestrator.

It manages:

- session lifecycle

- candidate data

- transcript synchronization

- agent communication

All multimodal signals pass through this layer to ensure a unified system state.
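Session lifecycle management of this kind is often expressed as a small state machine. The sketch below is an assumption, not the Spring Boot implementation: the state names and allowed transitions are invented for illustration, and TypeScript is used only to keep the examples in one language.

```typescript
// Hypothetical lifecycle states; the real backend may use different ones.
type SessionState = "scheduled" | "live" | "evaluating" | "completed" | "cancelled";

// Allowed moves out of each state (an assumption made for illustration).
const allowedTransitions: Record<SessionState, SessionState[]> = {
  scheduled: ["live", "cancelled"],
  live: ["evaluating", "cancelled"],
  evaluating: ["completed"],
  completed: [],
  cancelled: [],
};

// Returns the next state, or throws if the move is not in the table above.
function transition(current: SessionState, next: SessionState): SessionState {
  if (allowedTransitions[current].indexOf(next) === -1) {
    throw new Error(`Illegal session transition: ${current} -> ${next}`);
  }
  return next;
}
```

Keeping the transitions in a single table means every component that changes session state goes through the same check, which helps preserve one consistent view of the session.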

Multi-Agent Architecture

Owlyn operates through several specialized Gemini-powered agents, built with the GenAI SDK:

Question Generator (Gemini Flash)

Generates technical challenges based on job role and requirements.

Interviewer Agent (Gemini Live)

Conducts the real-time voice interview.

Workspace Sentinel (Gemini Vision)

Observes the coding workspace and analyzes implementation logic.

Integrity Sentinel

Monitors webcam signals for suspicious activity.

Technical Assessor (Gemini Pro)

Analyzes session logs and transcripts to generate structured evaluation reports.

These agents operate independently while sharing context through the orchestration layer.

Infrastructure

Owlyn runs on:

- Google Cloud Virtual Machines

- Docker

- PostgreSQL

- Redis

- LiveKit

- Gemini APIs

Redis stores live session state while PostgreSQL stores persistent interview records and reports.
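The split between live and persistent storage can be sketched as two narrow interfaces with a hand-off when a session ends. Everything named here is hypothetical: the interfaces, the record fields, and the in-memory classes are stand-ins for the Redis and PostgreSQL roles, not real client code.

```typescript
// Hypothetical data shapes for a running session and its durable record.
interface LiveState { transcript: string[]; alerts: number; }
interface InterviewRecord { sessionId: string; transcript: string[]; alerts: number; endedAt: string; }

// Role played by Redis in the described stack: fast, short-lived session state.
interface LiveStateStore {
  set(sessionId: string, state: LiveState): void;
  get(sessionId: string): LiveState | undefined;
  delete(sessionId: string): void;
}

// Role played by PostgreSQL in the described stack: durable interview records.
interface RecordStore {
  save(record: InterviewRecord): void;
}

// In-memory stand-ins so the sketch runs without any external service.
class InMemoryLiveState implements LiveStateStore {
  private data = new Map<string, LiveState>();
  set(sessionId: string, state: LiveState): void { this.data.set(sessionId, state); }
  get(sessionId: string): LiveState | undefined { return this.data.get(sessionId); }
  delete(sessionId: string): void { this.data.delete(sessionId); }
}

class InMemoryRecords implements RecordStore {
  readonly records: InterviewRecord[] = [];
  save(record: InterviewRecord): void { this.records.push(record); }
}

// On session end: copy the live state into a durable record, then clear the live entry.
// Returns false when there is no live state for the given session.
function finalizeSession(
  sessionId: string,
  live: LiveStateStore,
  records: RecordStore,
  endedAt: Date,
): boolean {
  const state = live.get(sessionId);
  if (state === undefined) return false;
  records.save({
    sessionId,
    transcript: [...state.transcript],
    alerts: state.alerts,
    endedAt: endedAt.toISOString(),
  });
  live.delete(sessionId);
  return true;
}
```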

Challenges we ran into

Real-Time Latency

Traditional LLM systems often introduce multi-second delays.

Combining Gemini Live with WebRTC streaming let us maintain the sub-second response times that natural conversation requires.

Multimodal Synchronization

Owlyn processes several signals simultaneously:

- audio

- video

- workspace activity

- code evolution

Coordinating these streams across multiple AI agents while keeping latency low required careful system orchestration.

Bandwidth Optimization

Analyzing a continuous video stream would consume significant bandwidth.

We reduced the load by streaming one frame per second, which gives Gemini Vision enough visual context while keeping Owlyn usable on typical internet connections.
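A one-frame-per-second limit can be enforced with a simple timestamp check before a captured frame is forwarded. This is a minimal sketch under assumptions: the class name and the idea of throttling on the client side are illustrative, and the writeup does not say where in the pipeline Owlyn drops frames.

```typescript
// Decides which captured frames to forward so that at most one frame
// is sent per interval. Timestamps are in milliseconds.
class FrameThrottle {
  private lastSentAt: number | null = null;
  private readonly minIntervalMs: number;

  constructor(minIntervalMs: number = 1000) {
    this.minIntervalMs = minIntervalMs;
  }

  // Returns true if the frame captured at this time should be forwarded.
  shouldSend(capturedAtMs: number): boolean {
    if (this.lastSentAt !== null && capturedAtMs - this.lastSentAt < this.minIntervalMs) {
      return false; // too soon after the previously forwarded frame
    }
    this.lastSentAt = capturedAtMs;
    return true;
  }
}
```

With the default interval, frames captured at 0, 400, 800, and 1200 ms would result in only the first and last being forwarded.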

Multi-Agent Coordination

Gemini agents cannot directly communicate with each other.

To solve this, we built a central orchestration layer that routes context between agents and synchronizes their reasoning.
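Routing context through a central layer can be sketched as a small publish/subscribe hub. The agent names below mirror the ones described earlier, but the message shape, the topic strings, and the class itself are assumptions for illustration and do not represent the actual Spring Boot orchestrator.

```typescript
// Agent names mirror the architecture described above; everything else is hypothetical.
type AgentName =
  | "questionGenerator"
  | "interviewer"
  | "workspaceSentinel"
  | "integritySentinel"
  | "technicalAssessor";

interface ContextMessage {
  from: AgentName;
  topic: string;   // e.g. "code-observation" (an invented topic name)
  payload: string;
}

type ContextHandler = (message: ContextMessage) => void;

class Orchestrator {
  // topic -> (subscribed agent -> handler)
  private subscriptions = new Map<string, Map<AgentName, ContextHandler>>();

  subscribe(agent: AgentName, topic: string, handler: ContextHandler): void {
    let byAgent = this.subscriptions.get(topic);
    if (byAgent === undefined) {
      byAgent = new Map<AgentName, ContextHandler>();
      this.subscriptions.set(topic, byAgent);
    }
    byAgent.set(agent, handler);
  }

  // Delivers the message to every subscriber of its topic except the sender,
  // and returns the names of the agents that received it.
  publish(message: ContextMessage): AgentName[] {
    const delivered: AgentName[] = [];
    const byAgent = this.subscriptions.get(message.topic);
    if (byAgent === undefined) return delivered;
    byAgent.forEach((handler, agent) => {
      if (agent !== message.from) {
        handler(message);
        delivered.push(agent);
      }
    });
    return delivered;
  }
}
```

In this arrangement the agents never hold references to each other. For example, an observation published by the Workspace Sentinel would reach the Interviewer Agent only because both are registered with the hub.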

Accomplishments that we're proud of

- Building a real-time multimodal interview system

- Designing a multi-agent AI architecture powered by Gemini

- Creating a practice environment that mirrors real interviews

- Developing a persistent multimodal assistant

- Building a monitoring system for live interview visibility

- Implementing a Talent Pool for large-scale candidate evaluation

Most importantly, we built a system that feels less like a chatbot and more like an intelligent technical collaborator.

What we learned

Real-time AI systems require very different design decisions compared to traditional LLM applications.

Latency becomes the most important factor, especially when voice conversations and coding activities occur simultaneously.

We also learned that, for multimodal reasoning, a set of specialized agents worked better for us than a single monolithic model.

What's next for Owlyn

We plan to expand Owlyn with:

Hybrid AI + Human Interviews

Recruiters will be able to jump into an interview and seamlessly take over from the AI interviewer.

ATS Integrations

Direct integrations with hiring tools like Greenhouse and Lever.

Collaborative Whiteboarding

Allowing candidates and interviewers to work on the same whiteboard in real time.
