Built at the MIT Hardware + AI Hackathon by Tim Qi (product, mechanical), Haoyang Li (mechanical, hardware), Pranav Chaparala (hardware), and Shu Ou (software, AI).

Inspiration

When you're stuck on a problem, the worst thing you can do is keep thinking about it the same way. And yet that's exactly what most AI chat interfaces encourage: a linear thread of thought, one message at a time, going deeper and deeper into the same groove you were already in.

We wanted to break that pattern, not by giving better answers, but by surfacing better questions from unexpected angles.

The current chat paradigm is fundamentally one-directional: you type, it responds, you type again. That format reinforces tunnel vision. Unreasonable Cube is an attempt to make AI interaction physically multidimensional, to bring multiple facets of a problem into the room at once, so you can hold them, rotate them, and let the weight of different perspectives shift before you commit to a direction.

The Rubik's Cube felt like the right form. It is already a cultural shorthand for complexity and transformation. Every face is different, and every rotation changes how they relate. We asked: what if this was not a puzzle to solve, but a question to shape?

That became Unreasonable Cube, a physical AI interface that maps your challenge into six perspectives, lets you rebalance them through rotation, and generates a speculative glimpse of where your thinking could lead.

What it does

You hold the cube and speak a challenge. It could be a decision you're wrestling with, something you're trying to plan, a question you can't quite articulate yet. The cube listens. Then it maps your challenge to six distinct perspectives, one per face, each representing a different dimension of how humans naturally understand a problem: relevance, emotional resonance, relationships, blind spots, systemic forces, and what hasn't surfaced yet.

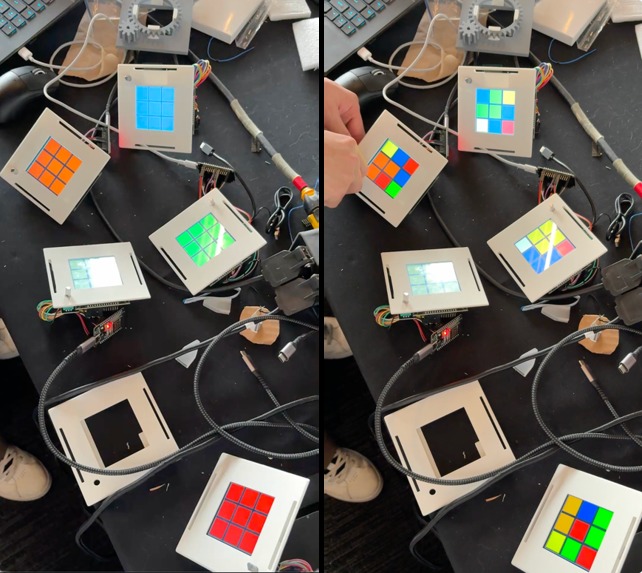

You can rotate the cube to shift how these perspectives interact, just as turning a Rubik's Cube reconfigures its surrounding pieces. As you turn it, the embedded screens pulse with color, reflecting the evolving balance between perspectives.

When you're ready, press a face to choose your primary lens. The cube responds with follow-up questions shaped not only by that one perspective, but by the full configuration you've created through rotation. It then generates a brief, speculative future of the challenge you're exploring.

How we built it

The system has two tightly coupled parts: the physical cube and the AI brain.

Hardware

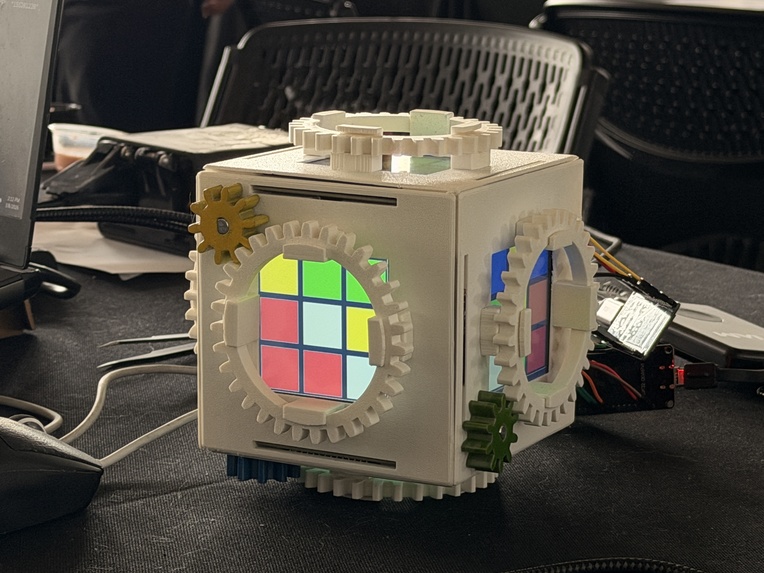

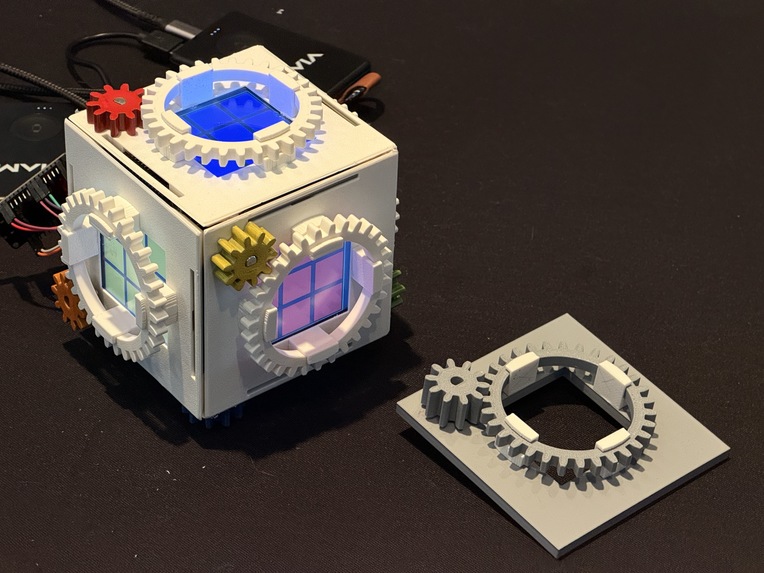

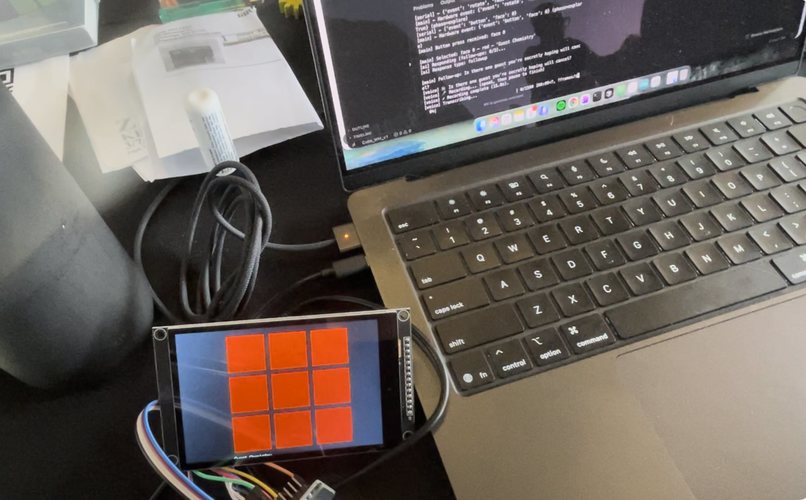

The cube is a custom-built mechanical Rubik's Cube housing ESP32 microcontrollers, 4" ST7796 TFT displays, and rotary encoders, one of each per face, wired 1:1:1. Each ESP32 handles its own screen firmware and sends encoder events (rotate, press, long press) to the laptop. The mechanical cube shell was designed and fabricated to hold everything in place while remaining rotatable.
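
On the laptop side, the encoder events described above could be decoded roughly like this. This is a minimal sketch, not the project's actual protocol: the comma-separated line format (`face,kind,delta`), the `EncoderEvent` class, and `parse_event` are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional

# Event kinds named in the write-up; the string spellings are assumptions.
VALID_KINDS = {"rotate", "press", "long_press"}


@dataclass(frozen=True)
class EncoderEvent:
    face: int       # 0..5, one index per cube face (assumed numbering)
    kind: str       # "rotate", "press", or "long_press"
    delta: int = 0  # signed encoder steps; only meaningful for "rotate"


def parse_event(line: str) -> Optional[EncoderEvent]:
    """Parse one hypothetical 'face,kind[,delta]' line; return None if malformed."""
    parts = line.strip().split(",")
    if len(parts) not in (2, 3):
        return None
    try:
        face = int(parts[0])
        delta = int(parts[2]) if len(parts) == 3 else 0
    except ValueError:
        return None
    kind = parts[1]
    if kind not in VALID_KINDS or not 0 <= face < 6:
        return None
    return EncoderEvent(face, kind, delta)
```

Returning `None` for malformed lines, rather than raising, keeps a reader loop alive when a board sends partial data during boot.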

Software

The laptop runs a Python application that coordinates the full interaction loop:

- Voice I/O: openai-whisper for speech-to-text with silence detection

- Weight engine: a Rubik's-adjacency weight model, in which rotating a face shifts the weights of its four adjacent faces, not the rotated face itself

- AI layer: Claude API (claude-sonnet) with a two-stage prompt system: one stage generates the six perspectives from the challenge, and the other decides whether to ask a follow-up or generate the speculative story, using the full weight vector to shape both

The six perspectives are generated fresh for every challenge.
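
The adjacency rule in the weight engine can be sketched as follows. This is an illustration under stated assumptions, not the project's code: the face labels, the additive update, the `step_size` value, and the function names are all hypothetical. The only behavior taken from the description is that a rotation changes the four adjacent faces and leaves the rotated face alone.

```python
from typing import Dict, Tuple

# Opposite-face pairs on a cube. The U/D/L/R/F/B labels are illustrative.
OPPOSITE = {"U": "D", "D": "U", "L": "R", "R": "L", "F": "B", "B": "F"}
FACES = tuple(OPPOSITE)


def adjacent_faces(face: str) -> Tuple[str, ...]:
    """The four faces that share an edge with `face` (all but itself and its opposite)."""
    return tuple(f for f in FACES if f != face and f != OPPOSITE[face])


def initial_weights() -> Dict[str, float]:
    """Start every perspective at an equal weight."""
    return {f: 1.0 / len(FACES) for f in FACES}


def rotate(weights: Dict[str, float], face: str, steps: int,
           step_size: float = 0.05) -> Dict[str, float]:
    """Return new weights after turning `face` by `steps` encoder clicks.

    Assumed update rule: each click adds `step_size` to every adjacent face,
    clamped at zero. The rotated face and its opposite are left unchanged.
    """
    updated = dict(weights)
    for neighbor in adjacent_faces(face):
        updated[neighbor] = max(0.0, updated[neighbor] + steps * step_size)
    return updated
```

The weights are deliberately left unnormalized here so that the rotated face's value stays exactly as it was; a caller could normalize the vector just before passing it to the prompt.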

Challenges

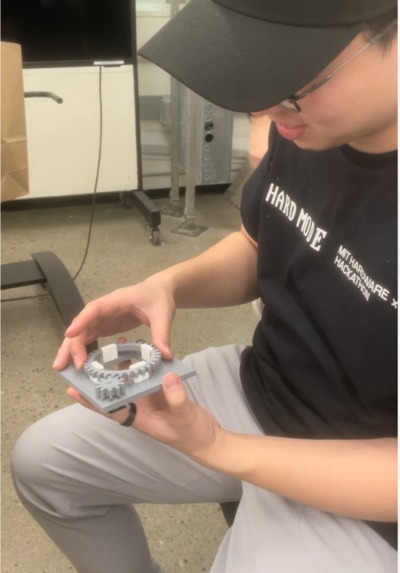

The mechanical decoupling problem. This was one of the hardest challenges of the build. Each face needed a rotary encoder that the user could turn with their hand, but the encoder had to be mechanically isolated from the screen behind it. Early iterations kept running into the same wall: any mechanism that let the user rotate the encoder inevitably meant rotating or stressing the screen as well. We went through multiple design iterations without a clean solution.

The breakthrough was a gear-based design (shout out to Tim!). Each face has a large outer gear ring that the user can grab and rotate. That ring drives a smaller colored gear (visible on each cube face), which in turn drives the encoder shaft, so the rotation reaches the encoder without turning or stressing the screen.

Whisper in a noisy room. The silence detection threshold that works in a quiet lab becomes useless at a hackathon. We tuned the RMS threshold and hard-capped recording at 8 seconds to keep transcription fast regardless of ambient noise.
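
The stopping logic described above can be sketched without any audio library. This is a minimal sketch under assumptions: the chunk representation (sequences of 16-bit sample values), the default threshold of 500, the one-second silence window, and the function names are hypothetical. Only the RMS threshold idea and the 8-second hard cap come from the write-up.

```python
import math
from typing import Iterable, List, Sequence


def rms(chunk: Sequence[int]) -> float:
    """Root-mean-square amplitude of one chunk of audio samples."""
    if not chunk:
        return 0.0
    return math.sqrt(sum(s * s for s in chunk) / len(chunk))


def record_until_silence(chunks: Iterable[Sequence[int]],
                         chunk_seconds: float,
                         rms_threshold: float = 500.0,
                         silence_seconds: float = 1.0,
                         max_seconds: float = 8.0) -> List[Sequence[int]]:
    """Collect chunks until trailing silence after speech, or until the hard cap."""
    kept: List[Sequence[int]] = []
    elapsed = 0.0
    quiet = 0.0
    heard_speech = False
    for chunk in chunks:
        kept.append(chunk)
        elapsed += chunk_seconds
        if rms(chunk) >= rms_threshold:
            heard_speech = True
            quiet = 0.0
        else:
            quiet += chunk_seconds
        # Normal exit: the speaker has gone quiet for long enough.
        if heard_speech and quiet >= silence_seconds:
            break
        # Noisy-room exit: the threshold never trips, so the cap ends recording.
        if elapsed >= max_seconds:
            break
    return kept
```

In a loud room every chunk may sit above the threshold, which is why the cap matters: it bounds the audio handed to the transcriber no matter what the threshold does.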

The blank screen problem. The ESP32 sends a ready event on boot, but Python often missed it because the serial reader thread hadn't started yet. We added a 5-second wait with a deadline and fallback so the system proceeds even if the handshake is lost.
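
One way to express a wait with a deadline and fallback is with a `threading.Event` that the serial reader thread sets when it sees the ready message. This is a sketch, not the project's code: `wait_for_ready`, `start_session`, and the 0.1-second polling interval are hypothetical, and only the 5-second default comes from the description.

```python
import threading
import time


def wait_for_ready(ready: threading.Event, timeout_s: float = 5.0) -> bool:
    """Block until `ready` is set or the deadline passes; return whether it was set."""
    deadline = time.monotonic() + timeout_s
    while not ready.is_set():
        remaining = deadline - time.monotonic()
        if remaining <= 0:
            return False
        # Wait in short slices so the deadline is honored closely.
        ready.wait(timeout=min(remaining, 0.1))
    return True


def start_session(ready: threading.Event, timeout_s: float = 5.0) -> str:
    """Proceed either way; report which path was taken."""
    if wait_for_ready(ready, timeout_s):
        return "handshake"
    # Fallback: assume the board booted and its ready event was simply missed.
    return "fallback"
```

Using `time.monotonic()` for the deadline avoids surprises if the system clock changes while waiting.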

Accomplishments

We built a working end-to-end tangible AI interface in a weekend: voice in, physical manipulation, AI reasoning, voice out, all running through a custom-fabricated cube with embedded electronics.

The interaction loop works. You speak something unresolved, you hold and rotate an object, you hear something that reframes it. That experience, from fuzzy question to surprising perspective, is exactly what we set out to build.

What we learned

A few people played around with the Unreasonable Cube during the hackathon. We observed that tangible interfaces change how people relate to AI output. When you've physically shaped the weights by rotating a cube, the story the cube tells feels like yours, not a generic AI response. The physical act of choosing creates a sense of connection and investment in the process.

What's next for Team 18: Unreasonable Cube

- Consolidate to fewer boards for cleaner internals and a more refined enclosure

- Explore ways for users to choose whether to see the generated perspectives

- Embed a mic and speaker directly in the cube so it works standalone, no laptop needed

- Go fully wireless by leveraging the ESP32's built-in Wi-Fi

- Explore multi-user experience
