The BFF Experiment: From Noise to Life in the Age of AI Agents (2026)

A deep dive into Blaise Aguera y Arcas's BFF experiment, where self-replicating programs emerged from random noise through symbiogenesis — not mutation. The definitive guide to what BrainFuck, Freedom, and Friends reveals about life, intelligence, and the future of AI agents. Updated March 2026.


On a warm afternoon at the ALife 2025 conference in Prague, Blaise Aguera y Arcas stood before a room of computational biologists, physicists, and AI researchers and showed them a plot. The x-axis was the number of random pairings between meaningless strings of bytes. The y-axis was the total computational operations performed by those bytes when executed as code.

For millions of interactions, the line was flat. Nothing. Random noise doing nothing useful.

Then, in a window so narrow you could miss it if you blinked, the line shot upward. Self-replicating programs had appeared — not designed, not coded, not evolved through gradual mutation. They had crystallized from noise, the way a supersaturated solution suddenly becomes a crystal, or the way hot gelatin sets into jello when it crosses a critical threshold.

"This is the most exciting plot I have ever made," Aguera y Arcas told the audience.

He was not exaggerating. The experiment — called BFF, short for BrainFuck, Freedom, and Friends — may be the most important result in artificial life research since John von Neumann's theoretical proof that self-replicating machines are possible. It answers a question that has haunted biology, computer science, and philosophy for centuries: Can life emerge from pure noise, given nothing but simple rules and enough time?

The answer is yes. And the mechanism that makes it happen is not what anyone expected.

TL;DR: Blaise Aguera y Arcas's BFF experiment showed self-replicating programs emerging from random noise — no design, no mutation required. The key mechanism is symbiogenesis (fusion of simpler programs), not Darwinian mutation. After ~12 sequential fusion events, a phase transition occurs and complexity explodes. These findings reshape how we understand AI agents, emergence in LLMs, and living software. Taskade Genesis applies these principles: 150,000+ apps built on Workspace DNA (Memory + Intelligence + Execution). Try it free →

🧪 What If Intelligence Was Always There?

The deepest question in Blaise Aguera y Arcas's work is not whether machines can think, but whether computation — and by extension intelligence — is a property that matter acquires under the right conditions, the way water acquires the property of being wet when enough H2O molecules come together. His 2024 book Who Are We Now? argues that intelligence is not a rare, fragile gift bestowed on carbon-based brains. It is a phase of matter.

This is a radical claim. But the BFF experiment provides the evidence. Start with nothing but random bytes. Apply a simple rule: pair them, execute them, return them to the pool. Wait. At some critical point, computation emerges — not gradually, not through incremental improvement, but through a discontinuous phase transition, like ice becoming water at exactly 0 degrees Celsius.

The implication is staggering. If intelligence is a phase transition that matter undergoes when computation crosses a threshold, then it is not unique to brains, not unique to silicon, and not unique to any particular substrate. It is a universal property of sufficiently complex information-processing systems. This is the same insight driving modern large language model research, where grokking — the sudden acquisition of generalization after prolonged memorization — follows an eerily similar pattern.

The question is not if intelligence emerges from computation. The question is how.

🖥️ The Setup: BrainFuck, Freedom, and Friends

BFF is named for its three ingredients. BrainFuck is the programming language — a minimalist, Turing-complete language invented in 1993 by Urban Muller with only eight instructions. Freedom refers to the fact that the programs run without constraints: code and data occupy the same tape, and a program can overwrite its own instructions during execution. Friends refers to the social dimension: tapes interact by being paired.

Aguera y Arcas modified standard BrainFuck slightly, using seven instructions instead of eight. The modification makes the language embodied: the code IS the data. There is no separation between the program and the material it acts on. This is a crucial design choice. Standard BrainFuck, like most conventional software, keeps the program separate from the data tape it manipulates. In biology, the genome is both the blueprint and the building material. BFF mirrors biology.

Here is the setup:

THE BFF SOUP
1,000 tapes, each 64 bytes of random data:

Tape #001: [a7 3f 02 b1 ... 64 bytes ... 9c 00]
Tape #002: [12 e4 88 1a ... 64 bytes ... f7 32]
Tape #003: [c0 55 d9 6e ... 64 bytes ... 0b a4]
...
Tape #1000: [7b 21 44 f0 ... 64 bytes ... 83 e1]

Seven of the 256 possible byte values are BFF instructions; every other byte is a no-op:

'>'  Move pointer right
'<'  Move pointer left
'+'  Increment cell
'-'  Decrement cell
'.'  Output cell value
'['  Jump forward past the matching ']' if the cell is 0
']'  Jump back to the matching '[' if the cell is nonzero

Average valid instructions per random 64-byte tape: ~2
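The ~2 figure follows from linearity of expectation: if each of the 64 bytes is uniform over 256 values and 7 of those values are instructions, the expected count is 64 × 7/256 = 1.75. A minimal Python check, assuming the instructions are encoded as the ASCII characters shown above (the text does not give the actual byte values):

```python
import random

INSTRUCTION_BYTES = set(b"<>+-.[]")  # assumed ASCII encodings of the 7 instructions


def expected_valid(tape_len: int = 64, n_instructions: int = 7) -> float:
    """Expected instruction count in a uniformly random tape (linearity of expectation)."""
    return tape_len * n_instructions / 256


def sampled_valid(n_tapes: int = 10_000, tape_len: int = 64, seed: int = 0) -> float:
    """Average instruction count measured over randomly generated tapes."""
    rng = random.Random(seed)
    total = 0
    for _ in range(n_tapes):
        tape = bytes(rng.randrange(256) for _ in range(tape_len))
        total += sum(1 for b in tape if b in INSTRUCTION_BYTES)
    return total / n_tapes


print(expected_valid())   # 1.75
print(sampled_valid())    # close to 1.75
```

The sampled average lands near 1.75, which the text rounds to ~2.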

The interaction rule is simple:

ONE INTERACTION STEP

1. Pick two random tapes from the soup (e.g., Tape A and Tape B).
   Tape A: [a7 3f 02 b1 ... 64 bytes]
   Tape B: [12 e4 88 1a ... 64 bytes]
2. Concatenate them into a single 128-byte tape.
   Combined: [a7 3f 02 b1 ... 64 bytes ... 12 e4 88 1a ... 64 bytes]
             (Tape A region)               (Tape B region)
3. Execute the combined tape as a BFF program:
   - The pointer starts at byte 0
   - Instructions modify bytes in place
   - Code overwrites data, and data overwrites code
   - Execution halts after a step limit
4. Split the tape back into two 64-byte halves.
   New A: [?? ?? ?? ?? ... 64 bytes] (possibly modified)
   New B: [?? ?? ?? ?? ... 64 bytes] (possibly modified)
5. Return both halves to the soup.
6. Repeat from step 1.

That is the entire system. No fitness function. No selection pressure. No mutation operator. No goal. Just random pairing, execution, and return. The question is: what happens after millions of these interactions?
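The interaction loop is small enough to sketch in Python. This is a minimal sketch under stated assumptions, not the experiment's code: the instruction semantics follow the seven-instruction list above, the byte values are assumed to be the ASCII characters shown, '.' is executed as a no-op because the soup has no output channel, and STEP_LIMIT is a hypothetical budget since the text only says "a step limit". It illustrates the mechanics of one interaction; it is not a reproduction of the published results.

```python
import random

TAPE_LEN = 64      # bytes per tape (from the parameter table)
SOUP_SIZE = 1000   # tapes in the soup (from the parameter table)
STEP_LIMIT = 1024  # hypothetical execution budget; the text only says "a step limit"

# Assumed byte encoding: the ASCII characters of the seven instructions.
INSTRUCTIONS = {ord(ch): ch for ch in "<>+-.[]"}
OPEN, CLOSE = ord("["), ord("]")


def run_bff(tape: bytearray, step_limit: int = STEP_LIMIT) -> int:
    """Run `tape` in place as self-modifying code; return how many instructions executed."""
    n = len(tape)
    ip = 0  # instruction pointer
    dp = 0  # data pointer ("pointer starts at byte 0")
    executed = 0
    for _ in range(step_limit):  # every byte visited uses one step of the budget
        if ip >= n:
            break  # ran off the end of the tape
        op = INSTRUCTIONS.get(tape[ip])
        if op is None:
            ip += 1  # any other byte value is a no-op
            continue
        if op == ">":
            dp = (dp + 1) % n  # wrap around the combined tape
        elif op == "<":
            dp = (dp - 1) % n
        elif op == "+":
            tape[dp] = (tape[dp] + 1) % 256  # may rewrite an instruction: code is data
        elif op == "-":
            tape[dp] = (tape[dp] - 1) % 256
        elif op == "[" and tape[dp] == 0:
            depth = 1  # scan forward for the matching ']' in the tape as it is now
            while depth and ip + 1 < n:
                ip += 1
                if tape[ip] == OPEN:
                    depth += 1
                elif tape[ip] == CLOSE:
                    depth -= 1
            if depth:
                break  # unmatched '[' halts execution
        elif op == "]" and tape[dp] != 0:
            depth = 1  # scan backward for the matching '['
            while depth and ip > 0:
                ip -= 1
                if tape[ip] == CLOSE:
                    depth += 1
                elif tape[ip] == OPEN:
                    depth -= 1
            if depth:
                break  # unmatched ']' halts execution
        # '.' falls through here: counted as executed, but it has no effect
        executed += 1
        ip += 1
    return executed


def make_soup(rng: random.Random) -> list[bytearray]:
    """Random tapes: no seeding, no design, pure noise."""
    return [bytearray(rng.randrange(256) for _ in range(TAPE_LEN)) for _ in range(SOUP_SIZE)]


def interact(soup: list[bytearray], rng: random.Random) -> int:
    """One interaction: pick, concatenate, execute, split, return."""
    i, j = rng.sample(range(len(soup)), 2)  # 1. two distinct random tapes
    combined = soup[i] + soup[j]            # 2. a new 128-byte tape
    executed = run_bff(combined)            # 3. execute in place
    soup[i] = combined[:TAPE_LEN]           # 4-5. split and return both halves
    soup[j] = combined[TAPE_LEN:]
    return executed


if __name__ == "__main__":
    rng = random.Random(0)
    soup = make_soup(rng)
    total = sum(interact(soup, rng) for _ in range(1000))
    print(f"instructions executed over 1,000 interactions: {total}")
```

Because 'combined' is a fresh bytearray, anything the program writes during execution reaches the soup only through the split in steps 4 and 5, which matches the description above. There is no fitness function anywhere in the loop.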

BFF Parameters

| Parameter | Value | Significance |
|---|---|---|
| Soup size | 1,000 tapes | Large enough for diversity, small enough for convergence |
| Tape length | 64 bytes | Minimal but sufficient for self-replication |
| Instruction set | 7 instructions | Turing-complete, embodied (code = data) |
| Initial state | Random bytes | No seeding, no design, pure noise |
| Interaction | Pair, concatenate, execute, split | Mimics biological encounter and information exchange |
| Selection | None (implicit: functional programs survive) | Replicators outcompete by copying themselves |
| Mutation rate | 0 (still works!) | Proves symbiogenesis, not mutation, drives complexity |

📉 The Phase Transition: When Noise Becomes Life

For the first few million interactions, nothing interesting happens. Random bytes pair, execute a few meaningless instructions (remember — only about 2 valid instructions per random tape), and return to the soup unchanged or trivially modified. Entropy stays high. Computational output stays near zero.

Then the phase transition hits.

THE BFF PHASE TRANSITION (schematic plot). Top: computational operations vs. interactions (0 to 6M), flat near zero until a sharp rise at roughly 5M interactions, labeled PHASE TRANSITION (gelation point). Bottom: entropy vs. interactions, high and flat until a sharp ENTROPY DROP at the same point, then leveling off.

Aguera y Arcas described this transition as gelation — the same mathematical process that describes how gelatin sets into jello. In gelation, individual polymer chains in solution suddenly cross-link into a single connected network when the density of cross-links passes a critical threshold. One moment you have a warm liquid. The next, you have a solid that holds its shape.

In BFF, the "polymers" are short functional code fragments scattered across different tapes. The "cross-links" are the successful interactions where one tape's code modifies another tape in a functionally meaningful way. For millions of interactions, these fragments float independently. Then, when enough fragments have accumulated and enough successful pairings have occurred, the system gels. Self-replicating programs appear. Entropy drops. Computational operations explode.
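The text does not say how the soup's entropy is measured. One common proxy, assumed here, is compressibility: a soup full of copies of one program compresses far better than noise. The sketch also computes the order-0 Shannon entropy of the byte histogram to show why a histogram alone is not enough; it barely notices copying, because copies of a random tape still use many distinct byte values.

```python
import math
import random
import zlib
from collections import Counter


def shannon_bits_per_byte(data: bytes) -> float:
    """Order-0 Shannon entropy of the byte histogram (ignores byte order)."""
    n = len(data)
    return -sum(c / n * math.log2(c / n) for c in Counter(data).values())


def compressed_bits_per_byte(data: bytes) -> float:
    """Compression-based proxy: repeated structure compresses well."""
    return 8 * len(zlib.compress(data, 9)) / len(data)


rng = random.Random(0)
noise = bytes(rng.randrange(256) for _ in range(64 * 1000))  # 1,000 random 64-byte tapes
tape = bytes(rng.randrange(256) for _ in range(64))
copies = tape * 1000  # a soup taken over by a single replicator

for name, soup in [("noise", noise), ("copies", copies)]:
    print(name, round(shannon_bits_per_byte(soup), 2), round(compressed_bits_per_byte(soup), 2))
```

Both soups show a high byte-level Shannon entropy, but only the compressed size collapses for the soup of copies, which is the kind of drop the entropy panel describes.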

The transition is sharp, not gradual. This is the signature of a genuine phase transition: a discontinuous change in the system's macroscopic properties. It is the same kind of abrupt shift seen in grokking in neural networks, where a model memorizes its training data for a long stretch of training and then generalizes abruptly.

🏗️ Von Neumann's Ghost: Life Is Literally Computation

The BFF result is not just computationally interesting. It is biologically profound. To understand why, you need to go back to John von Neumann.

In the late 1940s, von Neumann asked a simple question: what is the minimum architecture required for a machine to build a copy of itself? He worked through the problem mathematically and arrived at a prediction that is, in hindsight, one of the most remarkable in the history of science.

A self-replicating machine needs three core components (von Neumann's design adds a control unit that coordinates them):

- An instruction tape — a linear sequence of instructions that describes the machine

- A universal constructor — a mechanism that reads the tape and builds what it describes

- A tape copier — a mechanism that copies the instruction tape itself

Von Neumann worked out this framework in the late 1940s, years before Watson and Crick described the double helix structure of DNA in 1953. When molecular biology caught up, it confirmed his prediction with eerie precision:

| Von Neumann Component | Biological Equivalent | Function |
|---|---|---|
| Instruction tape | DNA | Stores the blueprint |
| Universal constructor | Ribosomes | Reads instructions, builds proteins |
| Tape copier | DNA polymerase | Copies the DNA strand |

The implication, which Aguera y Arcas emphasizes in his ALife 2025 talk, is that you cannot have life without computation. A universal constructor IS a universal Turing machine. The ability to follow arbitrary instructions and build arbitrary structures is, by definition, the ability to compute. Life does not merely use computation as a tool. Life is computation.

This is why the BFF experiment matters so deeply. It does not merely simulate life. It demonstrates that computation — the abstract ability to process information according to rules — spontaneously produces life-like properties (self-replication, increasing complexity, functional specialization) when embodied in a medium where code and data are the same thing.
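One description serving both as instructions and as material to copy has a familiar software analogue: the quine, a program that prints its own source. In this Python illustration (not code from BFF; the names 'tape' and 'child' are chosen only for the analogy), the string plays the instruction tape, the % formatting step follows it as a template the way a constructor follows instructions, and %r inserts an uninterpreted copy of it the way a tape copier would.

```python
# The "instruction tape": a description of the whole program,
# with a %r slot where a verbatim copy of the tape itself will go.
tape = 'tape = %r\nchild = tape %% tape\nprint(child)'
# "%" does both jobs: it follows the tape as a template (construction)
# and %r inserts an uninterpreted copy of the tape (copying).
child = tape % tape
print(child)
```

Stripped of its comments, the three statements print exactly themselves. Using the description twice, once interpreted and once copied, avoids the infinite regress of a description that would have to contain a description of itself.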

👻 Function: The Spirit Without the Soul

One of the most philosophically provocative moments in Aguera y Arcas's ALife 2025 talk came when he discussed the concept of function — the property that separates living things from non-living things.

Break a rock in half. You get two rocks. Each half is still a rock, fully functional as a rock. The rock has no function that can be destroyed.

Break a kidney in half. You get a broken kidney. The kidney has a function — filtering blood, maintaining electrolyte balance, producing hormones — and that function is destroyed when the physical structure is disrupted.

Function is what living systems have that non-living systems do not. But function is strange. It is immaterial — you cannot point to any atom in the kidney and say "this is the function." Function emerges from the arrangement of matter, not from the matter itself. As Aguera y Arcas puts it, function is like a spirit — immaterial, yet entirely dependent on physics. It is not supernatural, but it is not physical either. It occupies a third category.

This is exactly what Alan Turing formalized. Turing's great insight was that computation — the abstract manipulation of symbols according to rules — is precisely the mathematical framework for describing function. A function is a mapping from inputs to outputs. A Turing machine computes functions. Life implements functions. Computation, function, and life are three faces of the same underlying phenomenon.

In the BFF experiment, you can see function emerge in real time. Random bytes have no function. They are computational rocks. But when self-replicating programs emerge from those random bytes, they have function — the function of self-replication, of copying their own instruction tape, of maintaining their own integrity. That function is immaterial (you cannot point to any specific byte and say "this is the replication function") but it is real, measurable, and consequential.

This framing has direct relevance for AI agents. When an agent performs a task — researching a topic, drafting a document, coordinating with other agents — it exhibits function. That function is not in any single parameter of the model. It emerges from the arrangement and interaction of billions of parameters, just as the kidney's function emerges from the arrangement of millions of cells. Understanding function as emergent computation is the key to understanding both artificial life and artificial intelligence.

🔀 Symbiogenesis: Evolution Without Mutation

Here is the most surprising finding of the BFF experiment, and the one that overturns the deepest assumption in evolutionary theory: mutation is not necessary for the emergence of complexity.

In standard Darwinian evolution, the story goes like this: organisms replicate with occasional random errors (mutations). Some mutations are beneficial. Natural selection preserves the beneficial ones. Over time, complexity accumulates.

Aguera y Arcas tested this by running BFF with the mutation rate set to zero. No random errors. No bit flips. No copying mistakes. Pure, perfect replication.

Complex self-replicating programs still emerged.

The source of novelty is not mutation. It is symbiogenesis — the fusion of two independent replicators into a single, more complex entity. In biology, symbiogenesis is the process by which mitochondria (once free-living bacteria) were absorbed into early eukaryotic cells, or by which chloroplasts were absorbed into plant cells. Lynn Margulis championed this theory against fierce opposition for decades before it became accepted biology.

In BFF, symbiogenesis happens naturally through the pairing mechanism. When Tape A and Tape B are concatenated and executed, the code from one tape can overwrite parts of the other. If Tape A contains a simple replicator and Tape B contains a different simple replicator, the execution can produce a new tape that combines both replicators' capabilities into a single, more complex program.

The mathematical framework for this process is the Smoluchowski coagulation equation, originally developed to describe how colloidal particles aggregate in solution. In BFF, the "particles" are functional code fragments. The "aggregation" is symbiogenesis. The "gelation point" is the phase transition where self-replicating programs emerge.
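For reference, the discrete Smoluchowski equation for the concentration n_k of clusters of size k is dn_k/dt = (1/2) Σ_{i+j=k} K(i,j) n_i n_j − n_k Σ_j K(k,j) n_j, where the kernel K sets how readily clusters of sizes i and j merge. The article does not say which kernel describes BFF, so this sketch assumes the textbook multiplicative kernel K(i,j) = ij with all mass starting as single units. In that case the second moment M2 = Σ k² n_k obeys dM2/dt = M2², so M2(t) = 1/(1 − t), which diverges at t = 1: a gelation point at finite time. The code integrates the truncated equations numerically and compares against that closed form.

```python
def smoluchowski_m2(t_end: float, kmax: int = 60, dt: float = 1e-3) -> float:
    """Second moment M2 = sum(k^2 n_k) at time t_end for the discrete Smoluchowski equation.

    Assumptions: multiplicative kernel K(i, j) = i * j, all mass starting as
    monomers (n_1 = 1), cluster sizes truncated at kmax, explicit Euler steps.
    """
    n = [0.0] * (kmax + 1)  # n[k]: concentration of clusters of size k (index 0 unused)
    n[1] = 1.0
    for _ in range(round(t_end / dt)):
        m1 = sum(k * n[k] for k in range(1, kmax + 1))  # total mass in tracked clusters
        dn = [0.0] * (kmax + 1)
        for k in range(1, kmax + 1):
            # gain: pairs (i, k - i) merging into size k; 1/2 corrects double counting
            gain = 0.5 * sum(i * (k - i) * n[i] * n[k - i] for i in range(1, k))
            # loss: size-k clusters merging with anything; sum_j K(k, j) n_j = k * m1
            loss = k * n[k] * m1
            dn[k] = gain - loss
        for k in range(1, kmax + 1):
            n[k] += dt * dn[k]
    return sum(k * k * n[k] for k in range(1, kmax + 1))


for t in (0.25, 0.5):
    print(t, smoluchowski_m2(t), 1 / (1 - t))  # numerical vs. exact 1 / (1 - t)
```

Closer to t = 1 the size distribution grows a long tail, so the truncation at kmax starts to undercount M2; the divergence itself is what the closed form 1/(1 − t) captures.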

The 12 Stepping Stones

Aguera y Arcas found that the phase transition requires approximately 12 sequential symbiogenesis events. This number is not arbitrary — it follows a "lockpick distribution" where each step is a specific functional capability that must be acquired in roughly the right order, like picking a lock where each pin must be set before the lock opens.

You cannot shortcut this. You cannot jump from random noise to a full self-replicator in a single step. The 12 intermediate forms are necessary stepping stones, each providing a functional capability that makes the next fusion event possible. This is why the phase transition is sharp but not instantaneous — it takes millions of random pairings to accumulate all 12 stepping stones, but once the last one clicks into place, the transition happens almost instantly.
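A toy model, assumed here rather than taken from the experiment, makes the "long wait, then a fairly predictable finish" point concrete. Suppose each of the 12 stepping stones arrives after an independent, exponentially distributed wait with the same rate. The total time is then a sum of 12 waits: its mean grows with the number of steps, while its relative spread (standard deviation over mean) shrinks as 1/√n.

```python
import math
import random
import statistics


def completion_times(n_steps: int, trials: int = 20_000, rate: float = 1.0, seed: int = 0) -> list:
    """Total waiting time for n_steps sequential events, each an independent exponential wait."""
    rng = random.Random(seed)
    return [sum(rng.expovariate(rate) for _ in range(n_steps)) for _ in range(trials)]


def relative_spread(times: list) -> float:
    """Coefficient of variation: standard deviation divided by the mean."""
    return statistics.pstdev(times) / statistics.fmean(times)


for n in (1, 12):
    times = completion_times(n)
    print(f"{n:2d} steps: mean {statistics.fmean(times):.2f}, "
          f"relative spread {relative_spread(times):.3f} (theory {1 / math.sqrt(n):.3f})")
```

A single rare step finishes at a wildly unpredictable time; a chain of 12 finishes much later but within a noticeably narrower relative window.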

The arrow of time in evolution — the question of why complexity increases rather than decreases — is explained by this process. When A and B fuse into AB, the resulting entity contains:

- All of A's algorithmic information

- All of B's algorithmic information

- The relational information about how A and B fit together

Those extra bits — the relational information — come from the thermal randomness of the random pairing. They were noise before the fusion. After the fusion, they are signal. Symbiogenesis selectively converts thermal randomness into algorithmic information, providing a genuine thermodynamic arrow of time for evolution.

🧫 Three Kinds of Replicators

The BFF experiment reveals a taxonomy of replicator types that maps precisely onto biological categories. As the soup evolves, three distinct kinds of replicators appear in sequence:

| Type | Code-Copy Relationship | Biological Analog | BFF Example | Stability |
|---|---|---|---|---|
| Inanimate | Code and copied thing are completely disjoint | Water splitting a stream, crystal growth | Short code fragment that modifies a distant region of the tape | Low: easily disrupted |
| Viral | Partial overlap between code and what gets copied | Viruses (use host machinery to replicate) | Program that copies part of itself but relies on partner tape for execution | Medium: depends on hosts |
| Cellular | Code is fully contained within what gets copied | Cells (DNA inside the cell it builds) | Full self-replicator where all instructions are within the copied region | High: self-sufficient |

THREE REPLICATOR TYPES

INANIMATE REPLICATOR
Code region:   [####]
Copied region:        [====]
(no overlap: code and copy are disjoint)

VIRAL REPLICATOR
Code region:   [####]
Copied region:   [##===]
(partial overlap: some code is in the copied region)

CELLULAR REPLICATOR
Copied region: [==#####==]
Code region:     [#####]
(full containment: all code is inside what gets copied)

Inanimate replicators appear first because they are simplest — a short instruction sequence that happens to copy some bytes from one location to another. They are fragile because any disruption to the code region (which is not being copied) destroys the replicator.

Viral replicators appear next. They are more robust because some of their code is within the copied region, meaning partial self-repair is possible. But they still depend on external resources (other tapes' code) to complete their replication cycle. Like biological viruses, they are parasites.

Cellular replicators appear last, near the phase transition. They achieve something remarkable: all of their functional code is contained within the region they copy. They are fully self-sufficient. They do not need partner tapes to provide missing code. They carry everything they need within themselves.

This progression — from inanimate to viral to cellular — happens through symbiogenesis. Viral replicators emerge when an inanimate replicator fuses with a code fragment that happens to land inside its copy region. Cellular replicators emerge when a viral replicator fuses with additional code fragments that fill in the remaining gaps. Each fusion event moves the system toward greater self-sufficiency and stability.
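The three categories reduce to a simple question about two byte ranges on a tape: does the code region miss, partly overlap, or sit entirely inside the copied region? A minimal Python sketch of that classification (the Region type and the offsets are hypothetical, chosen to loosely match the three diagrams above):

```python
from typing import NamedTuple


class Region(NamedTuple):
    """A half-open byte range [start, end) on a tape."""
    start: int
    end: int


def overlap(a: Region, b: Region) -> int:
    """Number of bytes the two regions share."""
    return max(0, min(a.end, b.end) - max(a.start, b.start))


def classify(code: Region, copied: Region) -> str:
    """Label a replicator by how its code region relates to the region it copies."""
    if overlap(code, copied) == 0:
        return "inanimate"  # code and copy are disjoint
    if copied.start <= code.start and code.end <= copied.end:
        return "cellular"   # all code lies inside what gets copied
    return "viral"          # partial overlap


print(classify(Region(0, 4), Region(7, 11)))  # inanimate
print(classify(Region(0, 4), Region(2, 7)))   # viral
print(classify(Region(3, 8), Region(1, 10)))  # cellular
```

Only the cellular case guarantees that every byte of code is carried into each copy, which is why it is the self-sufficient one.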

The biological parallel is exact. The earliest self-replicating molecules on Earth were likely RNA-based inanimate replicators — sequences that catalyzed copying of nearby sequences but had no cell membrane. Viruses represent the intermediate form. And cells — with their genomes fully enclosed in membranes, carrying all the machinery for self-replication — represent the cellular form. Lynn Margulis's endosymbiotic theory proposes that eukaryotic cells themselves emerged through symbiogenesis of simpler prokaryotic cells, precisely mirroring the BFF progression.

⏳ The Arrow of Time: Why Complexity Increases

One of the oldest puzzles in physics is why the universe becomes more complex over time. The second law of thermodynamics says the entropy of an isolated system increases: disorder grows. So why does evolution produce kidneys, brains, and civilizations? Why does complexity increase when physics seems to say it should decrease?

Dynamic kinetic stability provides the answer, and BFF demonstrates it directly. A system that actively maintains itself through replication and repair can be more stable than a static object, even though the static object seems simpler and more robust.

Consider a candle flame. It is a dynamic process — continuous combustion, continuously consuming fuel and oxygen. If you blow it out, it stops. But as long as fuel and oxygen are available, it persists indefinitely. A rock is static — it does not consume resources or maintain itself. But a rock can be eroded, shattered, dissolved. Over geological timescales, the dynamic flame-like process (represented by living organisms) outperforms the static rock.

In BFF, this principle manifests clearly. Simple replicators (inanimate type) are static — they copy bytes from one location to another but do not maintain themselves. They are easily disrupted when a random interaction overwrites their code. Complex replicators (cellular type) are dynamic — they actively copy their own code, repair damage from interactions, and maintain their functional integrity. Paradoxically, the more complex replicator is more stable precisely because it has more mechanisms for self-maintenance.

STABILITY vs. COMPLEXITY IN BFF (schematic plot): stability rises with complexity, from inanimate replicators at the low end, through viral replicators, to cellular replicators at the top. Counter-intuitive: MORE complex = MORE stable (dynamic kinetic stability > static stability).

A cycle can be more stable than a fixed point. A self-replicating program that copies itself 100 times per generation is harder to kill than a static sequence of bytes, because even if 99 copies are destroyed, the one survivor restores the population. This is dynamic kinetic stability — stability through ongoing activity rather than through inertia.
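The survivor argument can be turned into a few lines of arithmetic. This toy model is an assumption for illustration, not part of BFF: each step, every copy is destroyed independently with probability p, and if at least one copy survives it restores the full population before the next step, so the lineage dies only when every copy is hit at once.

```python
def static_survival(p_hit: float, steps: int) -> float:
    """A single static object survives each step with probability 1 - p_hit."""
    return (1 - p_hit) ** steps


def replicator_survival(p_hit: float, steps: int, copies: int) -> float:
    """Toy population of `copies` independent copies.

    Assumption: any surviving copy refills the population before the next step,
    so extinction requires all copies to be hit in the same step.
    """
    return (1 - p_hit ** copies) ** steps


for copies in (1, 10, 100):
    print(copies, replicator_survival(0.5, 100, copies))
print("static", static_survival(0.5, 100))
```

With a single copy the two models coincide; with a coin-flip chance of destruction per step over 100 steps, the static object almost certainly disappears, while a population of 100 copies almost certainly persists.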

This principle extends beyond BFF. In economics, companies that actively innovate (dynamic) outlast companies that sit on existing products (static). In AI agent systems, teams of agents that continuously share knowledge, adapt strategies, and recruit new specialists outperform static automation pipelines. The arrow of time points toward complexity because complexity enables dynamic stability, and dynamic stability outcompetes static simplicity.

🔗 BFF vs. Biology: A Side-by-Side Comparison

The parallels between BFF and biological evolution are not metaphorical. They are structural — the same mathematical frameworks describe both systems.

| Dimension | BFF Experiment | Biological Evolution |
|---|---|---|
| Starting material | 1,000 random 64-byte tapes | Prebiotic chemistry (amino acids, nucleotides) |
| Instruction set | 7 BrainFuck instructions | 4 nucleotide bases (A, T, G, C) |
| Code-data relationship | Embodied (same tape) | Embodied (DNA is both code and substrate) |
| Interaction mechanism | Random pairing + concatenation | Random molecular encounter + ligation |
| Source of novelty | Symbiogenesis (fusion) | Symbiogenesis + mutation + horizontal gene transfer |
| Phase transition | Gelation (~5M interactions) | Origin of life (~500M years) |
| Transition sharpness | Sudden (narrow window) | Unknown (poor fossil record) |
| Replicator progression | Inanimate → Viral → Cellular | RNA world → Viruses → Prokaryotes → Eukaryotes |
| Key mechanism | Smoluchowski coagulation | Endosymbiosis (Margulis) |
| Stepping stones | ~12 sequential fusions | Unknown (estimated 10-20 key innovations) |
| Mutation required? | No (works with mutation = 0) | Debated (symbiogenesis may be primary) |
| Complexity arrow | Dynamic kinetic stability | Dynamic kinetic stability |

The most striking row in this table is "Mutation required?" In BFF, the answer is definitively no. Complexity emerges from symbiogenesis alone. This does not mean mutation is unimportant in biology — it clearly plays a role. But it suggests that the primary driver of evolutionary novelty may be the combination of existing functional units, not the random modification of individual units.

This reframes the entire history of life. The biggest leaps in biological complexity — from prokaryotes to eukaryotes, from single cells to multicellular organisms, from individual organisms to social colonies — were all symbiogenetic events, not mutational ones. Mitochondria were free-living bacteria that merged with proto-eukaryotes. Chloroplasts were cyanobacteria absorbed into plant cells. Multicellularity arose when individual cells began cooperating as units. Each leap was a fusion, not a mutation.

🤖 From BFF to LLMs: The Deeper Connection

The BFF experiment is not just a curiosity from computational biology. It illuminates patterns that appear across all complex computational systems — including large language models, AI agents, and multi-agent architectures.

Grokking as Phase Transition

In grokking, a neural network memorizes its training data for thousands of epochs with no sign of generalization. Then, abruptly, it transitions to a state where it generalizes almost perfectly to unseen data. Its validation loss curve (the training loss is already near zero by then) looks almost identical to BFF's entropy plot: flat, flat, flat, then a sudden drop.

The mechanism is different (gradient descent vs. random pairing), but the mathematical structure is the same: a system accumulates latent structure below the threshold of observable behavior, then crosses a critical point where that structure becomes globally coherent. In BFF, the latent structure is functional code fragments distributed across tapes. In grokking, the latent structure is internal representations that align with the true data-generating function.

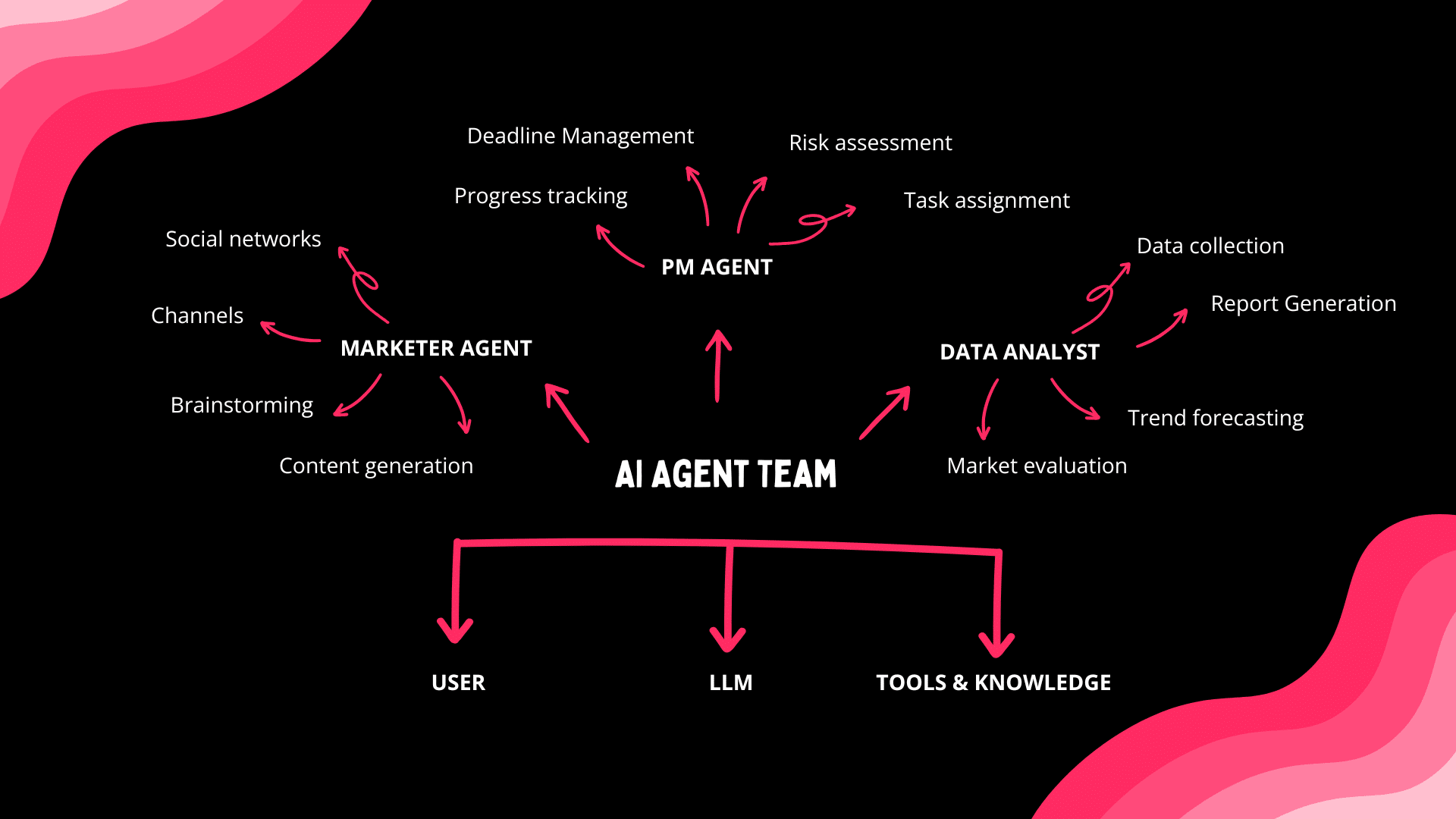

Multi-Agent Symbiogenesis

When multiple AI agents collaborate on a task, something resembling symbiogenesis occurs. Each agent brings specialized capabilities — one is good at research, another at writing, another at code review. When they combine their outputs, the result is not just the sum of individual contributions. It contains relational information — how the research shapes the writing, how the writing reveals gaps in the research, how the code review catches errors neither researcher nor writer noticed.

This is exactly the BFF mechanism. When A and B fuse, the result contains A's information + B's information + how they fit together. The relational bits are the source of genuine novelty. In multi-agent systems, those relational bits are the emergent insights that no individual agent could have produced alone.

The Emergence Hierarchy

BFF suggests a general hierarchy of emergence in computational systems:

This hierarchy maps onto the scaling laws that govern LLM performance. As models grow, they cross critical thresholds where new capabilities suddenly appear — arithmetic, reasoning, code generation, theory of mind. Each threshold is a phase transition analogous to the BFF gelation point. The capabilities do not emerge gradually. They crystallize when enough latent structure has accumulated.

Understanding this connection is not merely academic. It has practical implications for how we design, train, and deploy AI systems. If emergence follows the same mathematical laws as BFF gelation, then we may be able to predict when new capabilities will appear (based on model scale and training compute), identify the "stepping stones" required for each capability, and engineer the conditions that accelerate or control the phase transition.

🏙️ Living Software: When Apps Become Organisms

The BFF experiment demonstrates a principle: when computation is embodied — when code and data share the same substrate — life-like properties emerge spontaneously. This principle does not just apply to random bytes in a digital soup. It applies to software itself.

Traditional software is dead. A static web application is like a rock — it does what it was designed to do, nothing more, nothing less. Break it (introduce a bug) and it stays broken. It does not repair itself. It does not replicate. It does not adapt. It has no function beyond what its developers explicitly coded.

Living software is different. It is computation that maintains itself, adapts to its environment, and produces outputs that feed back into its own operation. It has function in the same sense that a kidney has function — emergent, immaterial, dependent on the arrangement of its components but not reducible to any single component.

This is what Taskade Genesis produces. Genesis apps are not static code deployed to a server. They are living systems built on Workspace DNA — a self-reinforcing loop of three components:

- Memory (Projects, databases, knowledge bases) — the instruction tape

- Intelligence (AI agents with custom tools, persistent memory, 22+ built-in capabilities) — the universal constructor

- Execution (Automations with branching, looping, filtering, 100+ integrations) — the tape copier

The parallel to Von Neumann's architecture is exact. Memory is the instruction tape. Intelligence (AI agents) is the universal constructor — it reads the instructions and builds what they describe. Execution (automations) is the tape copier — it propagates the results, triggers new cycles, and maintains the system's ongoing operation. And because all three components share the same workspace (embodied computation — code and data on the same substrate), the system is capable of genuine self-modification and adaptation.

With 150,000+ Genesis apps built on this architecture, Taskade has created what amounts to a digital ecosystem — a soup of living software systems that interact, share resources (through 100+ integrations), and evolve with use. Each app is a replicator in the BFF sense: it contains its own instructions (Memory), its own constructor (Intelligence), and its own propagation mechanism (Execution). Each interaction between apps — data flowing through integrations, agents triggering automations across workspaces — is an opportunity for symbiogenesis, where two systems combine to produce capabilities neither had alone.

This is not a metaphor. It is the same mathematical structure. And it works for the same reason BFF works: embodied computation, given sufficient time and interaction, produces life-like complexity.

Building Your Own Living Software

You do not need to understand Smoluchowski coagulation equations to build living software. The principles translate directly into practical steps:

Start with Memory — create a project with structured data, documents, and context that your agents can access. This is your instruction tape. Use Taskade's 8 project views (List, Board, Mind Map, and more) to organize information in whatever structure fits your workflow.

Add Intelligence — deploy AI agents with custom tools and persistent memory. These are your universal constructors. They read your project data, make decisions, and produce outputs. With 11+ frontier models from OpenAI, Anthropic, and Google, you can match the right model to each task.

Connect Execution — build automations that trigger based on agent outputs, schedule recurring tasks, and propagate results back into your projects. This closes the loop. Memory feeds Intelligence, Intelligence triggers Execution, Execution creates new Memory.

Let it evolve — the system improves with use. Every interaction adds information. Every automation run refines the process. Every agent response updates the knowledge base. This is the BFF mechanism operating at the level of software: symbiogenesis through interaction, complexity through combination.

The pricing makes this accessible to everyone. Taskade has a Free plan, and on annual billing Starter is $6/mo, Pro is $16/mo (10 users included), Business is $40/mo, and Enterprise is priced for custom deployments. The 7-tier RBAC system (Owner, Maintainer, Editor, Commenter, Collaborator, Participant, Viewer) ensures the right people have the right access as your living software grows.

🧬 What This Means for the Future of AI

The BFF experiment is a proof of concept for a much larger claim: intelligence is not designed. It emerges. Given the right substrate (embodied computation), the right interaction mechanism (random pairing and execution), and enough time, intelligence appears as inevitably as ice crystals form in supercooled water.

This has three major implications for the future of AI:

First, AGI may not be engineered — it may be grown. If intelligence is a phase transition, then the path to artificial general intelligence might not be about building bigger models or writing better algorithms. It might be about creating the right computational environment — the right soup — and letting intelligence emerge through symbiogenesis of simpler components. Agentic workspaces that combine multiple specialized agents with shared memory and automated execution are exactly this kind of soup.

Second, the boundary between tool and user will dissolve. In BFF, there is no distinction between the program and the data it operates on. Code is data. Data is code. As AI agents become more capable — building tools for themselves, modifying their own behavior, creating new agents to handle new tasks — the distinction between the human user and the AI tool becomes a matter of degree, not kind. We are all replicators in the same soup.

Third, safety becomes an ecological problem. If AI systems evolve through symbiogenesis rather than being designed top-down, then AI safety is not about constraining a single system. It is about managing an ecosystem. This is closer to environmental policy than to engineering specification. The tools of mechanistic interpretability — understanding what is happening inside the models — become essential for monitoring the health of the ecosystem.

Blaise Aguera y Arcas ended his ALife 2025 talk with a provocation that connects his work on artificial life to his earlier work on intelligence: Life and intelligence are the same thing. Both are computational. Both emerge from simple rules. Both increase in complexity through symbiogenesis. Both exhibit phase transitions where new capabilities appear suddenly.

If he is right — and the BFF experiment is compelling evidence that he is — then the question is not whether we will create artificial general intelligence. The question is whether we are creating the right soup.

❓ Frequently Asked Questions

What programming language does the BFF experiment use?

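BFF uses a modified version of BrainFuck, a minimalist Turing-complete language. Standard BrainFuck has 8 instructions; BFF has 10. It repurposes the input and output commands as copy instructions between two heads and adds two commands to move the second head. The key modification is that code and data share the same tape: each program is a 64-byte tape, and when two programs interact their tapes are concatenated into a single 128-byte tape that is both the code being executed and the memory it reads and writes. Computation is therefore embodied, and programs can overwrite their own instructions during execution. This mirrors how biological systems work, where DNA is both the blueprint and part of the physical structure it builds. The simplicity of the instruction set ensures that self-replication emerges from the dynamics of the system, not from the complexity of the language.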
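To make "code and data share the same tape" concrete, here is a minimal Python sketch of a BFF-style interpreter. It implements ten commands as we understand them from the published BFF description (`<` `>` move head0, `{` `}` move head1, `-` `+` change the byte under head0, `.` `,` copy bytes between the heads, `[` `]` loop). It also assumes details the article does not specify: both heads start at byte 0, head positions wrap around the tape, an unmatched bracket halts the run, and a step budget (`MAX_STEPS`, a hypothetical value) caps each interaction. `run_bff` and `interact` are illustrative names, not an official API.

```python
# Minimal BFF-style interpreter sketch. Assumptions (not from the article):
# heads start at 0, head positions wrap around the tape, byte arithmetic wraps
# modulo 256, an unmatched bracket halts execution, and MAX_STEPS is made up.
from typing import Tuple

TAPE_LEN = 64          # bytes per program
MAX_STEPS = 2 ** 13    # hypothetical per-interaction step budget

LBRACKET, RBRACKET = ord("["), ord("]")


def _match(tape: bytearray, ip: int, step: int) -> int:
    """Index of the bracket matching tape[ip], scanning by `step`; -1 if none."""
    depth, i = 0, ip
    while 0 <= i < len(tape):
        if tape[i] == LBRACKET:
            depth += step
        elif tape[i] == RBRACKET:
            depth -= step
        if depth == 0:
            return i
        i += step
    return -1


def run_bff(tape: bytearray, max_steps: int = MAX_STEPS) -> int:
    """Execute `tape` in place; code and data are the same bytes. Returns steps run."""
    n = len(tape)
    ip = head0 = head1 = steps = 0
    while 0 <= ip < n and steps < max_steps:
        op = chr(tape[ip])
        if op == "<":
            head0 = (head0 - 1) % n
        elif op == ">":
            head0 = (head0 + 1) % n
        elif op == "{":
            head1 = (head1 - 1) % n
        elif op == "}":
            head1 = (head1 + 1) % n
        elif op == "-":
            tape[head0] = (tape[head0] - 1) % 256
        elif op == "+":
            tape[head0] = (tape[head0] + 1) % 256
        elif op == ".":
            tape[head1] = tape[head0]   # copy byte under head0 to head1
        elif op == ",":
            tape[head0] = tape[head1]   # copy byte under head1 to head0
        elif (op == "[" and tape[head0] == 0) or (op == "]" and tape[head0] != 0):
            ip = _match(tape, ip, 1 if op == "[" else -1)
            if ip < 0:
                break                   # unmatched bracket: stop
        # every other byte value is a no-op
        ip += 1
        steps += 1
    return steps


def interact(a: bytes, b: bytes) -> Tuple[bytes, bytes]:
    """Concatenate two programs, run the combined tape, split it back in two."""
    tape = bytearray(a + b)
    run_bff(tape)
    return bytes(tape[:len(a)]), bytes(tape[len(a):])


# Usage: "}." moves head1 to byte 1, then copies byte 0 ('}') over byte 1,
# so the program overwrites its own '.' instruction while it runs.
a = b"}." + bytes(TAPE_LEN - 2)
b = bytes(TAPE_LEN)
new_a, new_b = interact(a, b)
print(new_a[:2])  # b'}}'
```

The tiny program `}.` changes nothing but its own second instruction. In a soup of random tapes, the same mechanism lets one program write bytes into its partner's half of the shared tape, which is the raw material for replication.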

How many interactions does it take for self-replicating programs to emerge?

The phase transition in BFF occurs after approximately 5 million random pairings, though the exact number varies between runs. What is consistent is the pattern — a long period of apparent stasis (entropy stays high, computational output near zero) followed by a sudden, sharp transition to a state dominated by self-replicating programs. This gelation pattern follows the Smoluchowski coagulation equation. The transition requires roughly 12 sequential symbiogenesis events, each building on the capabilities of the previous fusion.
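The "entropy stays high" phase can be made measurable with a compression-based proxy. The sketch below compares the byte-level Shannon entropy of a soup with how many bits per byte `zlib` still needs after compressing it; their difference (`order_score`, a name invented here) is near zero for noise and large once many tapes carry copies of the same program. Using zlib as the compressor, and an idealized "post-transition" soup made of one repeated random tape, are assumptions for illustration; this is not necessarily the exact metric the BFF authors plot.

```python
import math
import random
import zlib
from collections import Counter


def shannon_bits_per_byte(data: bytes) -> float:
    """Zeroth-order Shannon entropy of the byte histogram (max 8.0)."""
    n = len(data)
    return -sum(c / n * math.log2(c / n) for c in Counter(data).values())


def compressed_bits_per_byte(data: bytes) -> float:
    """Compressed size per byte: a rough proxy for algorithmic content."""
    return 8 * len(zlib.compress(data, 9)) / len(data)


def order_score(data: bytes) -> float:
    """Entropy the histogram sees minus what the compressor still needs.

    Near 0 for noise; large when the soup is full of repeated programs.
    """
    return shannon_bits_per_byte(data) - compressed_bits_per_byte(data)


rng = random.Random(0)
TAPE_LEN, N_TAPES = 64, 1024

# Before the transition: every tape is independent random bytes.
noise_soup = rng.randbytes(TAPE_LEN * N_TAPES)

# After (idealized): the soup is dominated by copies of one program.
replicator = rng.randbytes(TAPE_LEN)
replicated_soup = replicator * N_TAPES

for name, soup in [("noise", noise_soup), ("replicated", replicated_soup)]:
    print(name, round(shannon_bits_per_byte(soup), 2), round(order_score(soup), 2))
```

Noise scores near zero because nothing in it is compressible. The replicated soup has lower byte entropy but is almost entirely redundant, so its score jumps. A real soup near the transition sits between these extremes, because it is a mix of copies and leftover noise.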

Can the BFF experiment produce intelligence, not just replication?

The BFF experiment produces self-replication, self-repair, and environmental sensitivity, the hallmarks of life. Aguera y Arcas argues in his book What Is Intelligence? that life and intelligence are the same phenomenon viewed at different scales. If he is right, then BFF produces the precursors of intelligence, in the same way that prebiotic chemistry produced the precursors of life. Scaling up the experiment (larger tapes, richer instruction sets, more interactions) could, in principle, produce increasingly complex behaviors. Whether those behaviors would constitute intelligence depends on your definition, but the BFF results suggest that the trajectory from self-replication to intelligence is continuous.

How does BFF relate to the origin of life on Earth?

BFF is a computational model that reproduces key features of abiogenesis, the emergence of life from non-living chemistry. The random byte soup corresponds to the prebiotic chemical soup. The pairing and execution mechanism corresponds to molecular interactions in early Earth environments (hydrothermal vents, tidal pools). The progression from inanimate to viral to cellular replicators mirrors the hypothesized progression from autocatalytic RNA molecules to viruses to prokaryotic cells. The crucial shared insight is that symbiogenesis, not mutation, is the primary driver of increasing complexity.

Why does BFF work even with zero mutation rate?

Because the source of novelty is symbiogenesis, not mutation. When two tapes are paired and executed, the resulting tapes contain information from both parents plus relational information about how they interact. Those extra bits come from the thermal randomness of the random pairing — which specific tapes happen to meet, in which orientation, at which point in their evolution. Symbiogenesis selectively converts this thermal randomness into algorithmic information (useful, functional code). Mutation is one way to introduce novelty, but it is not the only way and, in BFF, it is not even necessary.

What are the lockpick distribution and the 12 stepping stones?

Aguera y Arcas found that the phase transition requires approximately 12 sequential functional innovations, each building on the previous ones — like picking a lock where each pin must be set in roughly the right order. The "lockpick distribution" describes the probability of achieving each step given that all previous steps have been completed. This explains why the phase transition is sharp: most of the time is spent waiting for the 12 steps to complete in sequence, but once the last step occurs, the transition is nearly instantaneous. You cannot shortcut the process by jumping directly from step 1 to step 12.
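The stepping-stone argument is easy to explore with a toy model. The sketch below assumes each of the 12 steps succeeds independently with the same small probability on every interaction (`P_STEP`, a made-up value) and that step i cannot begin until step i-1 is done. It is a generic sequential-waiting model, not the lockpick distribution Aguera y Arcas actually fit to BFF runs.

```python
import math
import random
from statistics import mean, pstdev


def geometric_wait(p: float, rng: random.Random) -> int:
    """Interactions until the first success when each succeeds with prob p."""
    u = 1.0 - rng.random()                  # uniform on (0, 1]
    return int(math.log(u) / math.log(1.0 - p)) + 1


def time_to_unlock(step_probs, rng: random.Random) -> int:
    """Total interactions to set every pin, strictly in order.

    Step i cannot start until steps 0..i-1 are done, so the waits add up.
    """
    return sum(geometric_wait(p, rng) for p in step_probs)


rng = random.Random(42)
P_STEP = 0.01     # hypothetical per-interaction chance of setting the next pin
N_STEPS = 12

runs = [time_to_unlock([P_STEP] * N_STEPS, rng) for _ in range(2000)]
print("mean interactions:", round(mean(runs)), "analytic:", round(N_STEPS / P_STEP))
print("relative spread:", round(pstdev(runs) / mean(runs), 2))

# Shortcut: all 12 pins set by chance in a single interaction.
print("one-shot probability:", P_STEP ** N_STEPS)
```

Because the waits add, the expected total is about 12 / P_STEP interactions, and the spread of the total relative to its mean is roughly 1 / sqrt(12) of a single step's, so the wait is long but fairly predictable. Setting all 12 pins at once has probability P_STEP ** 12, which is why a shortcut is not a realistic route in this model.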

How does the Smoluchowski coagulation equation apply to BFF?

The Smoluchowski coagulation equation describes how particles in solution aggregate over time. In BFF, the "particles" are functional code fragments distributed across different tapes. Aggregation occurs when two tapes interact and their functional fragments combine into a more complex program. The gelation point — where the system transitions from a soup of independent fragments to a network dominated by self-replicating programs — corresponds exactly to the gelation point in polymer chemistry, where individual polymer chains suddenly cross-link into a connected network.
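Smoluchowski's equation tracks how the concentration of clusters of each size changes as clusters merge at a rate set by a kernel K(i, j). When the kernel grows like the product of the sizes, K(i, j) proportional to i * j, the equation predicts gelation: a single giant cluster appears after finite time. The particle-level version of that process is the classic random-graph model, which the sketch below simulates with a union-find. Treating BFF's functional fragments as abstract particles with a multiplicative kernel is an assumption for illustration, not a model fitted to BFF data.

```python
import random


def largest_cluster_fraction(n: int, merges: int, seed: int = 0) -> float:
    """Stochastic coagulation with a multiplicative kernel (K(i, j) ~ i * j).

    Each merge picks two particles uniformly at random and joins their
    clusters, so clusters of sizes i and j meet at a rate proportional to i * j.
    Returns the largest cluster's share of all particles.
    """
    rng = random.Random(seed)
    parent = list(range(n))
    size = [1] * n

    def find(x: int) -> int:
        while parent[x] != x:
            parent[x] = parent[parent[x]]   # path halving
            x = parent[x]
        return x

    largest = 1
    for _ in range(merges):
        a, b = find(rng.randrange(n)), find(rng.randrange(n))
        if a == b:
            continue                        # already in the same cluster
        if size[a] < size[b]:
            a, b = b, a
        parent[b] = a                       # union by size
        size[a] += size[b]
        largest = max(largest, size[a])
    return largest / n


N = 20_000
for ratio in (0.25, 0.45, 0.55, 0.75, 1.0):
    frac = largest_cluster_fraction(N, int(ratio * N))
    print(f"merges/N = {ratio:.2f}  largest cluster = {frac:.3f}")
```

In this setup the largest cluster stays a negligible fraction of the system until the number of merge attempts reaches about N / 2, then grows rapidly; by N attempts it holds most of the particles. That threshold is the gelation point of this toy model.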

What is the difference between BFF and genetic algorithms?

Genetic algorithms use explicit fitness functions, selection pressure, crossover operators, and mutation rates — all designed by humans to optimize toward a specific goal. BFF has none of these. There is no fitness function. There is no selection operator. There is no explicit crossover or mutation. The only mechanism is random pairing and execution. Self-replication emerges because replicators, by definition, make more copies of themselves, giving them a numerical advantage in the soup. The fact that BFF produces complex life-like behavior without any of the standard evolutionary computing machinery is precisely what makes it significant — it demonstrates that life can emerge from physics alone.
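The contrast with a genetic algorithm shows up directly in the main loop. In the sketch below, an epoch is nothing but shuffle, pair, interact: no fitness function, no selection step, no mutation. `toy_interact` is a hypothetical stand-in for BFF's concatenate-execute-split step in which a tape starting with "R" simply overwrites its partner with a copy of itself, and the pairing scheme is also an assumption. The soup is seeded with one replicator, so this shows how a replicator spreads, not how one arises from noise.

```python
import random


def soup_epoch(soup: list, interact, rng: random.Random) -> None:
    """Shuffle the soup, pair neighbours, and let each pair interact in place.

    There is no fitness function, no selection step, and no mutation here:
    only random pairing followed by whatever `interact` does.
    """
    order = list(range(len(soup)))
    rng.shuffle(order)
    for i, j in zip(order[::2], order[1::2]):
        soup[i], soup[j] = interact(soup[i], soup[j])


def toy_interact(a: str, b: str):
    """Hypothetical stand-in for 'concatenate, execute, split'.

    A tape starting with "R" copies itself over its partner; others are inert.
    """
    if a.startswith("R"):
        return a, a
    if b.startswith("R"):
        return b, b
    return a, b


rng = random.Random(7)
soup = ["R-copier"] + ["inert"] * 999
history = []
for epoch in range(20):
    history.append(sum(t.startswith("R") for t in soup))
    soup_epoch(soup, toy_interact, rng)
history.append(sum(t.startswith("R") for t in soup))
print(history)
```

The replicator count roughly doubles each epoch until it saturates the soup, even though nothing in the loop scores or selects tapes. The numerical advantage comes entirely from copying.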

🚀 Build Living Software with Taskade Genesis

The BFF experiment proves that computation, left to its own devices, produces life. Taskade Genesis lets you harness that same principle — not by waiting for millions of random interactions, but by giving you the tools to build living software from the first prompt.

Start building at taskade.com/create. Deploy AI agents that think. Connect automations that execute. Let Workspace DNA (Memory + Intelligence + Execution) do what embodied computation does best: evolve, adapt, and grow more capable with every interaction.

150,000+ apps built. 11+ frontier models. 100+ integrations. One workspace where software comes alive.

Create your first Genesis app →

Related Reading

- What Is Artificial Life? How Intelligence Emerges from Code

- What Is Intelligence? From Neurons to AI Agents

- What Is Grokking in AI? When Models Suddenly Learn

- How Do Large Language Models Work? Transformers Explained

- What Is Mechanistic Interpretability?

- What Is AI Safety? Complete Guide

- What Are AI Agents?

- Multi-Agent Systems: The Complete Guide

- Agentic Workspaces: The Future of Work

- They Generate Code. We Generate Runtime.

- The History of OpenAI and ChatGPT

- The History of Anthropic and Claude

- Bronx Science and the Origins of AI

- Taskade AI Agents

- Taskade Genesis Apps

- Taskade Automations

- Taskade Community