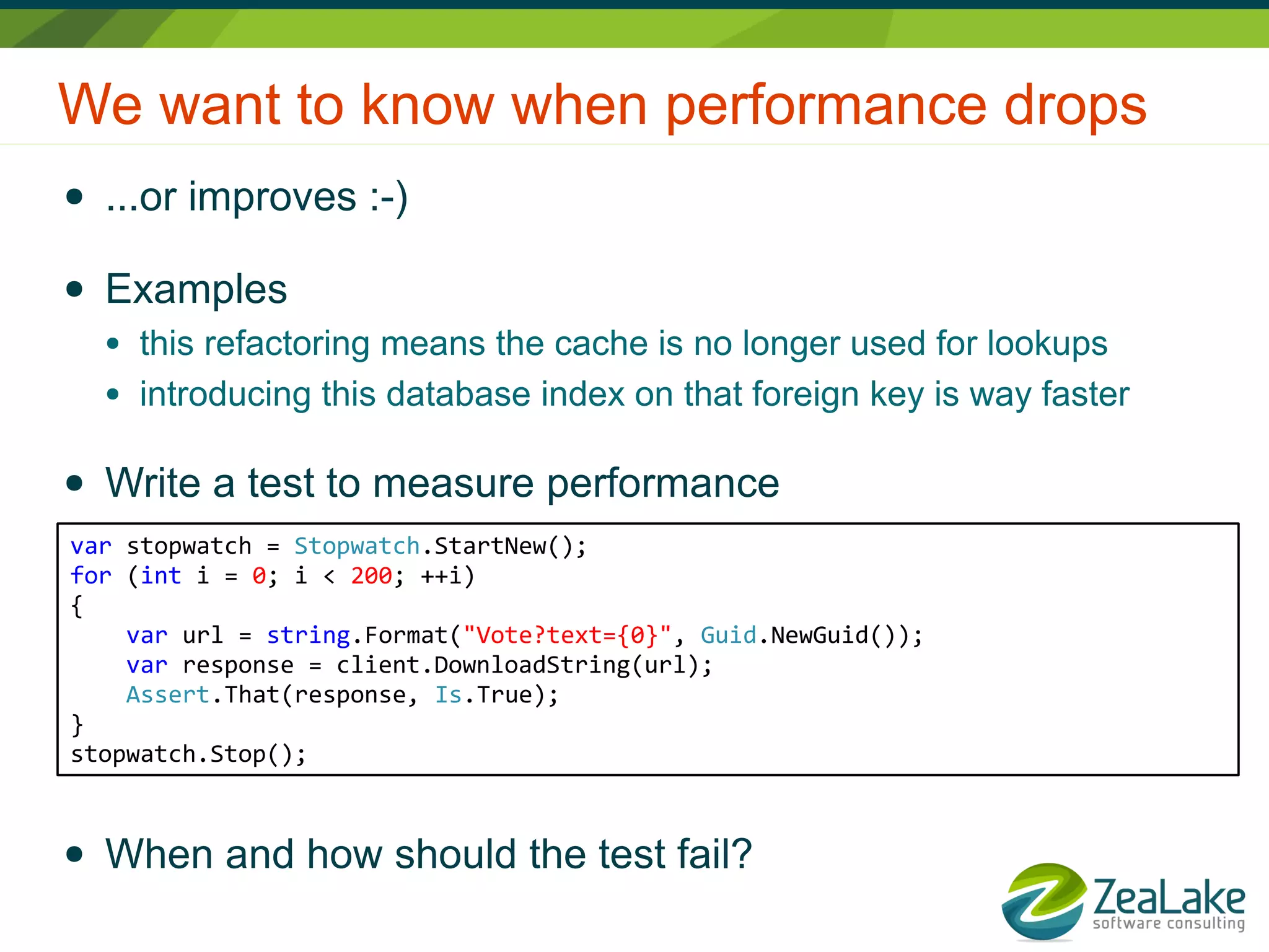

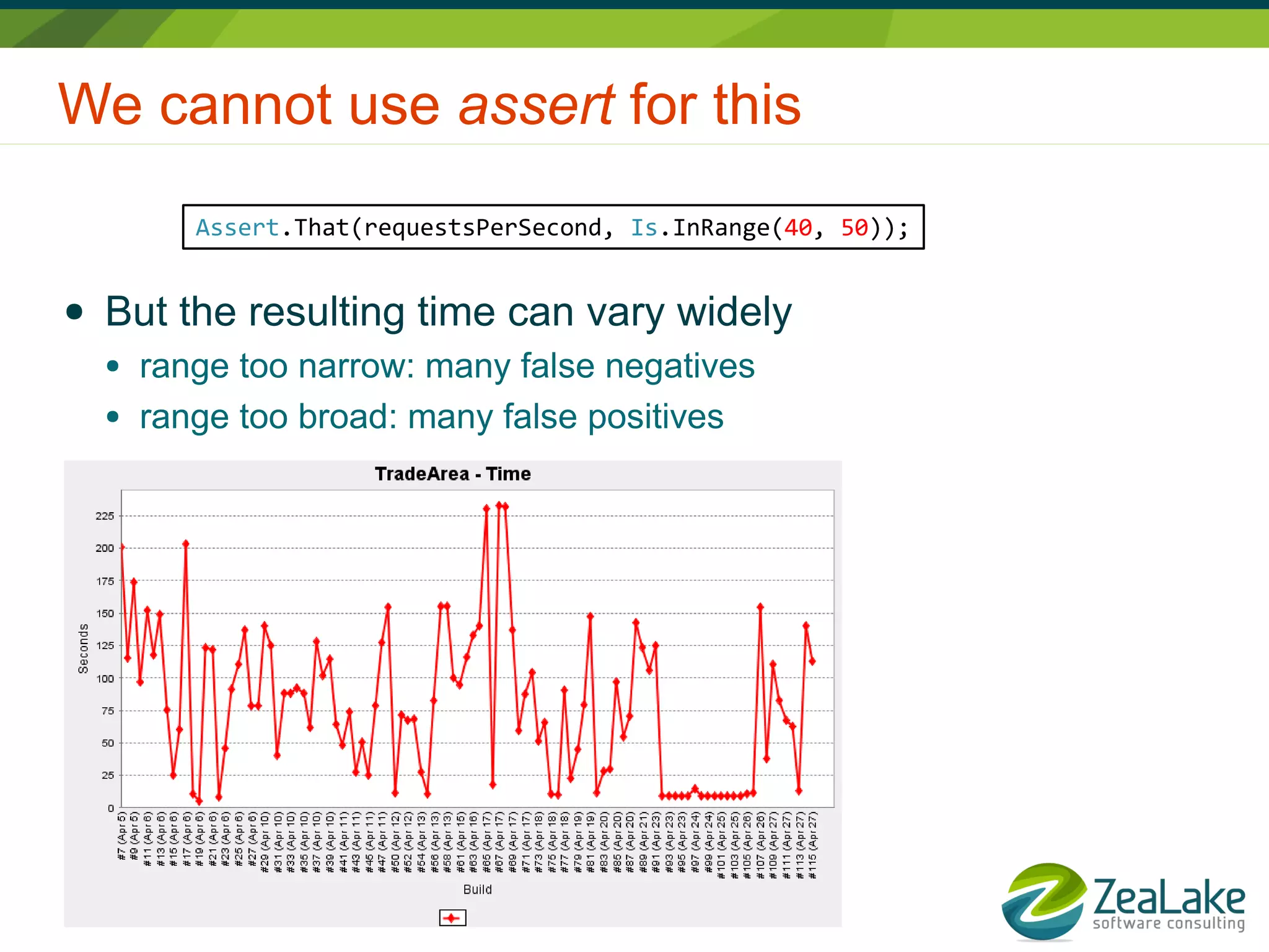

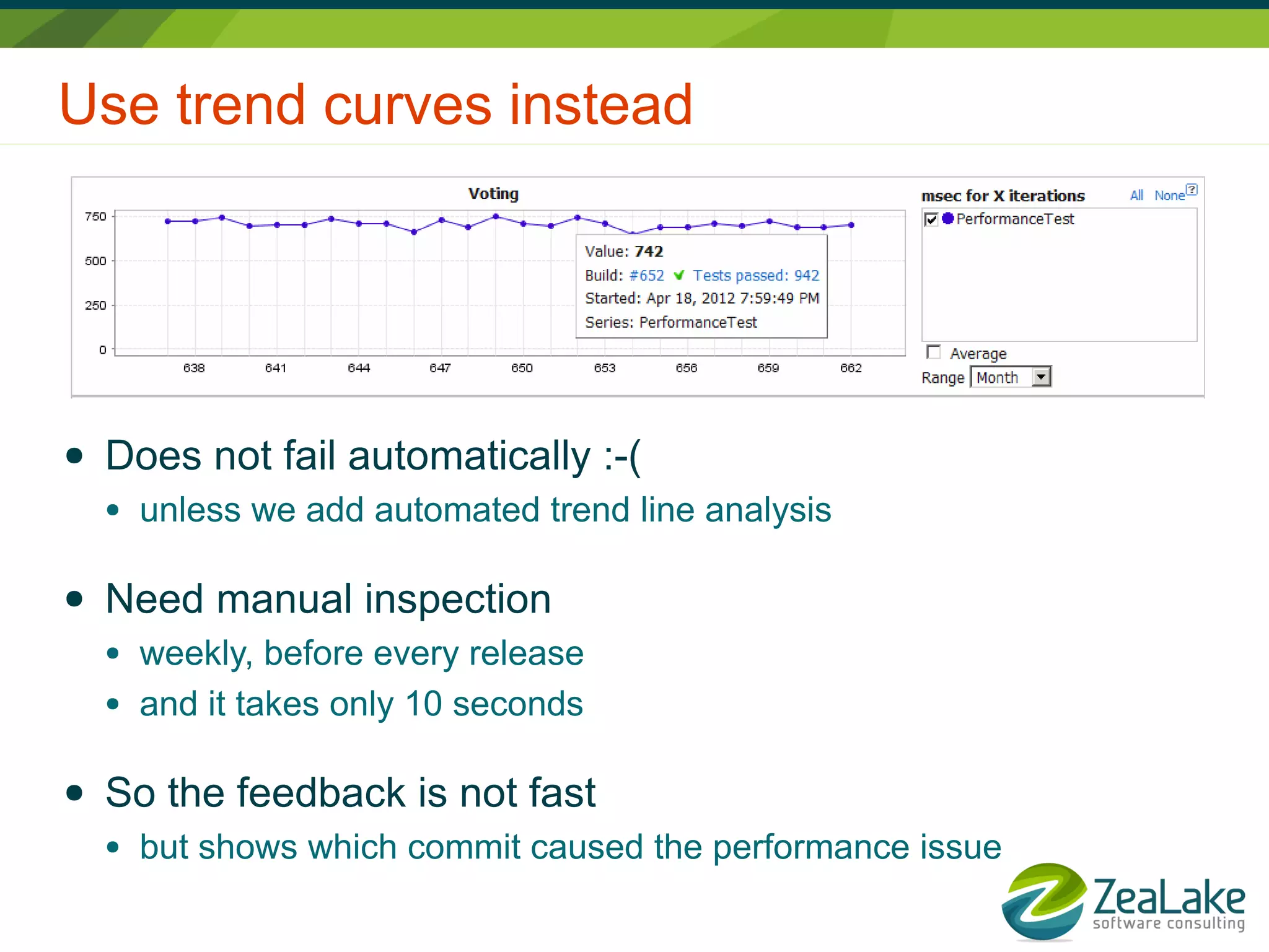

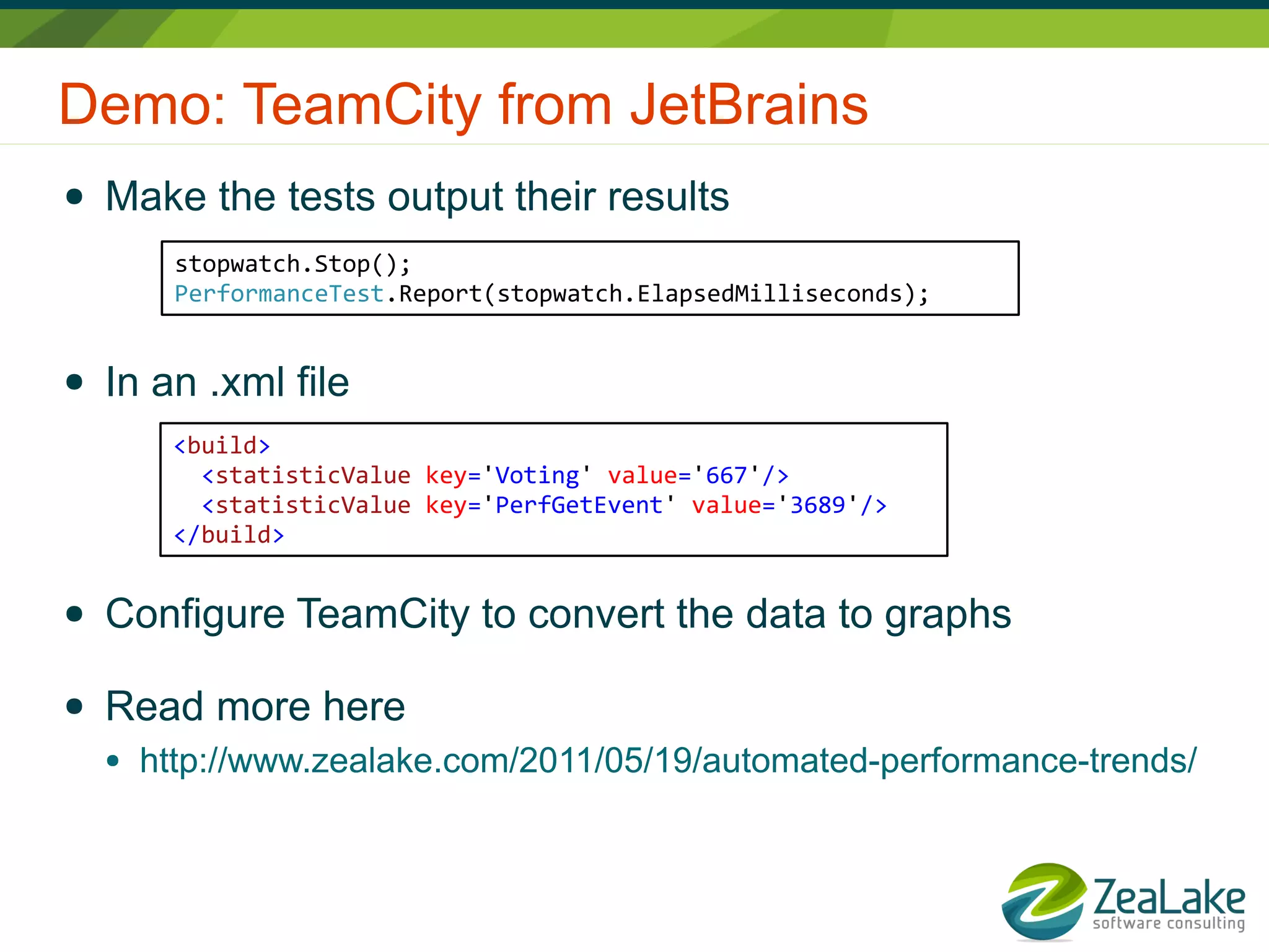

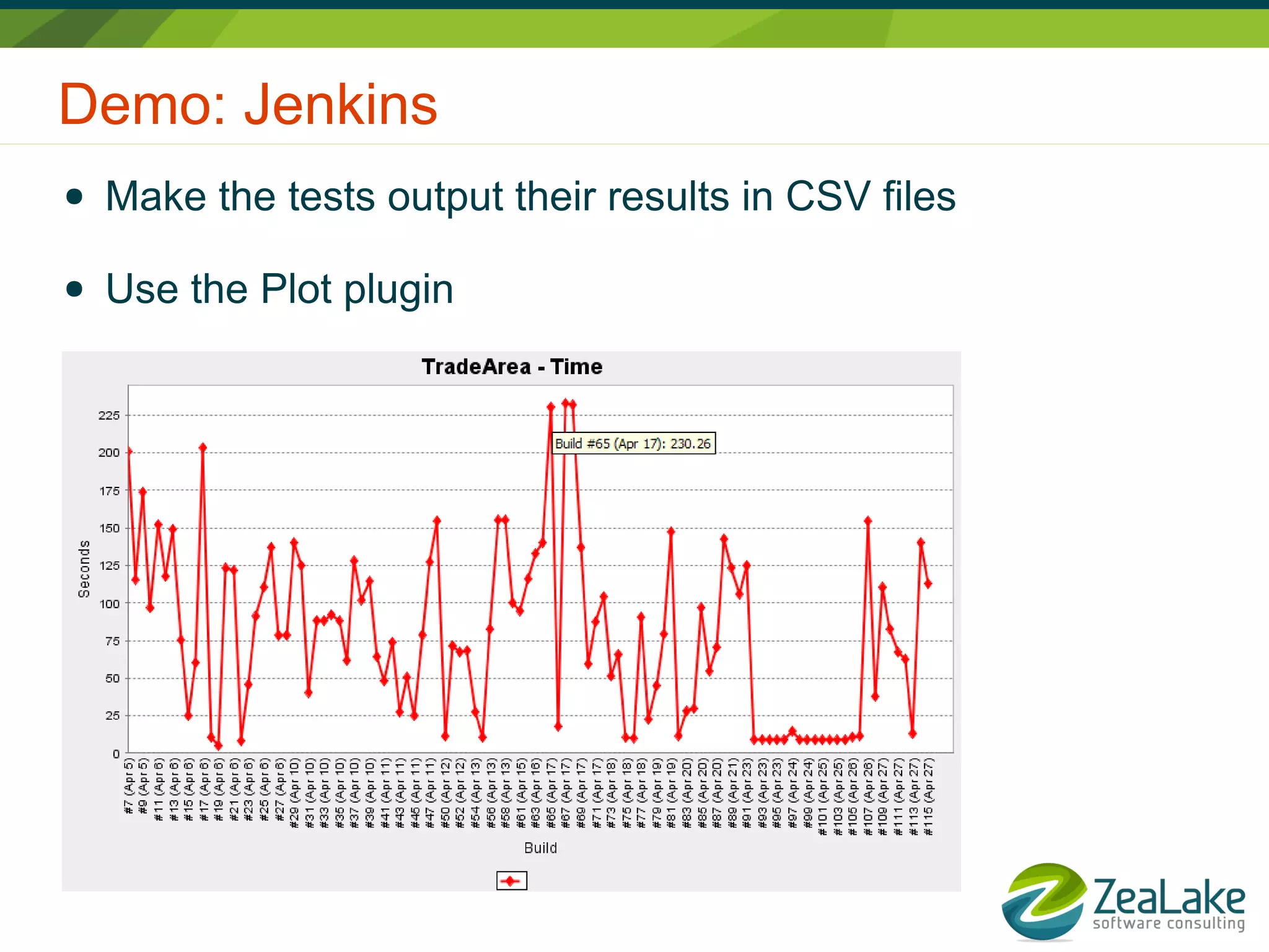

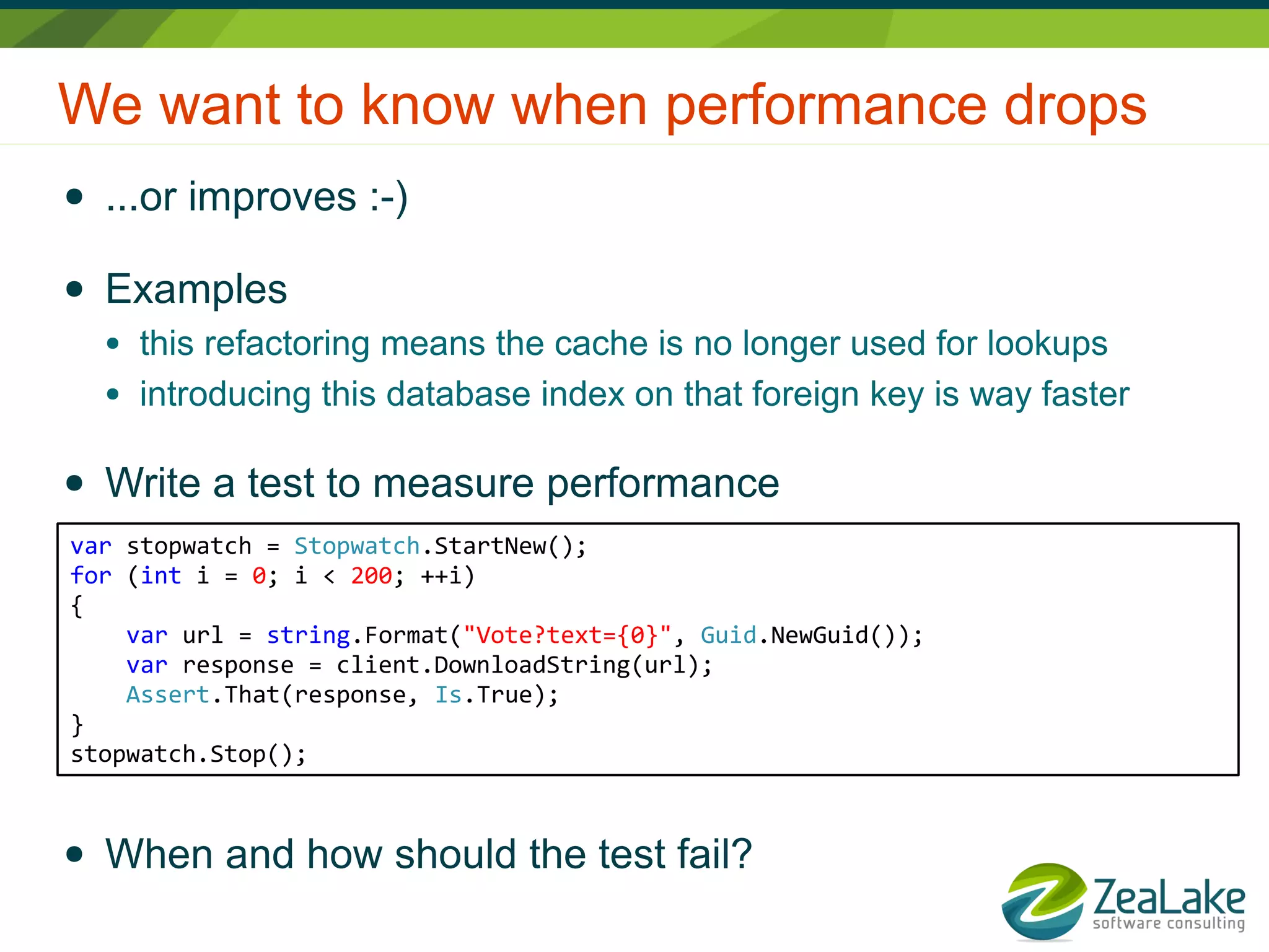

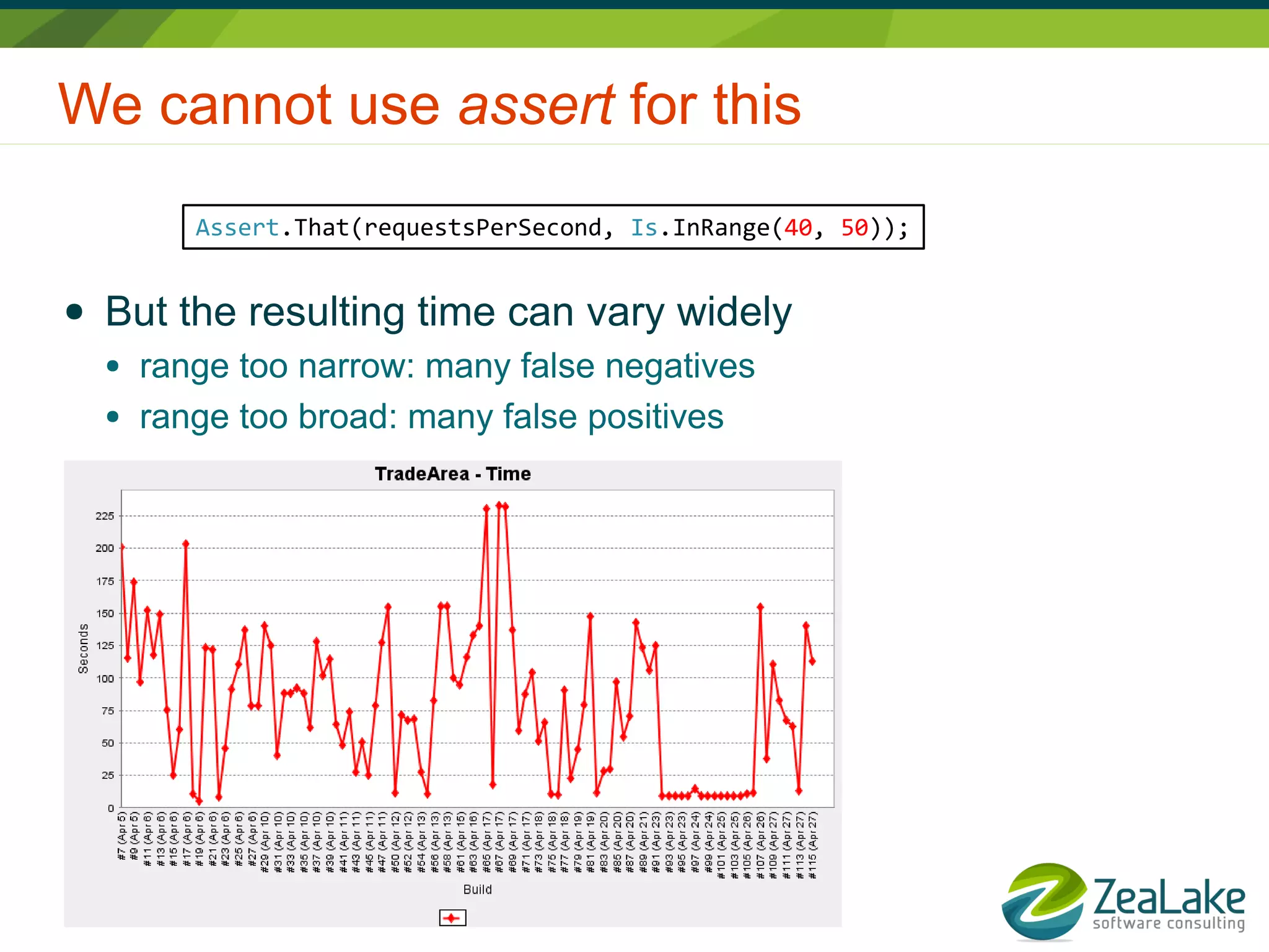

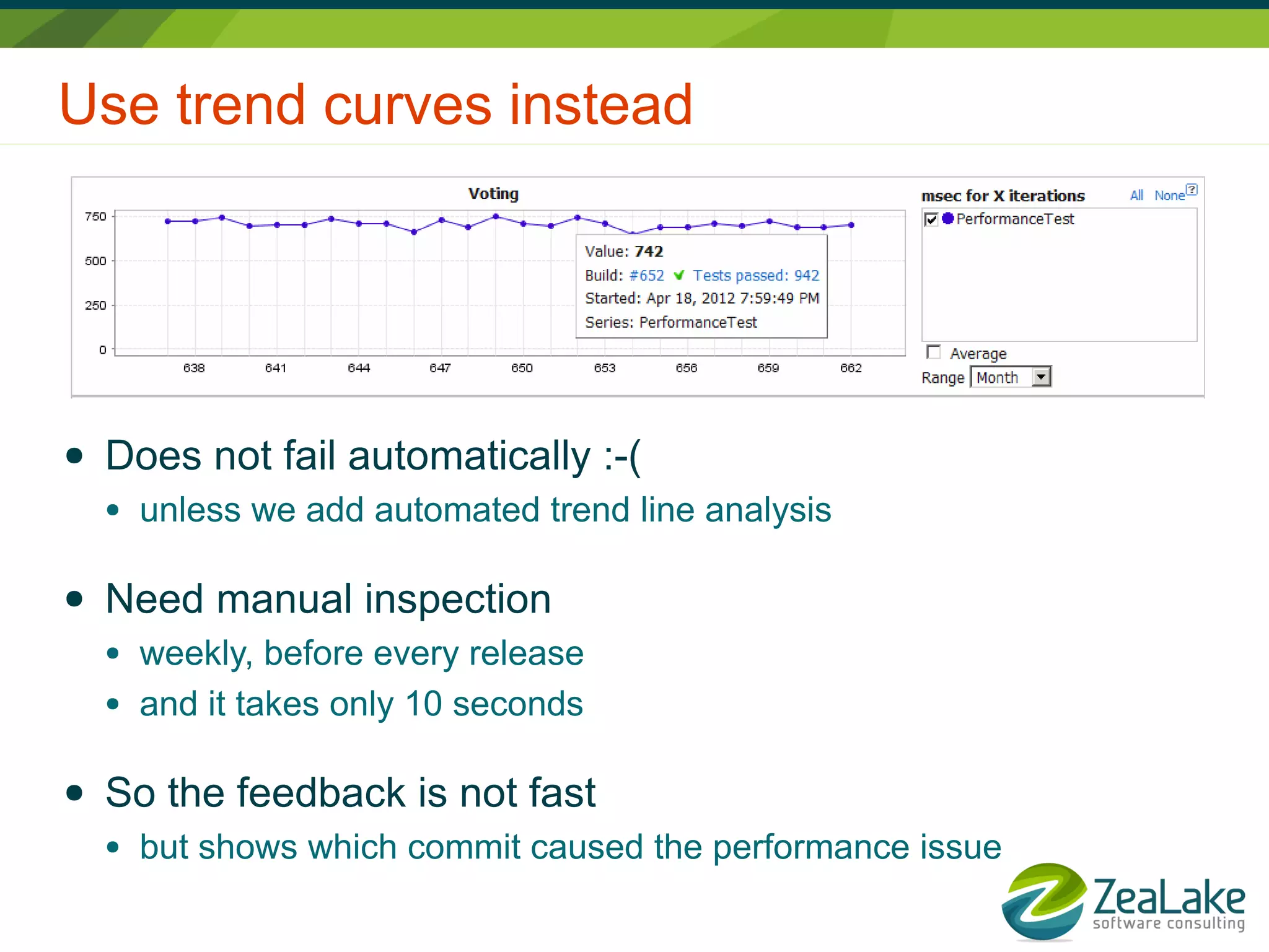

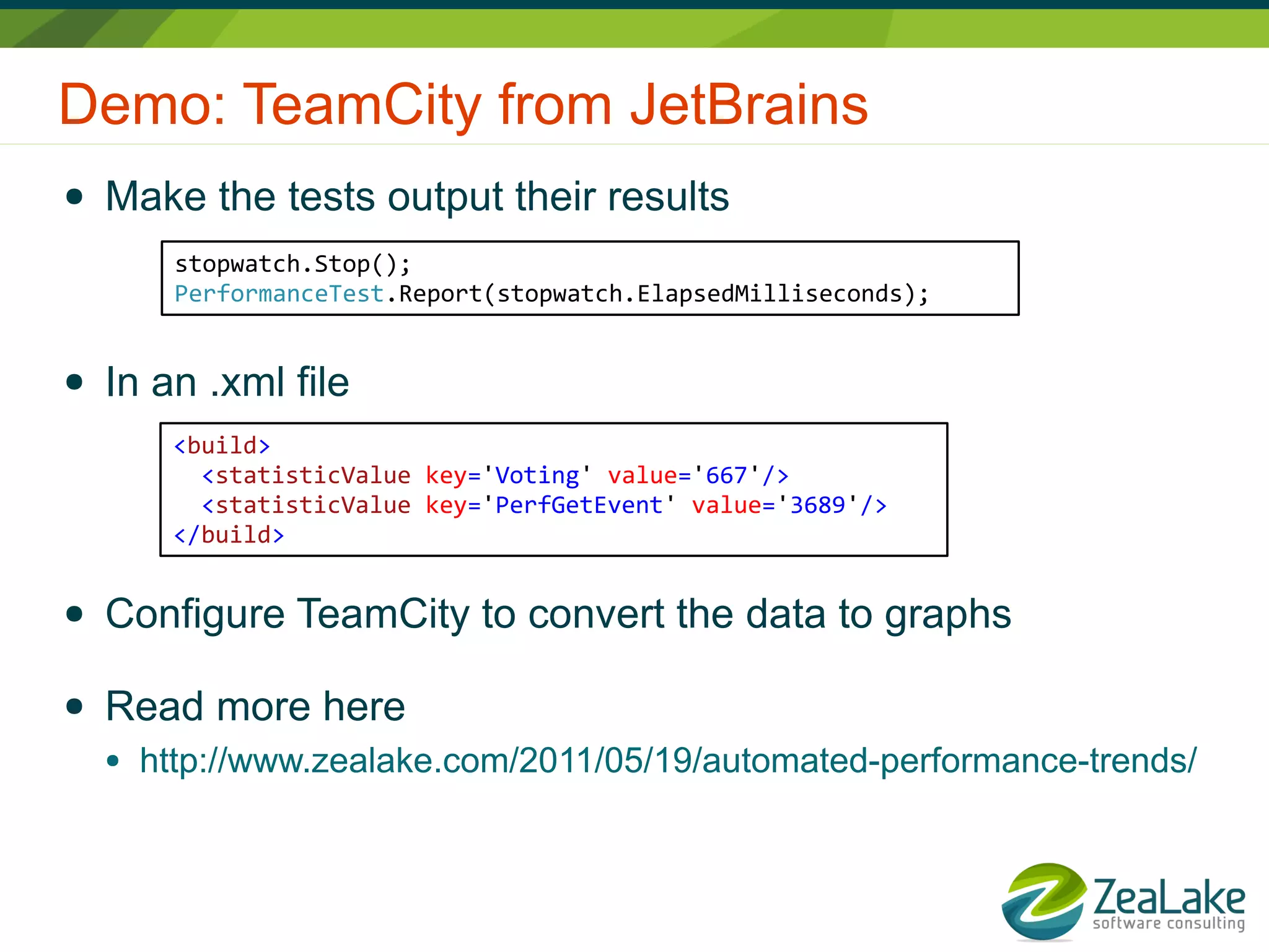

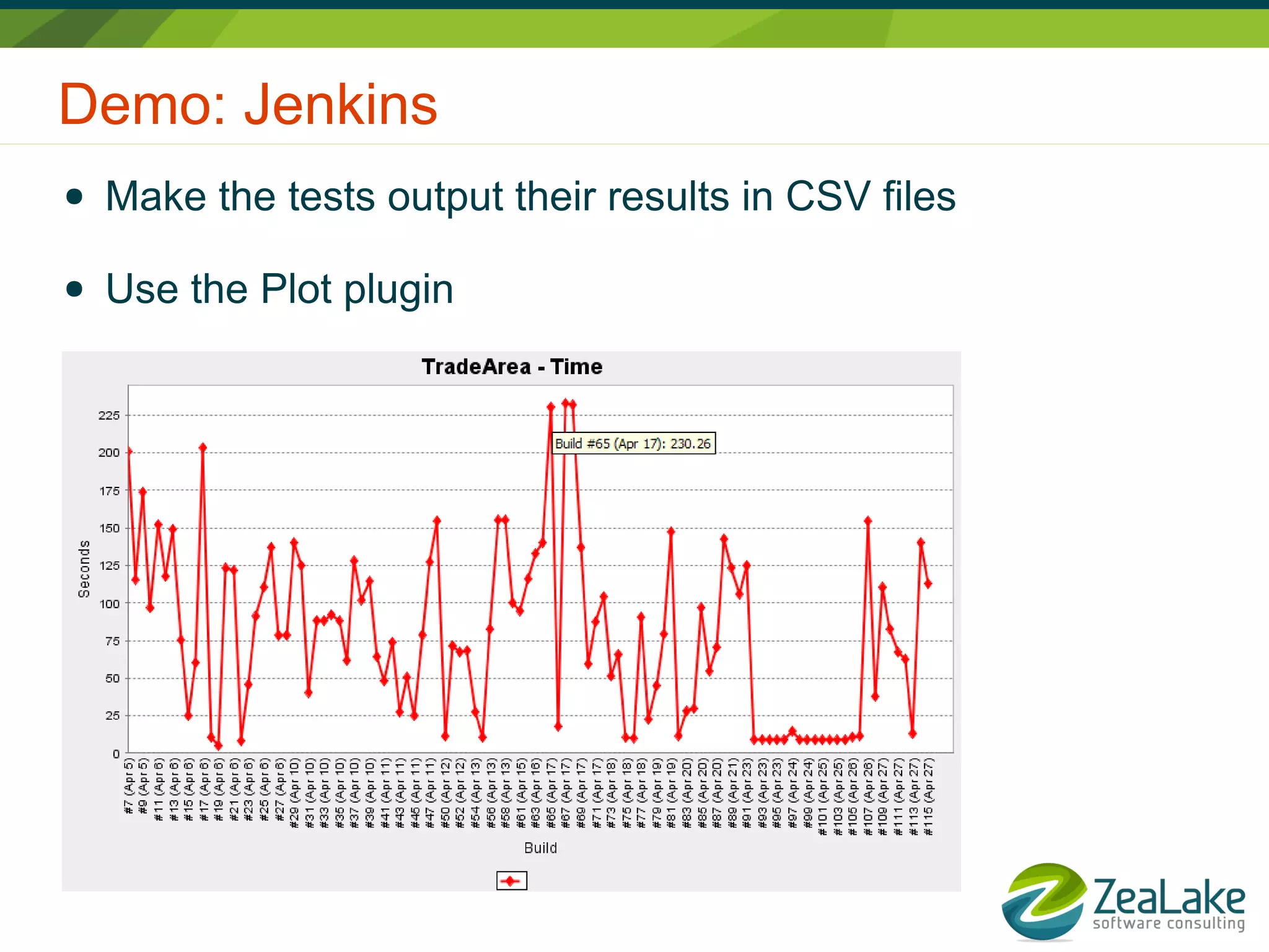

Lars Thorup is a software developer and the founder of Zealake, whose work focuses on automated performance testing and agile methodologies. The presentation discusses strategies for measuring performance, including running automated performance tests on continuous integration servers such as TeamCity and Jenkins, and emphasizes trend analysis as a way to identify performance issues. It highlights the need to inspect performance metrics continually and describes how performance results are reported.
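
As a rough illustration of what trend analysis over automated performance measurements might look like, the sketch below flags builds whose timing is noticeably worse than the recent baseline. It is not taken from the presentation: the function name `find_regressions`, the `window` and `tolerance` parameters, and the sample timings are all hypothetical, and a real setup would read the measurements from the build server's history rather than a hard-coded list.

```python
from statistics import median


def find_regressions(timings, window=5, tolerance=0.20):
    """Return indices of builds that look like performance regressions.

    A build is flagged when its timing exceeds the median of the
    preceding `window` builds by more than `tolerance` (a fraction).
    Both parameters are illustrative assumptions, not recommended values.
    """
    flagged = []
    for i in range(window, len(timings)):
        # Median of the previous builds is less sensitive to one noisy run
        # than the mean would be.
        baseline = median(timings[i - window:i])
        if timings[i] > baseline * (1 + tolerance):
            flagged.append(i)
    return flagged


# Hypothetical timings in milliseconds, one per build, oldest first.
history = [102.0, 99.0, 101.0, 100.0, 98.0, 103.0, 131.0, 129.0]
print(find_regressions(history))  # → [6, 7]
```

Comparing against a rolling median rather than a fixed threshold means the check follows gradual, accepted changes in the baseline while still surfacing sudden jumps. Note that slow drift can go unnoticed this way, which is one reason the measurements still need regular human inspection.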