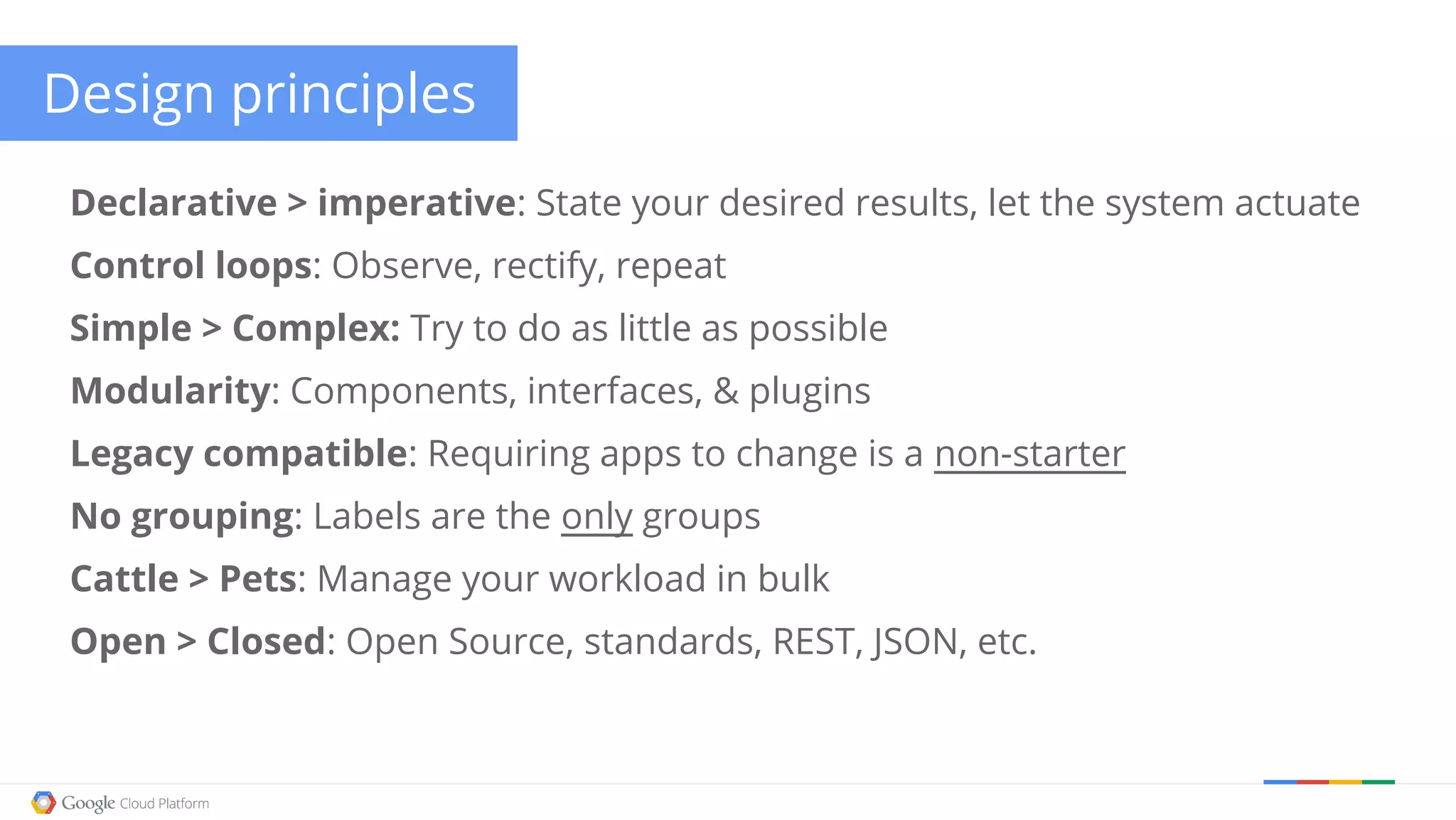

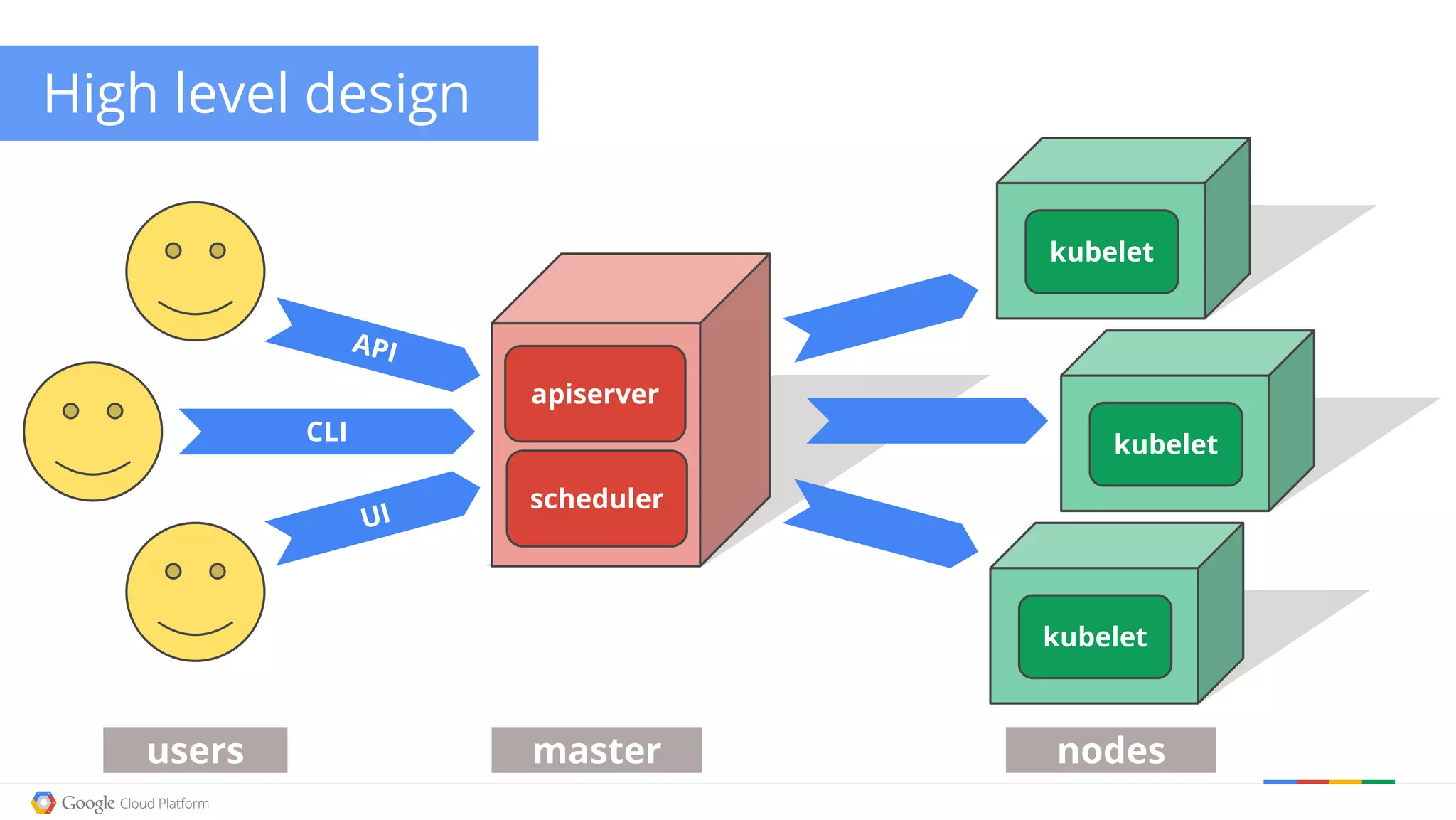

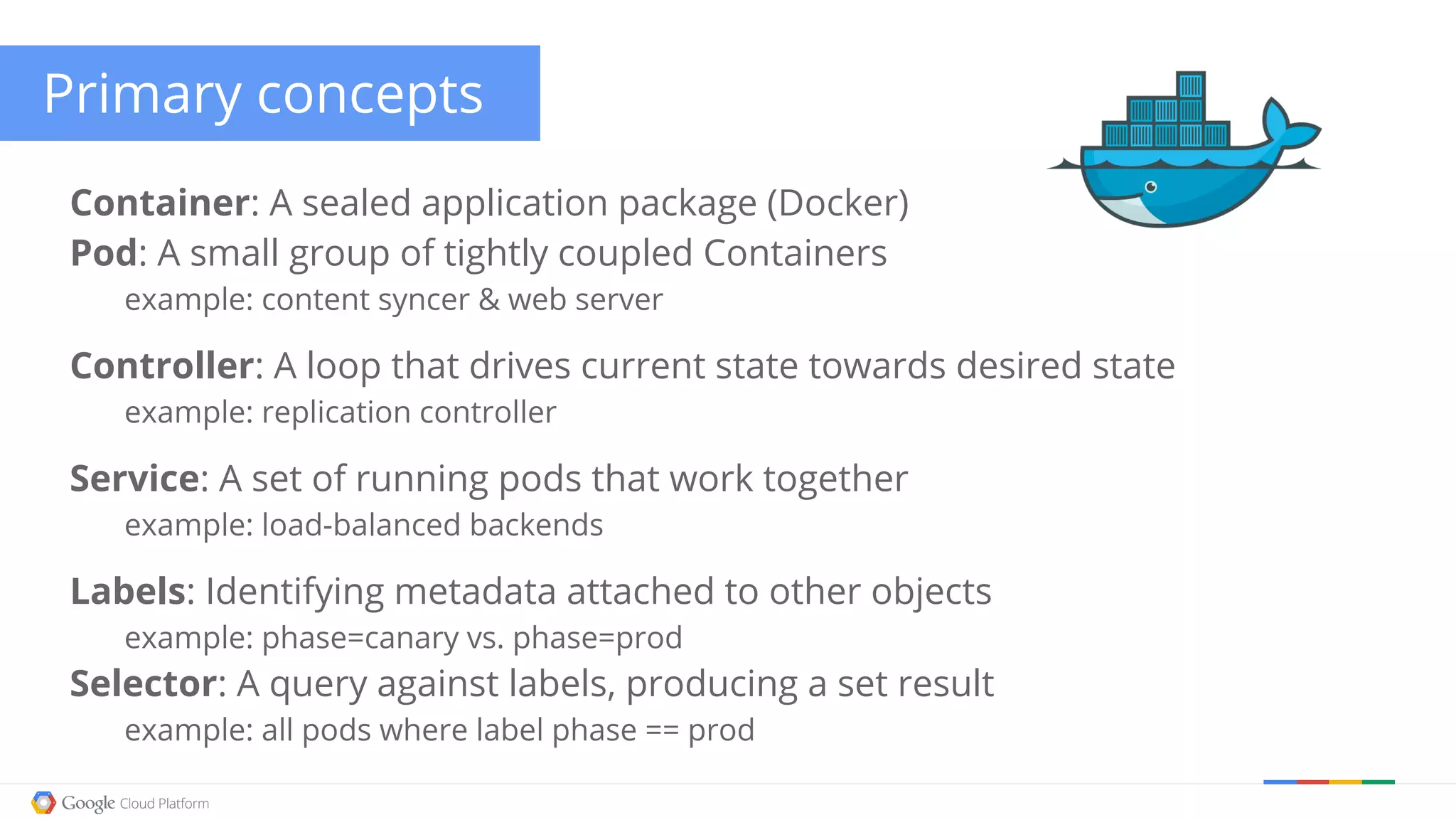

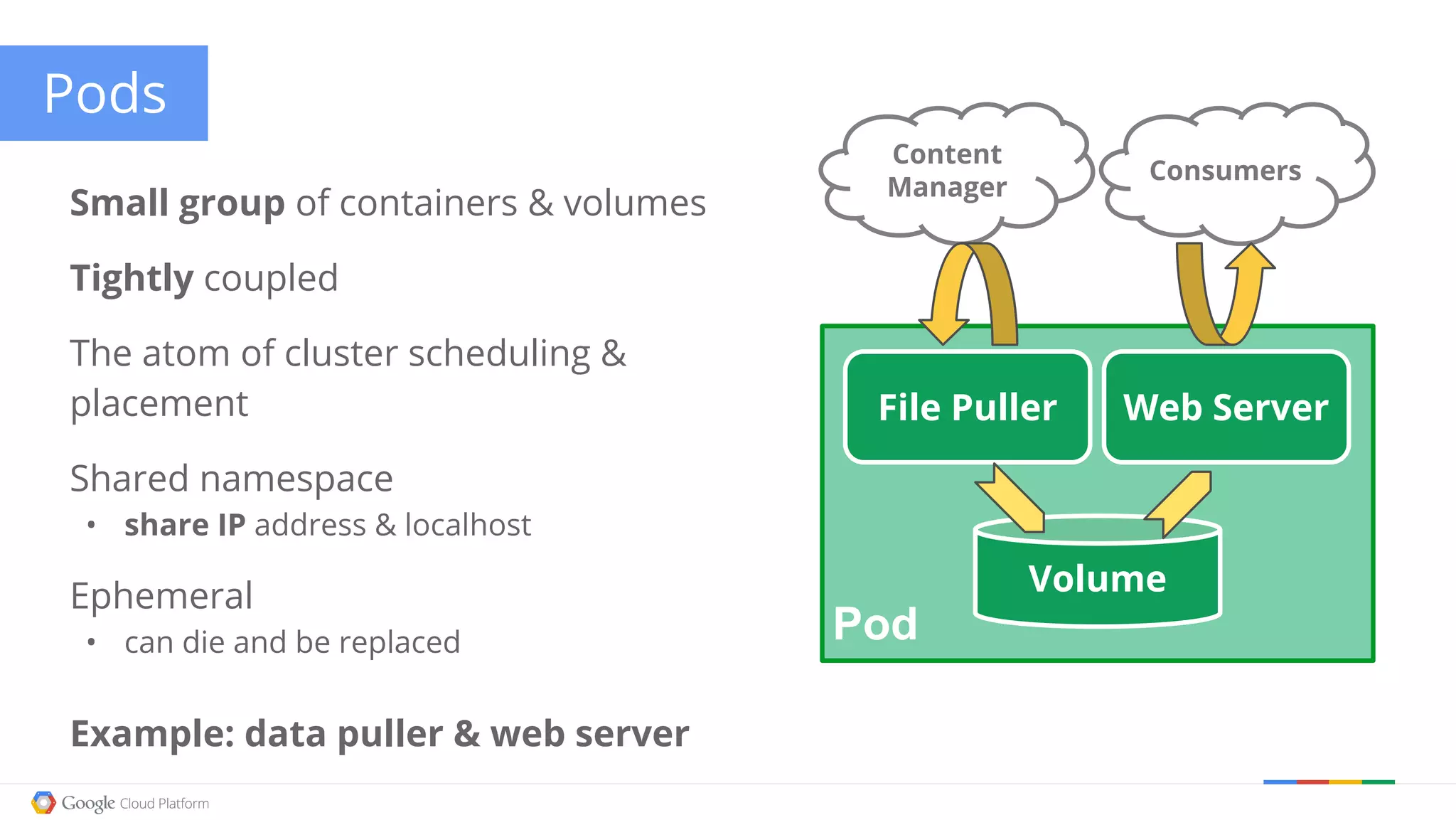

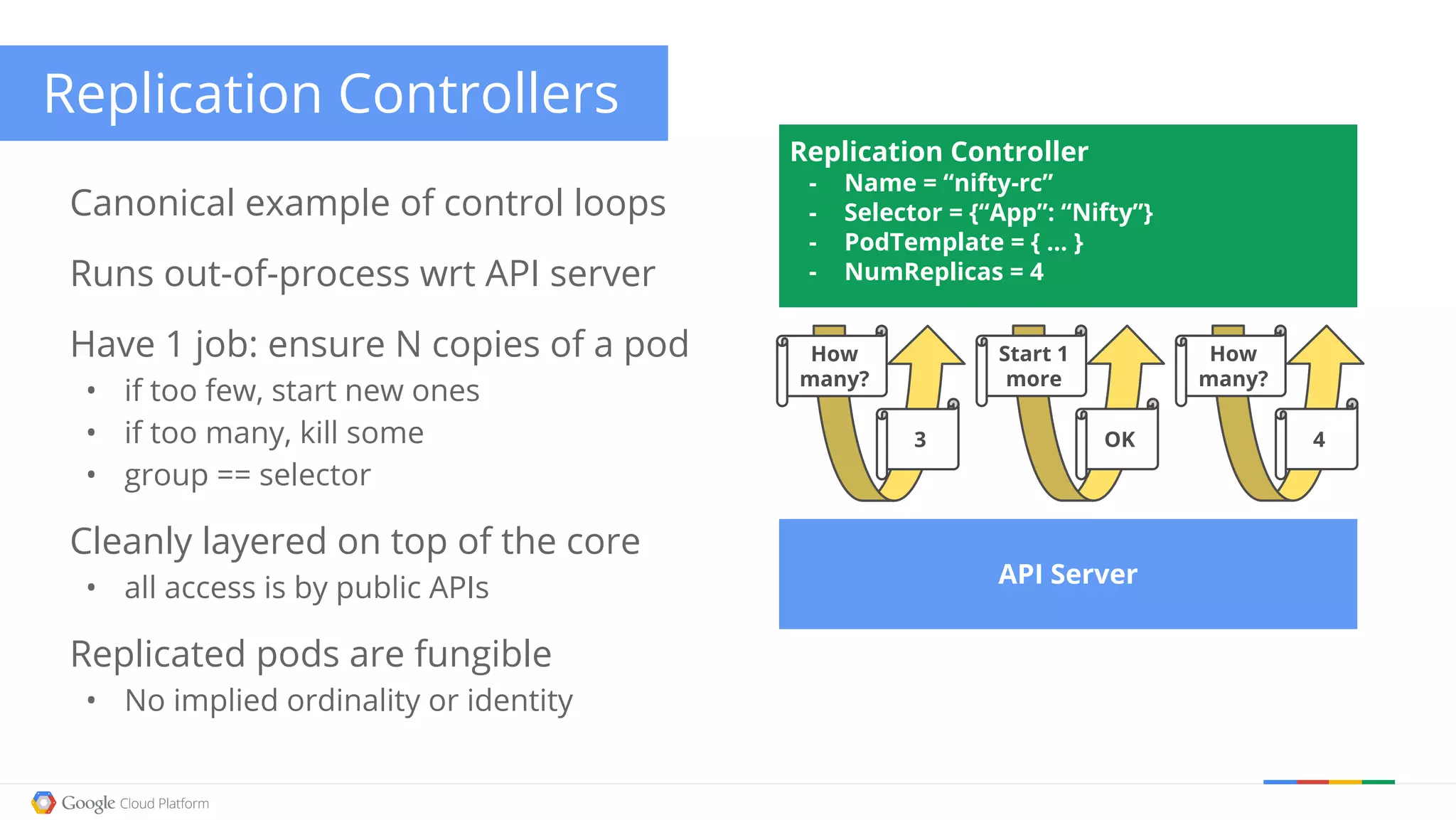

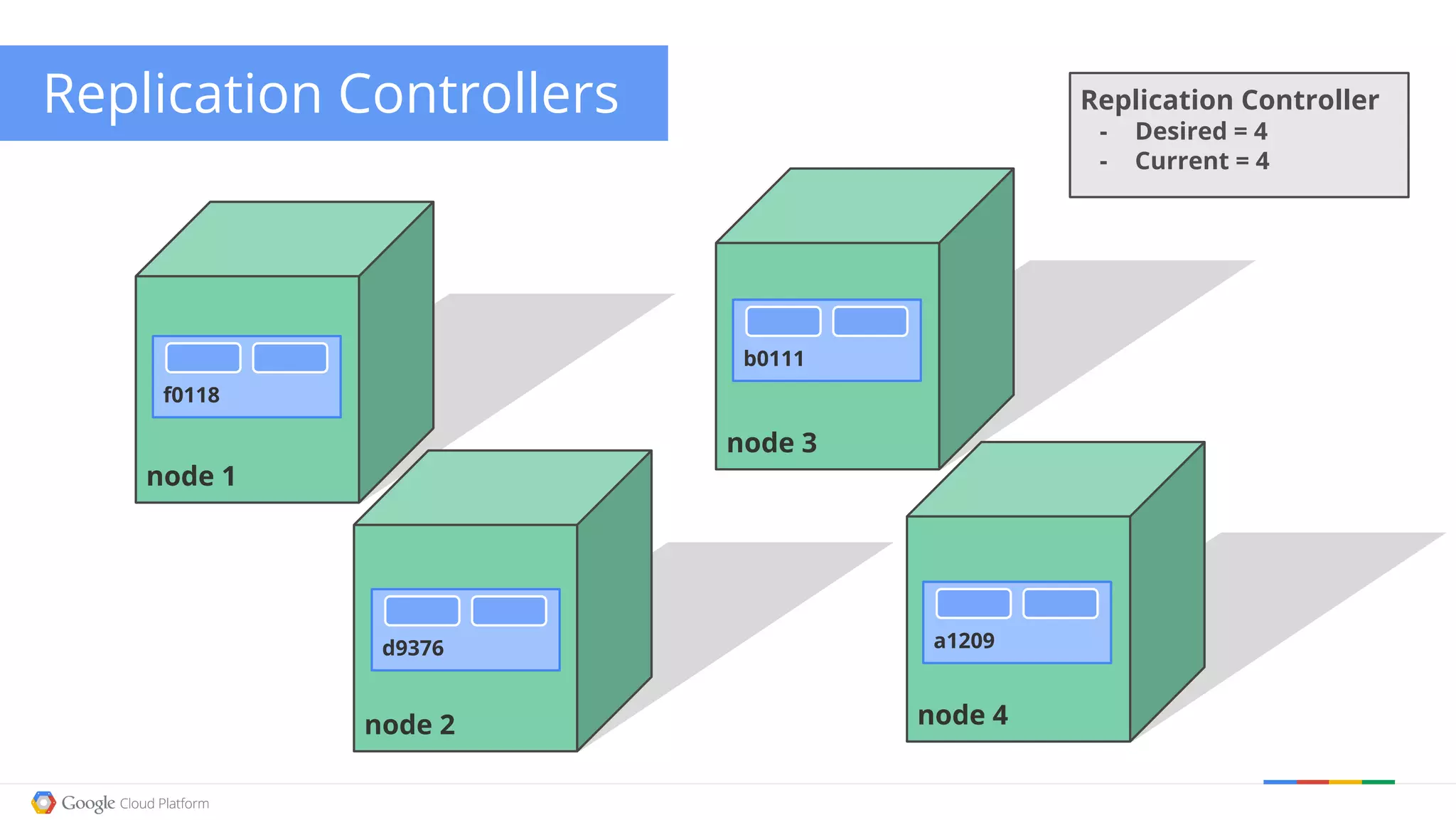

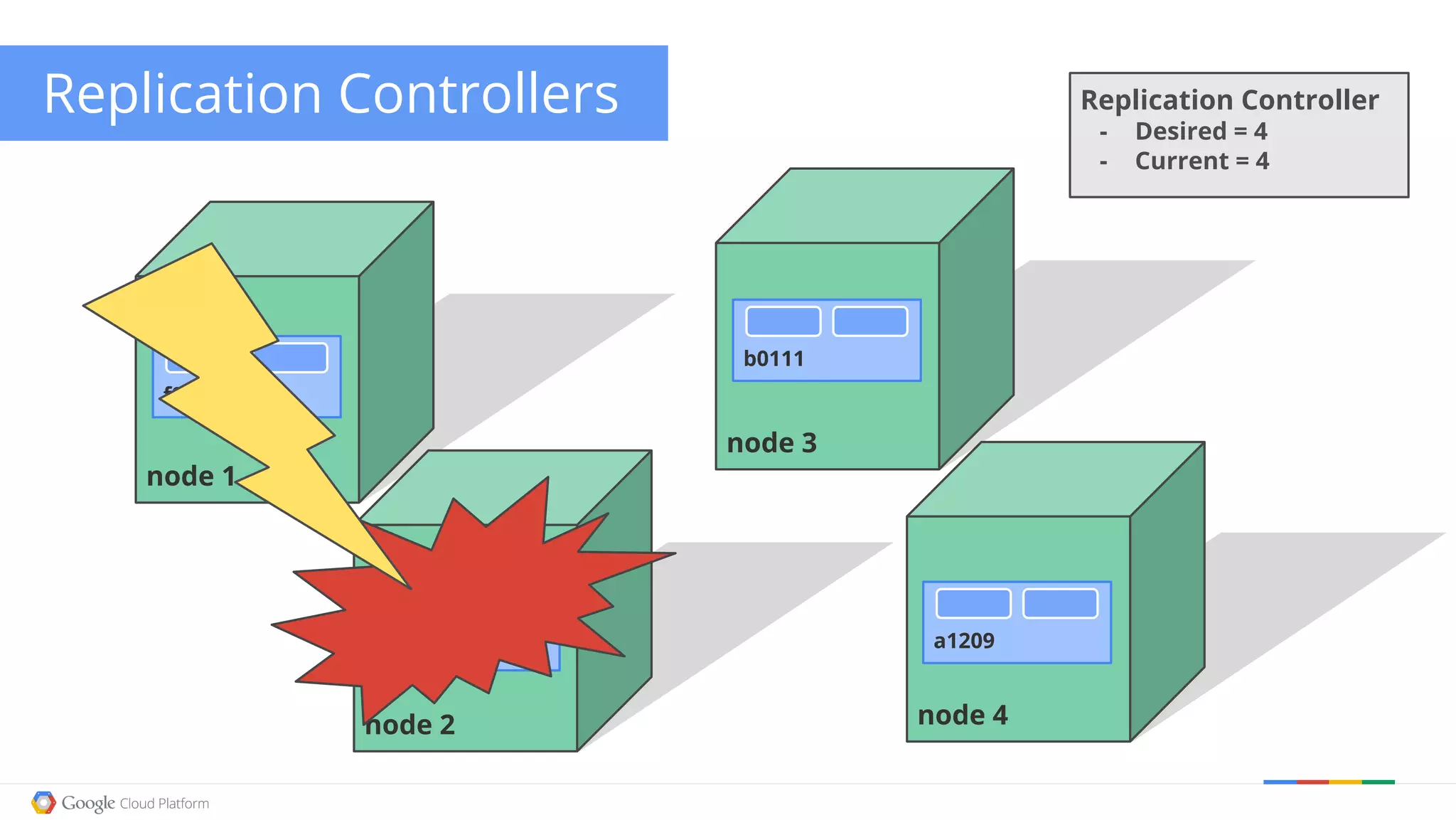

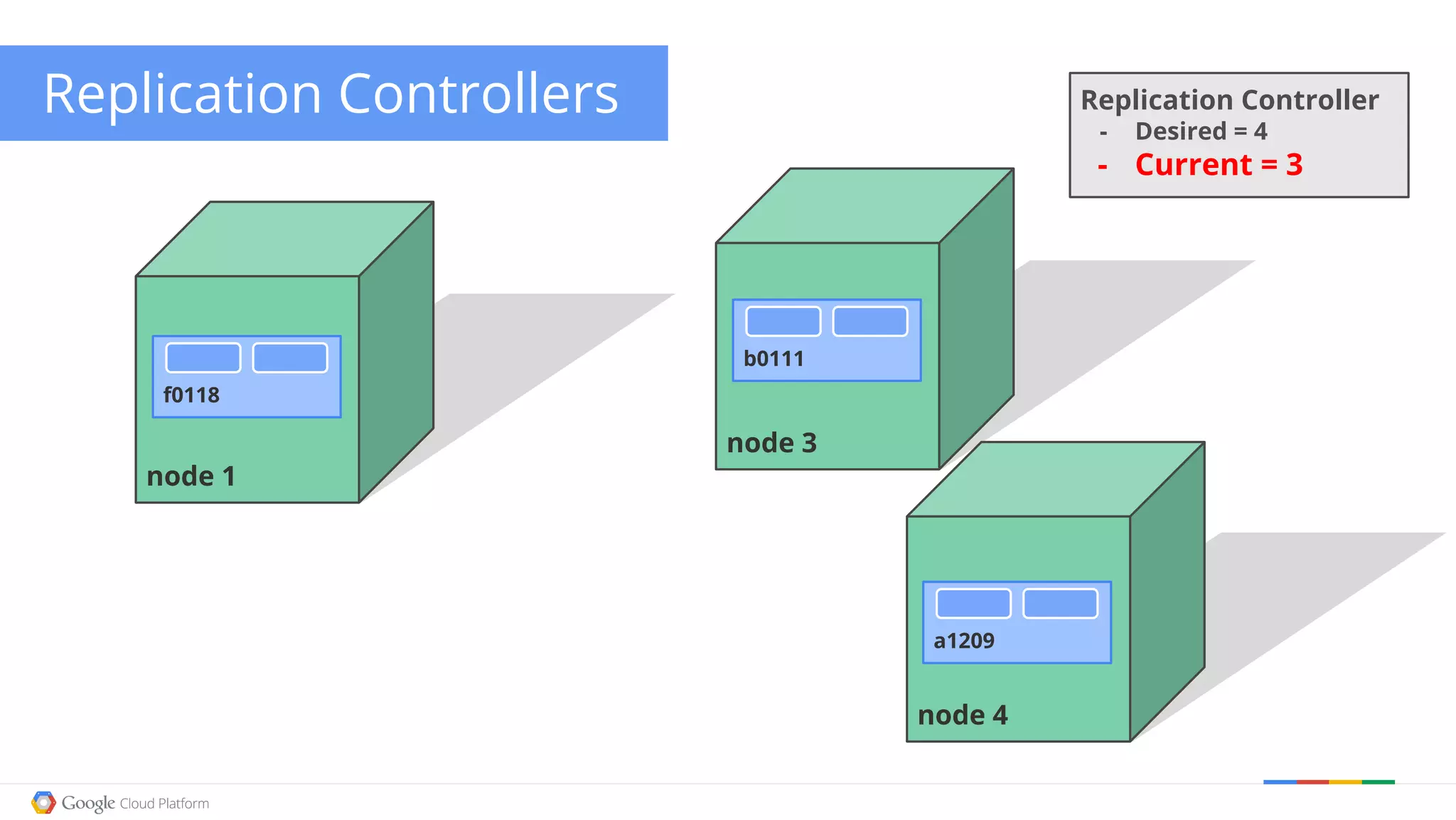

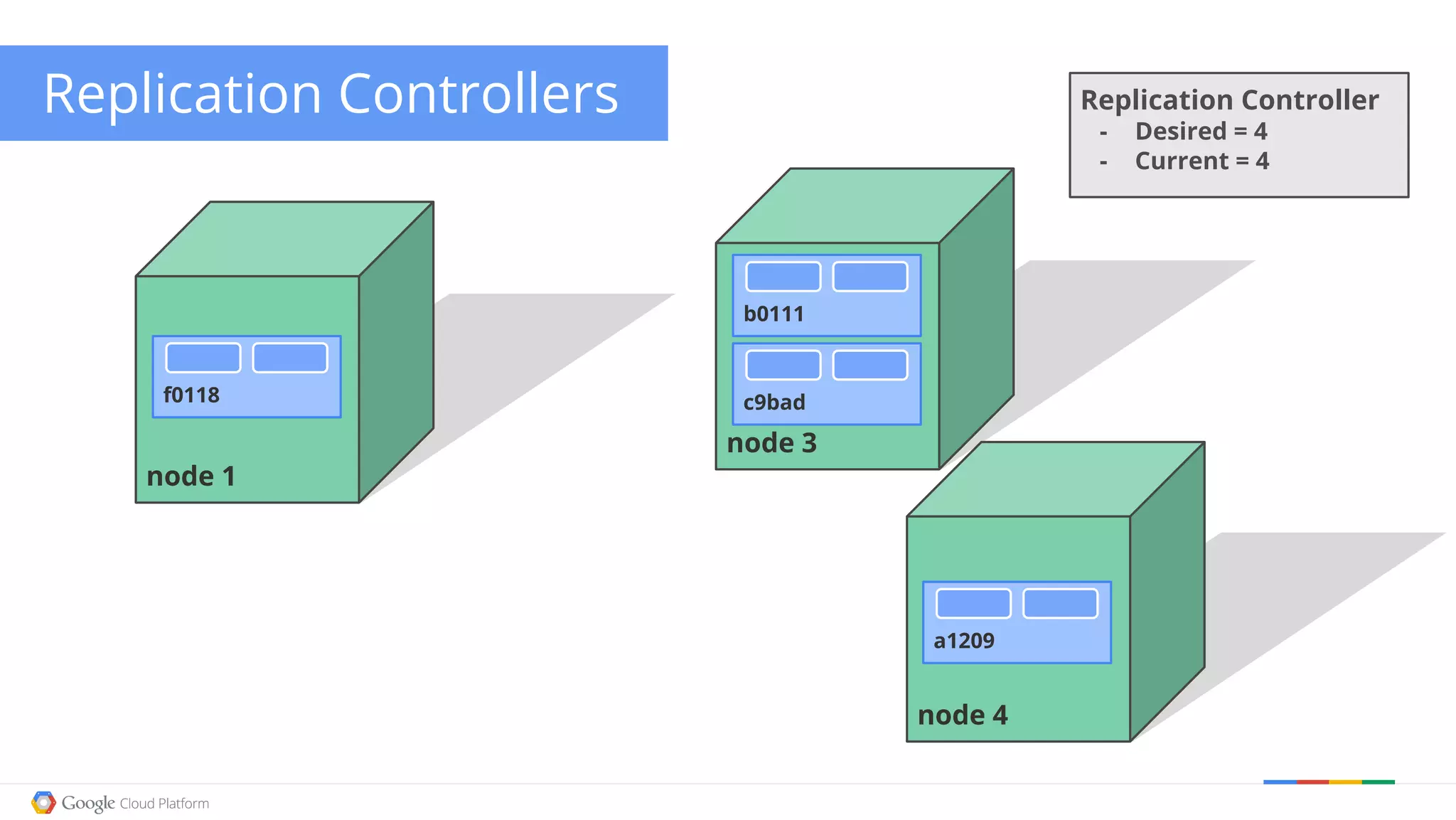

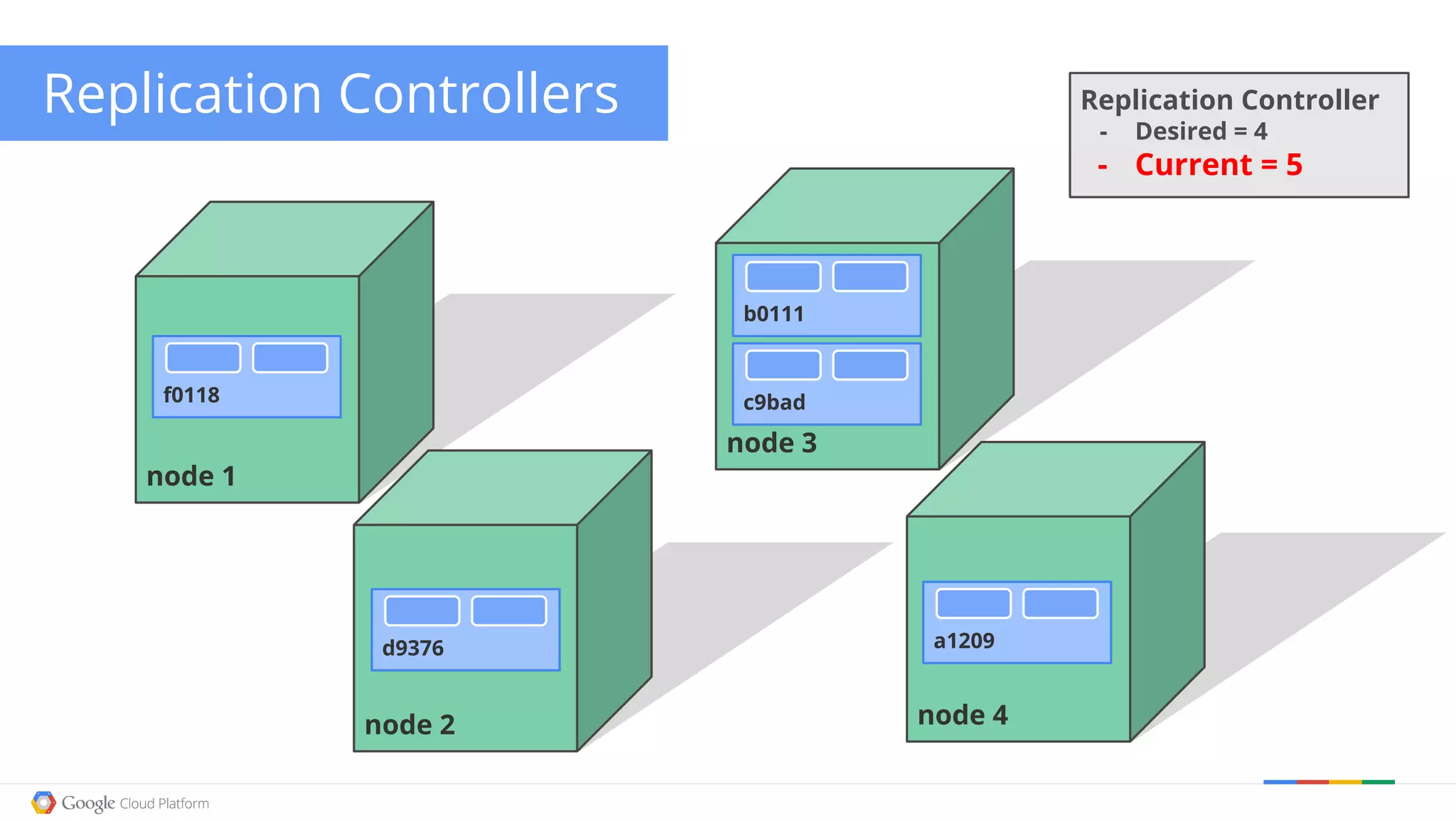

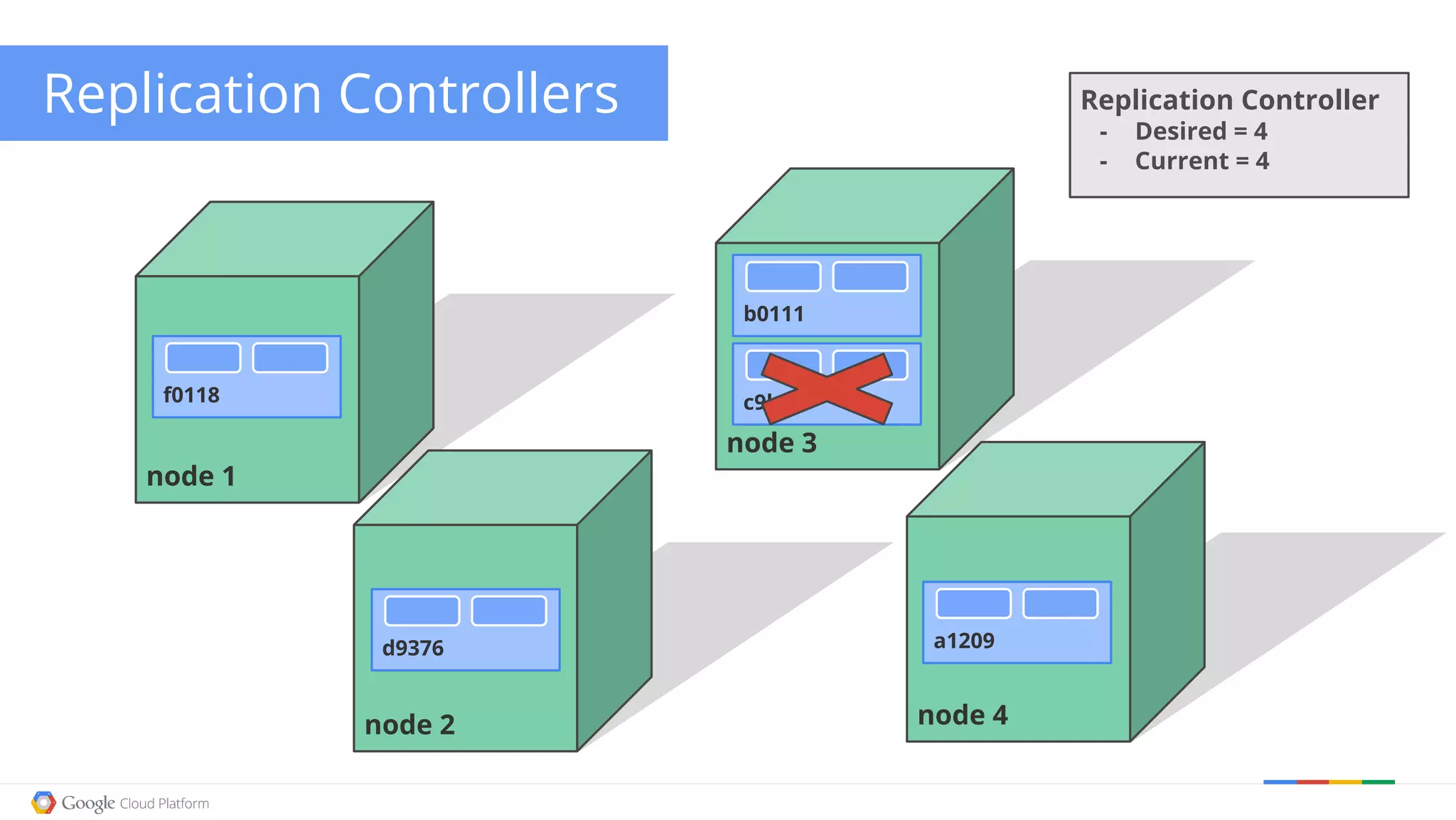

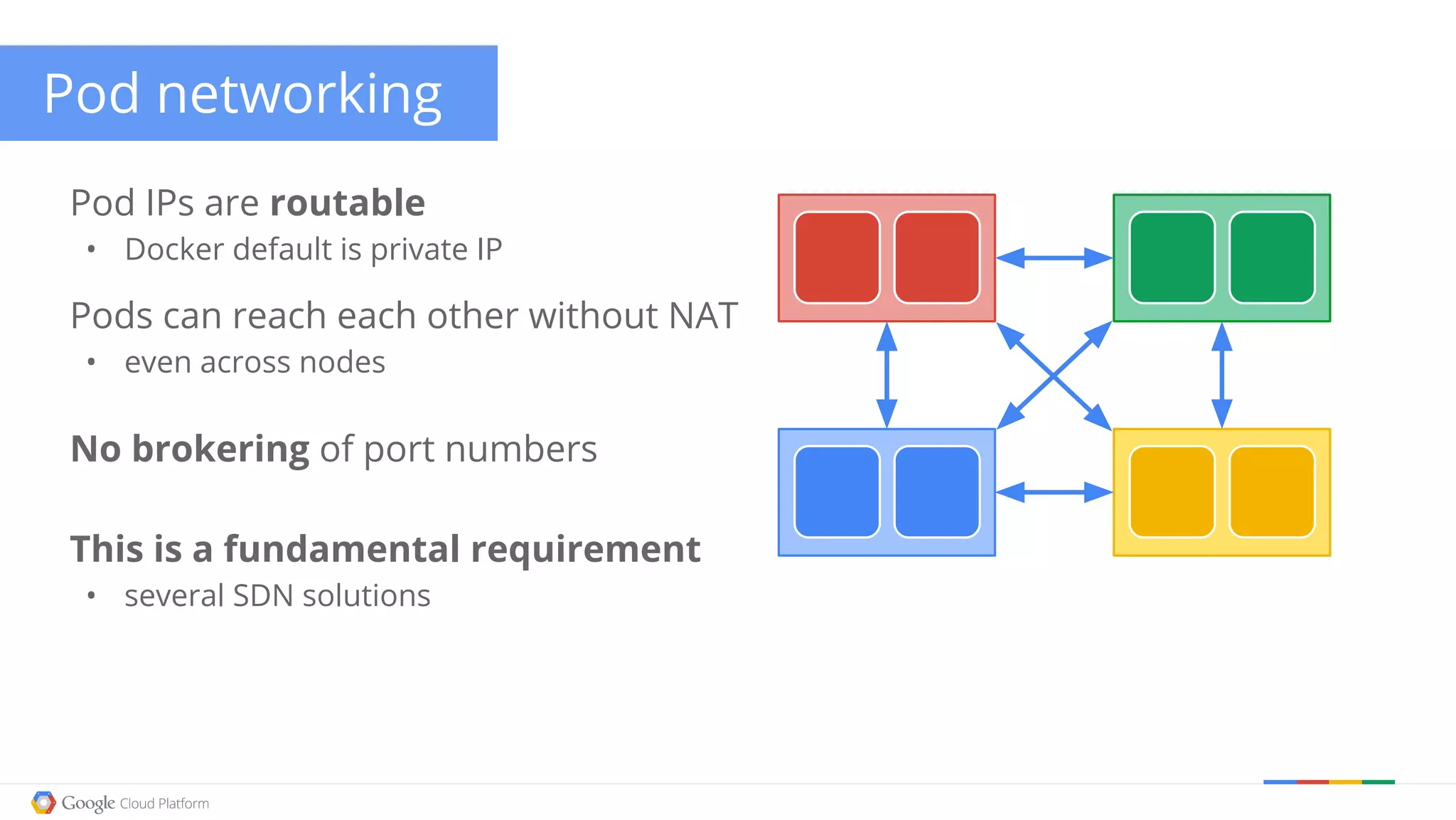

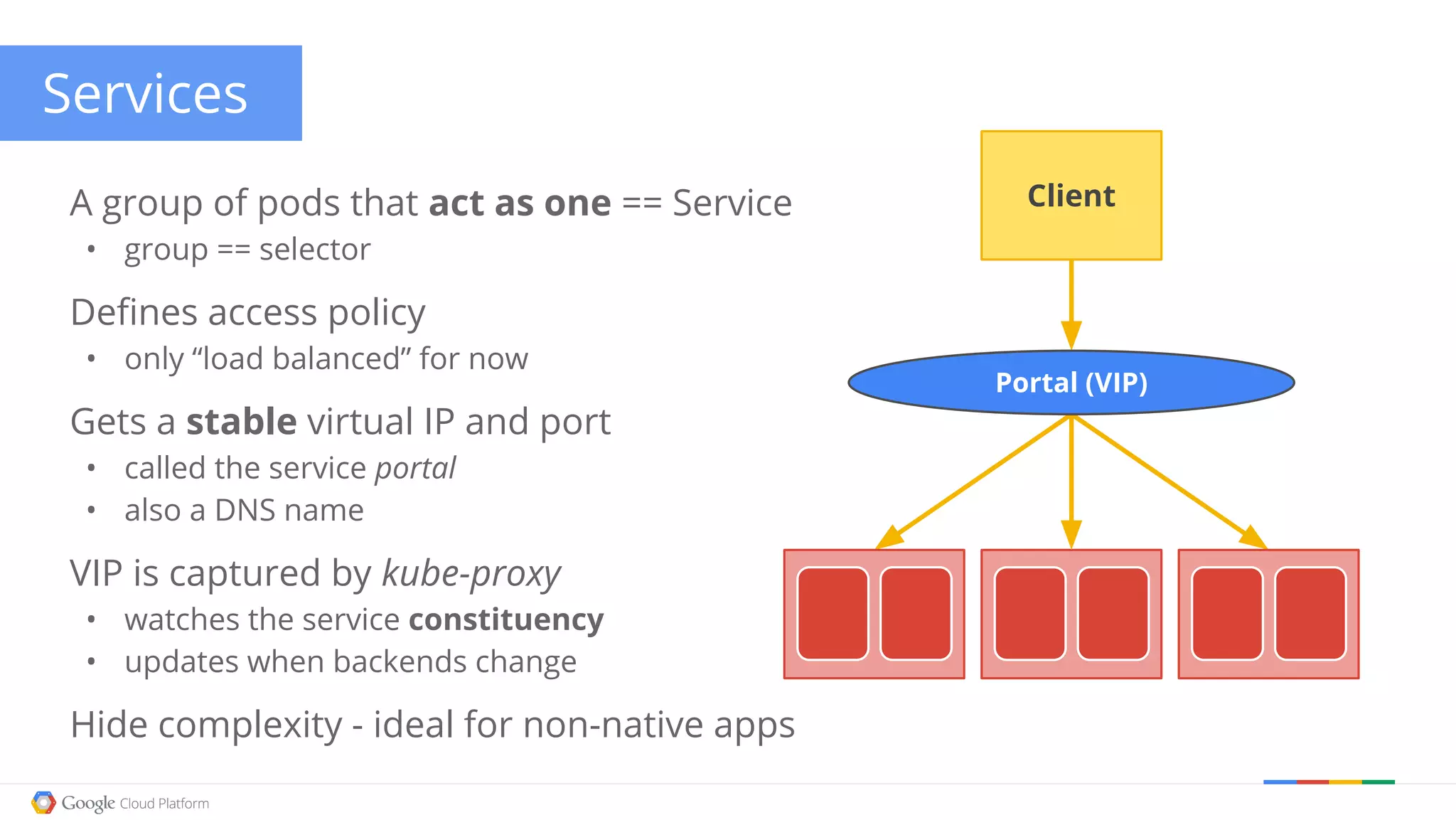

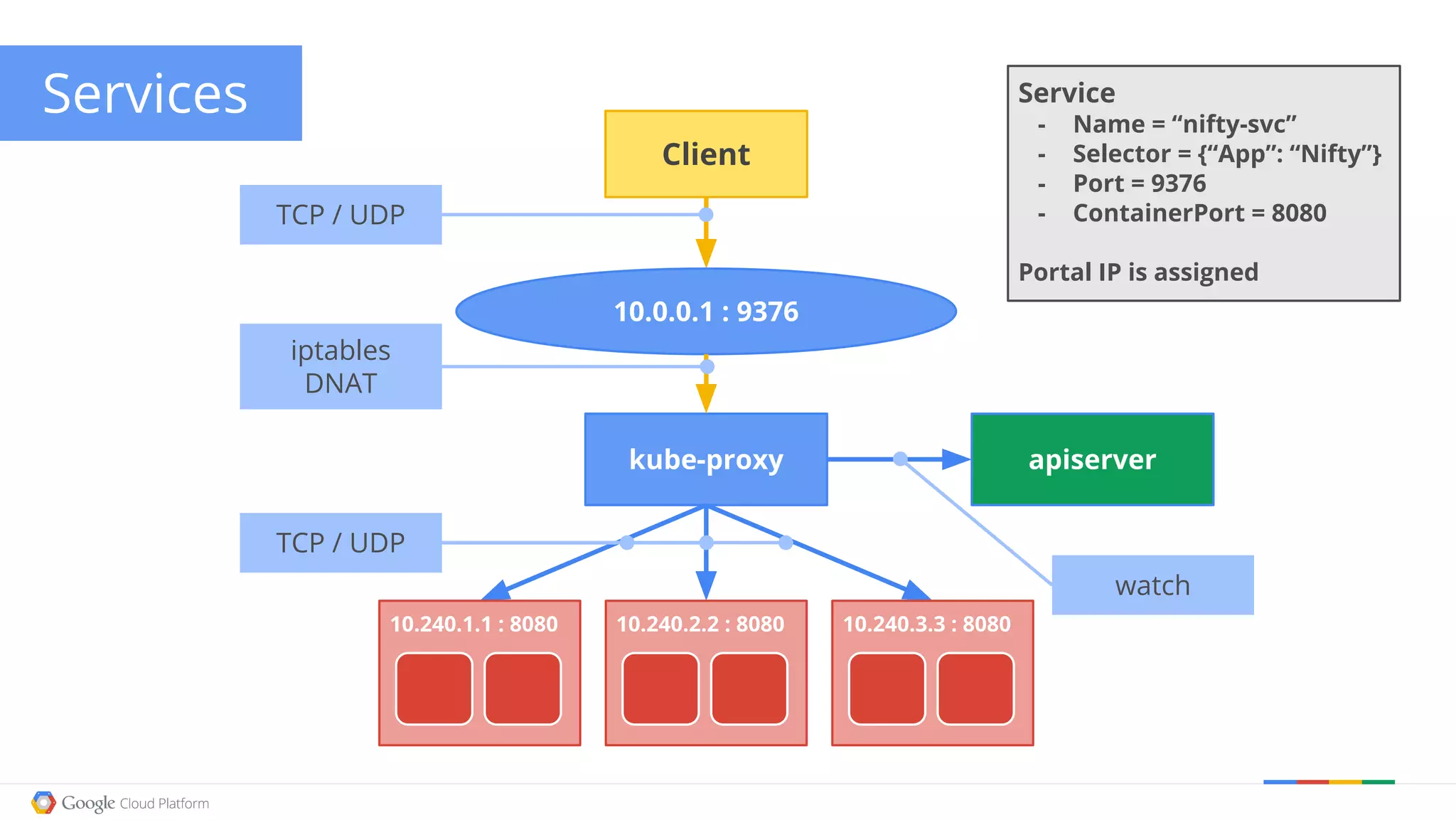

Kubernetes is an open-source container orchestration system, originally developed at Google, that manages applications across cloud and bare-metal environments. It operates on a declarative model: users specify a desired state, and Kubernetes works to keep the running system in that state. Its key concepts include containers, pods, and replication controllers, which together maintain and manage workloads. The architecture supports modularity, scaling, and monitoring, and it handles communication between application components.
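The declarative model can be illustrated with a small reconciliation loop. This is only a toy sketch in Python, not the Kubernetes API: the class `ToyReplicationController`, its `reconcile` method, and the `pod-N` names are all hypothetical, chosen to show how a controller might compare a desired replica count with what is actually running and then act on the difference.

```python
from dataclasses import dataclass, field
from itertools import count


@dataclass
class ToyReplicationController:
    """Toy stand-in for a replication controller (hypothetical, not the real API)."""

    desired_replicas: int                      # the declared, desired state
    pods: list = field(default_factory=list)   # the observed state: running pod names
    _ids: count = field(default_factory=count, repr=False)  # source of unique pod ids

    def reconcile(self):
        """Bring the observed state in line with the desired state.

        Returns the list of actions taken, as (verb, pod_name) tuples.
        """
        actions = []
        # Too few pods: start new ones until the count matches.
        while len(self.pods) < self.desired_replicas:
            name = f"pod-{next(self._ids)}"
            self.pods.append(name)
            actions.append(("start", name))
        # Too many pods: stop the most recently started ones.
        while len(self.pods) > self.desired_replicas:
            name = self.pods.pop()
            actions.append(("stop", name))
        return actions


if __name__ == "__main__":
    rc = ToyReplicationController(desired_replicas=3)
    print(rc.reconcile())        # initial scale-up
    rc.pods.remove("pod-1")      # simulate a pod failure
    print(rc.reconcile())        # the controller replaces the lost pod
    rc.desired_replicas = 1      # the user declares a new desired state
    print(rc.reconcile())        # the controller scales down
```

The point of the sketch is that the user only ever changes `desired_replicas`; the start and stop decisions come from the loop. A real controller would watch cluster state continuously rather than being called by hand.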