Inspiration

Grocery shopping is a surprisingly complex optimization problem that most people solve poorly every single week. You're juggling dietary constraints, nutritional balance, household preferences, budget limits, and the sheer cognitive overhead of deciding what to eat 21 times in seven days — then translating that into a shopping list, finding products at a real store, and getting everything home. We noticed that existing meal planning apps treat these as isolated problems: one app generates recipes, another tracks macros, another builds grocery lists. None of them close the loop from dietary profile → personalized meal plan → optimized grocery list → actual store checkout. We wanted to build a system that treats the entire pipeline as one intelligent, end-to-end workflow — and uses AI not as a gimmick, but as the connective tissue that makes the whole thing adaptive and personal.

What it does

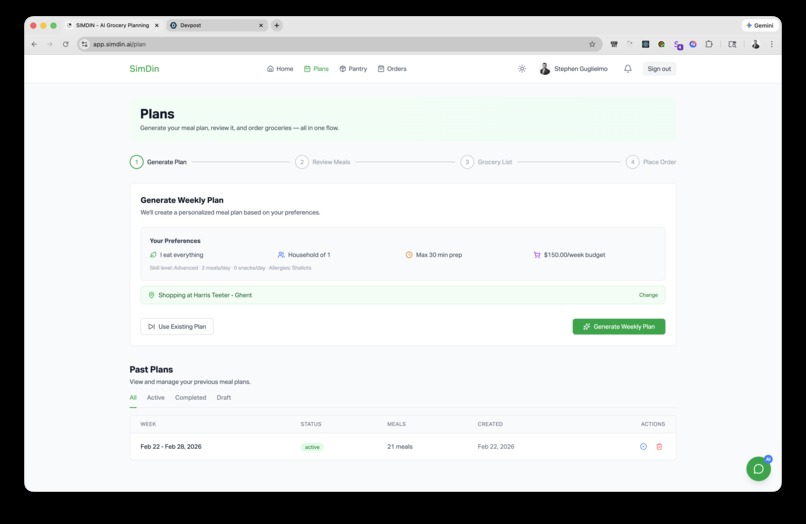

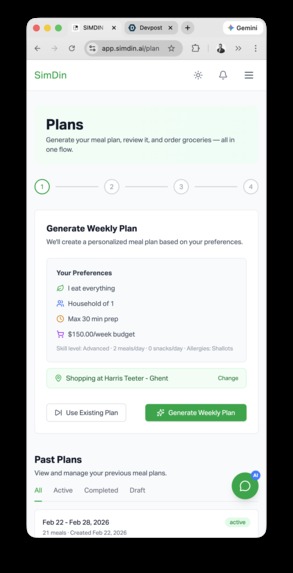

SimDin is an AI-powered grocery planning platform that takes a user from onboarding to doorstep delivery in a single continuous flow. On first launch, a conversational AI agent conducts a structured interview across 15 preference dimensions — dietary type, allergies, intolerances, cuisine affinities, cooking skill level, budget range, household size — and encodes those responses into a 384-dimensional preference embedding stored in PostgreSQL via pgvector. From there, the system generates personalized 7–14 day meal plans using a constrained LLM pipeline (GPT-4 Turbo with structured JSON output), enforcing no-repeat recipes, respecting locked meals, balancing macronutrients, and adapting to cuisine preferences. Users can lock meals they like, regenerate the rest, version their plans, and share them via expiring tokens.

The meal plan automatically feeds into a grocery list generator that maps recipe ingredients to real store products through a hybrid matching engine — rules-based category lookup combined with sentence-transformer embeddings for semantic similarity. Users select a shopping strategy (best value, organic preferred, or budget) and connect their Kroger account via OAuth 2.0 for live product search, pricing, and store selection by ZIP code. The final grocery list flows into a checkout pipeline with real-time price recalculation, tax estimation, and one-click cart submission to the provider's API, with async order status updates pushed back via webhooks and WebSocket notifications.

On top of all this, a collaborative filtering engine (SVD-based matrix factorization) learns from recipe ratings across users, and a content-based scoring layer combines ingredient overlap, cuisine match, and nutrition profile to deliver hybrid recommendations that improve with every interaction. A multi-mode chat assistant handles onboarding, general preference updates, and freeform text import — all backed by implicit preference learning that updates user embeddings incrementally as behavior patterns emerge.

How we built it

SimDin is a polyglot monorepo with three core services, each chosen for what it does best:

API Gateway — Rust (Axum 0.7). The backend runs on Tokio's async runtime with compile-time SQL verification via SQLx. We used hexagonal architecture to keep domain logic pure — business rules for meal plans, orders, pricing, and notifications live in domain/, completely decoupled from database adapters in infrastructure/. The API exposes 22 route modules covering auth (Argon2 hashing, JWT with refresh tokens, email verification), meal planning (generation, locking, versioning, SSE progress streaming), grocery management (list generation, PDF/CSV export), order processing (Kroger cart submission, webhook handlers, price breakdowns), and a real-time notification layer over Redis pub/sub → WebSocket. Rate limiting, Prometheus metrics, request ID tracking, and CORS are all handled at the middleware level. A separate worker binary consumes RabbitMQ queues for long-running tasks like meal generation, order reconciliation, and transactional emails.

AI Service — Python 3.12 (FastAPI). This is where the intelligence lives. Nine specialized ML models orchestrate everything from meal generation to ingredient substitution. The MealGenerator wraps OpenAI's API with structured output constraints and falls back to a curated recipe database (80+ built-in recipes) when the API is unavailable. The ProductMatcher uses a dual pipeline: deterministic rules for category/unit/brand matching, plus 384-dim sentence-transformer embeddings for fuzzy semantic matching against a ~5,000-product catalog. The CollaborativeFilter runs SVD factorization over user-recipe rating matrices with 20 latent factors, retraining hourly. The RecommendationService blends collaborative and content-based signals with weighted scoring bounded to [0, 1]. The ConversationAgent operates in three modes (onboarding, general, import) with mode-specific system prompts and tool detection for meal plan requests, recipe searches, and budget swaps. Supporting services handle USDA nutrition analysis, ingredient substitution via co-occurrence graphs, and incremental preference embedding updates.

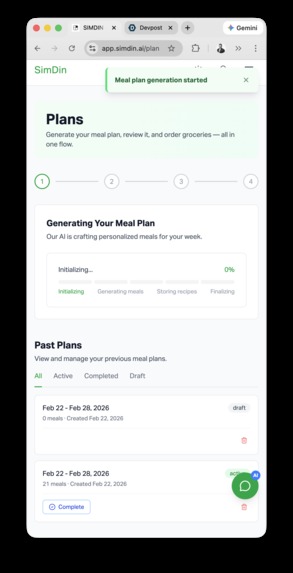

Frontend — React 18 (TypeScript, Vite, TailwindCSS). A single-page application with 15+ lazy-loaded pages, Zustand for auth/theme state, React Query (TanStack) for server state with optimistic mutations, and a custom ApiClient wrapper for REST calls. The meal planner renders an interactive weekly calendar with drag-to-swap meals, lock/unlock toggles, and inline nutrition breakdowns. The grocery flow includes real-time price updates, store selection, and a checkout approval screen. SSE streaming provides live progress during meal plan generation. Dark mode persists via local storage. The app connects to a WebSocket endpoint for push notifications on async operations.

Infrastructure. PostgreSQL 16 with pgvector handles relational data and vector similarity search. Redis 7 manages session caching, rate limit counters, and pub/sub for real-time notifications. RabbitMQ 3 decouples long-running AI workloads from the request/response cycle. The full stack runs in Docker Compose with multi-stage builds (Rust backend compiles to ~50MB, AI service to ~300MB). Prometheus + Grafana + Loki provide metrics, dashboards, and centralized log aggregation. 52 tracked database migrations manage the schema lifecycle.

Challenges we ran into

Bridging the LLM-to-structured-data gap. Getting GPT-4 to consistently output valid, constraint-satisfying meal plans in JSON was harder than expected. The model would hallucinate recipes, ignore dietary restrictions, or produce malformed JSON. We solved this with structured output mode, explicit constraint enumeration in the system prompt, post-generation validation, and a fallback recipe database that guarantees a valid plan even when the LLM fails.

Product matching at scale. Mapping freeform ingredient strings ("2 cups diced Roma tomatoes") to real store products ("Kroger Organic Diced Tomatoes, 14.5oz can") requires understanding units, brands, product forms, and substitutability. Pure string matching fails spectacularly. We built a hybrid pipeline — deterministic rules handle the 80% case (unit conversion, category lookup), and sentence-transformer embeddings catch the long tail — with Redis caching to keep latency under 100ms per ingredient.

Real-time UX for async AI workloads. Generating a full meal plan can take 10–30 seconds depending on LLM response time. We couldn't block the HTTP request that long. The solution was a three-layer async architecture: the API enqueues a job to RabbitMQ, the worker processes it and publishes progress events to Redis pub/sub, and the frontend streams updates via SSE. This required careful state machine design for plan status transitions (draft → generating → active → completed) and idempotent retry logic.

Collaborative filtering cold start. SVD factorization needs density in the user-recipe rating matrix to produce meaningful latent factors. With new users, there's nothing to factorize. We implemented a minimum threshold (≥5 system ratings, ≥5 per-user ratings) below which the system gracefully falls back to pure content-based recommendations, then gradually blends in collaborative signals as the matrix fills.

Keeping three services in sync. A Rust API, Python AI service, and React frontend all evolving simultaneously meant schema drift was a constant threat. SQLx's compile-time query checking caught backend-database mismatches early. Pydantic v2 models enforced contract compliance on the AI service boundary. TypeScript's strict mode (no any, no unsafe casts) prevented frontend type rot. But the integration seams between services still required careful versioning of internal API contracts.

Accomplishments that we're proud of

We shipped a 20,000+ line production-grade system across three languages with clean separation of concerns — the Rust backend has zero unwrap() calls in production paths, the Python AI service has full Pydantic validation on every boundary, and the TypeScript frontend runs in strict mode with no any types.

The hybrid recommendation engine genuinely improves over time. Collaborative filtering captures cross-user taste patterns that content-based scoring alone misses, and the weighted blending means recommendations are useful from day one (content-based) and get smarter with scale (collaborative).

The end-to-end grocery pipeline actually works — from AI-generated meal plan to real Kroger products in a real cart with real pricing. OAuth token management, automatic refresh, store-specific product availability, tax calculation, and webhook-driven order status updates all function as a complete checkout flow.

The preference embedding system using pgvector gives us semantic understanding of user taste. Instead of brittle keyword matching ("likes Italian"), we encode preferences into a 384-dim vector space where similarity search surfaces recipes that feel right even when they don't share obvious surface features.

The observability stack (Prometheus + Grafana + Loki) means we can trace a request from the React frontend through the Rust API into the Python AI service and back, with structured logs, latency histograms, and throughput gauges at every layer. For a hackathon project, this level of production readiness is unusual.

What we learned

Rust's type system is worth the learning curve for API backends. Compile-time guarantees eliminated entire categories of runtime bugs — null pointer errors, type mismatches, unhandled error cases. The AppError enum with exhaustive matching means every failure mode has a deliberate HTTP response. The tradeoff is slower iteration speed, but for a backend that handles money and personal data, correctness matters more than velocity.

LLMs are powerful but unreliable primitives. You can't just prompt GPT-4 and ship the output. Every LLM call needs validation, fallback logic, and graceful degradation. The meal generator works because of the infrastructure around it — structured output parsing, constraint checking, fallback recipes — not because of the model alone.

Embeddings are the right abstraction for preferences. Traditional preference systems use discrete categories (vegetarian, gluten-free) which are rigid and combinatorially explosive. Encoding preferences into continuous vector space lets us do approximate nearest-neighbor search, capture nuanced taste profiles, and update incrementally without schema changes.

Async architecture is essential but adds operational complexity. RabbitMQ + Redis pub/sub + SSE streaming gives users a responsive experience during long AI operations, but it also means debugging requires correlating events across three systems. Structured logging with request ID propagation was the single most valuable investment for developer productivity.

Monorepos with polyglot services need disciplined contracts. The integration boundary between Rust ↔ Python ↔ TypeScript is where bugs hide. Compile-time validation (SQLx, Pydantic, TypeScript strict mode) catches most issues, but you still need integration tests that exercise the full request path across service boundaries.

What's next for SimDin

Multi-provider expansion. The adapter pattern in the backend is designed for exactly this — we want to add Amazon Fresh, Whole Foods, Instacart, and Walmart Grocery as provider options, letting users price-compare across stores for the same grocery list.

Mobile app launch. The React Native (Expo) scaffold is already in the repo. The API is fully mobile-ready with JWT auth and the same REST endpoints — we need to build out the native UI for meal plan browsing, push notifications for order updates, and barcode scanning for pantry tracking.

Waste reduction engine. We're tracking pantry items but not yet using them to influence meal planning. The next iteration will factor in what's already in your fridge, prioritize ingredients approaching expiration, and suggest meals that minimize food waste — turning the grocery planner into a household food optimization system.

Advanced nutrition coaching. The USDA nutrition data is there, but we want to add personalized goal tracking (macro targets, micronutrient gaps), meal plan scoring against user-defined health objectives, and proactive suggestions when a plan is nutritionally unbalanced.

Federated learning for recommendations. As the user base grows, we want to improve collaborative filtering without centralizing sensitive dietary data. Federated learning would let us train recommendation models across users while keeping preference data on-device.

Recipe community and social features. User-submitted recipes, meal plan sharing with friends/family, household accounts with shared grocery lists, and a social feed of highly-rated meals from users with similar taste profiles.

Log in or sign up for Devpost to join the conversation.