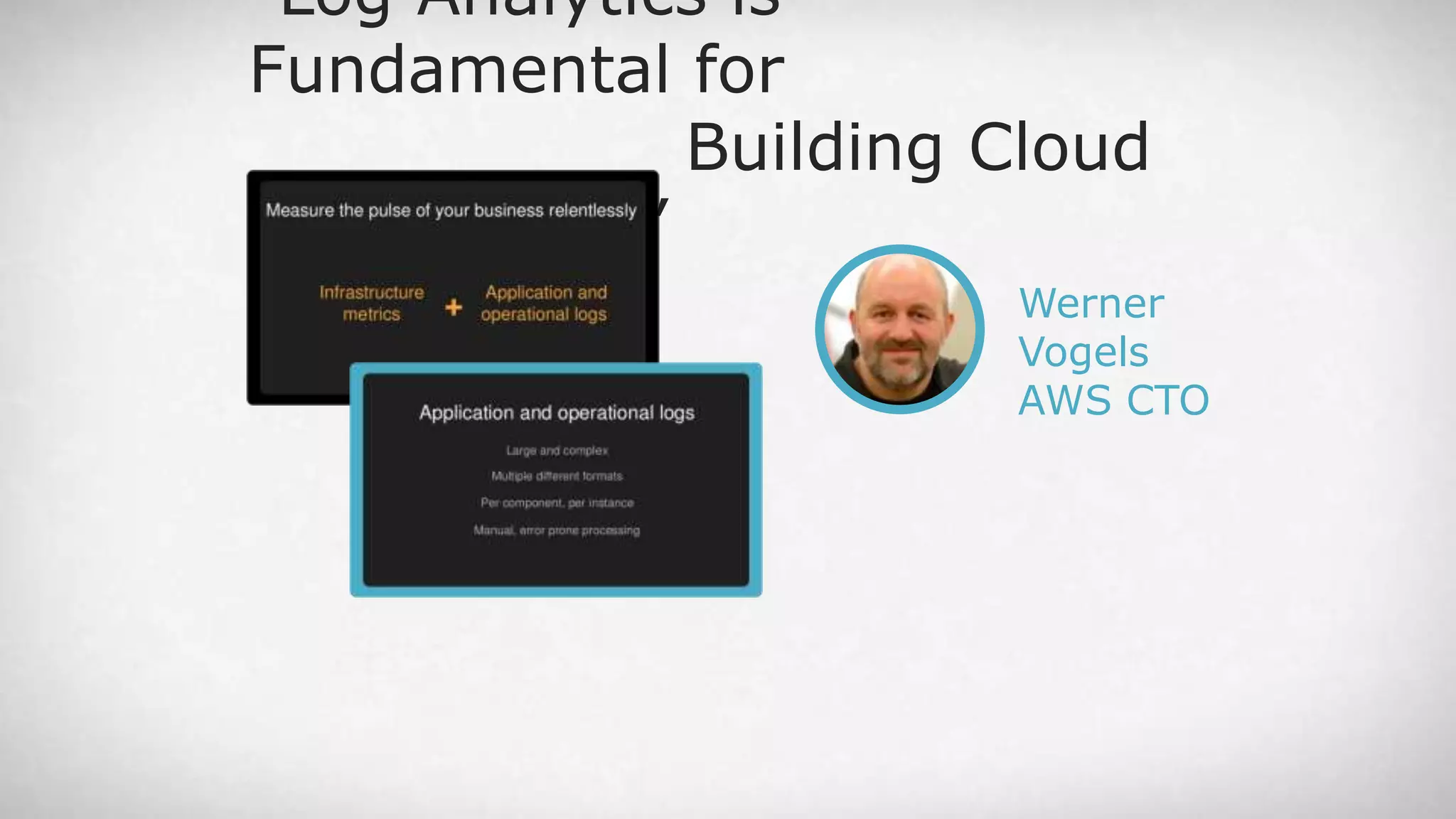

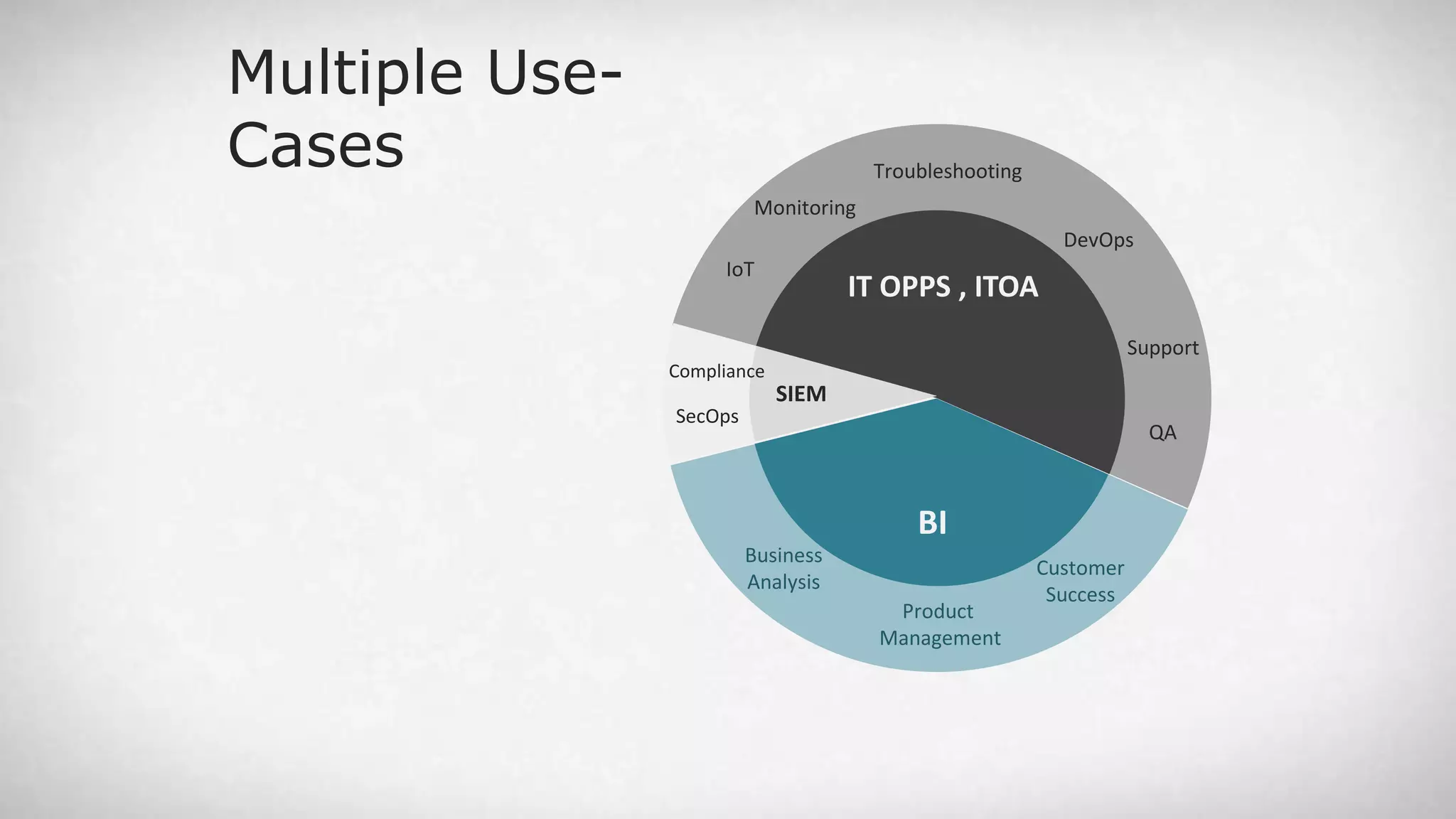

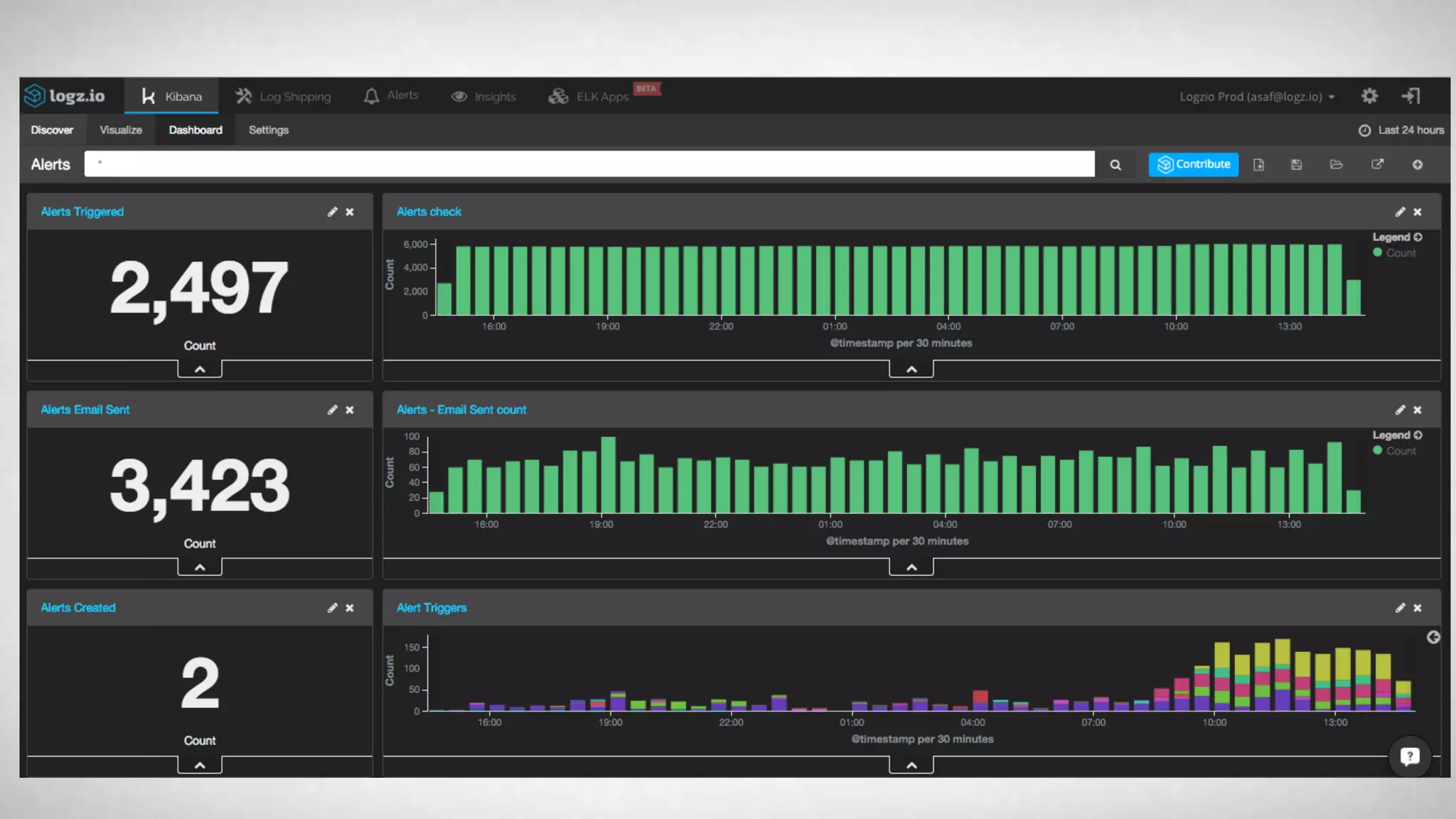

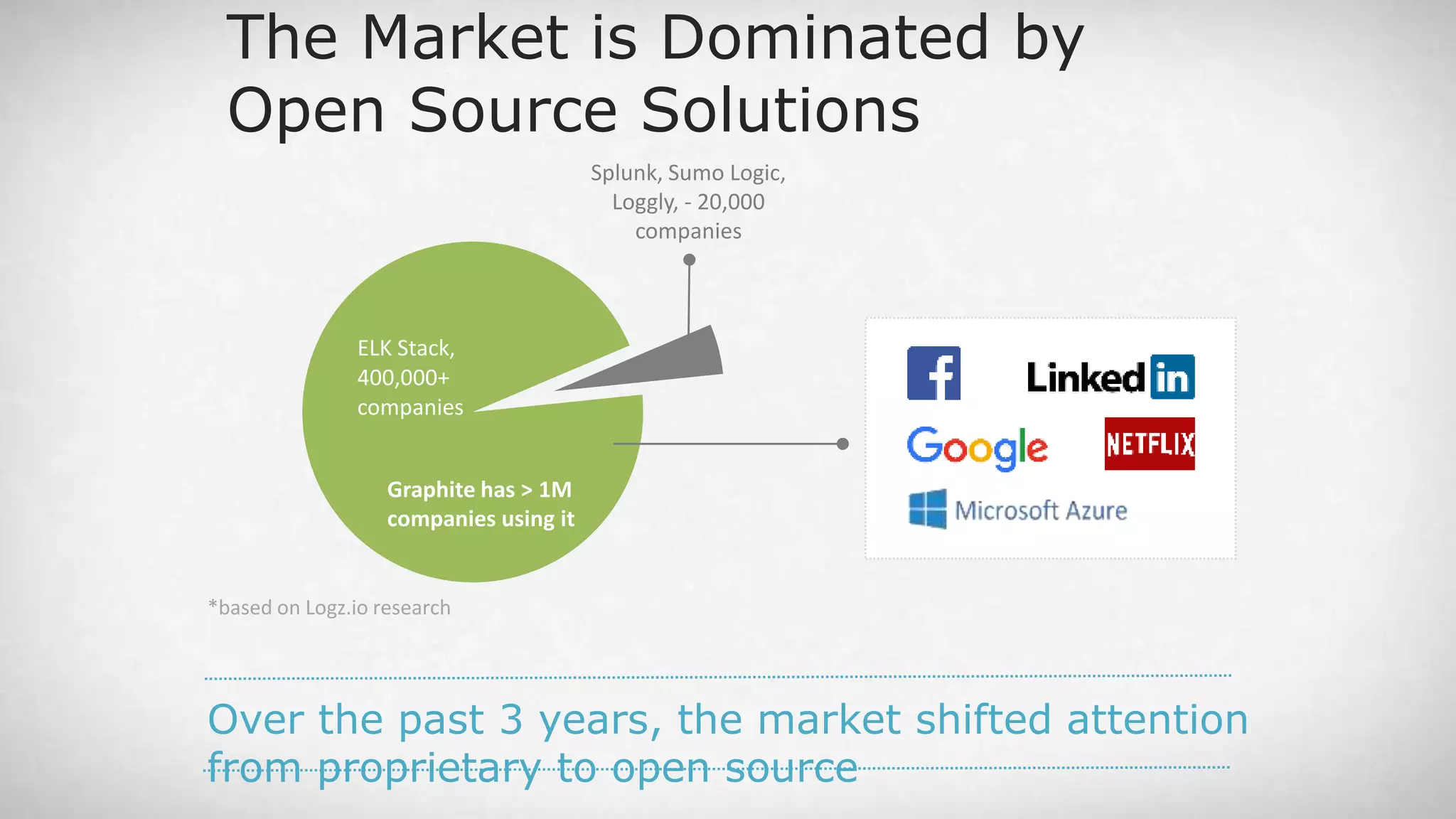

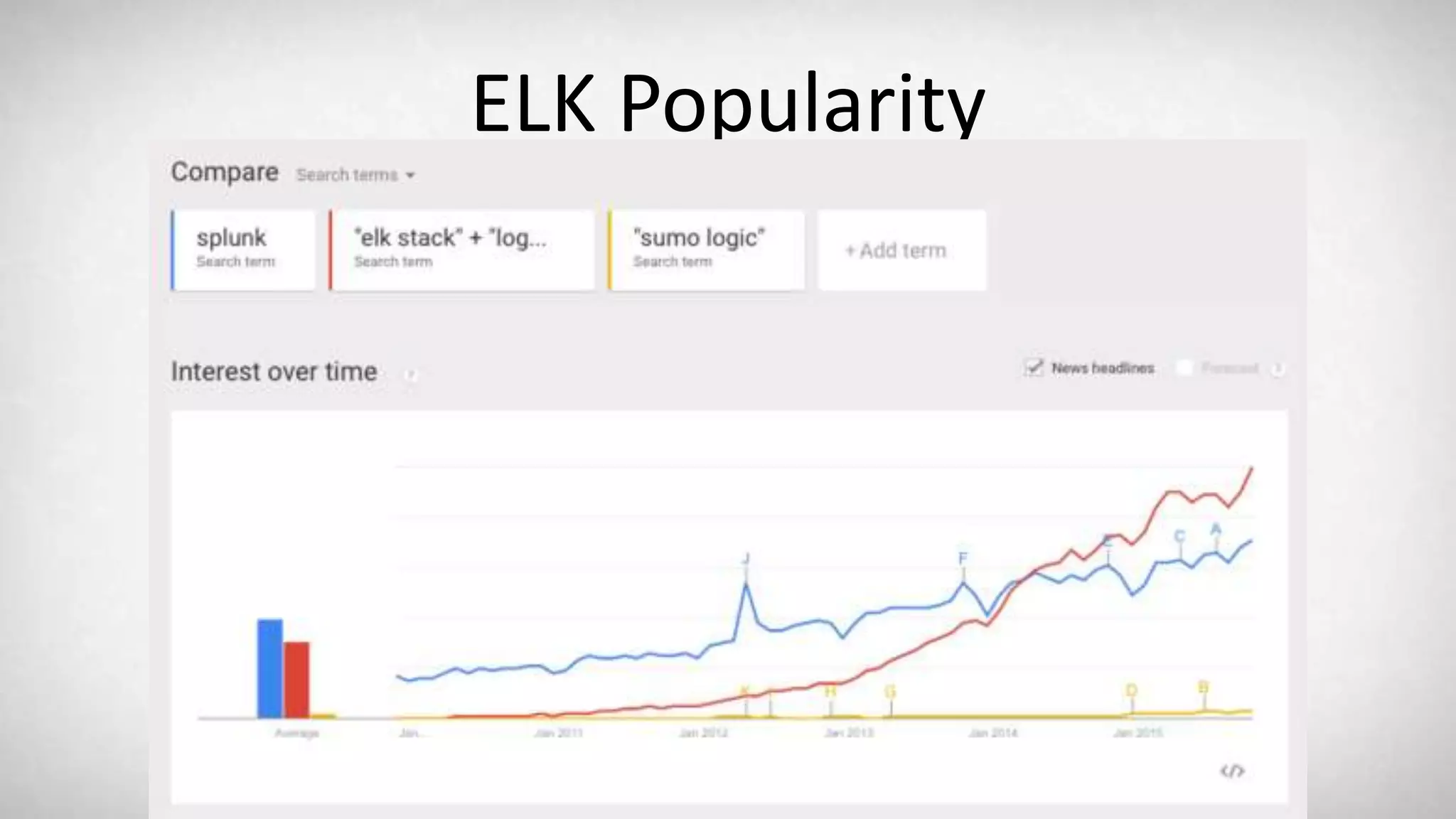

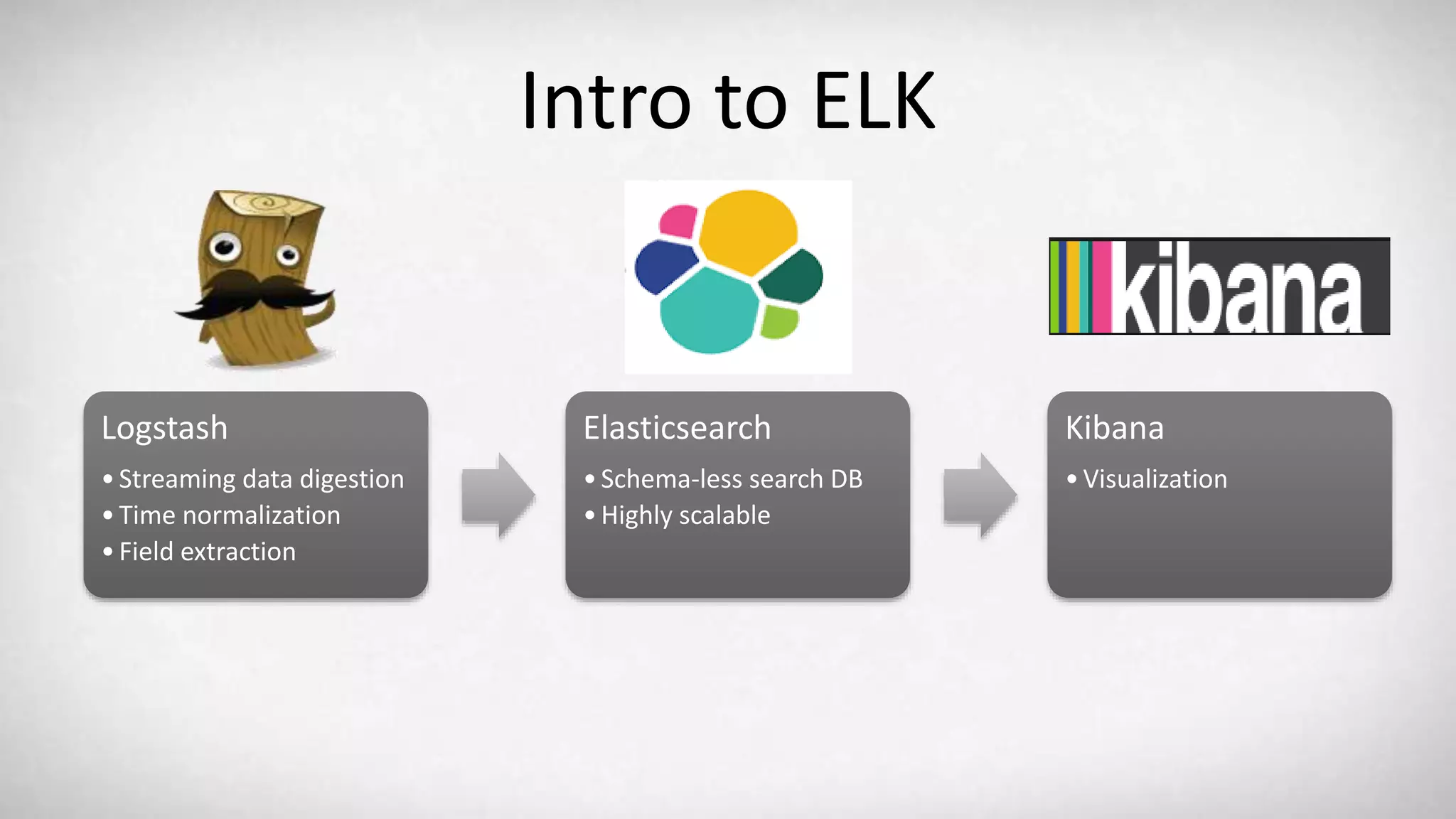

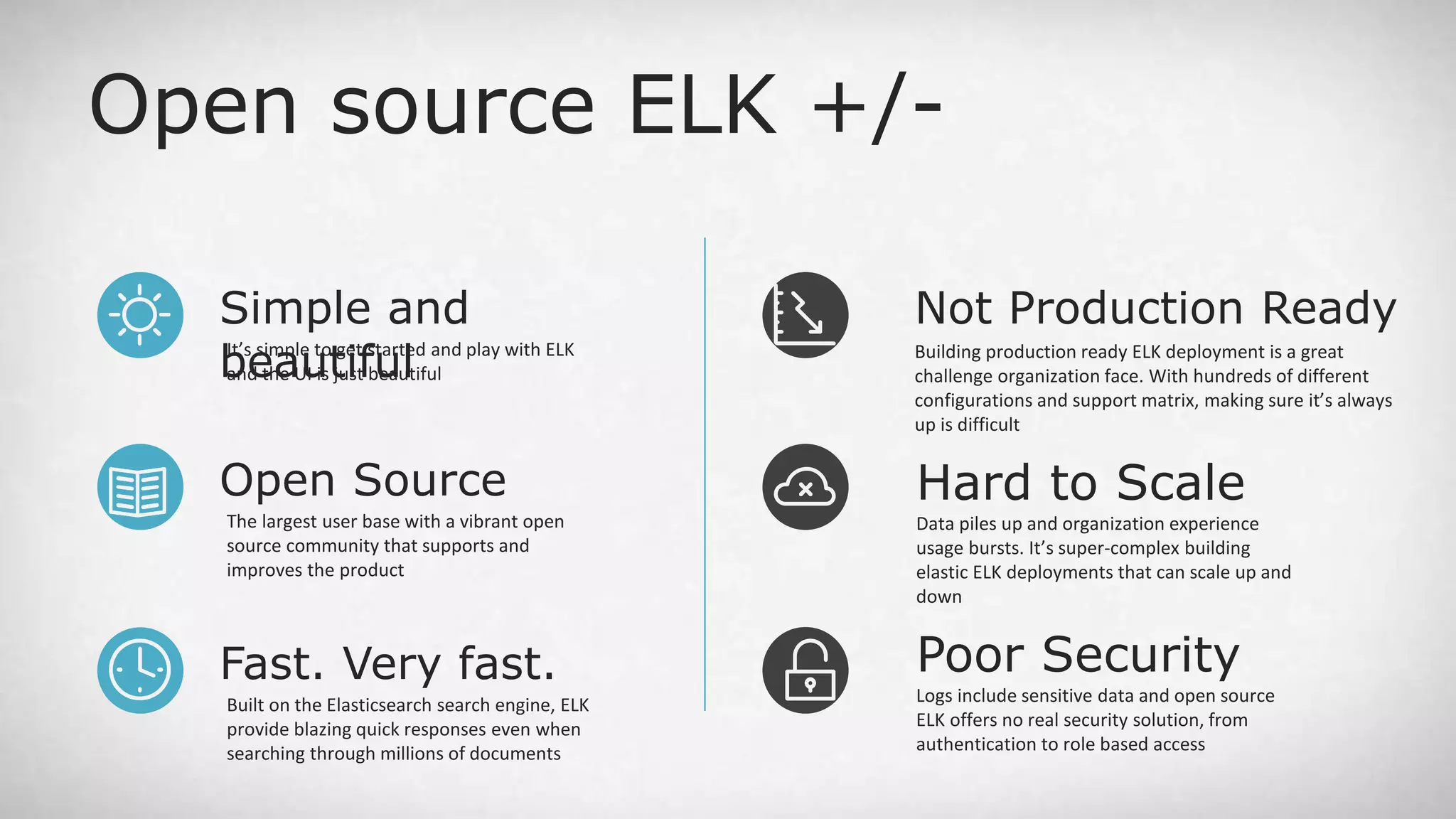

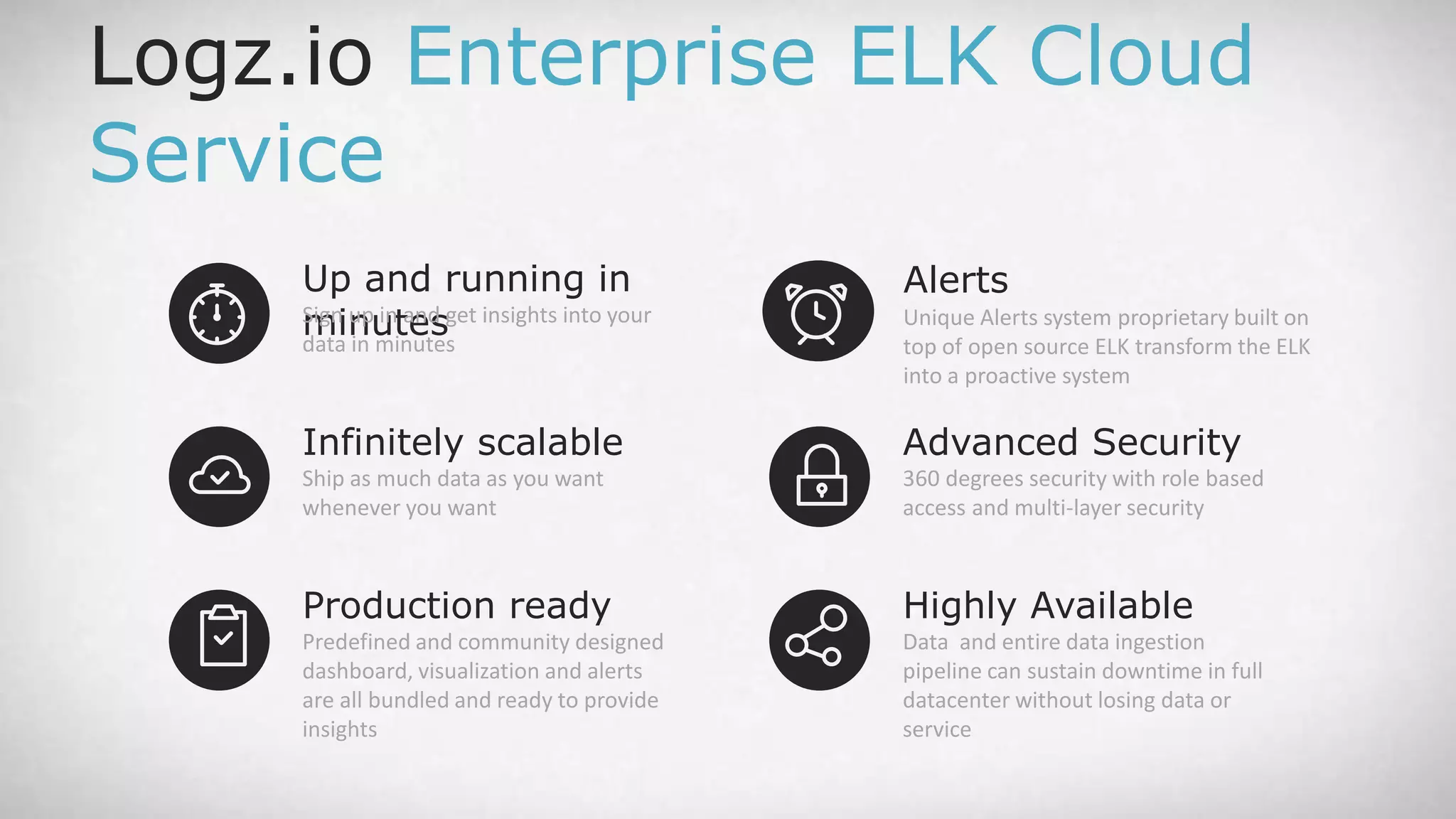

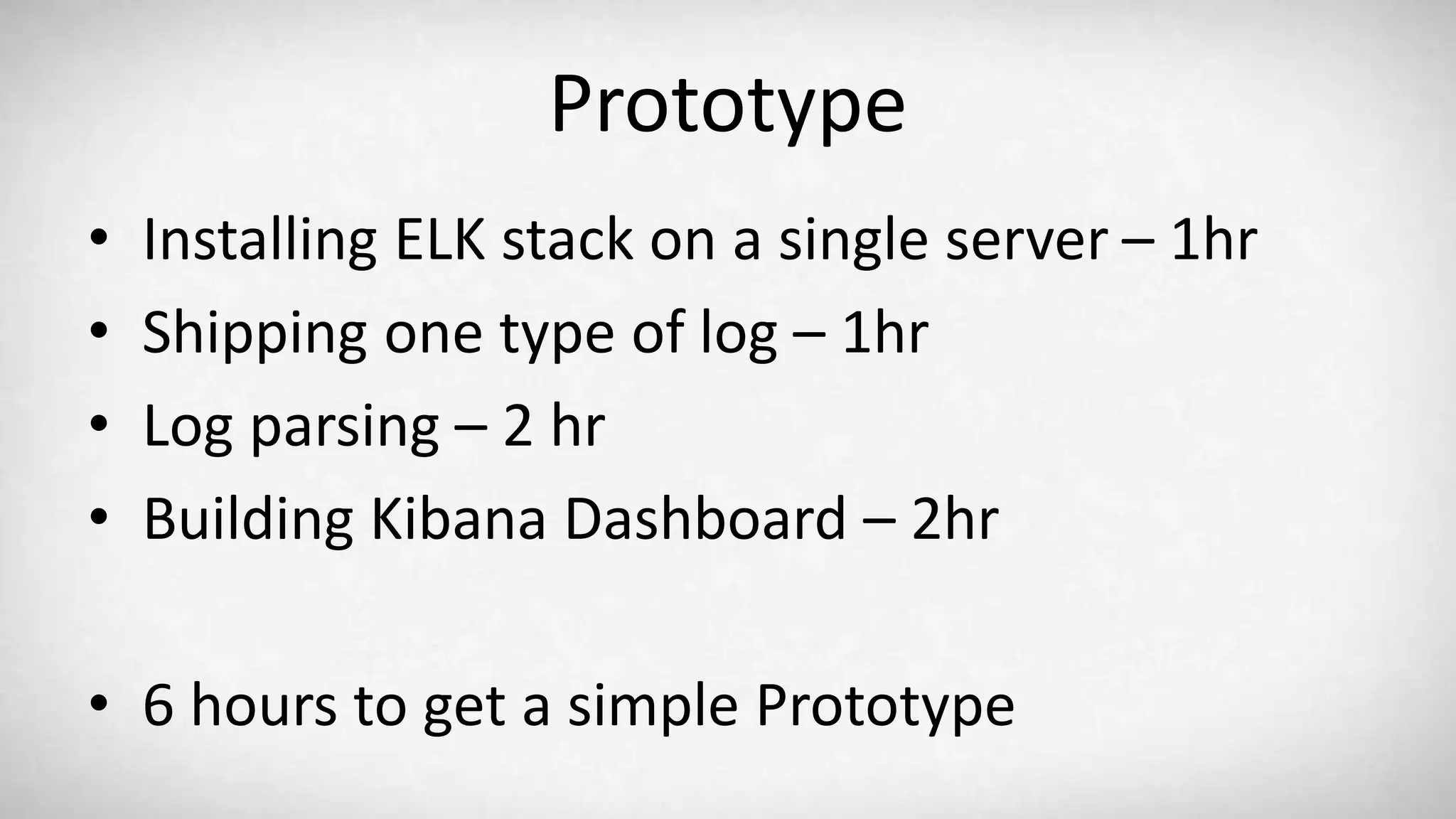

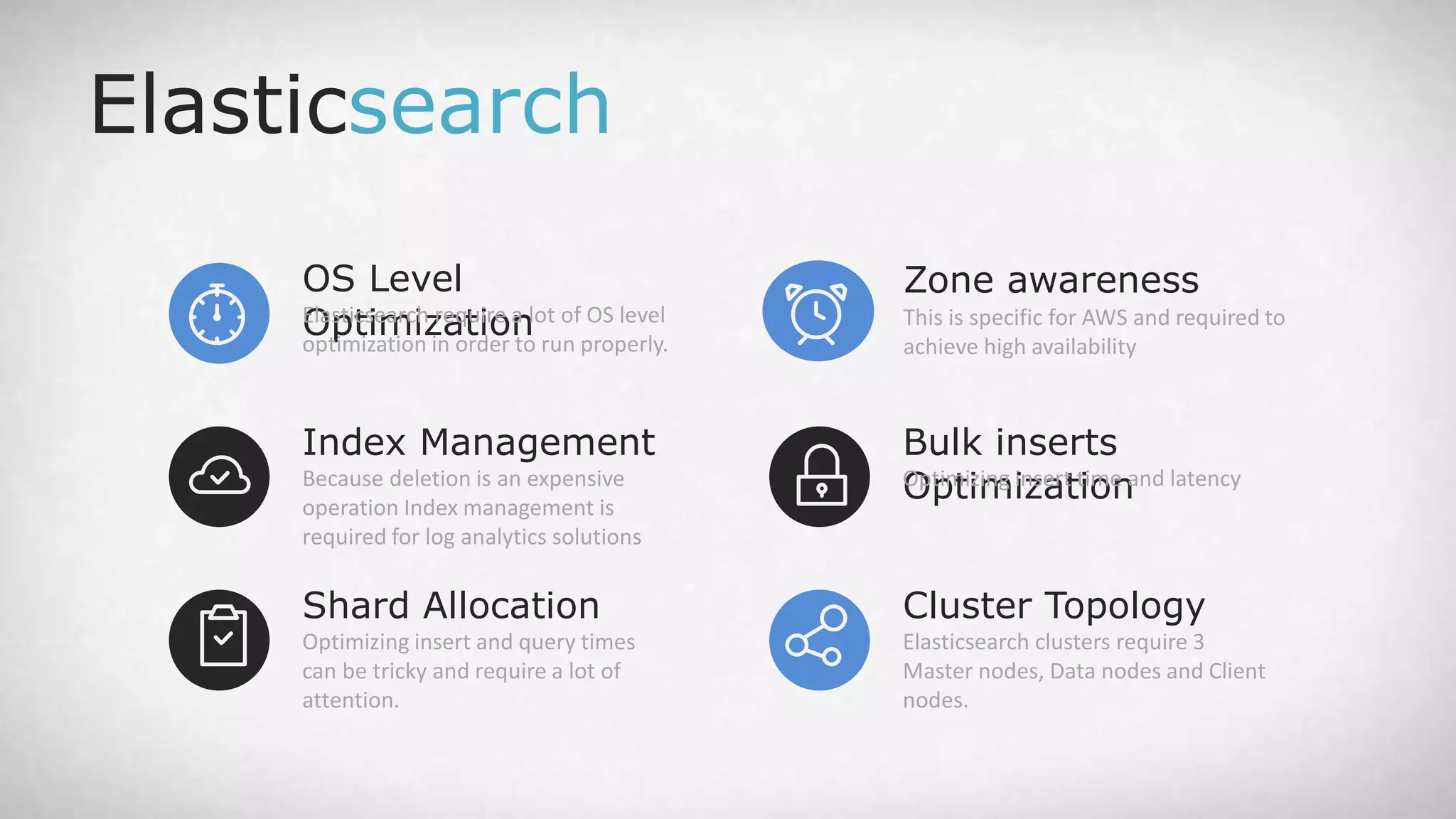

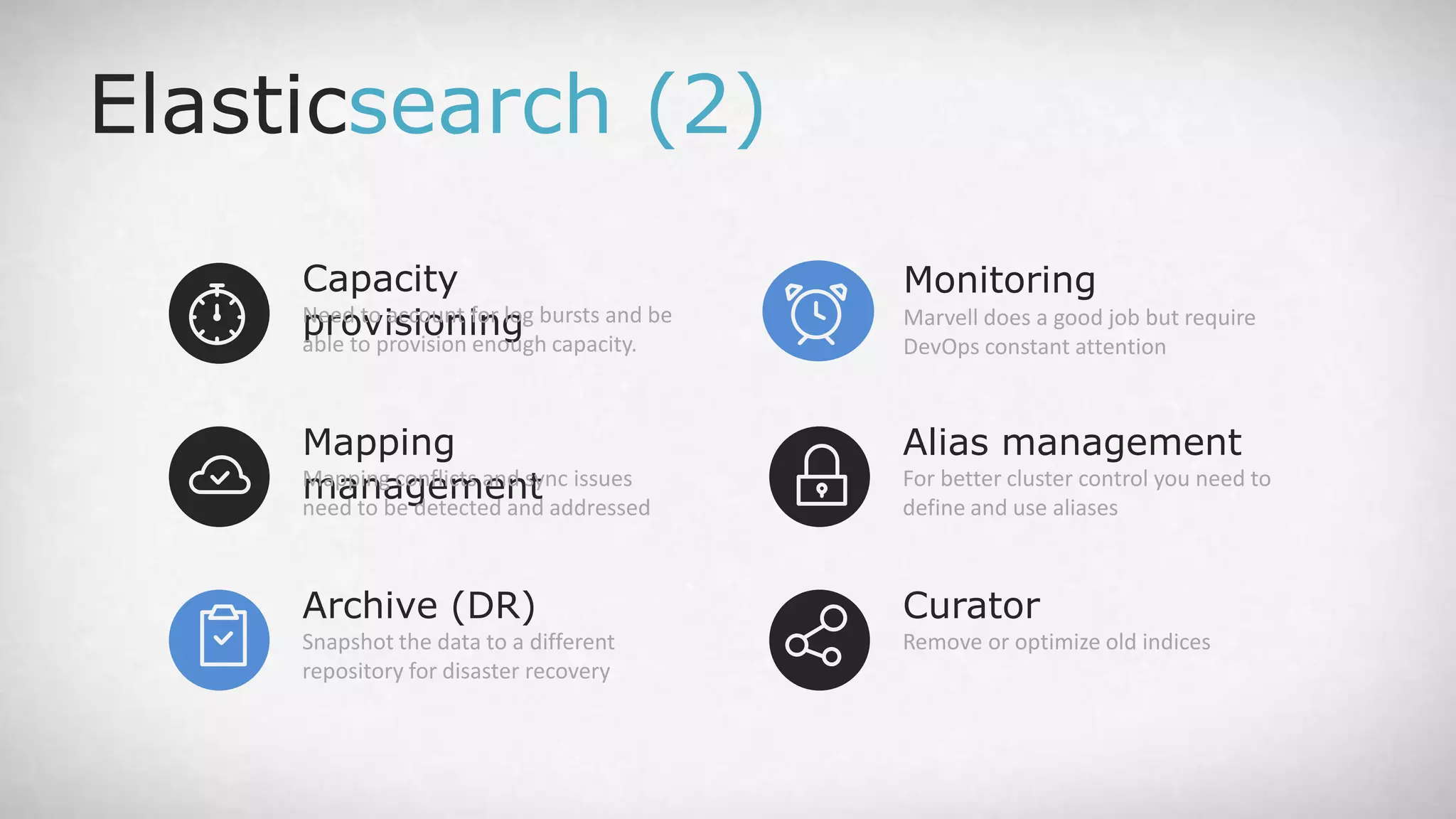

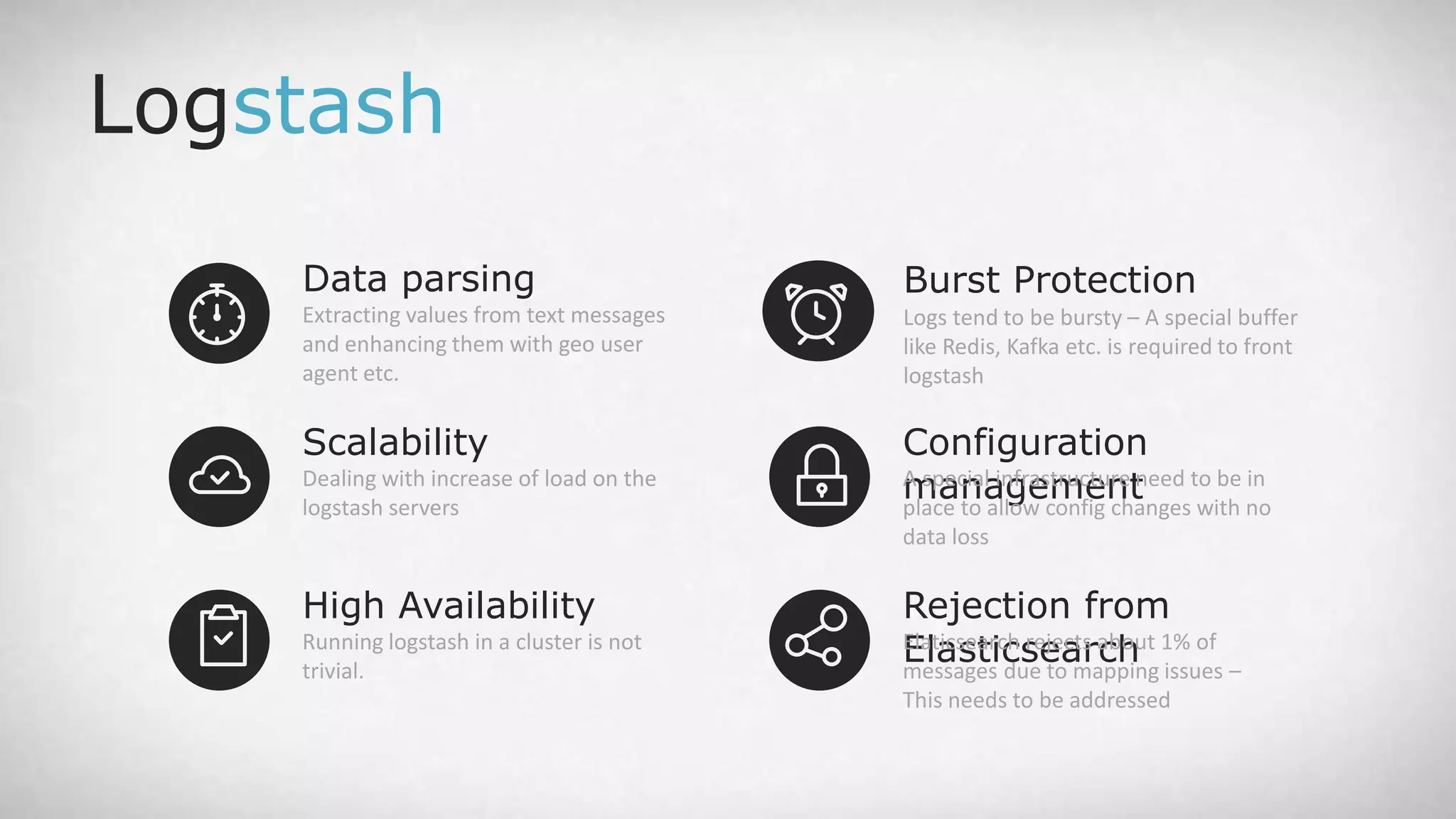

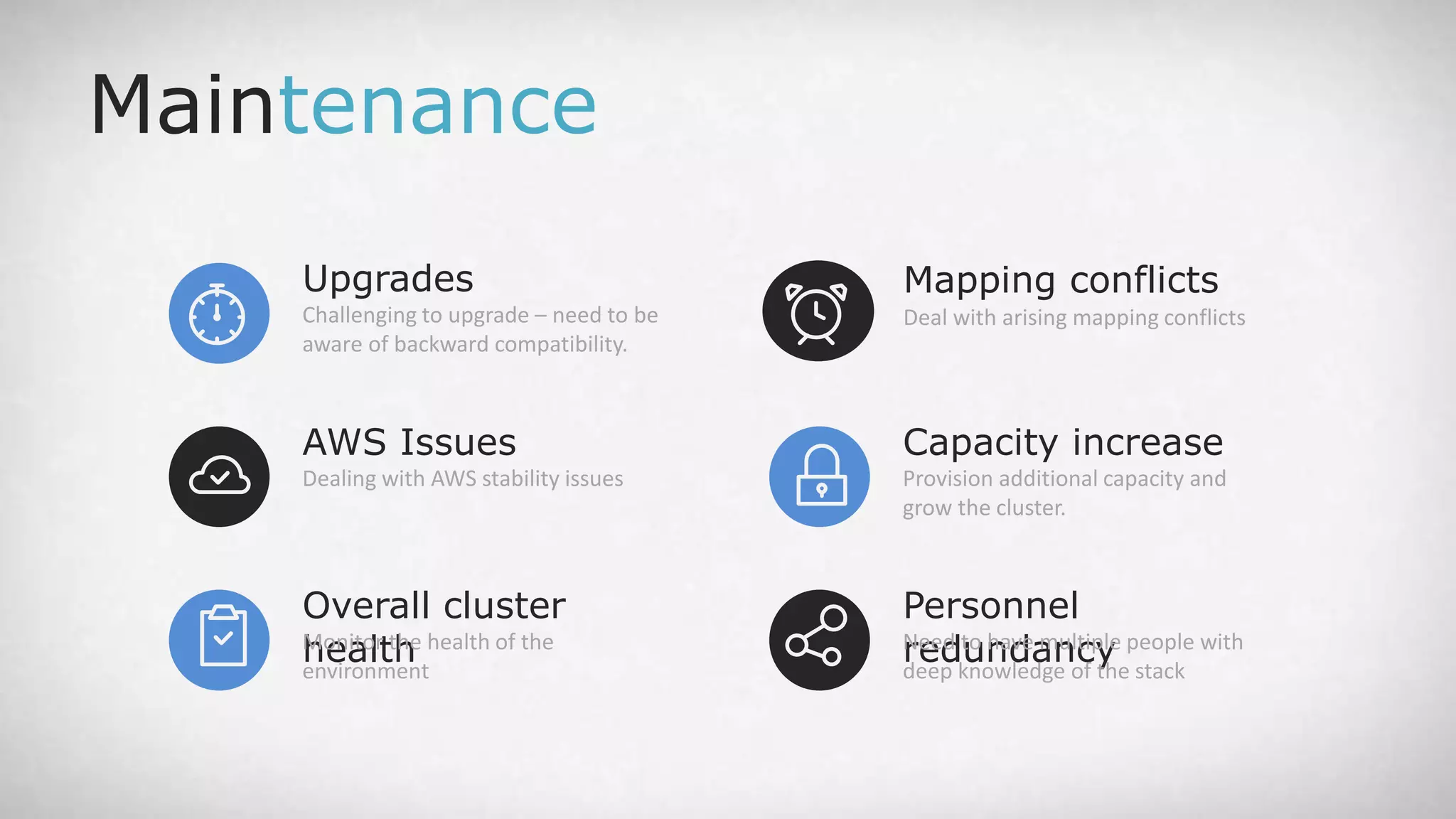

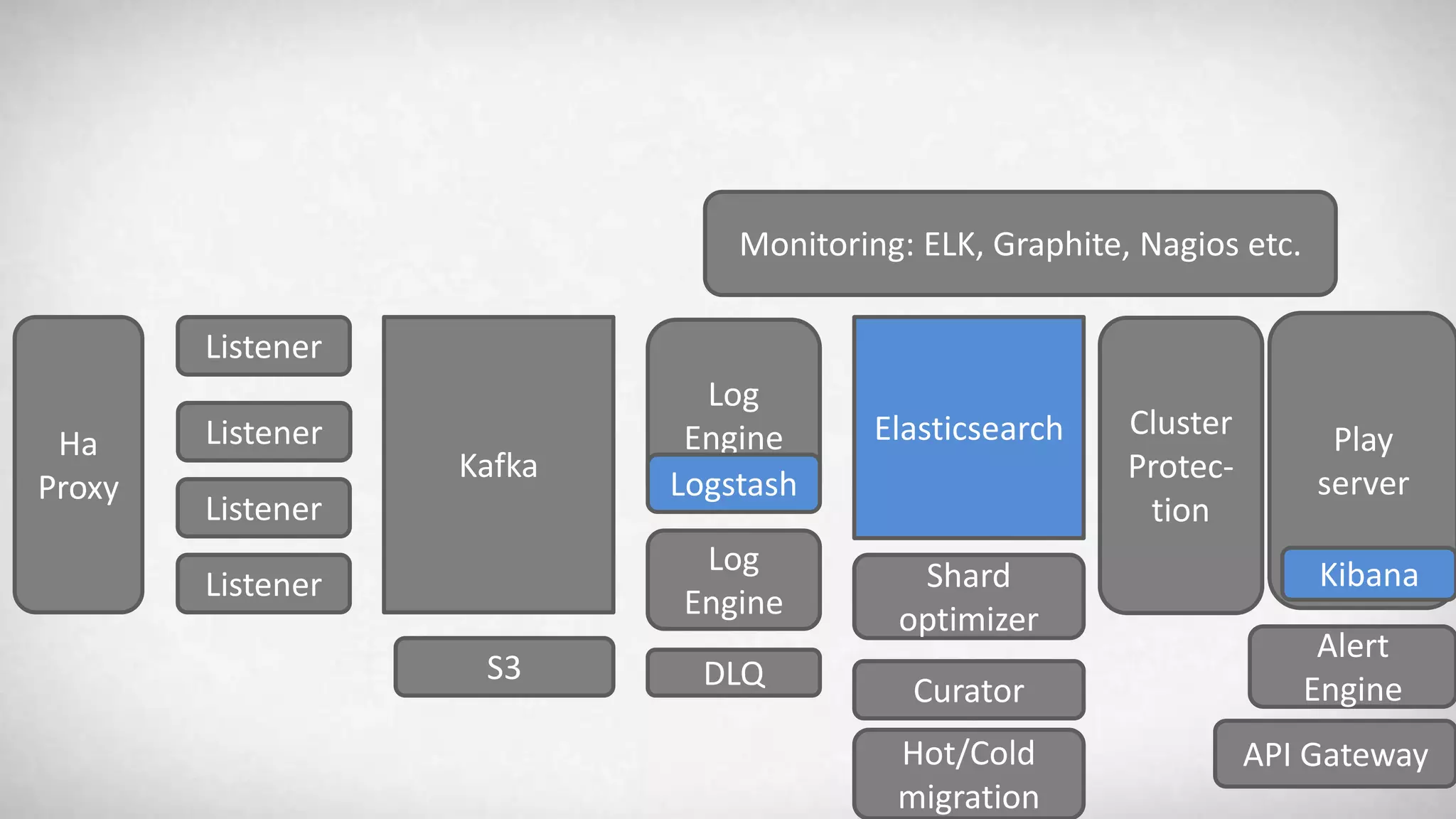

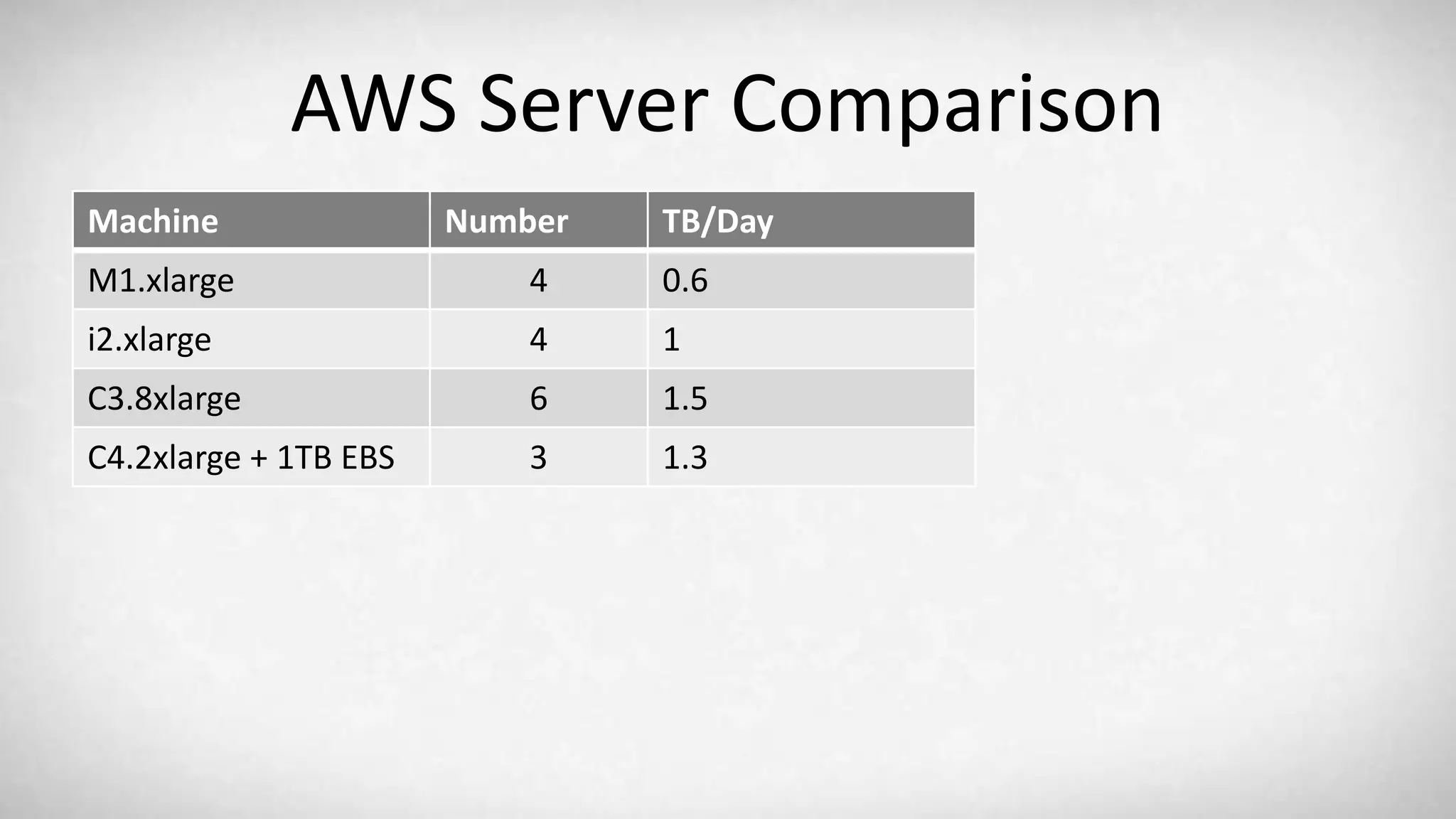

The document outlines a presentation by Asaf Yigal, co-founder of Logz.io, on log analytics and the ELK stack (Elasticsearch, Logstash, and Kibana). It covers why log analytics matters across a range of applications, the benefits and challenges of running open-source solutions such as ELK, and the features of Logz.io's enterprise ELK cloud service. It also examines the complexity of building a production-ready ELK deployment and describes the architecture of Logz.io's service.